## Bar Chart: Solve Rate Comparison

### Overview

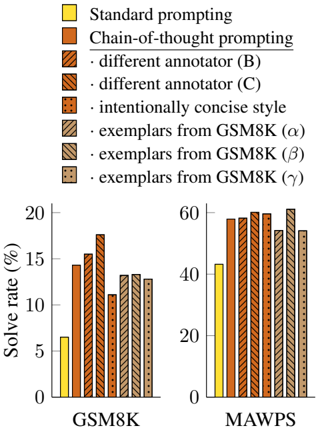

The image is a bar chart comparing the solve rates (%) of standard prompting and several variants of chain-of-thought prompting on two math word problem datasets, GSM8K and MAWPS. A legend identifies each prompting method by color and fill pattern. Each dataset has its own y-axis scale: 0% to 20% for GSM8K and 0% to 60% for MAWPS.

### Components/Axes

* **Y-axis:** "Solve rate (%)", ranging from 0 to 20 in increments of 5 for GSM8K, and 0 to 60 in increments of 20 for MAWPS.

* **X-axis:** Categorical, with two categories: "GSM8K" and "MAWPS".

* **Legend (Top-Left):**
    * Yellow: "Standard prompting"
    * Brown: "Chain-of-thought prompting"
    * Brown with diagonal lines from top-left to bottom-right: "different annotator (B)"
    * Brown with diagonal lines from top-right to bottom-left: "different annotator (C)"
    * Brown with dots: "intentionally concise style"
    * Brown with horizontal lines: "exemplars from GSM8K (α)"
    * Brown with vertical lines: "exemplars from GSM8K (β)"
    * Brown with small squares: "exemplars from GSM8K (γ)"

### Detailed Analysis

**GSM8K Dataset:**

* **Standard prompting (Yellow):** Solve rate is approximately 6%.

* **Chain-of-thought prompting (Brown):** Solve rate is approximately 14%.

* **Different annotator (B) (Brown with diagonal lines from top-left to bottom-right):** Solve rate is approximately 16%.

* **Different annotator (C) (Brown with diagonal lines from top-right to bottom-left):** Solve rate is approximately 18%.

* **Intentionally concise style (Brown with dots):** Solve rate is approximately 11%.

* **Exemplars from GSM8K (α) (Brown with horizontal lines):** Solve rate is approximately 13%.

* **Exemplars from GSM8K (β) (Brown with vertical lines):** Solve rate is approximately 13%.

* **Exemplars from GSM8K (γ) (Brown with small squares):** Solve rate is approximately 13%.

**MAWPS Dataset:**

* **Standard prompting (Yellow):** Solve rate is approximately 43%.

* **Chain-of-thought prompting (Brown):** Solve rate is approximately 58%.

* **Different annotator (B) (Brown with diagonal lines from top-left to bottom-right):** Solve rate is approximately 59%.

* **Different annotator (C) (Brown with diagonal lines from top-right to bottom-left):** Solve rate is approximately 61%.

* **Intentionally concise style (Brown with dots):** Solve rate is approximately 51%.

* **Exemplars from GSM8K (α) (Brown with horizontal lines):** Solve rate is approximately 58%.

* **Exemplars from GSM8K (β) (Brown with vertical lines):** Solve rate is approximately 62%.

* **Exemplars from GSM8K (γ) (Brown with small squares):** Solve rate is approximately 56%.
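To make the gaps concrete, the approximate readings above can be tabulated and compared against the standard-prompting baseline. The following Python sketch assumes the values listed in this section, which are estimates read off the chart and not published figures. Method keys such as `annotator_B` are hypothetical labels introduced here for illustration.

```python
"""Gain of each prompting method over standard prompting, in percentage points.

The solve rates are approximate values read off the bar chart; the short
method keys are illustrative labels, not names used by the original source.
"""

SOLVE_RATES: dict[str, dict[str, int]] = {
    "GSM8K": {
        "standard": 6, "chain_of_thought": 14, "annotator_B": 16, "annotator_C": 18,
        "concise": 11, "exemplars_alpha": 13, "exemplars_beta": 13, "exemplars_gamma": 13,
    },
    "MAWPS": {
        "standard": 43, "chain_of_thought": 58, "annotator_B": 59, "annotator_C": 61,
        "concise": 51, "exemplars_alpha": 58, "exemplars_beta": 62, "exemplars_gamma": 56,
    },
}


def gains_over_standard(rates: dict[str, int]) -> dict[str, int]:
    """Return each method's absolute gain over the standard-prompting baseline."""
    baseline = rates["standard"]
    return {m: r - baseline for m, r in rates.items() if m != "standard"}


if __name__ == "__main__":
    for dataset, rates in SOLVE_RATES.items():
        print(dataset)
        for method, gain in gains_over_standard(rates).items():
            # Signed format, e.g. "+8 pp".
            print(f"  {method:<17} {gain:+d} pp")
```

With these readings, every method gains over the baseline on both datasets: the gains range from 5 to 12 points on GSM8K and from 8 to 19 points on MAWPS.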

### Key Observations

* For both datasets, "Standard prompting" yields the lowest solve rate.

* "Different annotator (C)" achieves the highest solve rate on GSM8K (about 18%) and the second-highest on MAWPS (about 61%, just behind "exemplars from GSM8K (β)" at about 62%).

* The three "exemplars from GSM8K" variants (α, β, γ) all reach about 13% on GSM8K, but spread from about 56% to 62% on MAWPS.

* Every prompting method scores substantially higher on MAWPS (about 43% to 62%) than on GSM8K (about 6% to 18%).
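The observations in this list can be checked mechanically against the approximate readings from the Detailed Analysis. This Python sketch assumes those estimated values and uses hypothetical method keys; it illustrates the comparisons and is not an analysis of the underlying experiment.

```python
"""Check the key observations against approximate solve rates (%).

Values are estimates read off the bar chart; method keys are illustrative.
"""

SOLVE_RATES: dict[str, dict[str, int]] = {
    "GSM8K": {
        "standard": 6, "chain_of_thought": 14, "annotator_B": 16, "annotator_C": 18,
        "concise": 11, "exemplars_alpha": 13, "exemplars_beta": 13, "exemplars_gamma": 13,
    },
    "MAWPS": {
        "standard": 43, "chain_of_thought": 58, "annotator_B": 59, "annotator_C": 61,
        "concise": 51, "exemplars_alpha": 58, "exemplars_beta": 62, "exemplars_gamma": 56,
    },
}
EXEMPLAR_SETS = ("exemplars_alpha", "exemplars_beta", "exemplars_gamma")


def summarize(rates: dict[str, dict[str, int]]) -> dict[str, object]:
    gsm8k, mawps = rates["GSM8K"], rates["MAWPS"]
    return {
        # Standard prompting is strictly below every other method in every dataset.
        "standard_is_lowest": all(
            all(r["standard"] < v for m, v in r.items() if m != "standard")
            for r in rates.values()
        ),
        # Best-performing method per dataset (first one in dict order if tied).
        "best": {name: max(r, key=r.get) for name, r in rates.items()},
        # Max minus min across the three GSM8K-exemplar variants, per dataset.
        "exemplar_spread": {
            name: max(r[k] for k in EXEMPLAR_SETS) - min(r[k] for k in EXEMPLAR_SETS)
            for name, r in rates.items()
        },
        # Every method scores higher on MAWPS than on GSM8K.
        "mawps_higher_everywhere": all(mawps[m] > gsm8k[m] for m in gsm8k),
    }


if __name__ == "__main__":
    for key, value in summarize(SOLVE_RATES).items():
        print(f"{key}: {value}")
```

On these readings the checks agree with the list: standard prompting is lowest everywhere, "different annotator (C)" leads on GSM8K while "exemplars from GSM8K (β)" leads on MAWPS, and the three exemplar sets differ by 0 points on GSM8K and 6 points on MAWPS.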

### Interpretation

The chart shows how different prompting methods affect solve rates on GSM8K and MAWPS. Chain-of-thought prompting and every one of its variants (different annotators, an intentionally concise style, and exemplars drawn from GSM8K) outperform standard prompting on both datasets. This suggests that the benefit of including intermediate reasoning steps in the exemplars does not hinge on one particular annotator, writing style, or exemplar set. The "exemplars from GSM8K" variants perform on par with the original chain-of-thought prompt (about 13% versus 14% on GSM8K, and 56% to 62% versus 58% on MAWPS). The intentionally concise style yields the smallest gain among the variants (about 11% on GSM8K and 51% on MAWPS), which hints that the amount of reasoning detail in the exemplars may matter. The higher solve rates on MAWPS for every method suggest that MAWPS is easier for these models than GSM8K, although the chart alone cannot separate dataset difficulty from other factors. Finally, the spread among the chain-of-thought variants (roughly 11% to 18% on GSM8K and 51% to 62% on MAWPS) indicates that the specific annotator, phrasing, and choice of exemplars still influence performance.
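The interpretation rests on two quantities: how far the weakest chain-of-thought variant sits above standard prompting, and how widely the variants differ from one another. A minimal Python sketch computing both, assuming the approximate readings from the Detailed Analysis and hypothetical method keys:

```python
"""Range of the chain-of-thought variants versus the standard-prompting baseline.

Solve rates (%) are approximate readings from the bar chart; method keys are
illustrative labels introduced for this sketch.
"""

SOLVE_RATES: dict[str, dict[str, int]] = {
    "GSM8K": {
        "standard": 6, "chain_of_thought": 14, "annotator_B": 16, "annotator_C": 18,
        "concise": 11, "exemplars_alpha": 13, "exemplars_beta": 13, "exemplars_gamma": 13,
    },
    "MAWPS": {
        "standard": 43, "chain_of_thought": 58, "annotator_B": 59, "annotator_C": 61,
        "concise": 51, "exemplars_alpha": 58, "exemplars_beta": 62, "exemplars_gamma": 56,
    },
}


def variant_summary(rates: dict[str, int]) -> tuple[int, int, int]:
    """Return (lowest variant, highest variant, lowest variant's margin over standard)."""
    # Every method other than standard prompting counts as a chain-of-thought variant.
    variants = [r for m, r in rates.items() if m != "standard"]
    low, high = min(variants), max(variants)
    return low, high, low - rates["standard"]


if __name__ == "__main__":
    for dataset, rates in SOLVE_RATES.items():
        low, high, margin = variant_summary(rates)
        print(f"{dataset}: variants span {low}-{high}% (spread {high - low} pp); "
              f"weakest variant beats standard by {margin} pp")
```

With these readings, even the weakest variant (the intentionally concise style) beats standard prompting by about 5 points on GSM8K and 8 points on MAWPS, while the variants themselves span about 7 and 11 points respectively. Both the consistent advantage over standard prompting and the sensitivity to exemplar content show up in the numbers.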