
## Bar Chart: Solve Rate Comparison for Different Prompting Strategies

### Overview

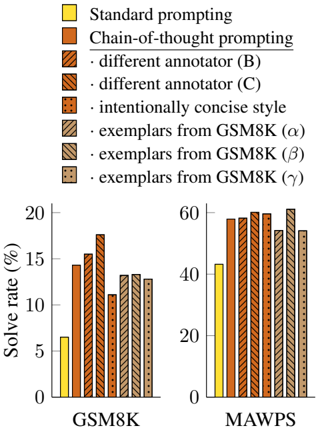

This figure is a bar chart comparing the solve rates of several prompting strategies on two math word-problem datasets, GSM8K and MAWPS. It has two sub-charts, one per dataset. Each bar shows the solve rate (%) of one prompting strategy.

### Components/Axes

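* **X-axis:** Dataset names (GSM8K and MAWPS), one sub-chart each. Within each sub-chart, the bars represent the prompting strategies.

* **Y-axis:** Solve rate (%), from 0 to 20 for GSM8K and from 0 to 60 for MAWPS. The two sub-charts use different scales.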

* **Legend (Top-Left):**
  * Yellow: Standard prompting
  * Orange: Chain-of-thought prompting
  * Light Brown (Striped): different annotator (B)
  * Darker Brown (Striped): different annotator (C)
  * Black: intentionally concise style
  * Dark Grey (Striped): exemplars from GSM8K (α)
  * Medium Grey (Striped): exemplars from GSM8K (β)
  * Light Grey (Striped): exemplars from GSM8K (γ)

### Detailed Analysis

**GSM8K Sub-Chart:**

* **Standard prompting (Yellow):** Solve rate approximately 6%.

* **Chain-of-thought prompting (Orange):** Solve rate approximately 16%.

* **different annotator (B) (Light Brown):** Solve rate approximately 14%.

* **different annotator (C) (Darker Brown):** Solve rate approximately 11%.

* **intentionally concise style (Black):** Solve rate approximately 13%.

* **exemplars from GSM8K (α) (Dark Grey):** Solve rate approximately 13%.

* **exemplars from GSM8K (β) (Medium Grey):** Solve rate approximately 12%.

* **exemplars from GSM8K (γ) (Light Grey):** Solve rate approximately 12%.

**MAWPS Sub-Chart:**

* **Standard prompting (Yellow):** Solve rate approximately 45%.

* **Chain-of-thought prompting (Orange):** Solve rate approximately 57%.

* **different annotator (B) (Light Brown):** Solve rate approximately 57%.

* **different annotator (C) (Darker Brown):** Solve rate approximately 56%.

* **intentionally concise style (Black):** Solve rate approximately 56%.

* **exemplars from GSM8K (α) (Dark Grey):** Solve rate approximately 56%.

* **exemplars from GSM8K (β) (Medium Grey):** Solve rate approximately 55%.

* **exemplars from GSM8K (γ) (Light Grey):** Solve rate approximately 55%.
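To compare each strategy with the baseline, the readings above can be converted into absolute gains over standard prompting. This is a minimal Python sketch. The numbers are the approximate values estimated from the chart, not the exact results behind it, and `READINGS` and `gain_over_standard` are hypothetical names introduced for illustration.

```python
# Approximate solve rates (%) estimated from the chart above. These are visual
# readings, not the exact numbers behind the figure.
READINGS = {
    "GSM8K": {
        "standard": 6,
        "chain-of-thought": 16,
        "annotator B": 14,
        "annotator C": 11,
        "concise style": 13,
        "GSM8K exemplars (alpha)": 13,
        "GSM8K exemplars (beta)": 12,
        "GSM8K exemplars (gamma)": 12,
    },
    "MAWPS": {
        "standard": 45,
        "chain-of-thought": 57,
        "annotator B": 57,
        "annotator C": 56,
        "concise style": 56,
        "GSM8K exemplars (alpha)": 56,
        "GSM8K exemplars (beta)": 55,
        "GSM8K exemplars (gamma)": 55,
    },
}


def gain_over_standard(dataset: str) -> dict[str, int]:
    """Gain in percentage points of each strategy over standard prompting."""
    rates = READINGS[dataset]
    baseline = rates["standard"]
    # Skip the baseline itself; every other entry is compared against it.
    return {name: rate - baseline for name, rate in rates.items() if name != "standard"}


if __name__ == "__main__":
    for dataset in READINGS:
        print(dataset)
        for name, gain in gain_over_standard(dataset).items():
            print(f"  {name}: {gain:+d} points")
```

On these estimates, the original chain-of-thought exemplars add roughly 10 points on GSM8K and 12 points on MAWPS. The absolute gains are therefore similar even though the two baselines differ by almost 40 points.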

### Key Observations

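* Chain-of-thought prompting outperforms standard prompting on both datasets (about 16% vs. 6% on GSM8K and 57% vs. 45% on MAWPS), and every chain-of-thought variant in the chart also beats standard prompting.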

* Solve rates are much higher on MAWPS (about 45 to 57%) than on GSM8K (about 6 to 16%) for every prompting strategy.

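* Changing the annotator (B, C) or the style (concise) affects solve rates only modestly, and less on MAWPS than on GSM8K. On GSM8K these variants reach about 11 to 14% compared with 16% for the original chain-of-thought exemplars, while on MAWPS they stay at about 56 to 57%.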

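* The three exemplar sets drawn from GSM8K (α, β, γ) reach about 55 to 56% on MAWPS, close to the original chain-of-thought exemplars. This suggests that chain-of-thought exemplars remain effective when taken from a different dataset.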
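The claim that the variants matter little, especially on MAWPS, can be made concrete. One way is to compare how much the chain-of-thought variants differ from one another with how far the weakest of them sits above standard prompting. The sketch below is a hedged Python illustration. It uses the approximate readings from this description, not exact experimental values, and `STANDARD`, `COT_VARIANTS`, `variant_spread`, and `worst_case_margin` are hypothetical names.

```python
# Approximate solve rates (%) read from the chart. COT_VARIANTS lists the seven
# chain-of-thought bars in legend order: original, annotator B, annotator C,
# concise style, and GSM8K exemplar sets alpha, beta, gamma.
STANDARD = {"GSM8K": 6, "MAWPS": 45}
COT_VARIANTS = {
    "GSM8K": [16, 14, 11, 13, 13, 12, 12],
    "MAWPS": [57, 57, 56, 56, 56, 55, 55],
}


def variant_spread(dataset: str) -> int:
    """Range (max - min) in percentage points across the chain-of-thought variants."""
    rates = COT_VARIANTS[dataset]
    return max(rates) - min(rates)


def worst_case_margin(dataset: str) -> int:
    """How far the weakest chain-of-thought variant sits above standard prompting."""
    return min(COT_VARIANTS[dataset]) - STANDARD[dataset]


if __name__ == "__main__":
    for dataset in COT_VARIANTS:
        print(f"{dataset}: spread {variant_spread(dataset)} points, "
              f"weakest variant beats standard by {worst_case_margin(dataset)} points")
```

On these readings, the variants span about 5 points on GSM8K, which equals the weakest variant's margin over standard prompting. On MAWPS they span only about 2 points against a margin of about 10 points.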

### Interpretation

The data suggest that chain-of-thought prompting reliably improves language-model performance on math word problems: every chain-of-thought variant beats standard prompting on both datasets. The gap between the datasets indicates that GSM8K is considerably harder than MAWPS, since every strategy scores far higher on MAWPS. Changing the annotator or the writing style shifts results by only a few points, so using chain-of-thought prompting at all matters more than exactly how the exemplars are written. Even so, on GSM8K every variant falls somewhat below the original chain-of-thought exemplars. The strong MAWPS results with GSM8K exemplars indicate that exemplars written for one dataset can improve performance on another. Overall, the chart shows that prompt design can substantially change how well a language model solves math word problems.