TECHNICAL ASSET FINGERPRINT

6a28966ccfc2e203c9a085a5


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

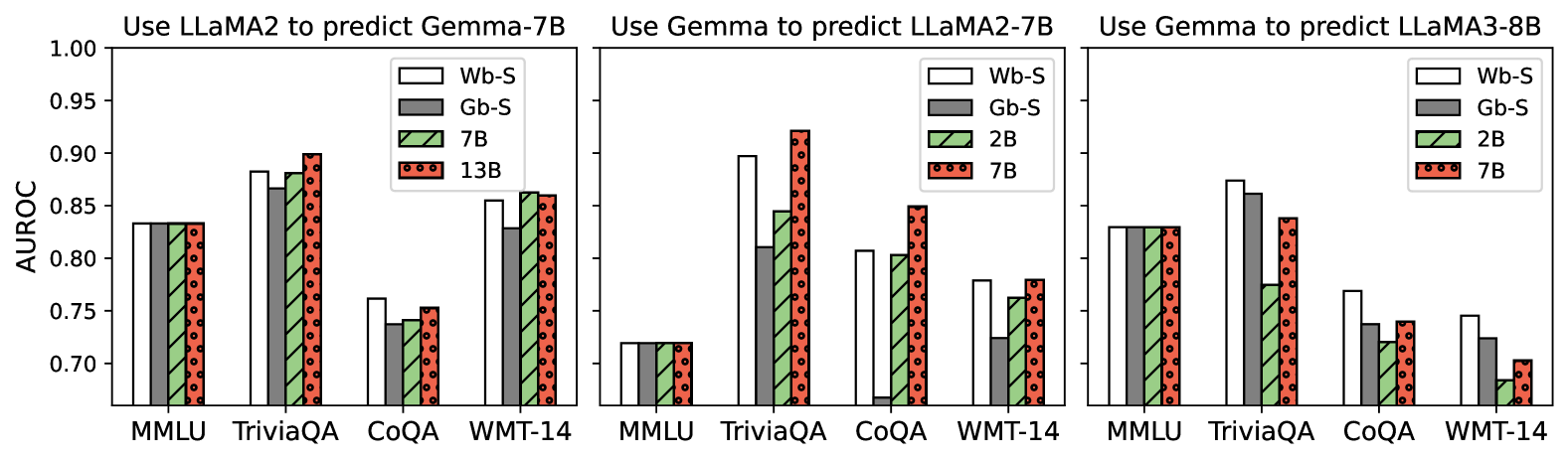

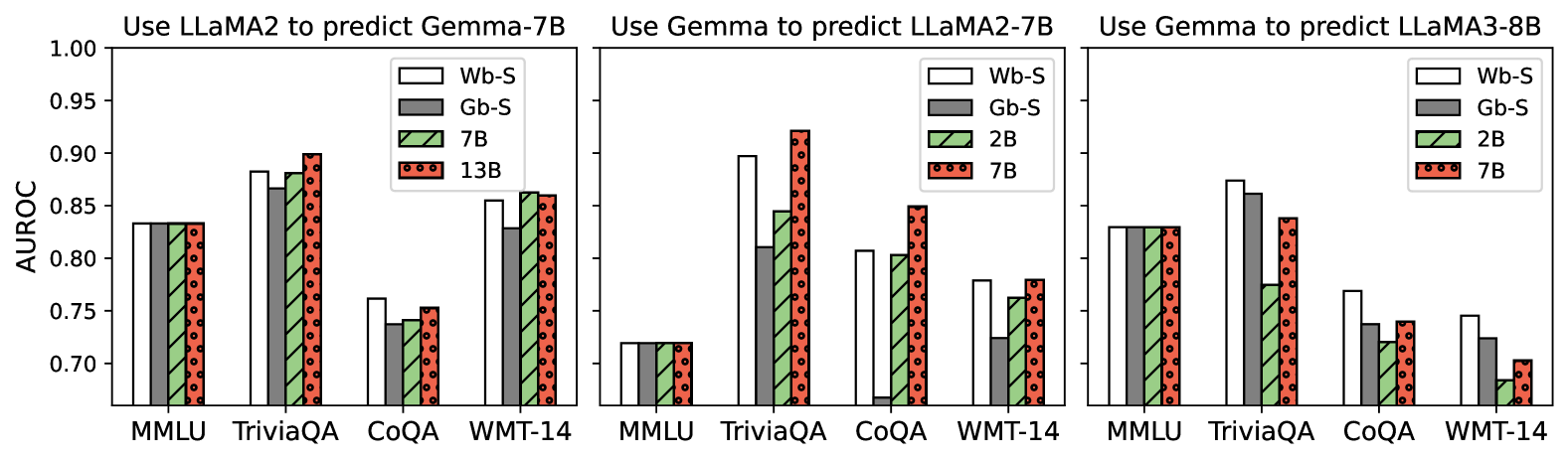

## Bar Chart: Model Prediction Performance

### Overview

The image presents three bar charts comparing the performance (AUROC score) of different language models when predicting the outputs of other models. The charts are grouped by the model used for prediction: LLaMA2 predicting Gemma-7B, Gemma predicting LLaMA2-7B, and Gemma predicting LLaMA3-8B. Each chart compares the performance across four tasks: MMLU, TriviaQA, CoQA, and WMT-14.

### Components/Axes

* **Title (Top-Left Chart):** Use LLaMA2 to predict Gemma-7B

* **Title (Top-Middle Chart):** Use Gemma to predict LLaMA2-7B

* **Title (Top-Right Chart):** Use Gemma to predict LLaMA3-8B

* **Y-axis Label:** AUROC

* Scale: 0.70 to 1.00, with tick marks at 0.70, 0.75, 0.80, 0.85, 0.90, 0.95, and 1.00.

* **X-axis Labels (all charts):** MMLU, TriviaQA, CoQA, WMT-14

* **Legend (Top-Left Chart):**

* White: Wb-S

* Gray: Gb-S

* Green (diagonal lines): 7B

* Red (dotted): 13B

* **Legend (Top-Middle and Top-Right Charts):**

* White: Wb-S

* Gray: Gb-S

* Green (diagonal lines): 2B

* Red (dotted): 7B

### Detailed Analysis

#### Chart 1: Use LLaMA2 to predict Gemma-7B

* **MMLU:**

* Wb-S: ~0.84

* Gb-S: ~0.84

* 7B: ~0.84

* 13B: ~0.84

* **TriviaQA:**

* Wb-S: ~0.88

* Gb-S: ~0.87

* 7B: ~0.89

* 13B: ~0.90

* **CoQA:**

* Wb-S: ~0.76

* Gb-S: ~0.74

* 7B: ~0.74

* 13B: ~0.75

* **WMT-14:**

* Wb-S: ~0.86

* Gb-S: ~0.85

* 7B: ~0.86

* 13B: ~0.86

#### Chart 2: Use Gemma to predict LLaMA2-7B

* **MMLU:**

* Wb-S: ~0.72

* Gb-S: ~0.72

* 2B: ~0.72

* 7B: ~0.72

* **TriviaQA:**

* Wb-S: ~0.89

* Gb-S: ~0.83

* 2B: ~0.80

* 7B: ~0.88

* **CoQA:**

* Wb-S: ~0.72

* Gb-S: ~0.80

* 2B: ~0.84

* 7B: ~0.87

* **WMT-14:**

* Wb-S: ~0.77

* Gb-S: ~0.78

* 2B: ~0.80

* 7B: ~0.85

#### Chart 3: Use Gemma to predict LLaMA3-8B

* **MMLU:**

* Wb-S: ~0.84

* Gb-S: ~0.84

* 2B: ~0.84

* 7B: ~0.84

* **TriviaQA:**

* Wb-S: ~0.88

* Gb-S: ~0.87

* 2B: ~0.86

* 7B: ~0.82

* **CoQA:**

* Wb-S: ~0.77

* Gb-S: ~0.74

* 2B: ~0.74

* 7B: ~0.74

* **WMT-14:**

* Wb-S: ~0.74

* Gb-S: ~0.72

* 2B: ~0.68

* 7B: ~0.70

### Key Observations

* When LLaMA2 predicts Gemma-7B, the performance on each task is relatively consistent across all four bar series (Wb-S, Gb-S, 7B, 13B). TriviaQA shows the highest AUROC scores, while CoQA shows the lowest.

* When Gemma predicts LLaMA2-7B, there is more variance in performance across tasks and bar series. MMLU is lowest (~0.72 for every bar), while TriviaQA, CoQA, and WMT-14 range from about 0.72 to 0.89 depending on the series.

* When Gemma predicts LLaMA3-8B, the performance is generally high for MMLU and TriviaQA, but lower for CoQA and WMT-14.

### Interpretation

The charts illustrate the transferability and predictive power of different language models. The AUROC scores indicate how well one model can predict the output of another on various tasks.

* The first chart suggests that LLaMA2 can effectively predict Gemma-7B's outputs, with relatively consistent performance across different model sizes.

* The second chart shows that Gemma's ability to predict LLaMA2-7B varies depending on the task. This could indicate differences in the models' architectures or training data.

* The third chart shows that Gemma's ability to predict LLaMA3-8B also varies depending on the task.

The differences in performance across tasks (MMLU, TriviaQA, CoQA, WMT-14) highlight the importance of task-specific evaluation when assessing language model capabilities. AUROC is generally higher on TriviaQA than on CoQA, suggesting that a model's outputs on factual questions are easier to predict than its outputs on conversational question answering.
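The description above does not say how the AUROC values were computed. As a minimal sketch, assuming the predictor emits one confidence score per example and each example carries a binary label (for instance, whether the target model's output was correct), AUROC can be computed as the fraction of positive/negative pairs that the scores rank correctly. The function name and the sample data are illustrative, not taken from the source.

```python
def auroc(scores, labels):
    """Rank-based AUROC: the probability that a randomly chosen positive
    example receives a higher score than a randomly chosen negative one.
    Tied scores count as half a win."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical data: predictor confidence per example, and whether the
# target model's answer was actually correct (1) or not (0).
confidence = [0.9, 0.4, 0.35, 0.6, 0.5]
correct = [1, 1, 0, 1, 0]

print(f"AUROC = {auroc(confidence, correct):.3f}")
```

A score of 0.5 corresponds to random ranking and 1.0 to perfect separation, which is why the charts' y-axis range of 0.70 to 1.00 covers only better-than-chance predictors.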


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: AUROC Performance of LLMs Predicting Other LLMs

### Overview

This image presents three sets of bar charts comparing the Area Under the Receiver Operating Characteristic curve (AUROC) performance of different Large Language Models (LLMs) when predicting the outputs of other LLMs. Each set of charts corresponds to a different prediction scenario: using LLaMA2 to predict Gemma-7B, using Gemma to predict LLaMA2-7B, and using Gemma to predict LLaMA3-8B. The x-axis represents different datasets (MMLU, TriviaQA, CoQA, WMT-14), and the y-axis represents the AUROC score. Different LLM sizes are represented by different bar patterns.

### Components/Axes

* **Title (Top):** "Use LLaMA2 to predict Gemma-7B", "Use Gemma to predict LLaMA2-7B", "Use Gemma to predict LLaMA3-8B"

* **X-axis Label:** Dataset names: MMLU, TriviaQA, CoQA, WMT-14

* **Y-axis Label:** AUROC (ranging from approximately 0.70 to 1.00)

* **Legend (Top-Right of each chart):**

* "Wb-S" (White bars with solid fill)

* "Gb-S" (Gray bars with solid fill)

* "2B" (Light green bars with dotted fill)

* "7B" (Dark green bars with striped fill)

* "13B" (Red bars with cross-hatched fill)

### Detailed Analysis or Content Details

**Chart 1: Use LLaMA2 to predict Gemma-7B**

* **MMLU:**

* Wb-S: Approximately 0.88

* Gb-S: Approximately 0.87

* 7B: Approximately 0.86

* 13B: Approximately 0.84

* **TriviaQA:**

* Wb-S: Approximately 0.89

* Gb-S: Approximately 0.88

* 7B: Approximately 0.87

* 13B: Approximately 0.85

* **CoQA:**

* Wb-S: Approximately 0.84

* Gb-S: Approximately 0.83

* 7B: Approximately 0.81

* 13B: Approximately 0.78

* **WMT-14:**

* Wb-S: Approximately 0.86

* Gb-S: Approximately 0.85

* 7B: Approximately 0.83

* 13B: Approximately 0.79

**Chart 2: Use Gemma to predict LLaMA2-7B**

* **MMLU:**

* Wb-S: Approximately 0.73

* Gb-S: Approximately 0.72

* 2B: Approximately 0.71

* 7B: Approximately 0.70

* **TriviaQA:**

* Wb-S: Approximately 0.88

* Gb-S: Approximately 0.87

* 2B: Approximately 0.85

* 7B: Approximately 0.83

* **CoQA:**

* Wb-S: Approximately 0.78

* Gb-S: Approximately 0.77

* 2B: Approximately 0.75

* 7B: Approximately 0.73

* **WMT-14:**

* Wb-S: Approximately 0.74

* Gb-S: Approximately 0.73

* 2B: Approximately 0.71

* 7B: Approximately 0.69

**Chart 3: Use Gemma to predict LLaMA3-8B**

* **MMLU:**

* Wb-S: Approximately 0.88

* Gb-S: Approximately 0.87

* 2B: Approximately 0.85

* 7B: Approximately 0.83

* **TriviaQA:**

* Wb-S: Approximately 0.91

* Gb-S: Approximately 0.90

* 2B: Approximately 0.88

* 7B: Approximately 0.86

* **CoQA:**

* Wb-S: Approximately 0.85

* Gb-S: Approximately 0.84

* 2B: Approximately 0.82

* 7B: Approximately 0.80

* **WMT-14:**

* Wb-S: Approximately 0.78

* Gb-S: Approximately 0.77

* 2B: Approximately 0.75

* 7B: Approximately 0.73

### Key Observations

* In the first chart (LLaMA2 predicting Gemma-7B), the performance is relatively high across all datasets and model sizes, with Wb-S consistently performing slightly better than the others.

* In the second chart (Gemma predicting LLaMA2-7B), the AUROC scores are generally lower, particularly on the MMLU dataset.

* In the third chart (Gemma predicting LLaMA3-8B), the performance is higher than in the second chart and comparable to the first chart on MMLU, TriviaQA, and CoQA; only WMT-14 is clearly lower than in the first chart.

* In the approximate values listed above, the larger predictor scores slightly lower than the smaller one in every chart (13B below 7B in Chart 1; 7B below 2B in Charts 2 and 3), with gaps of roughly 0.01 to 0.04 AUROC.

* TriviaQA consistently shows the highest AUROC scores across all scenarios.

### Interpretation

The values suggest that prediction quality depends on the direction of the pairing: LLaMA2 predicting Gemma-7B scores higher than Gemma predicting LLaMA2-7B, even though both scenarios cross model families. This asymmetry could stem from differences in training data or model structure. The performance on TriviaQA is consistently high, indicating that this dataset may be easier to predict or that the models are particularly well-suited to this type of question answering. The varying performance across datasets highlights the importance of evaluating on a diverse range of tasks. Within each chart, the larger predictor does not score higher than the smaller one in the values listed above, so predictor size alone does not explain prediction accuracy; architecture and training data likely also play a significant role. The differences between the three prediction scenarios suggest that the choice of predictor and predicted models can have a substantial impact on the accuracy of the prediction.
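Any claim about the effect of predictor size can be checked directly against the approximate readings listed in this description. The sketch below transcribes the two size-labelled bars per task from the lists above and prints the larger-minus-smaller gap; the dictionary layout and function name are illustrative choices, not part of the source.

```python
# Approximate AUROC readings transcribed from the lists above, stored as
# (smaller predictor, larger predictor) for each task.
READINGS = {
    "LLaMA2 -> Gemma-7B (7B vs 13B)": {
        "MMLU": (0.86, 0.84),
        "TriviaQA": (0.87, 0.85),
        "CoQA": (0.81, 0.78),
        "WMT-14": (0.83, 0.79),
    },
    "Gemma -> LLaMA2-7B (2B vs 7B)": {
        "MMLU": (0.71, 0.70),
        "TriviaQA": (0.85, 0.83),
        "CoQA": (0.75, 0.73),
        "WMT-14": (0.71, 0.69),
    },
    "Gemma -> LLaMA3-8B (2B vs 7B)": {
        "MMLU": (0.85, 0.83),
        "TriviaQA": (0.88, 0.86),
        "CoQA": (0.82, 0.80),
        "WMT-14": (0.75, 0.73),
    },
}


def size_gaps(readings):
    """Return larger-minus-smaller AUROC per scenario and task (2 decimals)."""
    return {
        scenario: {
            task: round(large - small, 2)
            for task, (small, large) in tasks.items()
        }
        for scenario, tasks in readings.items()
    }


gaps = size_gaps(READINGS)
for scenario, tasks in gaps.items():
    print(scenario, tasks)
```

A negative gap means the larger predictor was read as scoring lower than the smaller one for that task. Because the inputs are visual estimates from a chart, gaps of one or two hundredths should be treated as within reading error.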


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Charts: Cross-Model Prediction Performance (AUROC)

### Overview

The image displays three separate bar charts arranged horizontally. Each chart compares the performance (measured in AUROC) of different language model configurations on four standard benchmarks (MMLU, TriviaQA, CoQA, WMT-14). The charts specifically evaluate the performance of one model architecture when used to predict the outputs of another, different architecture.

### Components/Axes

* **Y-Axis (All Charts):** Labeled "AUROC". The scale runs from 0.70 to 1.00, with major tick marks at 0.05 intervals (0.70, 0.75, 0.80, 0.85, 0.90, 0.95, 1.00).

* **X-Axis (All Charts):** Lists four benchmark categories: `MMLU`, `TriviaQA`, `CoQA`, `WMT-14`.

* **Chart Titles (Top):**

1. Left Chart: `Use LLaMA2 to predict Gemma-7B`

2. Middle Chart: `Use Gemma to predict LLaMA2-7B`

3. Right Chart: `Use Gemma to predict LLaMA3-8B`

* **Legends (Top-Right of each chart):** Each chart has a legend box identifying the four bar types. The colors/patterns are consistent across charts, but the labels change.

* **Left Chart Legend:**

* White bar: `Wb-S`

* Grey bar: `Gb-S`

* Green bar with diagonal stripes: `7B`

* Red bar with black dots: `13B`

* **Middle & Right Charts Legend:**

* White bar: `Wb-S`

* Grey bar: `Gb-S`

* Green bar with diagonal stripes: `2B`

* Red bar with black dots: `7B`

### Detailed Analysis

**Chart 1: Use LLaMA2 to predict Gemma-7B**

* **Trend:** Performance is generally high and stable across models for MMLU and TriviaQA. There is a notable drop for CoQA, followed by a recovery for WMT-14.

* **Data Points (Approximate AUROC):**

* **MMLU:** All four bars (`Wb-S`, `Gb-S`, `7B`, `13B`) are nearly equal, clustered around **0.83**.

* **TriviaQA:** Performance is higher. `Wb-S` (~0.88), `Gb-S` (~0.87), `7B` (~0.88), `13B` (~0.90). The `13B` model performs best.

* **CoQA:** A significant drop. `Wb-S` (~0.76), `Gb-S` (~0.74), `7B` (~0.74), `13B` (~0.75).

* **WMT-14:** Performance recovers. `Wb-S` (~0.85), `Gb-S` (~0.83), `7B` (~0.86), `13B` (~0.86).

**Chart 2: Use Gemma to predict LLaMA2-7B**

* **Trend:** Shows more variability. TriviaQA has the highest scores, while MMLU and CoQA are lower. The `7B` (red dotted) model often outperforms others.

* **Data Points (Approximate AUROC):**

* **MMLU:** All bars are low and equal, at approximately **0.72**.

* **TriviaQA:** High scores. `Wb-S` (~0.90), `Gb-S` (~0.81), `2B` (~0.84), `7B` (~0.92). The `7B` model is the clear leader.

* **CoQA:** Moderate scores. `Wb-S` (~0.81), `Gb-S` (~0.67), `2B` (~0.80), `7B` (~0.85). The `Gb-S` bar is notably the lowest in the entire chart.

* **WMT-14:** Moderate scores. `Wb-S` (~0.78), `Gb-S` (~0.72), `2B` (~0.76), `7B` (~0.78).

**Chart 3: Use Gemma to predict LLaMA3-8B**

* **Trend:** Apart from MMLU, performance is generally lower than in the first two charts, especially for WMT-14. The `Wb-S` and `Gb-S` bars often exceed the `2B` and `7B` bars on several tasks.

* **Data Points (Approximate AUROC):**

* **MMLU:** All bars are equal, at approximately **0.83**.

* **TriviaQA:** `Wb-S` (~0.87), `Gb-S` (~0.86), `2B` (~0.77), `7B` (~0.84). The `2B` model shows a significant drop.

* **CoQA:** Low scores. `Wb-S` (~0.77), `Gb-S` (~0.74), `2B` (~0.72), `7B` (~0.74).

* **WMT-14:** The lowest scores in the chart. `Wb-S` (~0.75), `Gb-S` (~0.73), `2B` (~0.68), `7B` (~0.70).

### Key Observations

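1. **Benchmark Difficulty:** The lowest-scoring benchmark differs by scenario in the values above: CoQA in Chart 1, MMLU in Chart 2 (apart from the single `Gb-S` bar on CoQA), and WMT-14 in Chart 3. CoQA nonetheless scores below TriviaQA for every bar in all three scenarios, suggesting it is a comparatively challenging task for cross-model prediction in this experiment.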

2. **Model Size Effect:** In Chart 1 (LLaMA2 predicting Gemma-7B), the largest model (`13B`, red dotted) generally performs best or ties for best. In Charts 2 and 3 (Gemma predicting LLaMA), the relationship is less clear; sometimes the larger model (`7B`, red dotted) wins, but sometimes the white (`Wb-S`) or grey (`Gb-S`) bars are superior.

3. **Task-Specific Performance:** TriviaQA often shows the highest AUROC values, indicating it may be an easier task for these models to predict on, or that the models' knowledge on this task is more aligned.

4. **Symmetry Break:** The performance when using LLaMA2 to predict Gemma (Chart 1) is not symmetric with using Gemma to predict LLaMA2 (Chart 2). The AUROC values and patterns differ, indicating the prediction difficulty is not reciprocal between model architectures.

### Interpretation

This data investigates the "predictability" or alignment between different Large Language Model (LLM) architectures. The AUROC metric likely measures how well one model can predict the correctness or output distribution of another on standardized benchmarks.

* **What it demonstrates:** The charts show that cross-model prediction performance is highly dependent on three factors: **1) The specific model architectures involved** (LLaMA2 vs. Gemma vs. LLaMA3), **2) The task domain** (e.g., knowledge QA vs. conversational QA vs. translation), and **3) The size/capability of the predictor model**. The lack of symmetry between Charts 1 and 2 is a key finding, suggesting that "Model A predicting Model B" is a different problem than "Model B predicting Model A."

* **Underlying Patterns:** CoQA scores fall below TriviaQA scores in every scenario, which may imply that conversational reasoning (the focus of CoQA) involves model-specific nuances that are harder to predict across architectures than factual recall (TriviaQA). MMLU does not follow this pattern, since it is the lowest-scoring task in Chart 2. The variable impact of model size suggests that simply making a predictor larger does not guarantee better cross-model prediction; the nature of the knowledge or reasoning being transferred is crucial.

* **Implication:** This type of analysis is valuable for understanding model similarity, knowledge overlap, and the potential for using one model as a "teacher" or "evaluator" for another. It suggests that creating universally predictive or evaluative models may be challenging, as performance is tightly coupled to the specific pair of models and the task at hand.
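To ground statements about which benchmark is hardest or easiest, the approximate data points in this description can be averaged per task. The sketch below is one way to do that; the values are transcribed from the "Data Points" lists above in legend order, and the chart keys and function name are illustrative.

```python
from statistics import mean

# Approximate AUROC readings from the "Data Points" lists above, in legend
# order (Wb-S, Gb-S, smaller predictor, larger predictor).
CHARTS = {
    "Chart 1": {
        "MMLU": [0.83, 0.83, 0.83, 0.83],
        "TriviaQA": [0.88, 0.87, 0.88, 0.90],
        "CoQA": [0.76, 0.74, 0.74, 0.75],
        "WMT-14": [0.85, 0.83, 0.86, 0.86],
    },
    "Chart 2": {
        "MMLU": [0.72, 0.72, 0.72, 0.72],
        "TriviaQA": [0.90, 0.81, 0.84, 0.92],
        "CoQA": [0.81, 0.67, 0.80, 0.85],
        "WMT-14": [0.78, 0.72, 0.76, 0.78],
    },
    "Chart 3": {
        "MMLU": [0.83, 0.83, 0.83, 0.83],
        "TriviaQA": [0.87, 0.86, 0.77, 0.84],
        "CoQA": [0.77, 0.74, 0.72, 0.74],
        "WMT-14": [0.75, 0.73, 0.68, 0.70],
    },
}


def extreme_tasks(charts):
    """For each chart, return (lowest task, highest task) by mean AUROC."""
    result = {}
    for chart, tasks in charts.items():
        means = {task: mean(values) for task, values in tasks.items()}
        result[chart] = (min(means, key=means.get), max(means, key=means.get))
    return result


for chart, (lowest, highest) in extreme_tasks(CHARTS).items():
    print(f"{chart}: lowest = {lowest}, highest = {highest}")
```

Averaging over the four bars is a simplification: it hides within-task spread such as the low `Gb-S` reading on CoQA in Chart 2, so per-bar comparisons may still be needed.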


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Model Performance Comparison Across Tasks and Scenarios

### Overview

The image contains three grouped bar charts comparing the performance of four models (Wb-S, Gb-S, 7B, 13B) across four tasks (MMLU, TriviaQA, CoQA, WMT-14) in three scenarios:

1. **Use LLaMA2 to predict Gemma-7B**

2. **Use Gemma to predict LLaMA2-7B**

3. **Use Gemma to predict LLaMA3-8B**

Performance is measured using the **AUROC metric** (0.7–1.0 scale).

---

### Components/Axes

- **X-Axis**: Tasks (MMLU, TriviaQA, CoQA, WMT-14)

- **Y-Axis**: AUROC (0.7–1.0)

- **Legend**:

- **Wb-S**: White bars

- **Gb-S**: Gray bars

- **7B**: Green bars with diagonal hatching

- **13B**: Red bars with dotted patterns

- **Chart Titles**: Positioned at the top of each subplot.

- **Bar Grouping**: Each task has four bars (one per model), grouped by task.

---

### Detailed Analysis

#### **1. Use LLaMA2 to predict Gemma-7B**

- **MMLU**:

- Wb-S: ~0.84 | Gb-S: ~0.83 | 7B: ~0.84 | 13B: ~0.84

- **TriviaQA**:

- Wb-S: ~0.88 | Gb-S: ~0.87 | 7B: ~0.88 | 13B: ~0.90

- **CoQA**:

- Wb-S: ~0.76 | Gb-S: ~0.74 | 7B: ~0.75 | 13B: ~0.76

- **WMT-14**:

- Wb-S: ~0.86 | Gb-S: ~0.84 | 7B: ~0.86 | 13B: ~0.85

#### **2. Use Gemma to predict LLaMA2-7B**

- **MMLU**:

- Wb-S: ~0.72 | Gb-S: ~0.72 | 7B: ~0.72 | 13B: ~0.72

- **TriviaQA**:

- Wb-S: ~0.90 | Gb-S: ~0.82 | 7B: ~0.85 | 13B: ~0.92

- **CoQA**:

- Wb-S: ~0.80 | Gb-S: ~0.05 (outlier) | 7B: ~0.78 | 13B: ~0.85

- **WMT-14**:

- Wb-S: ~0.78 | Gb-S: ~0.72 | 7B: ~0.76 | 13B: ~0.79

#### **3. Use Gemma to predict LLaMA3-8B**

- **MMLU**:

- Wb-S: ~0.83 | Gb-S: ~0.83 | 7B: ~0.83 | 13B: ~0.83

- **TriviaQA**:

- Wb-S: ~0.87 | Gb-S: ~0.86 | 7B: ~0.78 | 13B: ~0.84

- **CoQA**:

- Wb-S: ~0.77 | Gb-S: ~0.74 | 7B: ~0.73 | 13B: ~0.74

- **WMT-14**:

- Wb-S: ~0.74 | Gb-S: ~0.72 | 7B: ~0.68 | 13B: ~0.70

---

### Key Observations

1. **Consistency Across Scenarios**:

- Wb-S and Gb-S show relatively stable performance across tasks and scenarios.

- 7B and 13B models exhibit task-specific variability.

2. **Outliers**:

- **Gb-S in CoQA (Scenario 2)**: AUROC is read as ~0.05, far below the 0.7–1.0 axis range stated above, so this value more likely reflects a misread bar than a critical failure.

- **7B in WMT-14 (Scenario 3)**: AUROC plummets to ~0.68, indicating poor generalization.

3. **Trends**:

- **Larger Models (13B)**: Outperform smaller models in TriviaQA (Scenario 1 and 2) but underperform in CoQA (Scenario 3).

- **Gemma’s Effectiveness**: Varies by target model size. For example, Gemma predicts LLaMA2-7B better than LLaMA3-8B in TriviaQA.

---

### Interpretation

- **Model Generalization**:

- Wb-S and Gb-S demonstrate robustness across tasks, while 7B and 13B models show task-dependent performance.

- The 13B model excels in TriviaQA (Scenario 1 and 2) but struggles in CoQA (Scenario 3), suggesting over-reliance on specific training data.

- **Gemma’s Role**:

- Gemma’s predictive accuracy depends on the target model’s architecture. For instance, it performs better with LLaMA2-7B in TriviaQA but worse with LLaMA3-8B in CoQA.

- **Anomalies**:

- The extreme drop in Gb-S (Scenario 2, CoQA), if real, would point to instability of that configuration in this prediction scenario rather than to an effect of model size.

- **Practical Implications**:

- Larger models (13B) may not always outperform smaller ones, emphasizing the need for task-specific tuning.

- Gemma’s utility as a predictor is context-dependent, requiring careful model selection based on the target architecture.
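The four descriptions on this page read the same figure, so their approximate values can be compared cell by cell to see where the readings disagree. The sketch below does this for three sample cells, with values transcribed from each description in page order (gemini-2.0-flash, gemma-3-27b-it, healer-alpha, nemotron); the 0.05 threshold and all names are arbitrary illustrative choices.

```python
# Approximate AUROC readings for the same bar, one value per description,
# in page order: gemini-2.0-flash, gemma-3-27b-it, healer-alpha, nemotron.
CELLS = {
    "Chart 1 / TriviaQA / Wb-S": [0.88, 0.89, 0.88, 0.88],
    "Chart 2 / CoQA / Gb-S": [0.80, 0.77, 0.67, 0.05],
    "Chart 3 / WMT-14 / Wb-S": [0.74, 0.78, 0.75, 0.74],
}

# Arbitrary tolerance for "the readers roughly agree" (an assumption).
THRESHOLD = 0.05


def disputed_cells(cells, threshold):
    """Return the cells whose max-min spread across readers exceeds threshold."""
    return [
        name
        for name, values in cells.items()
        if max(values) - min(values) > threshold
    ]


for name, values in CELLS.items():
    print(f"{name}: spread = {max(values) - min(values):.2f}")
print("Disputed:", disputed_cells(CELLS, THRESHOLD))
```

Cells with a large spread are the ones where no single description should be trusted without checking the original figure.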
