\n

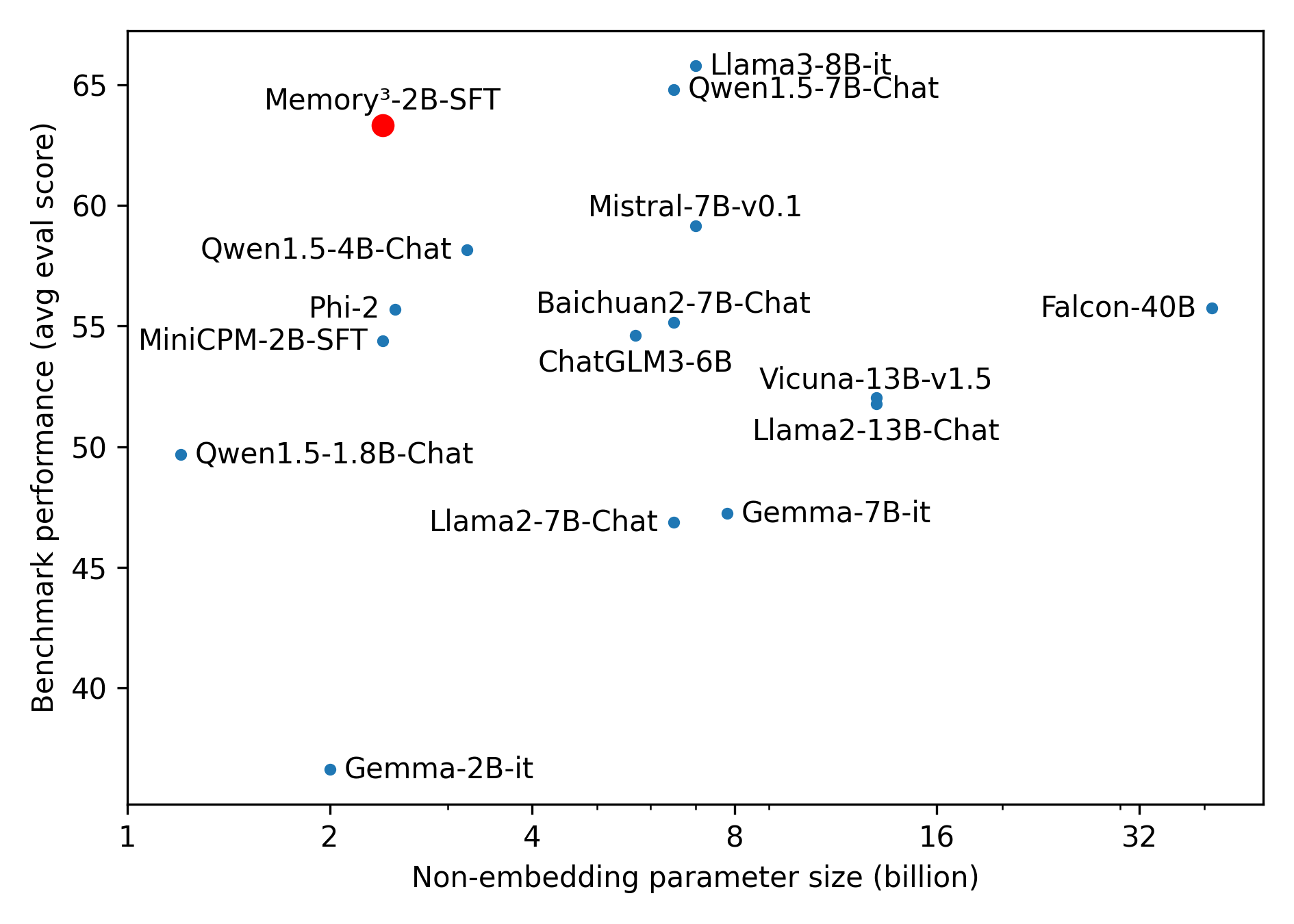

## Scatter Plot: Benchmark Performance vs. Model Size

### Overview

This image presents a scatter plot comparing the benchmark performance (average evaluation score) of various language models against their non-embedding parameter size. The plot visualizes the relationship between model size and performance, allowing for a comparison of different models.

### Components/Axes

* **X-axis:** Non-embedding parameter size (billion). Scale ranges from approximately 1 to 32 billion.

* **Y-axis:** Benchmark performance (avg eval score). Scale ranges from approximately 40 to 65.

* **Data Points:** Each point represents a specific language model. The points are labeled with the model name.

* **Models:** The following models are represented:

* Memory³-2B-SFT

* Qwen1.5-4B-Chat

* Phi-2

* MiniCPM-2B-SFT

* Qwen1.5-1.8B-Chat

* Mistral-7B-v0.1

* Baichuan2-7B-Chat

* ChatGLM3-6B

* Llama2-7B-Chat

* Vicuna-13B-v1.5

* Llama3-8B-it

* Qwen1.5-7B-Chat

* Gemma-7B-it

* Llama2-13B-Chat

* Falcon-40B

* Gemma-2B-it

### Detailed Analysis

The data points are scattered across the plot, indicating varying levels of performance for different model sizes.

* **Memory³-2B-SFT:** Located at approximately (2, 64).

* **Qwen1.5-4B-Chat:** Located at approximately (4, 59).

* **Phi-2:** Located at approximately (2, 55).

* **MiniCPM-2B-SFT:** Located at approximately (2, 54).

* **Qwen1.5-1.8B-Chat:** Located at approximately (2, 51).

* **Mistral-7B-v0.1:** Located at approximately (7, 61).

* **Baichuan2-7B-Chat:** Located at approximately (7, 57).

* **ChatGLM3-6B:** Located at approximately (6, 56).

* **Llama2-7B-Chat:** Located at approximately (7, 47).

* **Vicuna-13B-v1.5:** Located at approximately (13, 55).

* **Llama3-8B-it:** Located at approximately (8, 64).

* **Qwen1.5-7B-Chat:** Located at approximately (7, 63).

* **Gemma-7B-it:** Located at approximately (7, 48).

* **Llama2-13B-Chat:** Located at approximately (13, 52).

* **Falcon-40B:** Located at approximately (32, 55).

* **Gemma-2B-it:** Located at approximately (2, 41).

**Trends:**

* There is a general trend of increasing performance with increasing model size, but it is not strictly linear.

* Models with similar parameter sizes can exhibit significantly different performance scores.

* The largest model, Falcon-40B, does not achieve the highest performance score.

* Several models cluster around the 7 billion parameter mark.

### Key Observations

* **Outlier:** Gemma-2B-it has a relatively low benchmark performance compared to other models of similar size.

* **High Performers:** Llama3-8B-it and Memory³-2B-SFT exhibit the highest benchmark performance scores.

* **Performance Plateau:** The performance increase appears to plateau at higher parameter sizes (e.g., Falcon-40B).

### Interpretation

The scatter plot suggests that model size is a significant, but not the sole, determinant of benchmark performance. While larger models generally perform better, the architecture, training data, and other factors play a crucial role. The presence of outliers like Gemma-2B-it indicates that model design and training methodologies can have a substantial impact on performance, even with a smaller parameter size. The plateau in performance at higher parameter sizes suggests diminishing returns – increasing model size beyond a certain point may not yield significant improvements in benchmark scores. The clustering of models around the 7 billion parameter mark suggests this may be a sweet spot for balancing performance and computational cost. The data highlights the complexity of evaluating language models and the importance of considering multiple factors beyond just model size.