## Scatter Plot: Benchmark performance vs. Non-embedding parameter size

### Overview

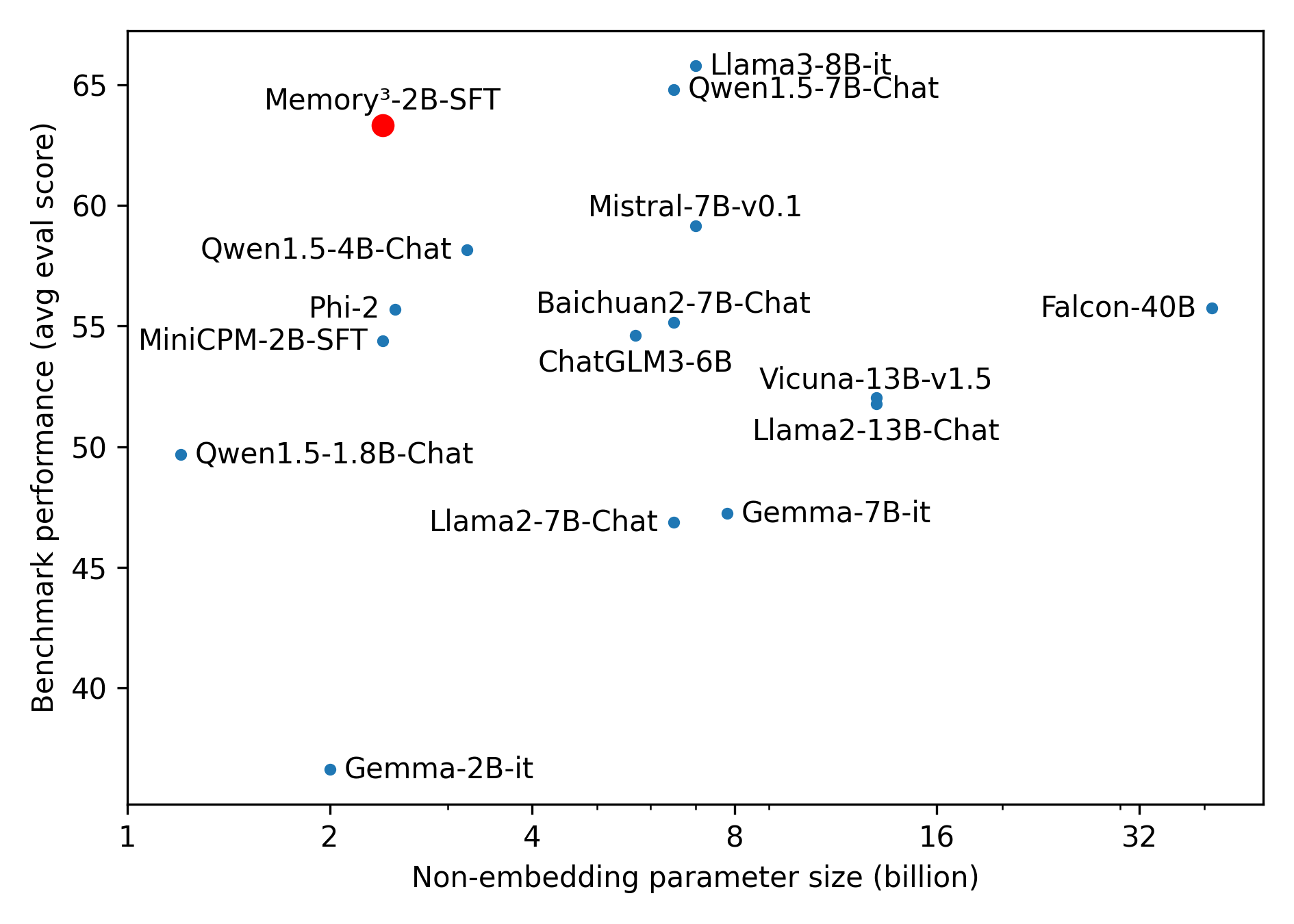

The image shows a scatter plot comparing AI model performance (y-axis: avg eval score) against model size (x-axis: non-embedding parameter size in billions). Models are represented by colored dots, with a legend on the right indicating two categories: blue dots for "Chat" models and a single red dot for "Memory³-2B-SFT".

### Components/Axes

- **X-axis**: Non-embedding parameter size (billion) ranging from 1 to 32

- **Y-axis**: Benchmark performance (avg eval score) ranging from 40 to 65

- **Legend**:

- Blue dots: Chat models (14 instances)

- Red dot: Memory³-2B-SFT (1 instance)

- **Key labels**: Model names with parameter sizes (e.g., "Llama3-8B-it", "Falcon-40B")

### Detailed Analysis

1. **Model distribution**:

- 14 blue dots (Chat models) clustered between 1.8B-40B parameters

- 1 red dot (Memory³-2B-SFT) at 2B parameters

2. **Performance range**:

- Lowest: Gemma-2B-it (37 score)

- Highest: Memory³-2B-SFT (63 score)

3. **Size-performance relationship**:

- No clear linear correlation

- Highest performance at 2B parameters (Memory³)

- Largest model (Falcon-40B) at 55 score

4. **Clustering patterns**:

- 1.8B-7B range: 6 models (Qwen1.5 variants, Phi-2, MiniCPM)

- 7B-13B range: 4 models (Baichuan2, ChatGLM3, Llama2-7B, Gemma-7B)

- 13B-40B range: 4 models (Vicuna, Llama2-13B, Falcon-40B)

### Key Observations

1. **Outlier performance**: Memory³-2B-SFT (red) achieves 63 score at 2B parameters, outperforming all larger models

2. **Size vs. performance tradeoff**:

- Falcon-40B (32B parameters) scores 55

- Llama3-8B-it (8B parameters) scores 65

3. **Efficiency cluster**: 7 models between 1.8B-4B parameters score 45-58

4. **Diminishing returns**: Models above 13B parameters show compressed performance range (50-55)

### Interpretation

The data suggests that model efficiency (performance per parameter) is more critical than raw size. Memory³-2B-SFT demonstrates exceptional performance for its size, while larger models like Falcon-40B show diminishing returns. The clustering of mid-sized models (7B-13B) around 50-55 scores indicates a potential "sweet spot" for practical deployment. The absence of a clear size-performance correlation challenges assumptions about model scaling, suggesting architectural innovations (like Memory³'s approach) may be more impactful than parameter count alone. This has implications for resource-constrained deployments where smaller, more efficient models could outperform larger ones.