## Line Chart: Training Loss Curve for Mistral-7B-v0.3-Chat

### Overview

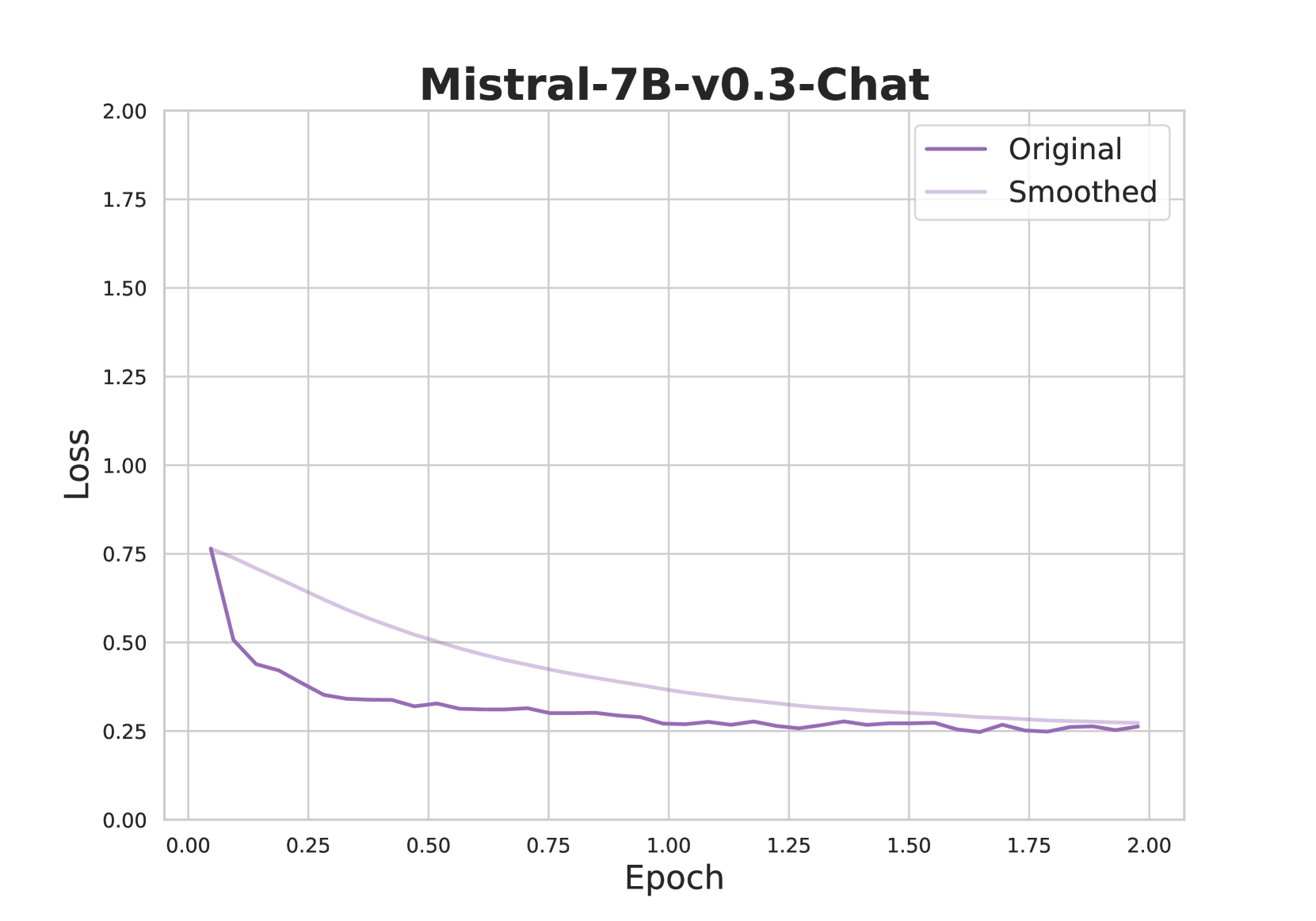

The image is a line chart of training loss against epoch for a model labeled "Mistral-7B-v0.3-Chat". It plots two data series: the raw "Original" loss values and a "Smoothed" version of the same data. The original series shows a rapid initial decrease in loss followed by a plateau, while the smoothed series declines more gradually toward the same level.

### Components/Axes

* **Chart Title:** "Mistral-7B-v0.3-Chat" (centered at the top).

* **X-Axis:** Labeled "Epoch". The scale runs from 0.00 to 2.00, with major tick marks at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **Y-Axis:** Labeled "Loss". The scale runs from 0.00 to 2.00, with major tick marks at intervals of 0.25 (0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00).

* **Legend:** Positioned in the top-right corner of the plot area. It contains two entries:

    * "Original" - represented by a dark purple line.

    * "Smoothed" - represented by a light purple (lavender) line.

* **Grid:** A light gray grid is present, with lines corresponding to the major tick marks on both axes.

### Detailed Analysis

**Data Series Trends & Approximate Values:**

1. **Original (Dark Purple Line):**

    * **Trend:** The line exhibits a very steep, near-vertical drop at the beginning, followed by a sharp knee, and then a much more gradual, fluctuating decline that plateaus.

    * **Key Points (Approximate):**

        * Epoch 0.00: Loss starts at approximately **0.75**.

        * Epoch ~0.05: Loss drops sharply to ~**0.50**.

        * Epoch ~0.10: Loss is ~**0.45**.

        * Epoch ~0.25: Loss is ~**0.35**.

        * Epoch 0.50: Loss is ~**0.30**.

        * From Epoch 0.50 to 2.00: The loss fluctuates in a narrow band between approximately **0.25 and 0.30**, showing minor ups and downs but no significant downward trend. The final value at Epoch 2.00 is approximately **0.27**.

2. **Smoothed (Light Purple Line):**

    * **Trend:** This line shows a smooth, continuous, and decelerating decline from the start to the end of the plotted epochs. It lacks the noise and sharp initial drop of the original data.

    * **Key Points (Approximate):**

        * Epoch 0.00: Loss starts at approximately **0.75** (same starting point as Original).

        * Epoch 0.25: Loss is ~**0.60**.

        * Epoch 0.50: Loss is ~**0.50**.

        * Epoch 1.00: Loss is ~**0.35**.

        * Epoch 1.50: Loss is ~**0.28**.

        * Epoch 2.00: Loss ends at approximately **0.26**, very close to the final value of the Original line.
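
The chart does not say how the "Smoothed" series was computed. One common choice that would produce the behavior described above (same starting point, slower decline, eventual convergence) is an exponential moving average. The following is a minimal sketch under that assumption; the function name `ema_smooth`, the weight of 0.9, and the sample values are illustrative and are not taken from the chart.

```python
def ema_smooth(values, weight=0.9):
    """Exponential moving average seeded with the first value.

    `weight` is a hypothetical smoothing factor: 0 means no smoothing,
    values near 1 mean heavy smoothing and therefore more lag.
    """
    if not values:
        return []
    smoothed = [values[0]]
    for v in values[1:]:
        smoothed.append(weight * smoothed[-1] + (1 - weight) * v)
    return smoothed


# Illustrative raw losses: a sharp drop followed by a flat plateau.
raw = [0.75, 0.50, 0.45, 0.35, 0.30] + [0.27] * 40
smooth = ema_smooth(raw, weight=0.9)

# The smoothed series starts at the same point, stays above the raw
# series during the drop, and only later approaches the plateau value.
print(round(smooth[0], 2), round(smooth[4], 2), round(smooth[-1], 2))
```

With a heavier weight the smoothed curve lags longer, which is one possible reason a smoothed line can still be falling well after the raw loss has flattened.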

### Key Observations

* **Rapid Initial Learning:** The most dramatic reduction in loss occurs within the first 0.1 epochs (approximately the first 5% of the displayed training time).

* **Plateau Phase:** After approximately epoch 0.5, the model's loss enters a plateau phase, indicating that further training yields minimal improvement in the loss metric.

* **Convergence of Series:** After the initial drop, the smoothed line sits well above the original line (about 0.60 versus 0.35 at epoch 0.25). The gap narrows steadily, and the two series become nearly indistinguishable from approximately epoch 1.5 onward.

* **Noise in Original Data:** The "Original" line shows visible high-frequency fluctuations, especially in the plateau region. The "Smoothed" line filters these out, at the cost of lagging behind the original during the steep early drop.
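
The first and third observations can be checked with simple arithmetic on the approximate values listed under Key Points. This is a rough sketch: the numbers are visual readings from the chart, so the results are estimates, and the variable names are illustrative.

```python
# Approximate values read off the chart (epoch -> loss).
original = {0.00: 0.75, 0.05: 0.50, 0.10: 0.45, 0.25: 0.35, 0.50: 0.30, 2.00: 0.27}
smoothed = {0.00: 0.75, 0.25: 0.60, 0.50: 0.50, 1.00: 0.35, 1.50: 0.28, 2.00: 0.26}

# Share of the total loss reduction that happens by epoch 0.10.
total_drop = original[0.00] - original[2.00]
early_drop = original[0.00] - original[0.10]
early_share = early_drop / total_drop

# Gap (smoothed minus original) at epochs where both readings exist.
shared_epochs = sorted(set(original) & set(smoothed))
gaps = {e: round(smoothed[e] - original[e], 2) for e in shared_epochs}

print(f"share of total reduction by epoch 0.10: {early_share:.1%}")
print(gaps)
```

On these readings, roughly five eighths of the total reduction occurs within the first 0.1 epochs, and the gap between the two series shrinks from about 0.25 at epoch 0.25 to about 0.01 at epoch 2.00.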

### Interpretation

This chart is a classic visualization of a model's training dynamics. The steep initial drop signifies that the model is quickly learning the most prominent patterns in the training data. The subsequent plateau suggests the model has reached a point of diminishing returns, where additional training epochs provide only marginal gains in fitting the training set. The "Smoothed" line aids interpretation by removing the stochastic noise inherent in batch-based training loss calculations, but it also lags the raw series during the early drop, so the "Original" line is the better guide to where the plateau begins (around epoch 0.5). That both lines reach a similar final value (~0.26-0.27) indicates that the smoothed series has caught up with the raw one and that the training loss has stabilized. For a practitioner, this chart would signal that training beyond 1.5-2.0 epochs may not be cost-effective unless the loss needs to be driven marginally lower for specific performance requirements.
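
The plateau reading above can be made concrete with a simple rate-of-improvement check. The sketch below is illustrative only: `first_plateau_epoch` and the threshold of 0.05 loss per epoch are hypothetical choices, and the points are the approximate "Original" values listed earlier rather than exact data.

```python
def first_plateau_epoch(points, min_drop_per_epoch=0.05):
    """Return the earliest epoch after which every segment improves by
    less than `min_drop_per_epoch`, or None if the last segment still
    improves at or above that rate.

    `points` is a list of (epoch, loss) pairs sorted by epoch.
    """
    plateau_start = None
    for (e0, l0), (e1, l1) in zip(points, points[1:]):
        rate = (l0 - l1) / (e1 - e0)  # loss reduction per epoch
        if rate < min_drop_per_epoch:
            if plateau_start is None:
                plateau_start = e0
        else:
            # Loss is still falling quickly; discard any earlier candidate.
            plateau_start = None
    return plateau_start


# Approximate "Original" readings from the chart.
original_points = [
    (0.00, 0.75), (0.05, 0.50), (0.10, 0.45),
    (0.25, 0.35), (0.50, 0.30), (2.00, 0.27),
]
plateau = first_plateau_epoch(original_points)
print(plateau)
```

On these readings the check places the start of the plateau at epoch 0.5, which matches the visual description. A stricter or looser threshold would move that point, so the value is a judgment call rather than a property of the data.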