# Technical Document Extraction: Model Accuracy vs. Generation Budget

## 1. Image Overview

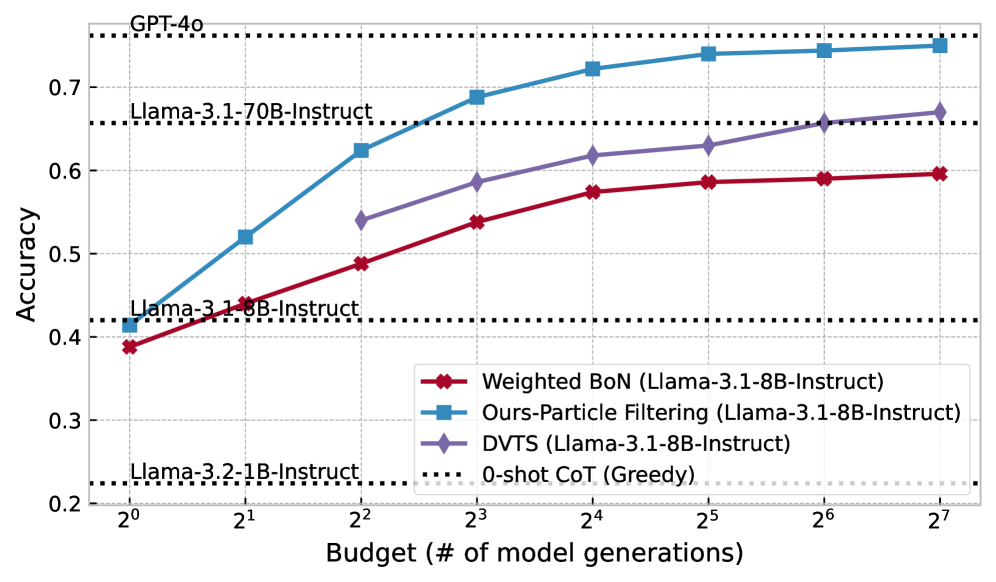

This image is a line graph comparing the performance (Accuracy) of different inference-time scaling methods against a computational budget (number of model generations). The chart specifically evaluates these methods using the **Llama-3.1-8B-Instruct** model as a base, while providing horizontal baselines for other models.

---

## 2. Component Isolation

### A. Header / Baselines (Top & Middle Regions)

The chart contains four horizontal black dotted lines representing "0-shot CoT (Greedy)" performance for various models. These serve as static benchmarks.

| Model | Approximate Accuracy |
| :--- | :--- |
| GPT-4o | 0.76 |
| Llama-3.1-70B-Instruct | 0.66 |
| Llama-3.1-8B-Instruct | 0.42 |
| Llama-3.2-1B-Instruct | 0.225 |

### B. Main Chart Area (Data Series)

The x-axis is logarithmic (base 2), and the y-axis is linear. There are three primary data series plotted.

#### Legend

* **Red Line with Diamond Markers**: `Weighted BoN (Llama-3.1-8B-Instruct)`

* **Blue Line with Square Markers**: `Ours-Particle Filtering (Llama-3.1-8B-Instruct)`

* **Purple Line with Diamond Markers**: `DVTS (Llama-3.1-8B-Instruct)`

* **Black Dotted Line**: `0-shot CoT (Greedy)` (Reference for the baselines mentioned in section A).

---

## 3. Trend Verification and Data Extraction

### Series 1: Ours-Particle Filtering (Blue Square)

* **Trend**: This is the highest-performing method. It shows a steep logarithmic growth from $2^0$ to $2^4$, then begins to plateau as it approaches the GPT-4o baseline.

* **Data Points (Approximate):**
    * $2^0$ (1): 0.41
    * $2^1$ (2): 0.52
    * $2^2$ (4): 0.62
    * $2^3$ (8): 0.69
    * $2^4$ (16): 0.72
    * $2^5$ (32): 0.74
    * $2^6$ (64): 0.745
    * $2^7$ (128): 0.75

### Series 2: DVTS (Purple Diamond)

* **Trend**: This series starts at a higher budget ($2^2$). It rises steadily, staying above Weighted BoN but well below Particle Filtering at every shared budget. It reaches the Llama-3.1-70B-Instruct baseline (approx. 0.66) at a budget of $2^6$ and edges past it (approx. 0.67) at $2^7$.

* **Data Points (Approximate):**
    * $2^2$ (4): 0.54
    * $2^3$ (8): 0.59
    * $2^4$ (16): 0.62
    * $2^5$ (32): 0.63
    * $2^6$ (64): 0.66
    * $2^7$ (128): 0.67

### Series 3: Weighted BoN (Red Diamond)

* **Trend**: The lowest-performing of the three methods. Accuracy rises by about 0.05 per doubling up to $2^3$, then flattens, ending at roughly 0.595 and never reaching the Llama-3.1-70B-Instruct baseline (0.66) within the budget shown.

* **Data Points (Approximate):**
    * $2^0$ (1): 0.39
    * $2^1$ (2): 0.44
    * $2^2$ (4): 0.49
    * $2^3$ (8): 0.54
    * $2^4$ (16): 0.575
    * $2^5$ (32): 0.585
    * $2^6$ (64): 0.59
    * $2^7$ (128): 0.595
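
The plateau described in the trends above can be checked numerically. The following is a minimal sketch, assuming the approximate values read off the chart for each series; the names `SERIES` and `gains_per_doubling` are illustrative and do not come from the source figure.

```python
# Approximate accuracies read off the chart (assumed, not exact).
# Keys are budgets (number of generations); values are accuracies.
SERIES = {
    "Particle Filtering": {1: 0.41, 2: 0.52, 4: 0.62, 8: 0.69,
                           16: 0.72, 32: 0.74, 64: 0.745, 128: 0.75},
    "DVTS": {4: 0.54, 8: 0.59, 16: 0.62, 32: 0.63, 64: 0.66, 128: 0.67},
    "Weighted BoN": {1: 0.39, 2: 0.44, 4: 0.49, 8: 0.54,
                     16: 0.575, 32: 0.585, 64: 0.59, 128: 0.595},
}


def gains_per_doubling(points):
    """Return (budget, gain) pairs, where gain is the accuracy at
    `budget` minus the accuracy at the previous plotted budget."""
    budgets = sorted(points)
    return [
        (b, round(points[b] - points[a], 3))
        for a, b in zip(budgets, budgets[1:])
    ]


for name, points in SERIES.items():
    print(name, gains_per_doubling(points))
```

With these readings, Particle Filtering gains about 0.11 on the first doubling and about 0.005 on each of the last two, while DVTS shows a small bump (about 0.03) at $2^6$ rather than a strictly shrinking gain.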

---

## 4. Axis and Labels

* **Y-Axis Title**: `Accuracy`

* **Y-Axis Markers**: 0.2, 0.3, 0.4, 0.5, 0.6, 0.7

* **X-Axis Title**: `Budget (# of model generations)`

* **X-Axis Markers (Log Scale)**: $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7$ (representing 1 to 128 generations).
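
The even spacing of the x-axis ticks follows from the base-2 log scale: a budget $b$ is drawn at position $\log_2 b$. A minimal sketch of that mapping, with illustrative variable names that are not part of the source figure:

```python
import math

# Budgets at the x-axis ticks: 2^0 through 2^7.
budgets = [2 ** k for k in range(8)]

# On a base-2 log axis, a budget b sits at position log2(b),
# so each doubling of the budget moves one unit to the right.
positions = [math.log2(b) for b in budgets]

for b, p in zip(budgets, positions):
    print(f"budget={b:>3}  axis position={p:.0f}")
```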

---

## 5. Key Technical Insights

1. **Efficiency**: The "Ours-Particle Filtering" method using an 8B model comes within about 0.01 of the GPT-4o greedy baseline (approx. 0.75 vs. 0.76) with a budget of 128 generations ($2^7$).

2. **Scaling**: All three methods show diminishing returns overall: the curves flatten at higher budgets, with Particle Filtering and Weighted BoN each gaining only about 0.005 per doubling beyond $2^5$.

3. **Model Comparison**: The 8B model with Particle Filtering outperforms the 70B model's greedy baseline (approx. 0.69 vs. 0.66) at a budget of only 8 generations ($2^3$).
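
The baseline comparisons in these insights can be reproduced from the extracted values. This is a rough sketch, assuming the approximate chart readings from Sections 2 and 3; `first_budget_reaching` is a hypothetical helper name, and the result is only as precise as the visual estimates.

```python
# Approximate greedy baselines and series values read off the chart.
BASELINES = {
    "GPT-4o": 0.76,
    "Llama-3.1-70B-Instruct": 0.66,
    "Llama-3.1-8B-Instruct": 0.42,
    "Llama-3.2-1B-Instruct": 0.225,
}
SERIES = {
    "Particle Filtering": {1: 0.41, 2: 0.52, 4: 0.62, 8: 0.69,
                           16: 0.72, 32: 0.74, 64: 0.745, 128: 0.75},
    "DVTS": {4: 0.54, 8: 0.59, 16: 0.62, 32: 0.63, 64: 0.66, 128: 0.67},
    "Weighted BoN": {1: 0.39, 2: 0.44, 4: 0.49, 8: 0.54,
                     16: 0.575, 32: 0.585, 64: 0.59, 128: 0.595},
}


def first_budget_reaching(points, target):
    """Smallest plotted budget whose accuracy is >= target,
    or None if the series never gets there in the plotted range."""
    for budget in sorted(points):
        if points[budget] >= target:
            return budget
    return None


for method, points in SERIES.items():
    for model, accuracy in BASELINES.items():
        budget = first_budget_reaching(points, accuracy)
        print(f"{method} vs {model}: {budget}")
```

Under these readings, Particle Filtering first meets the Llama-3.1-70B-Instruct baseline at a budget of 8 and DVTS at 64, while Weighted BoN does not reach it within the plotted range.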