## Code Snippet: Python Function Definitions

### Overview

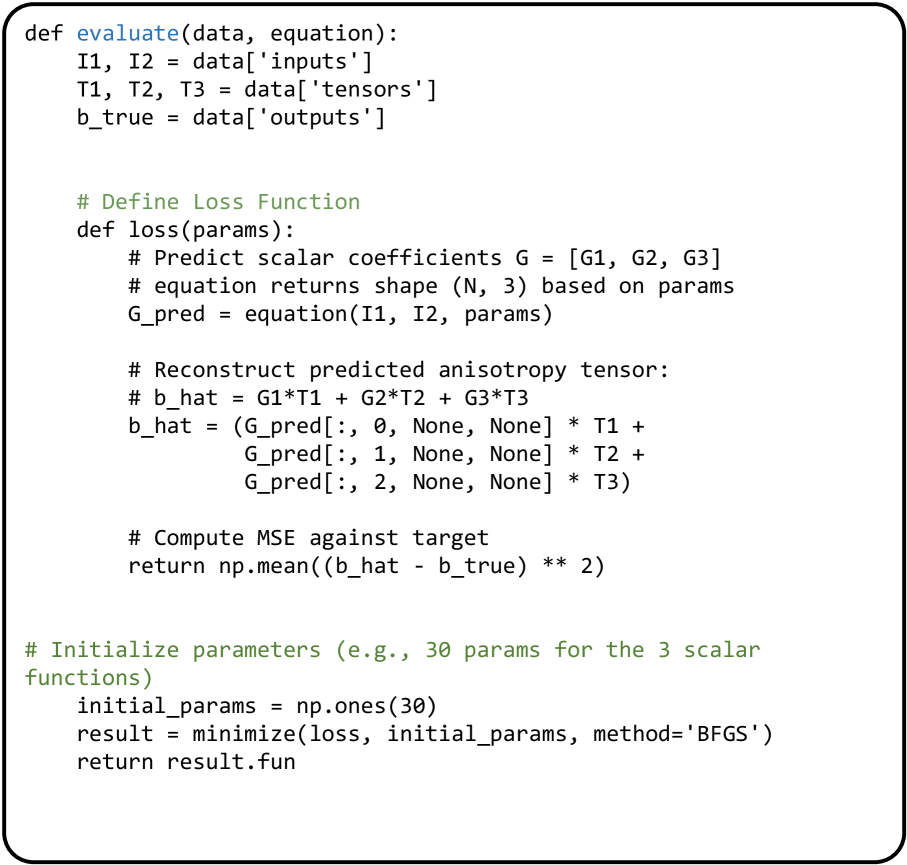

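The image contains a Python code snippet that defines two functions, `evaluate` and `loss`, followed by parameter initialization and an optimization call. The code fits model parameters by numerical optimization. Its variable names (scalar inputs `I1`, `I2`, tensors `T1`, `T2`, `T3`, and a predicted "anisotropy tensor" `b_hat`) suggest that it predicts an anisotropy tensor as a weighted combination of basis tensors.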

### Components

* **Function `evaluate(data, equation)`:**
  * Input arguments: `data`, `equation`
  * Extracts `I1`, `I2` from `data['inputs']`
  * Extracts `T1`, `T2`, `T3` from `data['tensors']`
  * Extracts `b_true` from `data['outputs']`

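* **Function `loss(params)`:** uses `I1`, `I2`, `T1`, `T2`, `T3`, `b_true`, and `equation` without receiving them as arguments, so it appears to be a closure defined inside `evaluate`.
  * Input argument: `params`
  * Predicts the scalar coefficients `G = [G1, G2, G3]` with `G_pred = equation(I1, I2, params)`
  * `equation` returns an array of shape `(N, 3)` determined by `params` (presumably one row of coefficients per sample)
  * Reconstructs the predicted anisotropy tensor `b_hat` from `G_pred` and `T1`, `T2`, `T3`
  * Computes the mean squared error (MSE) against the target `b_true`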

* **Initialization and optimization** (the `return` statement implies these lines belong to `evaluate`):
  * `initial_params = np.ones(30)`: initializes the parameters as an array of 30 ones.
  * `result = minimize(loss, initial_params, method='BFGS')`: minimizes `loss` using the BFGS optimization method.
  * `return result.fun`: returns the minimized loss value, not the fitted parameters.

### Content Details

**Function `evaluate(data, equation)`:**

* The function takes `data` and `equation` as input.

* It extracts input data (`I1`, `I2`), tensor data (`T1`, `T2`, `T3`), and target output (`b_true`) from the `data` dictionary.
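The snippet does not show how the arrays inside `data` are laid out. A minimal sketch, assuming `data['inputs']` stacks `I1` and `I2` along the first axis, `data['tensors']` stacks `T1`, `T2`, `T3` as one `3 x 3` tensor per sample, and `data['outputs']` holds one `3 x 3` target per sample (all of these shapes are assumptions), shows one layout under which the extraction can be written as plain tuple unpacking:

```python
import numpy as np

# Hypothetical layout (assumption): the image only shows the keys
# 'inputs', 'tensors' and 'outputs', not the array shapes.
N = 5                                   # number of samples (illustrative)
rng = np.random.default_rng(0)

data = {
    "inputs": rng.uniform(-1.0, 1.0, size=(2, N)),   # rows: I1, I2
    "tensors": rng.normal(size=(3, N, 3, 3)),        # T1, T2, T3: one 3x3 per sample
    "outputs": rng.normal(size=(N, 3, 3)),           # b_true: one 3x3 per sample
}

# Unpacking along the first axis recovers the named arrays.
I1, I2 = data["inputs"]
T1, T2, T3 = data["tensors"]
b_true = data["outputs"]

print(I1.shape, T1.shape, b_true.shape)  # (5,) (5, 3, 3) (5, 3, 3)
```

With this layout, `I1` and `I2` are 1-D arrays of length `N`, which is what a function returning shape `(N, 3)` from them would expect.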

**Function `loss(params)`:**

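* It takes the parameter vector `params` as its only argument; the data arrays and `equation` appear to come from the enclosing `evaluate`.

* It predicts the scalar coefficients `G = [G1, G2, G3]` for all samples by calling `equation(I1, I2, params)`, which returns `G_pred` with shape `(N, 3)`.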

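* It reconstructs the predicted anisotropy tensor `b_hat` as the sum of `T1`, `T2`, `T3`, each weighted by the corresponding column of `G_pred`. Indexing with `None` adds axes to each coefficient column (for example, from `(N,)` to `(N, 1, 1)` when the tensors have shape `(N, 3, 3)`) so that it broadcasts against the per-sample tensors in the element-wise multiplication.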

* It computes the MSE between the predicted tensor `b_hat` and the target tensor `b_true` using `np.mean((b_hat - b_true) ** 2)`.
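The reconstruction step can be checked in isolation. The sketch below uses random stand-ins for `G_pred` and the tensors (their shapes are assumptions) and writes the weighted sum with `[:, k, None, None]` indexing, which is one common way to use `None` for broadcasting; the exact indexing in the image may differ. It cross-checks the result against `np.einsum` and computes the MSE the same way as the snippet.

```python
import numpy as np

rng = np.random.default_rng(1)
N = 4
G_pred = rng.normal(size=(N, 3))            # stand-in for equation(I1, I2, params)
T1, T2, T3 = rng.normal(size=(3, N, 3, 3))  # assumed: one 3x3 basis tensor per sample
b_true = rng.normal(size=(N, 3, 3))

# [:, k, None, None] turns column k, shape (N,), into shape (N, 1, 1),
# so each sample's coefficient scales that sample's 3x3 tensor.
b_hat = (G_pred[:, 0, None, None] * T1
         + G_pred[:, 1, None, None] * T2
         + G_pred[:, 2, None, None] * T3)

# The same contraction written with einsum, as a cross-check.
b_einsum = np.einsum("nk,knij->nij", G_pred, np.stack([T1, T2, T3]))

# np.mean averages over all N * 3 * 3 squared errors.
mse = np.mean((b_hat - b_true) ** 2)
print(b_hat.shape, np.allclose(b_hat, b_einsum))  # (4, 3, 3) True
```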

**Initialization and optimization:**

* `initial_params = np.ones(30)`: Creates an array of 30 ones, which serves as the initial guess for the parameters to be optimized.

* `result = minimize(loss, initial_params, method='BFGS')`: Uses `minimize` (likely from `scipy.optimize`) to find the parameters that minimize `loss` with the BFGS (Broyden–Fletcher–Goldfarb–Shanno) quasi-Newton algorithm. Because no gradient function is passed, SciPy approximates the gradient by finite differences.

* `return result.fun`: Returns the lowest loss value found; the fitted parameters themselves (`result.x`) are discarded.
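The difference between `result.fun` and `result.x` is easiest to see on a toy problem. The sketch below uses a hypothetical linear least-squares loss (`A`, `y`, and `w_true` are made up for illustration) with the same all-ones starting point and `method='BFGS'` call as the snippet; only `scipy.optimize.minimize` and the `fun` and `x` fields of its result are real API.

```python
import numpy as np
from scipy.optimize import minimize

# Hypothetical toy loss: fit w so that A @ w matches y in the MSE sense.
rng = np.random.default_rng(2)
A = rng.normal(size=(50, 4))
w_true = np.array([0.5, -1.0, 2.0, 0.0])
y = A @ w_true

def loss(params):
    return np.mean((A @ params - y) ** 2)

initial_params = np.ones(4)                             # all-ones start, as in the snippet
result = minimize(loss, initial_params, method="BFGS")  # no jac: finite-difference gradient

print(result.fun)  # minimized loss value: what the snippet returns
print(result.x)    # fitted parameters: what the snippet discards
```

If a caller needs the fitted coefficients as well as the score, returning `result.x` alongside `result.fun` would be the natural extension.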

### Key Observations

* The code defines a loss function that calculates the MSE between a predicted anisotropy tensor and a target tensor.

* The `evaluate` function extracts the data, fits the parameters of `equation` by minimizing the loss, and returns the resulting minimum loss.

* The code initializes parameters and uses an optimization algorithm (BFGS) to minimize the loss function.

* The use of `None` in the tensor reconstruction suggests broadcasting operations for element-wise multiplication.
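Written as equations, the reconstruction and loss in these observations take roughly the following form. The notation is introduced here rather than taken from the image: $\theta$ stands for the 30-element `params`, $n$ indexes the $N$ samples, and each tensor is assumed to be $3 \times 3$, so `np.mean` averages over $9N$ entries. `evaluate` returns $\mathcal{L}(\theta^\star)$ as found by BFGS.

$$
\hat{b}^{(n)} = \sum_{k=1}^{3} G_k^{(n)}(\theta)\, T_k^{(n)}, \qquad G^{(n)}(\theta) = \operatorname{equation}\big(I_1^{(n)}, I_2^{(n)}, \theta\big)
$$

$$
\mathcal{L}(\theta) = \frac{1}{9N} \sum_{n=1}^{N} \sum_{i=1}^{3} \sum_{j=1}^{3} \Big(\hat{b}^{(n)}_{ij} - b^{(n)}_{ij}\Big)^2, \qquad \theta^\star = \arg\min_{\theta} \mathcal{L}(\theta)
$$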

### Interpretation

The code fits a parameterized `equation` that maps the inputs `I1`, `I2` to three scalar coefficients, combines those coefficients with the tensors `T1`, `T2`, `T3` to predict an anisotropy tensor, and tunes the 30 parameters with BFGS to minimize the MSE against `b_true`. Because `evaluate` returns only the minimized loss rather than the fitted parameters, it appears intended to score how well a given `equation` can fit the data, for example to compare several candidate equations. Minimizing a loss over model parameters in this way is a standard pattern in machine learning and numerical optimization. The specific application is not stated in the snippet; the combination of scalar inputs and basis tensors suggests a physical modeling context, possibly materials science or another field that models anisotropic tensors.
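Reading `evaluate` as a scoring function, the pieces described above can be reassembled into one runnable sketch that ranks two candidate equations. This is a reconstruction under stated assumptions, not the code in the image: the array layout of `data`, the `None`-indexing form of the reconstruction, the synthetic data, and the candidates `cubic_equation` and `constant_equation` (with the helper `_features`) are all hypothetical.

```python
import numpy as np
from scipy.optimize import minimize


def evaluate(data, equation):
    """Fit the 30 parameters of `equation` to `data` and return the lowest MSE found."""
    # Assumed layout (hypothetical): inputs (2, N), tensors (3, N, 3, 3), outputs (N, 3, 3).
    I1, I2 = data["inputs"]
    T1, T2, T3 = data["tensors"]
    b_true = data["outputs"]

    def loss(params):
        # Predict scalar coefficients G = [G1, G2, G3]; equation returns shape (N, 3).
        G_pred = equation(I1, I2, params)
        # Reconstruct the predicted anisotropy tensor b_hat, shape (N, 3, 3).
        b_hat = (G_pred[:, 0, None, None] * T1
                 + G_pred[:, 1, None, None] * T2
                 + G_pred[:, 2, None, None] * T3)
        # Mean squared error against the target tensor.
        return np.mean((b_hat - b_true) ** 2)

    initial_params = np.ones(30)
    result = minimize(loss, initial_params, method="BFGS")
    return result.fun


def _features(I1, I2):
    # Hypothetical helper: all monomials of I1, I2 up to degree 3 (10 columns).
    return np.stack([np.ones_like(I1), I1, I2, I1**2, I1 * I2, I2**2,
                     I1**3, I1**2 * I2, I1 * I2**2, I2**3], axis=1)


def cubic_equation(I1, I2, params):
    # Hypothetical candidate: each G_k is a cubic polynomial in I1, I2 (3 x 10 = 30 params).
    return _features(I1, I2) @ params.reshape(3, 10).T


def constant_equation(I1, I2, params):
    # Hypothetical candidate: G_k ignores I1, I2 and uses only params[:3].
    return np.tile(params[:3], (I1.shape[0], 1))


# Synthetic data generated from cubic_equation with random "true" parameters.
rng = np.random.default_rng(0)
N = 200
I1, I2 = rng.uniform(-1.0, 1.0, size=(2, N))
tensors = rng.normal(size=(3, N, 3, 3))
G_true = cubic_equation(I1, I2, rng.normal(size=30))
data = {
    "inputs": np.stack([I1, I2]),
    "tensors": tensors,
    "outputs": np.einsum("nk,knij->nij", G_true, tensors),
}

score_cubic = evaluate(data, cubic_equation)        # near zero: this form can represent the data
score_constant = evaluate(data, constant_equation)  # larger: coefficients cannot vary with I1, I2
print(score_cubic, score_constant)
```

A lower score means the candidate's functional form fits the data better; because each call refits the parameters from the same all-ones start, the comparison is between forms of `equation`, not between particular parameter values.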