
## Diagram: Neural Network Architecture

### Overview

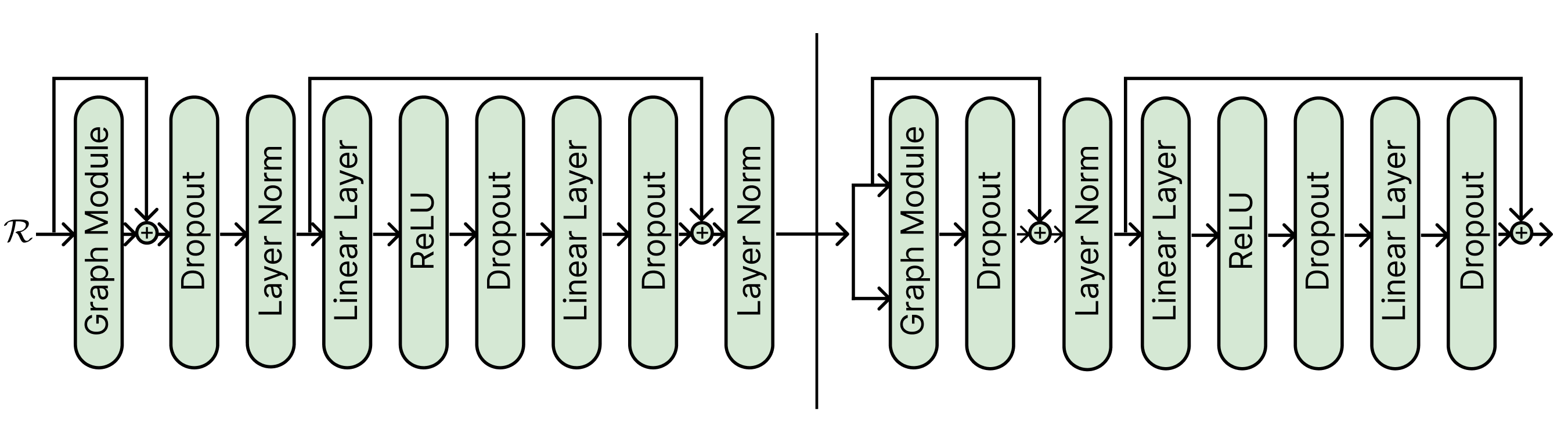

The diagram shows a neural network architecture made of two identical blocks of layers connected in sequence. Arrows trace the flow of data through each block, from the input on the left to the output on the right.

### Components

The diagram consists of the following components, arranged horizontally:

* **Input:** Labeled as "x" with an arrow indicating input from the left side.

* **Graph Module:** Appears twice, once at the beginning of each block.

* **Dropout:** Appears twice in each block.

* **Layer Norm:** Appears twice in each block.

* **Linear Layer:** Appears twice in each block.

* **ReLU:** Appears once in each block, after the first Linear Layer.

* **Output:** Indicated by arrows pointing to the right side of the second block.

The layers are connected by arrows indicating the direction of data flow. Each layer is represented as a rounded rectangle.

### Detailed Analysis

The diagram shows two identical blocks of layers. Each block consists of the following sequence:

1. **Graph Module:** The first layer of the block. In the first block it receives the input "x"; in the second block it receives the output of the first block.

2. **Dropout:** Applies dropout regularization.

3. **Layer Norm:** Applies layer normalization.

4. **Linear Layer:** A fully connected linear transformation.

5. **ReLU:** Applies the Rectified Linear Unit activation function.

6. **Dropout:** Applies dropout regularization.

7. **Linear Layer:** A fully connected linear transformation.

8. **Layer Norm:** Applies layer normalization.

The output of the first block is then fed as input to the second block, which has the same layer sequence. The output of the second block is the final output of the network.
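The ordering above can be written down as data and replayed. The following is a minimal sketch in plain Python, not the network's actual implementation: the names `BLOCK`, `NETWORK`, `forward`, and `ops` are hypothetical, and every layer except ReLU is replaced by an identity stand-in because the diagram gives no layer parameters.

```python
from collections import Counter

# Layer order within one block, following the eight-step list above.
BLOCK = [
    "Graph Module", "Dropout", "Layer Norm", "Linear Layer",
    "ReLU", "Dropout", "Linear Layer", "Layer Norm",
]

# The network is two such blocks applied one after the other.
NETWORK = BLOCK * 2


def forward(x, ops, layers=NETWORK):
    """Apply each named layer in order; `ops` maps a layer name to a callable."""
    for name in layers:
        x = ops[name](x)
    return x


def identity(v):
    """Stand-in for layers whose parameters the diagram does not specify."""
    return v


# Assumption for illustration: only ReLU does real work here.
ops = {name: identity for name in BLOCK}
ops["ReLU"] = lambda v: [max(0.0, e) for e in v]

print(Counter(NETWORK))           # how often each layer type occurs overall
print(forward([-1.0, 2.0], ops))  # [0.0, 2.0]
```

Keeping the order in a list makes the "two identical blocks" property explicit: the second block is the same list repeated, so any change to `BLOCK` applies to both.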

### Key Observations

* The architecture is repetitive rather than branching: the same block appears twice in sequence.

* Dropout and Layer Normalization are both used within each block, most likely for regularization (Dropout) and training stability (Layer Normalization).

* In the sequence listed above, ReLU follows the first Linear Layer of each block; the second Linear Layer feeds directly into Layer Norm.

* The diagram does not provide any specific numerical values or parameters for the layers.
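For readers unfamiliar with the individual operations named in the diagram, here is a minimal sketch of each in plain Python. It assumes the textbook definitions (inverted dropout, layer normalization without learnable gain and bias, a dense linear map); the diagram does not say which variants are used, and the weights, bias, and dropout rate below are made-up illustration values.

```python
import math
import random


def dropout(x, p=0.1, training=True, rng=random):
    """Inverted dropout: zero each element with probability p, rescale the rest."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(x)  # dropout is a no-op at inference time
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def layer_norm(x, eps=1e-5):
    """Normalize one feature vector to zero mean and near-unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def linear(x, weight, bias):
    """Fully connected layer: y[i] = sum_j weight[i][j] * x[j] + bias[i]."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def relu(x):
    """Rectified Linear Unit: negative entries become zero."""
    return [max(0.0, v) for v in x]


# Made-up input and parameters, for illustration only.
x = [1.0, 2.0, 3.0, 4.0]
W = [[1.0, 0.0, 0.0, -1.0], [0.0, 1.0, 1.0, 0.0]]
b = [0.0, 0.5]

h = layer_norm(dropout(x, training=False))  # Dropout -> Layer Norm
y = relu(linear(h, W, b))                   # Linear Layer -> ReLU
print(y)
```

Dropout is run with `training=False` in the example so the result is deterministic; during training it would randomly zero entries and scale the survivors by `1 / (1 - p)`.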

### Interpretation

This diagram follows a common pattern in neural network design: stacking several identical blocks of layers so that each block can further refine the representation produced by the previous one. The use of dropout and layer normalization suggests that the designers were concerned with overfitting and training stability. The name "Graph Module" suggests that the network processes graph-structured data, or at least that the module is meant to capture relationships between data points, but the diagram does not show what happens inside it. The diagram is a high-level architectural overview: it gives no layer sizes, dropout rates, or training details, so the specific purpose or application of the network cannot be determined from it alone.
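To make the graph-structured reading concrete, the sketch below shows one thing a "Graph Module" could do: average each node's features with those of its neighbors. This is purely an assumption for illustration. The diagram does not reveal the module's internals, and `mean_aggregate`, `features`, and `neighbors` are hypothetical names.

```python
def mean_aggregate(features, neighbors):
    """Average each node's feature vector with its neighbors' vectors.

    features:  dict mapping node id -> list of floats (same length for all nodes)
    neighbors: dict mapping node id -> list of adjacent node ids
    """
    out = {}
    for node, feat in features.items():
        # Include the node itself so isolated nodes keep their own features.
        group = [feat] + [features[n] for n in neighbors.get(node, [])]
        out[node] = [sum(col) / len(group) for col in zip(*group)]
    return out


# Made-up three-node graph: "a" and "b" are connected, "c" is isolated.
features = {"a": [1.0, 0.0], "b": [3.0, 2.0], "c": [5.0, 5.0]}
neighbors = {"a": ["b"], "b": ["a"]}

print(mean_aggregate(features, neighbors))
# {'a': [2.0, 1.0], 'b': [2.0, 1.0], 'c': [5.0, 5.0]}
```

Under this assumption, the layers that follow the module in each block (Dropout, Layer Norm, Linear Layer, and so on) would operate on each node's aggregated feature vector independently.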