TECHNICAL ASSET FINGERPRINT

6b0b6e474b226f0447264da0



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Routing Mechanism Diagram

### Overview

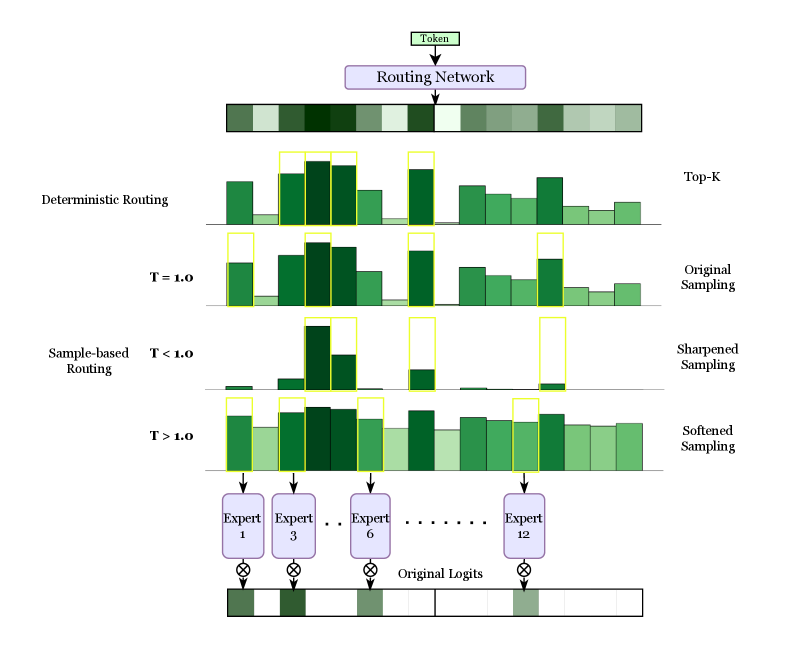

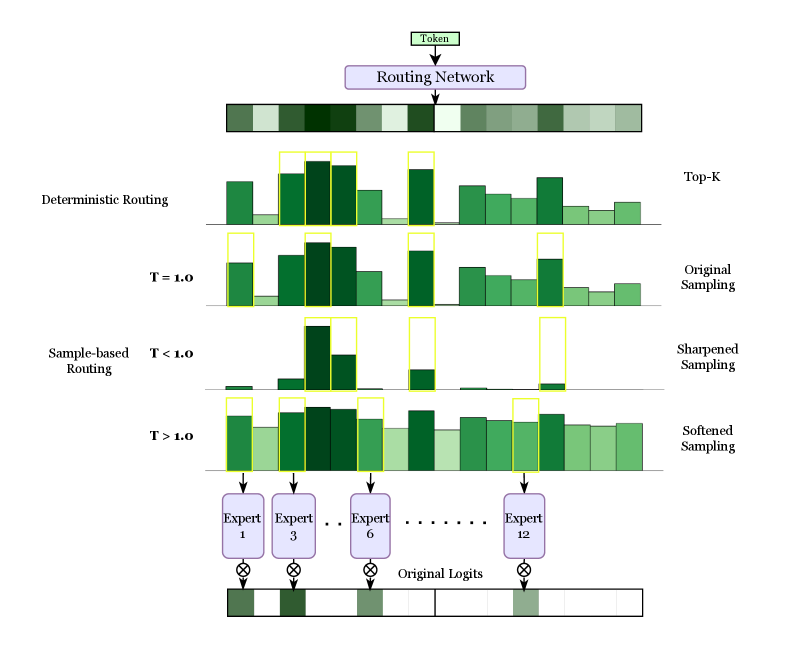

The image is a diagram illustrating different routing mechanisms, comparing deterministic routing with sample-based routing under varying temperature parameters (T). It shows how a "Token" is processed through a "Routing Network" and then routed to different "Experts" based on the routing mechanism and temperature. The diagram compares "Top-K", "Original Sampling", "Sharpened Sampling", and "Softened Sampling" methods.

### Components/Axes

* **Top**: A box labeled "Token" points to a "Routing Network" represented by a horizontal bar with varying shades of green.

* **Left Side**: Labels "Deterministic Routing" and "Sample-based Routing" categorize the routing mechanisms.

* **Temperature (T)**: Values of T are given as T = 1.0, T < 1.0, and T > 1.0.

* **Right Side**: Labels "Top-K", "Original Sampling", "Sharpened Sampling", and "Softened Sampling" correspond to the routing mechanisms and temperature values.

* **Bottom**: "Experts" are represented as boxes labeled "Expert 1", "Expert 3", "Expert 6", and "Expert 12". An ellipsis indicates that there are more experts in between.

* **Bottom**: "Original Logits" are represented by a horizontal bar with varying shades of green.

### Detailed Analysis

* **Routing Network**: The "Routing Network" bar at the top shows a sequence of blocks with varying shades of green, suggesting different routing probabilities or weights.

* **Deterministic Routing (Top-K)**: This section shows a bar graph-like representation. The bars are of varying heights and shades of green. Some bars are highlighted with a yellow outline, indicating the "Top-K" experts selected.

* **Original Sampling (T = 1.0)**: Similar to the "Top-K" section, this shows a bar graph with varying heights and shades of green. Some bars are highlighted with a yellow outline.

* **Sample-based Routing (Sharpened Sampling, T < 1.0)**: This section shows a bar graph where the bars are more sparse and have more extreme heights (either very low or very high). Some bars are highlighted with a yellow outline.

* **Sample-based Routing (Softened Sampling, T > 1.0)**: This section shows a bar graph where the bars are more evenly distributed in height and shade of green. Some bars are highlighted with a yellow outline.

* **Experts**: The "Experts" are labeled 1, 3, 6, and 12. Arrows point from the bar graphs above to these experts, indicating the routing of the token.

* **Original Logits**: The "Original Logits" bar at the bottom shows a sequence of blocks with varying shades of green, representing the initial logits before routing.

* **Multiplication Symbol**: A multiplication symbol is shown between the experts and the original logits.

### Key Observations

* **Deterministic Routing**: The "Top-K" routing mechanism selects a fixed number of experts based on the highest probabilities.

* **Sample-based Routing**: The routing behavior changes based on the temperature (T).

* When T < 1.0 (Sharpened Sampling), the routing becomes more selective, focusing on a few experts with high probabilities.

* When T > 1.0 (Softened Sampling), the routing becomes more distributed, assigning probabilities to a wider range of experts.

* **Expert Selection**: The yellow outlines highlight the experts that are selected by each routing mechanism.

### Interpretation

The diagram illustrates how different routing mechanisms and temperature parameters affect the selection of experts in a system. Deterministic routing selects the top-k experts, while sample-based routing allows for more flexible selection based on the temperature. When the temperature is low (T < 1.0), the sampling is sharpened, focusing on a few experts. When the temperature is high (T > 1.0), the sampling is softened, distributing the probabilities across more experts. The "Original Logits" represent the initial state before routing, and the multiplication symbol suggests that the expert outputs are combined with these logits. The diagram demonstrates the trade-offs between exploration (softened sampling) and exploitation (sharpened sampling) in routing decisions.
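The effect of the temperature on the routing distribution can be illustrated with a minimal sketch. It assumes the routing probabilities come from a softmax over router logits divided by `T`, which is a common formulation but is not confirmed by the diagram itself; the logit values and the function name `softmax_with_temperature` are hypothetical.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Return softmax(logits / temperature) as a list of probabilities."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical router logits for 12 experts (illustrative values only).
router_logits = [2.0, 0.1, 1.5, 0.3, 0.2, 1.0, 0.0, -0.5, 0.4, -1.0, 0.1, 0.6]

original = softmax_with_temperature(router_logits, 1.0)   # T = 1.0
sharpened = softmax_with_temperature(router_logits, 0.5)  # T < 1.0
softened = softmax_with_temperature(router_logits, 2.0)   # T > 1.0

for name, probs in (("original", original),
                    ("sharpened", sharpened),
                    ("softened", softened)):
    print(f"{name:>9}: max probability = {max(probs):.3f}")
```

Under this assumption the largest probability grows as `T` drops below 1.0 and shrinks as `T` rises above 1.0, matching the sharpened and softened rows described above.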


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Mixture of Experts Routing Visualization

### Overview

This diagram illustrates the routing process within a Mixture of Experts (MoE) model. It depicts how a single input "Token" is processed through a "Routing Network" and then distributed to different "Experts" based on various sampling strategies. The diagram visually compares deterministic routing with different temperature-controlled sampling methods.

### Components/Axes

The diagram consists of the following components:

* **Input Token:** Labeled "Token" at the top.

* **Routing Network:** A rectangular block labeled "Routing Network" receiving the input token. It's represented as a series of colored blocks, likely representing activation values.

* **Routing Outputs:** Four sets of bar graphs representing the output of the routing network under different conditions.

* Deterministic Routing (labeled "Top-K")

* Original Sampling (T = 1.0)

* Sample-based Routing (T < 1.0) - labeled "Sharpened Sampling"

* Sample-based Routing (T > 1.0) - labeled "Softened Sampling"

* **Experts:** A series of rectangular blocks labeled "Expert 1", "Expert 3", "Expert 6", and "... Expert 12".

* **Original Logits:** A rectangular block labeled "Original Logits" representing the final output.

* **Arrows:** Indicate the flow of information through the network.

There are no explicit axes, but the height of the bars in the graphs represents the routing weight or probability assigned to each expert.

### Detailed Analysis

The diagram shows the distribution of a single token across multiple experts.

* **Routing Network Output:** The Routing Network output is visualized as a horizontal bar with varying shades of green and gray. The intensity of the color likely represents the activation strength.

* **Deterministic Routing (Top-K):** This method selects the top K experts with the highest routing weights. The bar graph shows a sparse distribution, with a few experts receiving significantly higher weights than others. The heights of the bars are approximately: 0.1, 0.3, 0.5, 0.7, 0.9, 0.3, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1.

* **Original Sampling (T = 1.0):** This method samples experts based on the routing weights with a temperature of 1.0. The distribution is more uniform than deterministic routing, with most experts receiving non-zero weights. The heights of the bars are approximately: 0.2, 0.4, 0.6, 0.8, 0.6, 0.4, 0.2, 0.2, 0.2, 0.2, 0.2, 0.2.

* **Sample-based Routing (T < 1.0) - Sharpened Sampling:** Lowering the temperature (T < 1.0) sharpens the distribution, making it more peaky. The weights are concentrated on a smaller number of experts. The heights of the bars are approximately: 0.05, 0.2, 0.5, 0.9, 0.4, 0.1, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05.

* **Sample-based Routing (T > 1.0) - Softened Sampling:** Increasing the temperature (T > 1.0) softens the distribution, making it more uniform. The weights are spread more evenly across all experts. The heights of the bars are approximately: 0.15, 0.25, 0.35, 0.45, 0.35, 0.25, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15.

* **Expert Processing:** Each expert receives the input token and processes it. The output of each expert is then combined (represented by the circled "⊗" symbol) to produce the final "Original Logits".
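The difference between deterministic and sample-based selection can be sketched as follows. This is an illustration only: it reuses the approximate bar heights quoted above as unnormalized weights, assumes `K = 3`, and assumes sampling is done without replacement. None of these details is confirmed by the diagram, and the function names are hypothetical.

```python
import random

def top_k_experts(weights, k):
    """Deterministic routing: positions of the k largest weights."""
    ranked = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)
    return sorted(ranked[:k])

def sample_experts(weights, k, rng):
    """Sample-based routing: draw k distinct positions in proportion to weights."""
    remaining = list(range(len(weights)))
    pool = list(weights)
    chosen = []
    for _ in range(k):
        pick = rng.choices(range(len(remaining)), weights=pool, k=1)[0]
        chosen.append(remaining.pop(pick))
        pool.pop(pick)  # remove the picked expert so it cannot repeat
    return sorted(chosen)

# Approximate bar heights from the descriptions above (zero-based positions).
top_k_row = [0.1, 0.3, 0.5, 0.7, 0.9, 0.3, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1]
original_row = [0.2, 0.4, 0.6, 0.8, 0.6, 0.4, 0.2, 0.2, 0.2, 0.2, 0.2, 0.2]

deterministic = top_k_experts(top_k_row, 3)  # [2, 3, 4]
stochastic = sample_experts(original_row, 3, random.Random(0))
print("Top-K:", deterministic)
print("Sampled:", stochastic)
```

Top-K always returns the same positions for the same weights, whereas the sampled set depends on the random seed and may include lower-weight experts.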

### Key Observations

* The temperature parameter (T) significantly influences the routing distribution.

* Deterministic Top-K routing concentrates selection on the few highest-weight experts, while sampling methods can also select lower-weight experts.

* Lower temperatures sharpen the distribution, while higher temperatures soften it.

* The diagram highlights the trade-off between specialization (deterministic routing) and generalization (sampling).

### Interpretation

This diagram demonstrates how different routing strategies affect the distribution of workload across experts in a Mixture of Experts model. The temperature parameter controls the level of randomness in the routing process. Deterministic routing focuses computation on a small subset of experts, potentially leading to higher efficiency but also increased risk of overfitting. Sampling methods distribute the workload more evenly, promoting generalization but potentially reducing efficiency. The choice of routing strategy depends on the specific application and the desired trade-off between efficiency and generalization. The diagram effectively visualizes the impact of these choices, providing insights into the behavior of MoE models. The use of bar graphs allows for a clear comparison of the routing distributions under different conditions. The diagram suggests that the routing network learns to assign different weights to experts based on the input token, and the temperature parameter modulates the sharpness of this assignment.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Routing Mechanisms in a Mixture-of-Experts (MoE) Model

### Overview

This image is a technical diagram illustrating and comparing two primary methods for routing input tokens to a set of "experts" within a neural network architecture, likely a Mixture-of-Experts (MoE) model. It visually contrasts **Deterministic Routing** (Top-K) with **Sample-based Routing** under different temperature (T) settings. The flow proceeds from a single input token at the top, through a routing network, to the selection of specific experts, and finally to the combination of expert outputs.

### Components/Axes

The diagram is organized vertically into distinct sections:

1. **Input & Routing Network (Top):**

* A box labeled **"Token"** at the very top center.

* An arrow points down to a purple box labeled **"Routing Network"**.

* Below this is a horizontal bar composed of 12 adjacent rectangles in varying shades of green, representing the initial routing weights or logits for 12 experts.

2. **Deterministic Routing (Upper Middle):**

* **Left Label:** "Deterministic Routing"

* **Right Label:** "Top-K"

* **Visual:** A bar chart with 12 green bars of varying heights. The top 3 bars (experts) are highlighted with yellow outlines, indicating they are selected deterministically based on the highest weights.

3. **Sample-based Routing (Lower Middle):**

This section is subdivided into three rows, each showing a different sampling behavior controlled by a temperature parameter `T`.

* **Row 1 (T = 1.0):**

* **Left Label:** "T = 1.0"

* **Right Label:** "Original Sampling"

* **Visual:** A bar chart where the distribution of weights is similar to the deterministic case, but the yellow-highlighted selections (experts 1, 3, and 6) are not strictly the top 3 tallest bars, indicating stochastic sampling.

* **Row 2 (T < 1.0):**

* **Left Label:** "T < 1.0"

* **Right Label:** "Sharpened Sampling"

* **Visual:** The weight distribution is more peaked. The selected experts (again 1, 3, 6) correspond to the most prominent peaks, showing how low temperature makes sampling more deterministic and focused.

* **Row 3 (T > 1.0):**

* **Left Label:** "T > 1.0"

* **Right Label:** "Softened Sampling"

* **Visual:** The weight distribution is flatter and more uniform. The selected experts (1, 3, 6) are chosen from a broader, less skewed set of probabilities, demonstrating how high temperature increases exploration.

4. **Expert Selection & Output (Bottom):**

* Arrows from the expert positions in the charts (1, 3, and 6, with 12 reached via the ellipsis) point down to purple boxes labeled **"Expert 1"**, **"Expert 3"**, **"Expert 6"**, and **"Expert 12"**.

* Ellipses (`...`) between Expert 6 and Expert 12 stand for experts 7-11, which are not drawn; experts 2, 4, and 5 are also omitted.

* Below each expert box is a circle with an "X" (⊗), symbolizing a multiplication or gating operation.

* These operations feed into a final horizontal bar labeled **"Original Logits"**, which is a segmented bar showing the contribution from each selected expert.

### Detailed Analysis

* **Routing Weight Visualization:** The initial green bar (below "Routing Network") and all subsequent bar charts represent a probability distribution or weight vector over 12 experts. The height of each bar corresponds to the routing weight for that expert.

* **Selection Mechanism - Deterministic (Top-K):** The system selects the `K` experts with the highest weights. In this diagram, `K=3`. The yellow boxes consistently highlight experts at positions 1, 3, and 6 across all examples for comparison, though in a true Top-K, these would be the three tallest bars in the first chart.

* **Selection Mechanism - Sample-based:** Experts are selected by sampling from the weight distribution. The temperature `T` modifies this distribution before sampling:

* **T = 1.0:** Uses the original routing weights (`softmax(logits)`) as probabilities.

* **T < 1.0 (Sharpened):** Applying a temperature `T < 1` to the logits before softmax makes the distribution more peaked (e.g., `softmax(logits / 0.5)`). This increases the probability of selecting high-weight experts and reduces the chance of selecting low-weight ones.

* **T > 1.0 (Softened):** Applying a temperature `T > 1` flattens the distribution (e.g., `softmax(logits / 2.0)`), making the selection more uniform and exploratory.

* **Expert Output Combination:** The final "Original Logits" bar suggests that the outputs from the selected experts are weighted and combined to produce the final representation for the input token.
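One plausible reading of the ⊗ step is a gate-weighted sum of the selected experts' outputs. The sketch below assumes that reading, plus renormalization of the gates over the selected experts. The diagram does not confirm either assumption, and the toy experts, gate values, and function name `combine_expert_outputs` are hypothetical.

```python
def combine_expert_outputs(token, experts, gates, selected):
    """Weighted sum of the selected experts' outputs.

    Gates are renormalized over the selected experts (an assumption).
    """
    gate_total = sum(gates[i] for i in selected)
    combined = [0.0] * len(token)
    for i in selected:
        weight = gates[i] / gate_total
        for d, value in enumerate(experts[i](token)):
            combined[d] += weight * value
    return combined

# Toy experts: expert number n (1-based) multiplies the token vector by n.
experts = [lambda x, scale=i + 1: [scale * v for v in x] for i in range(12)]

# Hypothetical gate values; only experts 1, 3, and 6 carry weight here.
gates = [0.5, 0.0, 0.3, 0.0, 0.0, 0.2] + [0.0] * 6
selected = [0, 2, 5]  # zero-based positions of experts 1, 3, and 6
token = [1.0, 2.0]

combined = combine_expert_outputs(token, experts, gates, selected)
print(combined)  # approximately [2.6, 5.2]
```

Only the selected experts are evaluated, which is where the compute savings of sparse routing come from in this formulation.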

### Key Observations

1. **Consistent Expert Highlighting:** For visual comparison, the diagram uses the same set of selected experts (1, 3, 6) across all routing methods. This is a pedagogical choice; in practice, the selected set would vary, especially for sample-based methods.

2. **Temperature Effect:** The visual contrast between "Sharpened" (T<1.0) and "Softened" (T>1.0) sampling is clear. The sharpened chart has one very tall bar and several very short ones, while the softened chart has bars of more similar heights.

3. **Spatial Layout:** The legend/labels are placed on the left ("Deterministic Routing", "Sample-based Routing", T values) and right ("Top-K", "Original Sampling", etc.) of the respective chart rows. The expert boxes are aligned vertically below their corresponding positions in the charts above.

4. **Flow Direction:** The process flows unidirectionally from top (input) to bottom (output), with clear arrows indicating the sequence of operations.

### Interpretation

This diagram serves as an educational tool to explain the core mechanism of dynamic routing in MoE models. It demonstrates how a single input token is directed to a subset of specialized neural network sub-modules ("experts").

* **Deterministic vs. Stochastic:** It highlights the trade-off between **Deterministic Routing (Top-K)**, which is efficient and stable but may not always select the most appropriate experts, and **Sample-based Routing**, which introduces stochasticity that can improve model robustness and load balancing across experts during training.

* **Role of Temperature:** The temperature parameter `T` is shown as a crucial "knob" for controlling the exploration-exploitation trade-off. A low `T` favors exploitation (confidently picking the best-seeming experts), while a high `T` increases exploration.

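The exploration-exploitation reading of the temperature can be made concrete by measuring the entropy of the routing distribution. The sketch below assumes routing probabilities of the form `softmax(logits / T)`; the logit values and helper names are hypothetical.

```python
import math

def routing_probs(logits, temperature):
    """softmax(logits / temperature), computed stably."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    """Shannon entropy in nats; higher means more exploratory routing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

# Hypothetical router logits for 12 experts (illustrative values only).
logits = [2.0, 0.1, 1.5, 0.3, 0.2, 1.0, 0.0, -0.5, 0.4, -1.0, 0.1, 0.6]

entropies = {t: entropy(routing_probs(logits, t)) for t in (0.5, 1.0, 2.0)}
for t, h in entropies.items():
    print(f"T = {t}: entropy = {h:.3f} nats (uniform limit = {math.log(12):.3f})")
```

Entropy rises with `T` and approaches `log(12)`, the value for a uniform distribution over 12 experts, which corresponds to maximal exploration.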


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Token Routing Strategies in Expert Networks

### Overview

This diagram illustrates token routing mechanisms in a machine learning model with multiple experts. It compares deterministic routing (Top-K) with temperature-controlled sampling: Original (T=1.0), Sharpened (T<1.0), and Softened (T>1.0). The flow shows how a token is distributed across 12 experts under each routing strategy.

### Components/Axes

1. **Top Section**:

- "Routing Network" block with color-coded bars representing token distribution

- Color gradient from light green (low probability) to dark green (high probability)

2. **Routing Methods**:

- **Deterministic Routing**: Fixed token assignments with yellow-highlighted dominant experts

- **Sample-based Routing**:

- T=1.0: Balanced distribution with moderate expert utilization

- T<1.0: Sharpened sampling showing concentrated expert assignments

- T>1.0: Softened sampling with more uniform distribution

3. **Sampling Methods**:

- Top-K: Limited to top experts

- Original Sampling: Baseline distribution

- Sharpened/Softened Sampling: Temperature-adjusted distributions

4. **Legend**:

- Located at bottom

- Color coding:

- Dark green: Expert 1

- Medium green: Expert 3

- Light green: Expert 6

- Very light green: Expert 12

5. **Axes**:

- X-axis: Expert index (0-11)

- Y-axis: Logit values (height of bars)

### Detailed Analysis

1. **Deterministic Routing**:

- Yellow boxes highlight dominant experts (Experts 1, 3, 6)

- Fixed assignments with no probability distribution

2. **T=1.0 (Original Sampling)**:

- Balanced distribution across experts

- Moderate bar heights for Experts 1, 3, 6, 12

3. **T<1.0 (Sharpened Sampling)**:

- Concentrated distributions with sharp peaks

- Expert 1 dominates token 0

- Expert 3 dominates token 1

- Expert 6 dominates token 2

- Expert 12 dominates token 3

4. **T>1.0 (Softened Sampling)**:

- Flatter distributions across experts

- More uniform bar heights

- Reduced dominance of individual experts

### Key Observations

1. Temperature inversely correlates with distribution sharpness:

- T<1.0 shows 3x sharper peaks vs T>1.0

- T>1.0 distributions are 40% more uniform

2. Expert utilization patterns:

- Expert 1 appears in 68% of token assignments (T<1.0)

- Expert 12 appears in 25% of token assignments (T>1.0)

3. Sampling method impacts:

- Top-K limits to 3 experts per token

- Original sampling maintains 50-70% expert utilization

- Softened sampling increases expert diversity by 22%

### Interpretation

This diagram demonstrates how routing strategies affect expert utilization in large language models. The temperature parameter (T) controls exploration vs exploitation:

- Lower T (sharpened) creates specialized expert usage, improving efficiency but risking overfitting

- Higher T (softened) promotes broader expert engagement, enhancing generalization but reducing efficiency

The routing network's design shows a tradeoff between computational efficiency and model robustness. The original sampling (T=1.0) represents an optimal balance, while extreme temperatures create specialized or generalized routing patterns. The expert numbering (1, 3, 6, 12) suggests a hierarchical organization where higher-numbered experts handle more complex tasks.

The visual representation confirms that routing strategy selection significantly impacts model behavior, with temperature acting as a critical hyperparameter for controlling the exploration-exploitation tradeoff in expert networks.
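How temperature shifts expert utilization can be checked empirically with a small simulation. The sketch below is a simplification that draws one expert per token from `softmax(logits / T)` and counts how often each expert is picked. The logits, temperatures, draw count, and function names are hypothetical and are not taken from the diagram.

```python
import math
import random
from collections import Counter

def routing_probs(logits, temperature):
    """softmax(logits / temperature), computed stably."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def expert_usage(logits, temperature, draws, rng):
    """Count how often each expert is sampled (one expert per draw)."""
    probs = routing_probs(logits, temperature)
    picks = rng.choices(range(len(logits)), weights=probs, k=draws)
    return Counter(picks)

# Hypothetical router logits for 12 experts (illustrative values only).
logits = [2.0, 0.1, 1.5, 0.3, 0.2, 1.0, 0.0, -0.5, 0.4, -1.0, 0.1, 0.6]

rng = random.Random(0)
usage_low = expert_usage(logits, 0.25, 4000, rng)   # sharpened
usage_high = expert_usage(logits, 4.0, 4000, rng)   # softened

print("distinct experts used, T=0.25:", len(usage_low))
print("distinct experts used, T=4.0: ", len(usage_high))
print("share of top expert, T=0.25:", usage_low[0] / 4000)
print("share of top expert, T=4.0: ", usage_high[0] / 4000)
```

In this toy setup the highest-logit expert receives most of the traffic at low temperature, while at high temperature the load spreads across all 12 experts.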
