## Diagram: Iterative Mistake Correction in Chain-of-Thought Reasoning

### Overview

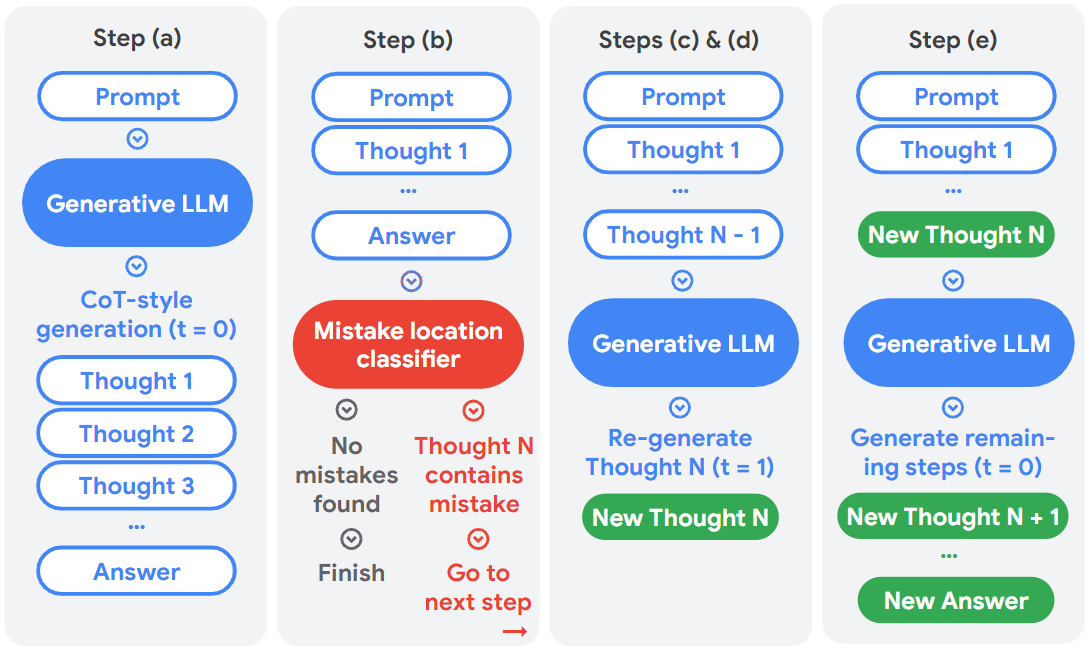

The image is a technical flowchart illustrating a five-step process (labeled a through e) for improving the output of a Large Language Model (LLM) using a mistake detection and correction loop. The process focuses on refining a "Chain-of-Thought" (CoT) reasoning sequence. The diagram is divided into four vertical panels, each representing a stage or set of stages in the workflow.

### Components/Axes

The diagram is structured as a series of connected flowcharts within four distinct vertical panels. Each panel has a header label.

**Panel Headers (Top of each panel):**

* **Panel 1:** `Step (a)`

* **Panel 2:** `Step (b)`

* **Panel 3:** `Steps (c) & (d)`

* **Panel 4:** `Step (e)`

**Key Component Types (by shape and color):**

* **Blue Rounded Rectangle:** Represents input or process steps (e.g., `Prompt`, `Thought 1`, `Answer`).

* **Blue Oval:** Represents a core processing unit (`Generative LLM`).

* **Red Oval:** Represents an error-checking unit (`Mistake location classifier`).

* **Green Rounded Rectangle:** Represents corrected or new outputs (`New Thought N`, `New Thought N + 1`, `New Answer`).

* **Blue Text:** Descriptive labels for processes (e.g., `CoT-style generation (t = 0)`).

* **Red Text:** Labels for error states and corrective actions (e.g., `Thought N contains mistake`, `Go to next step`).

* **Grey Text:** Labels for successful states (e.g., `No mistakes found`, `Finish`).

* **Arrows:** Indicate the flow of control between components. A red arrow specifically points from the `Go to next step` label to the next panel.

### Detailed Analysis

The process flows sequentially from left to right through the panels.

**Step (a): Initial Generation**

* **Flow:** `Prompt` → `Generative LLM` → `CoT-style generation (t = 0)` → A sequence of thoughts (`Thought 1`, `Thought 2`, `Thought 3`, ...) → `Answer`.

* **Description:** This step shows the standard, initial generation of a chain-of-thought reasoning sequence and a final answer from a prompt.

**Step (b): Mistake Identification**

* **Flow:** `Prompt` → `Thought 1` → ... → `Answer` → `Mistake location classifier`.

* **Branching Logic from Classifier:**

    * **Path 1 (Success):** `No mistakes found` → `Finish`. This path ends the process.

    * **Path 2 (Error):** `Thought N contains mistake` → `Go to next step`, with a red arrow pointing rightward to the next panel.
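The two-way branch in Step (b) can be sketched as a small routing function. This is a minimal illustration, not the diagram's implementation: the `Classifier` signature, the `step_b` name, and the toy classifier that flags a `??` marker are all assumptions introduced here.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical classifier interface (an assumption, not shown in the diagram):
# returns the 1-based index N of the mistaken thought, or None if none is found.
Classifier = Callable[[str, List[str], str], Optional[int]]


def step_b(prompt: str, thoughts: List[str], answer: str,
           classifier: Classifier) -> Tuple[str, Optional[int]]:
    """Route the chain to 'Finish' or to the regeneration steps."""
    n = classifier(prompt, thoughts, answer)
    if n is None:
        return ("finish", None)           # Path 1: No mistakes found
    if not 1 <= n <= len(thoughts):
        raise ValueError(f"classifier returned out-of-range index {n}")
    return ("go_to_next_step", n)         # Path 2: Thought N contains mistake


# Toy classifier for illustration only: flags the first thought containing "??".
def toy_classifier(prompt: str, thoughts: List[str], answer: str) -> Optional[int]:
    for i, thought in enumerate(thoughts, start=1):
        if "??" in thought:
            return i
    return None
```

Returning the index N alongside the branch label gives the next stage exactly what it needs to truncate the chain at `Thought N - 1`.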

**Steps (c) & (d): Regeneration of Faulty Thought**

* **Flow:** `Prompt` → `Thought 1` → ... → `Thought N - 1` → `Generative LLM` → `Re-generate Thought N (t = 1)` → `New Thought N`.

* **Description:** This stage truncates the sequence at the thought before the mistake (`Thought N - 1`) and calls the Generative LLM again, this time with `t = 1` rather than the `t = 0` of Step (a), to produce a replacement, `New Thought N`.

**Step (e): Completion with Corrected Thought**

* **Flow:** `Prompt` → `Thought 1` → ... → `New Thought N` → `Generative LLM` → `Generate remaining steps (t = 0)` → `New Thought N + 1` → ... → `New Answer`.

* **Description:** The corrected thought (`New Thought N`) replaces the faulty one, and the original thoughts after position N are discarded. The Generative LLM then generates the rest of the reasoning chain (`New Thought N + 1`, etc.) and a final `New Answer`, returning to the `t = 0` setting used in Step (a).
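Steps (a) through (e) can be sketched end to end as a single pass. This is a sketch under stated assumptions, not the diagram's actual implementation: the three callables (`complete`, `next_thought`, `locate`) and their signatures are hypothetical, and the toy functions below stand in for the Generative LLM and the Mistake location classifier using a running-sum task with one planted error.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical interfaces (assumptions for illustration):
#   complete(prompt, prefix, t)     -> (remaining thoughts, answer)
#   next_thought(prompt, prefix, t) -> one new thought that follows the prefix
#   locate(prompt, thoughts, answer)-> 1-based index of the mistake, or None
Complete = Callable[[str, List[str], float], Tuple[List[str], str]]
NextThought = Callable[[str, List[str], float], str]
Locate = Callable[[str, List[str], str], Optional[int]]


def backtrack_once(prompt: str, complete: Complete,
                   next_thought: NextThought, locate: Locate
                   ) -> Tuple[List[str], str]:
    thoughts, answer = complete(prompt, [], 0)           # Step (a), t = 0
    n = locate(prompt, thoughts, answer)                 # Step (b)
    if n is None:
        return thoughts, answer                          # No mistakes found
    prefix = thoughts[: n - 1]                           # keep Thought 1..N-1
    new_n = next_thought(prompt, prefix, 1)              # Steps (c) & (d), t = 1
    rest, new_answer = complete(prompt, prefix + [new_n], 0)  # Step (e), t = 0
    return prefix + [new_n] + rest, new_answer


# --- Toy stand-ins: each thought is the running sum of NUMS so far. ---
NUMS = [2, 3, 4]


def toy_complete(prompt: str, prefix: List[str], t: float) -> Tuple[List[str], str]:
    total = int(prefix[-1]) if prefix else 0
    thoughts = []
    for i in range(len(prefix), len(NUMS)):
        total += NUMS[i]
        if not prefix and i == 1:
            total += 1                                   # planted slip in Thought 2
        thoughts.append(str(total))
    return thoughts, str(total)


def toy_next_thought(prompt: str, prefix: List[str], t: float) -> str:
    total = int(prefix[-1]) if prefix else 0
    return str(total + NUMS[len(prefix)])


def toy_locate(prompt: str, thoughts: List[str], answer: str) -> Optional[int]:
    total = 0
    for i, (num, thought) in enumerate(zip(NUMS, thoughts), start=1):
        total += num
        if thought != str(total):
            return i
    return None


print(backtrack_once("sum 2 3 4", toy_complete, toy_next_thought, toy_locate))
# prints (['2', '5', '9'], '9')
```

In the toy run, the initial chain is `['2', '6', '10']`; the locator flags Thought 2, the prefix `['2']` is kept, and everything from Thought 2 onward is produced again.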

### Key Observations

1. **Color-Coded Workflow:** The diagram uses a consistent color scheme: blue for standard processes, red for error detection and branching, and green for corrected outputs.

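2. **`t` Notation:** The labels `(t = 0)` and `(t = 1)` mark two different generation settings, but the diagram does not define `t`. An iteration counter does not fit, because Step (e) returns to `t = 0` after the `t = 1` regeneration. A sampling temperature fits better: `t = 0` would give deterministic output for the initial chain and the remaining steps, while `t = 1` would let the regenerated `Thought N` differ from the original.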

3. **Localized Correction:** The process does not restart from the prompt. It keeps `Thought 1` through `Thought N - 1` unchanged, regenerates `Thought N`, and then generates every later step and the answer anew, so only the work from the detected mistake onward is repeated.

4. **Sequential Dependency:** Each step explicitly depends on the output of the previous one, forming a strict linear chain that is only interrupted by the mistake-correction loop.
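If `t` is read as a sampling temperature (an assumption; the diagram does not define the symbol), the difference between the `t = 0` and `t = 1` calls can be sketched as follows. The function name and the example logits are hypothetical and are not taken from the diagram.

```python
import math
import random
from typing import List


def sample_token(logits: List[float], t: float, rng: random.Random) -> int:
    """Pick a token index from logits at temperature t (assumed meaning of `t`)."""
    if t == 0:
        # Greedy decoding: always the highest-scoring token, so output is deterministic.
        return max(range(len(logits)), key=logits.__getitem__)
    scaled = [x / t for x in logits]
    m = max(scaled)                                  # subtract max for numerical stability
    weights = [math.exp(x - m) for x in scaled]      # unnormalized softmax weights
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]


logits = [0.1, 2.0, 0.5]                             # made-up scores for three tokens
rng = random.Random(0)
greedy = sample_token(logits, 0, rng)                # index 1 on every call
sampled = sample_token(logits, 1, rng)               # may be 0, 1, or 2
```

Under this reading, regenerating `Thought N` at `t = 0` would tend to reproduce the same faulty step, which is one reason a non-zero temperature would be used for that single call.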

### Interpretation

This diagram outlines a **self-correcting reasoning pipeline** for LLMs. It demonstrates a method to enhance reliability by incorporating an automated verification step (`Mistake location classifier`) into the chain-of-thought generation process.

* **What it suggests:** The core idea is that LLM output can be made more accurate not just by improving the base model, but by building a system around it that checks a completed reasoning chain, locates the faulty step, and performs a targeted repair. This is a form of iterative refinement.

* **How elements relate:** The `Mistake location classifier` is the critical control point. It acts as a gatekeeper that either validates the entire chain (leading to `Finish`) or identifies a specific weak link (`Thought N`) for repair. The `Generative LLM` serves a dual purpose: initial creator and subsequent editor.

* **Notable Anomalies/Patterns:** The most significant pattern is the shift from single-pass generation (Step a) to a feedback-driven procedure (Steps b-e). The process assumes that the initial chain may be flawed and builds in a structured mechanism for correction. The return to `t = 0` in Step (e) after the `t = 1` regeneration shows that the altered setting applies only to the faulty step. Because every thought after `Thought N` is regenerated from the corrected prefix, the design is aimed at cascading errors in multi-step reasoning tasks.