## Flow Diagram: Generative LLM Process with Mistake Detection

### Overview

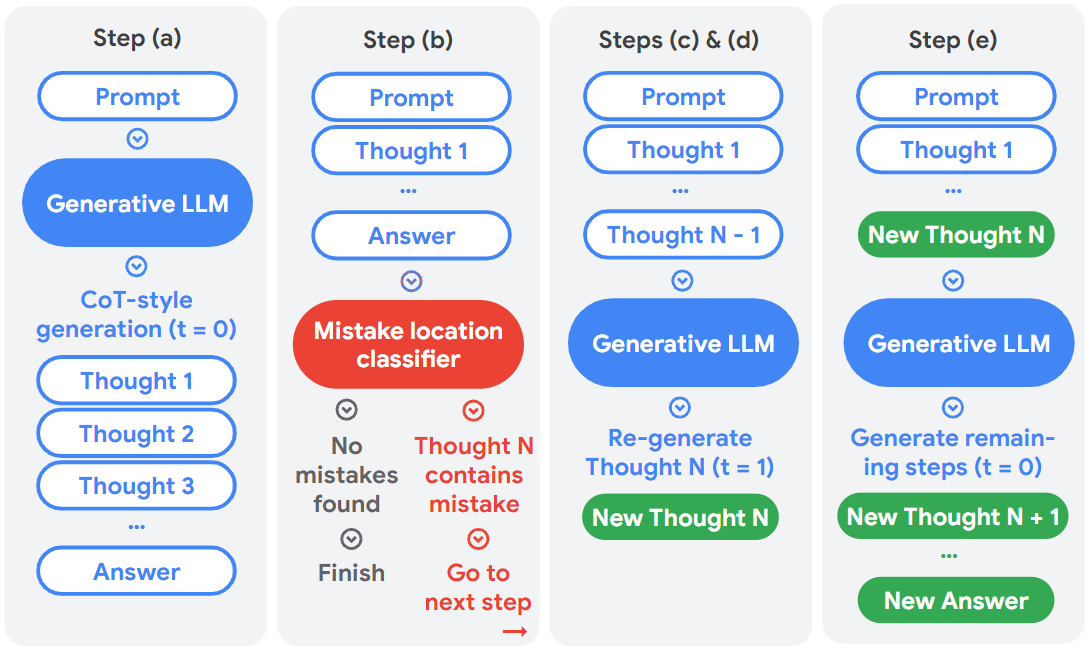

The image presents a flow diagram illustrating the steps involved in a Generative Large Language Model (LLM) process, incorporating a mechanism for mistake detection and correction. The process has five steps, labeled (a) through (e), shown as four panels because steps (c) and (d) share one. The panels cover initial thought generation, mistake location classification, regeneration of the flagged thought, and generation of the remaining steps.

### Components/Axes

The diagram uses rounded rectangles to represent processes or states. Arrows indicate the flow of information. Colors are used to distinguish between different types of operations:

* **Blue:** Represents standard LLM operations (prompting, thought generation).

* **Red:** Represents the mistake location classifier and error states.

* **Green:** Represents new or corrected thoughts/answers.

The steps are labeled as follows:

* **Step (a):** Initial prompt and CoT-style (Chain-of-Thought) generation.

* **Step (b):** Mistake location classification.

* **Steps (c) & (d):** Regeneration of thought upon mistake detection.

* **Step (e):** Generation of remaining steps after correction.

The panels are built from the following elements (not every element appears in every panel):

* **Prompt:** The initial input to the LLM.

* **Thought:** Intermediate steps in the reasoning process.

* **Answer:** The final output of the LLM.

* **Generative LLM:** The core language model.

* **Mistake location classifier:** A component that identifies errors in the generated thoughts.

### Detailed Analysis

**Step (a):**

* Starts with a "Prompt" (blue).

* Flows into "Generative LLM" (blue).

* "CoT-style generation (t = 0)" is indicated below the LLM.

* Generates a series of "Thought 1", "Thought 2", "Thought 3", and so on (blue).

* Ends with an "Answer" (blue).

**Step (b):**

* Starts with a "Prompt" (blue).

* Generates "Thought 1", followed by "...", and then "Answer" (blue).

* Flows into "Mistake location classifier" (red).

* Two possible outcomes:

    * "No mistakes found" (gray text) leading to "Finish" (gray text).

    * "Thought N contains mistake" (gray text) leading to "Go to next step" (gray text) with an arrow pointing to the right.

**Steps (c) & (d):**

* Starts with a "Prompt" (blue).

* Generates "Thought 1", followed by "...", and then "Thought N - 1" (blue).

* Flows into "Generative LLM" (blue).

* "Re-generate Thought N (t = 1)" is indicated below the LLM.

* Generates "New Thought N" (green).

**Step (e):**

* Starts with a "Prompt" (blue).

* Generates "Thought 1", followed by "...", and then "New Thought N" (green).

* Flows into "Generative LLM" (blue).

* "Generate remaining steps (t = 0)" is indicated below the LLM.

* Generates "New Thought N + 1", followed by "...", and then "New Answer" (green).
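The five steps above can be sketched as a single correction pass. This is a minimal illustration, not the diagram authors' implementation: the callables `generate_trace`, `generate_step`, and `locate_mistake` are hypothetical interfaces, the stub functions stand in for a real LLM and classifier, and treating `t` as a sampling temperature is an assumption (the diagram only shows the values 0 and 1).

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical interfaces (assumptions, not taken from the diagram):
#   generate_trace(prompt, prefix, t) -> (thoughts after the prefix, answer)
#   generate_step(prompt, prefix, t)  -> one new thought following the prefix
#   locate_mistake(prompt, thoughts, answer) -> 0-based index of the first
#       mistaken thought, or None if no mistake is found
TraceFn = Callable[[str, List[str], float], Tuple[List[str], str]]
StepFn = Callable[[str, List[str], float], str]
ClassifierFn = Callable[[str, List[str], str], Optional[int]]


def correct_once(
    prompt: str,
    generate_trace: TraceFn,
    generate_step: StepFn,
    locate_mistake: ClassifierFn,
) -> Tuple[List[str], str]:
    # Step (a): CoT-style generation with t = 0.
    thoughts, answer = generate_trace(prompt, [], 0.0)

    # Step (b): the classifier either finds nothing ("Finish")
    # or returns the location of the mistaken thought.
    n = locate_mistake(prompt, thoughts, answer)
    if n is None:
        return thoughts, answer

    # Steps (c) & (d): keep Thought 1 .. Thought N - 1 and
    # re-generate Thought N with t = 1.
    prefix = thoughts[:n]
    new_thought = generate_step(prompt, prefix, 1.0)

    # Step (e): generate the remaining steps and a new answer with t = 0.
    kept = prefix + [new_thought]
    rest, new_answer = generate_trace(prompt, kept, 0.0)
    return kept + rest, new_answer


# Stub components standing in for a real LLM and classifier.
def fake_trace(prompt: str, prefix: List[str], t: float) -> Tuple[List[str], str]:
    if not prefix:
        return ["2 + 3 = 5", "5 * 4 = 25"], "25"
    return [], "20"


def fake_step(prompt: str, prefix: List[str], t: float) -> str:
    return "5 * 4 = 20"


def fake_classifier(prompt: str, thoughts: List[str], answer: str) -> Optional[int]:
    for i, thought in enumerate(thoughts):
        if thought == "5 * 4 = 25":
            return i
    return None


thoughts, answer = correct_once(
    "What is (2 + 3) * 4?", fake_trace, fake_step, fake_classifier
)
```

With these stubs the classifier flags the second thought, so the first thought is kept, the second is replaced, and the answer is regenerated. Passing the three components as callables keeps the sketch independent of any particular model API.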

### Key Observations

* The diagram illustrates an iterative process where the LLM generates thoughts, a classifier checks for mistakes, and the LLM regenerates thoughts if mistakes are found.

* The use of color highlights the different stages of the process, with red indicating error detection and green indicating corrected outputs.

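* The parameter 't' is 0 in steps (a) and (e) and 1 in the regeneration step (c) & (d). Because it returns to 0 in step (e), it does not count regeneration attempts; it most plausibly denotes the sampling temperature, with deterministic decoding at t = 0 and sampled regeneration at t = 1.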

* Step (b) is the only step that has a decision point, based on whether a mistake is found.

### Interpretation

The diagram shows a system for improving the accuracy of LLM outputs through mistake detection and correction. Chain-of-Thought prompting produces intermediate reasoning steps, which a mistake location classifier then checks. If the classifier flags Thought N, the LLM keeps Thought 1 through Thought N - 1, regenerates Thought N, and then generates the remaining steps and a new answer, which may be more accurate than the original. If no mistake is found, the process finishes with the original answer.