## Line Graph: Percentage of Negative Objects Over Training Epochs

### Overview

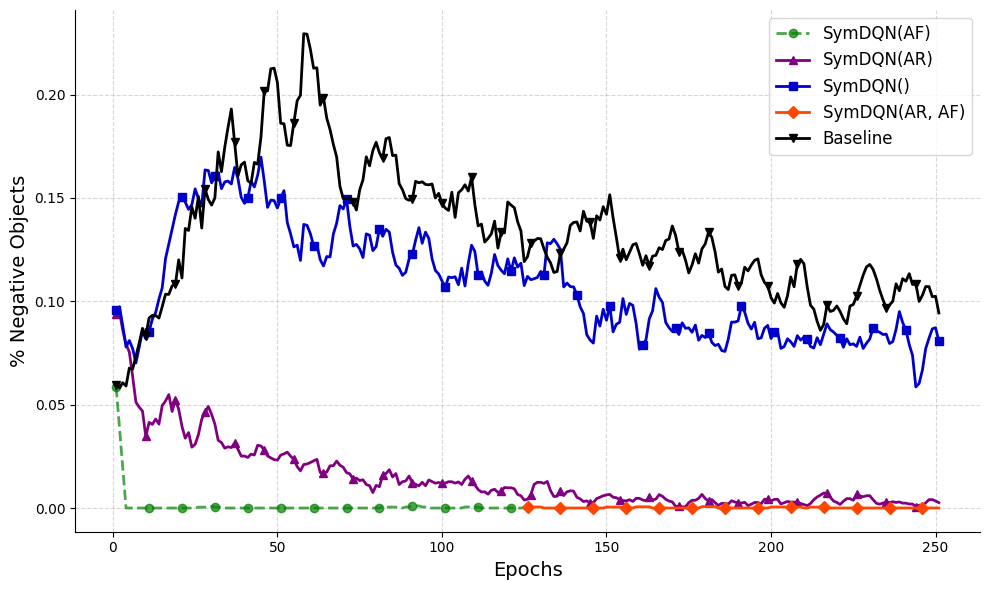

The graph plots the percentage of negative objects for four SymDQN configurations and a baseline over 250 training epochs. Each of the five series has its own color and line or marker style, and the trends differ markedly between configurations.

### Components/Axes

- **X-axis (Epochs)**: Ranges from 0 to 250 in increments of 50.

- **Y-axis (% Negative Objects)**: Scaled from 0.00 to 0.20 in 0.05 increments.

- **Legend**: Located in the top-right corner, mapping colors/markers to:

  - Green dashed line: SymDQN(AF)

  - Purple triangle line: SymDQN(AR)

  - Blue square line: SymDQN()

  - Orange diamond line: SymDQN(AR, AF)

  - Black solid line: Baseline

### Detailed Analysis

1. **Baseline (Black Solid Line)**:

   - Starts at ~0.06 at epoch 0.

   - Peaks sharply at ~0.20 around epoch 50.

   - Exhibits high volatility, fluctuating between ~0.08 and ~0.20 for the remainder of training.

   - Ends at ~0.10 by epoch 250.

2. **SymDQN(AF) (Green Dashed Line)**:

   - Drops abruptly from ~0.06 to near 0.00 within the first 10 epochs.

   - Remains flat at ~0.00 for all subsequent epochs.

3. **SymDQN(AR) (Purple Triangle Line)**:

   - Declines gradually from ~0.06 to ~0.01 by epoch 50.

   - Fluctuates slightly but stabilizes near ~0.005 by epoch 250.

4. **SymDQN() (Blue Square Line)**:

   - Begins at ~0.09 and rises to ~0.15 by epoch 50.

   - Shows moderate volatility, averaging ~0.09–0.12 after epoch 100.

   - Ends at ~0.08 by epoch 250.

5. **SymDQN(AR, AF) (Orange Diamond Line)**:

   - Follows a trajectory similar to SymDQN(AR), starting at ~0.06.

   - Declines to ~0.005 by epoch 100, stabilizing near ~0.002 by epoch 250.
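To compare the end states at a glance, the approximate epoch-250 readings listed above can be collected and sorted. This is only a sketch: the numbers are the eyeballed values from the description, not the underlying experiment data, and the names `final_values` and `ranking` are introduced here purely for illustration.

```python
# Approximate final values (epoch 250) as read from the bullet points above.
# These are eyeballed readings of the plot, not the underlying experiment data.
final_values = {
    "Baseline": 0.10,
    "SymDQN(AF)": 0.00,
    "SymDQN(AR)": 0.005,
    "SymDQN()": 0.08,
    "SymDQN(AR, AF)": 0.002,
}

# Sort configurations from lowest to highest final value.
ranking = sorted(final_values, key=final_values.get)

for rank, name in enumerate(ranking, start=1):
    print(f"{rank}. {name}: ~{final_values[name]:.3f}")
```

Sorting by the final reading places the three configurations that include AR or AF ahead of SymDQN() and the Baseline.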

### Key Observations

- **Baseline Volatility**: The Baseline exhibits the highest variability, with sharp peaks and troughs, suggesting instability in negative object retention.

- **SymDQN(AF) Efficacy**: SymDQN(AF) achieves the fastest and most complete reduction in negative objects, reaching near-zero retention within 10 epochs.

- **AR-Based Configurations**: SymDQN(AR) and SymDQN(AR, AF) follow similar trajectories, with SymDQN(AR, AF) ending marginally lower (~0.002 versus ~0.005 at epoch 250).

- **SymDQN() Limitations**: SymDQN() retains the highest percentage of negative objects among the SymDQN variants (roughly ~0.08–0.15) and stays within the Baseline's range rather than clearly improving on it.
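One rough way to put a number on the volatility observation is the spread (maximum minus minimum) of each series over the readings reported for epoch 50 and later. The sketch below assumes those approximate readings; the `readings` dictionary and the `spread` helper are hypothetical names, and with so few points per series the result is a crude proxy rather than a proper variance estimate.

```python
# Approximate readings from epoch 50 onward, taken from the description above.
# They are eyeballed from the plot, not the underlying experiment data.
readings = {
    "Baseline": [0.20, 0.08, 0.10],
    "SymDQN(AF)": [0.00, 0.00],
    "SymDQN(AR)": [0.01, 0.005],
    "SymDQN()": [0.15, 0.09, 0.12, 0.08],
    "SymDQN(AR, AF)": [0.005, 0.002],
}


def spread(values):
    """Return max minus min, a crude stand-in for volatility."""
    return max(values) - min(values)


spreads = {name: spread(vals) for name, vals in readings.items()}
most_volatile = max(spreads, key=spreads.get)

# Print the series from widest to narrowest spread.
for name, value in sorted(spreads.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: spread ~{value:.3f}")
```

With the full per-epoch data, a standard deviation over a sliding window would be a more faithful measure than this min-max spread.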

### Interpretation

The data suggest that SymDQN configurations with the AF component are the most effective at minimizing negative object retention: SymDQN(AF) reaches near zero within about 10 epochs, and SymDQN(AR, AF) settles near ~0.002. The AR component also helps, but more gradually, with SymDQN(AR) declining to ~0.01 by epoch 50 and ~0.005 by epoch 250. The Baseline stays volatile and retains a substantial share of negative objects throughout training, and SymDQN() without either component performs similarly, which indicates that the improvement comes from AR and AF rather than from the SymDQN architecture alone. Combining the two components does not outperform AF alone in this plot. These trends are consistent with the hypothesis that the AR and AF components are what reduce the influence of negative objects during training.