## Grouped Bar Chart: LLM Task Performance Comparison

### Overview

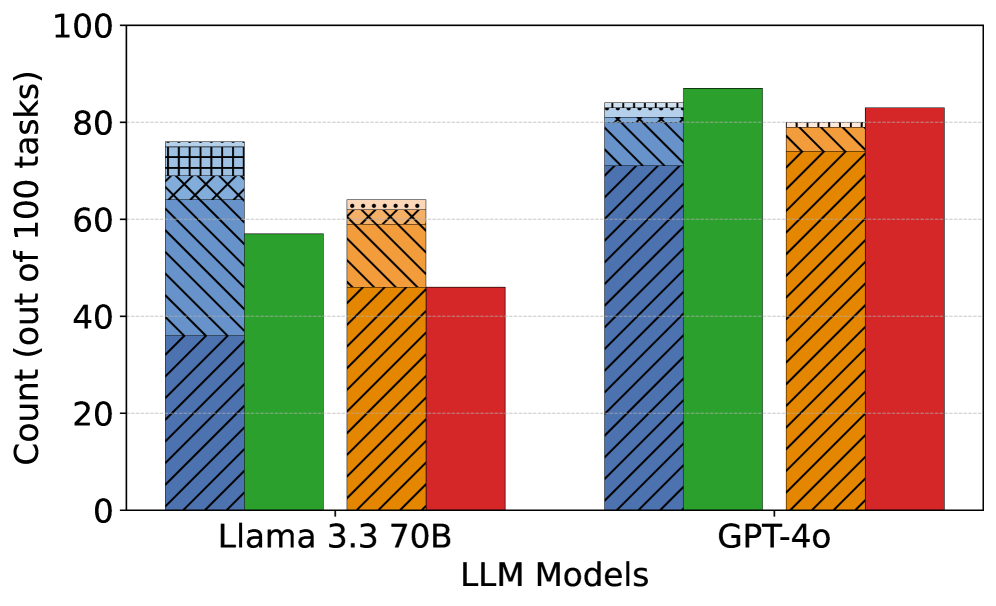

This image displays a grouped bar chart comparing the performance of two Large Language Models (LLMs) across four distinct task categories. The chart quantifies success as a count out of 100 attempted tasks for each model-category pair.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis:** Labeled "LLM Models". It shows two models, each with its own group of bars:

    1. `Llama 3.3 70B`

    2. `GPT-4o`

* **Y-Axis:** Labeled "Count (out of 100 tasks)". The scale runs from 0 to 100 in increments of 20, with horizontal grid lines at these intervals.

* **Legend/Series:** Four distinct data series are represented by colored and patterned bars. The legend is embedded within the bar patterns themselves, requiring visual matching.

    * **Blue with diagonal hatching (\\):** Represents "Code Generation".

    * **Solid Green:** Represents "Math Problem Solving".

    * **Orange with cross-hatching (X):** Represents "Creative Writing".

    * **Solid Red:** Represents "Factual Q&A".

* **Spatial Layout:** For each LLM model on the x-axis, the four task bars are grouped together in the order listed above (Blue, Green, Orange, Red from left to right within the group).
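The layout described above can be approximated with a short script. This is a minimal sketch, not the code that produced the original figure: the bar heights are the approximate values read off the chart in this description, and the bar width, exact colors, hatch strings, and the helper names `bar_positions` and `draw` are assumptions made for illustration. The plotting function needs matplotlib; the layout helper does not.

```python
# Approximate values read off the chart (not exact source data).
SCORES = {
    "Llama 3.3 70B": {"Code Generation": 76, "Math Problem Solving": 57,
                      "Creative Writing": 64, "Factual Q&A": 46},
    "GPT-4o": {"Code Generation": 84, "Math Problem Solving": 87,
               "Creative Writing": 80, "Factual Q&A": 83},
}

# Assumed (color, hatch) per task, in left-to-right order within a group.
STYLES = {
    "Code Generation": ("tab:blue", "\\\\"),
    "Math Problem Solving": ("tab:green", None),
    "Creative Writing": ("tab:orange", "xx"),
    "Factual Q&A": ("tab:red", None),
}


def bar_positions(n_groups, n_series, width=0.2):
    """Return positions[series][group]: the x centre of each bar.

    Groups are centred on x = 0, 1, 2, ... and the bars of one group
    sit side by side, symmetric around the group centre.
    """
    return [
        [g + (s - (n_series - 1) / 2) * width for g in range(n_groups)]
        for s in range(n_series)
    ]


def draw(path="chart.png", width=0.2):
    """Render the grouped bar chart to a file (requires matplotlib)."""
    import matplotlib
    matplotlib.use("Agg")  # render off-screen, no display needed
    import matplotlib.pyplot as plt

    models = list(SCORES)
    tasks = list(STYLES)
    positions = bar_positions(len(models), len(tasks), width)

    fig, ax = plt.subplots()
    for task, xs in zip(tasks, positions):
        color, hatch = STYLES[task]
        heights = [SCORES[m][task] for m in models]
        ax.bar(xs, heights, width=width, color=color, hatch=hatch,
               edgecolor="black", label=task)

    ax.set_xticks(range(len(models)))
    ax.set_xticklabels(models)
    ax.set_xlabel("LLM Models")
    ax.set_ylabel("Count (out of 100 tasks)")
    ax.set_ylim(0, 100)
    ax.set_yticks(range(0, 101, 20))
    ax.grid(axis="y")
    ax.legend()
    fig.savefig(path)
```

Calling `draw()` writes `chart.png` to the working directory. The sketch adds an explicit legend box via `ax.legend()`, which is a convenience and may differ from how the original figure presents its legend.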

### Detailed Analysis

**Llama 3.3 70B Performance (Left Group):**

* **Code Generation (Blue, hatched):** The bar reaches approximately **76**. It is the highest-performing task for this model.

* **Math Problem Solving (Green, solid):** The bar reaches approximately **57**.

* **Creative Writing (Orange, cross-hatched):** The bar reaches approximately **64**.

* **Factual Q&A (Red, solid):** The bar reaches approximately **46**. It is the lowest-performing task for this model.

**GPT-4o Performance (Right Group):**

* **Code Generation (Blue, hatched):** The bar reaches approximately **84**.

* **Math Problem Solving (Green, solid):** The bar reaches approximately **87**. It is the highest-performing task for this model.

* **Creative Writing (Orange, cross-hatched):** The bar reaches approximately **80**.

* **Factual Q&A (Red, solid):** The bar reaches approximately **83**.

**Trend Verification:**

* For **Llama 3.3 70B**, the performance trend from highest to lowest is: Code Generation > Creative Writing > Math Problem Solving > Factual Q&A.

* For **GPT-4o**, the scores are tightly clustered: Math Problem Solving > Code Generation > Factual Q&A > Creative Writing. All scores are at or above approximately 80.

* **Cross-Model Trend:** GPT-4o outperforms Llama 3.3 70B in all four task categories, but the size of the gap varies widely. It is largest in Factual Q&A (~37 points) and Math Problem Solving (~30 points), and smaller in Creative Writing (~16 points) and Code Generation (~8 points).
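The rankings and cross-model gaps can be checked mechanically from the approximate bar heights listed under Detailed Analysis. The following is a minimal sketch; the values are visual estimates from the chart, and the function names `ranking` and `gaps` are illustrative, not part of any library.

```python
# Approximate values read off the chart (not exact source data).
SCORES = {
    "Llama 3.3 70B": {"Code Generation": 76, "Math Problem Solving": 57,
                      "Creative Writing": 64, "Factual Q&A": 46},
    "GPT-4o": {"Code Generation": 84, "Math Problem Solving": 87,
               "Creative Writing": 80, "Factual Q&A": 83},
}


def ranking(model):
    """Task names for one model, ordered from highest to lowest score."""
    return sorted(SCORES[model], key=SCORES[model].get, reverse=True)


def gaps(model_a, model_b):
    """Per-task score difference, model_a minus model_b."""
    return {task: SCORES[model_a][task] - SCORES[model_b][task]
            for task in SCORES[model_a]}


for model in SCORES:
    print(model, "->", " > ".join(ranking(model)))
# Llama 3.3 70B -> Code Generation > Creative Writing > Math Problem Solving > Factual Q&A
# GPT-4o -> Math Problem Solving > Code Generation > Factual Q&A > Creative Writing

print(gaps("GPT-4o", "Llama 3.3 70B"))
# {'Code Generation': 8, 'Math Problem Solving': 30, 'Creative Writing': 16, 'Factual Q&A': 37}
```

Because the inputs are read from the bars by eye, differences of one or two points (such as Code Generation versus Factual Q&A for GPT-4o) fall within reading error.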

### Key Observations

1. **Model Superiority:** GPT-4o scores higher than Llama 3.3 70B on every measured task, and its scores vary less from task to task.

2. **Task Strength Variability:** Llama 3.3 70B shows greater variability in performance between tasks (range ~30 points), while GPT-4o's performance is more uniform (range ~7 points).

3. **Task-Specific Strengths:** For Llama, Code Generation is a relative strength. For GPT-4o, Math Problem Solving is the top-performing task, though all are strong.

4. **Visual Encoding:** The chart uses both color and pattern (hatching) to distinguish data series, which aids in accessibility and black-and-white printing.
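The variability figures in the observations above follow from simple arithmetic on the approximate bar heights. A minimal sketch, assuming the values read off the chart; `spread` and `mean` are illustrative helper names:

```python
# Approximate values read off the chart (not exact source data).
SCORES = {
    "Llama 3.3 70B": [76, 57, 64, 46],  # Code, Math, Creative, Factual
    "GPT-4o": [84, 87, 80, 83],
}


def spread(values):
    """Range of the scores: highest minus lowest."""
    return max(values) - min(values)


def mean(values):
    """Arithmetic mean of the scores."""
    return sum(values) / len(values)


for model, values in SCORES.items():
    print(f"{model}: range={spread(values)}, mean={mean(values)}")
# Llama 3.3 70B: range=30, mean=60.75
# GPT-4o: range=7, mean=83.5
```

The range is a coarse measure with only four data points per model; it is used here because it matches the figures quoted in the text.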

### Interpretation

This chart compares two prominent LLMs on 100 tasks per category. The chart does not state how the tasks were selected or how success was scored, so the results are best read as indicative. With that caveat, the data suggests that GPT-4o is the more capable and reliable model across a diverse set of tasks, including technical (Code, Math), creative (Writing), and knowledge-based (Factual Q&A) domains.

The significant performance gap, especially in Math and Factual Q&A, may indicate differences in model architecture, training data quality/quantity, or reasoning capabilities. Llama 3.3 70B's relative strength in Code Generation could point to a training focus or architectural bias favoring structured, logical outputs.

The uniformity of GPT-4o's high scores implies robust generalization, whereas Llama's more varied results suggest its performance is more sensitive to the specific nature of the task. For practitioners selecting a model, GPT-4o appears to be the safer choice for a general-purpose assistant. Llama 3.3 70B still trails in Code Generation (approximately 76 versus 84), but this is its narrowest gap, so it may remain competitive for specialized development tools. Beyond the raw scores, the chart conveys each model's consistency and task-specialization profile.