## Flowchart: Fact-Checking Strategies

### Overview

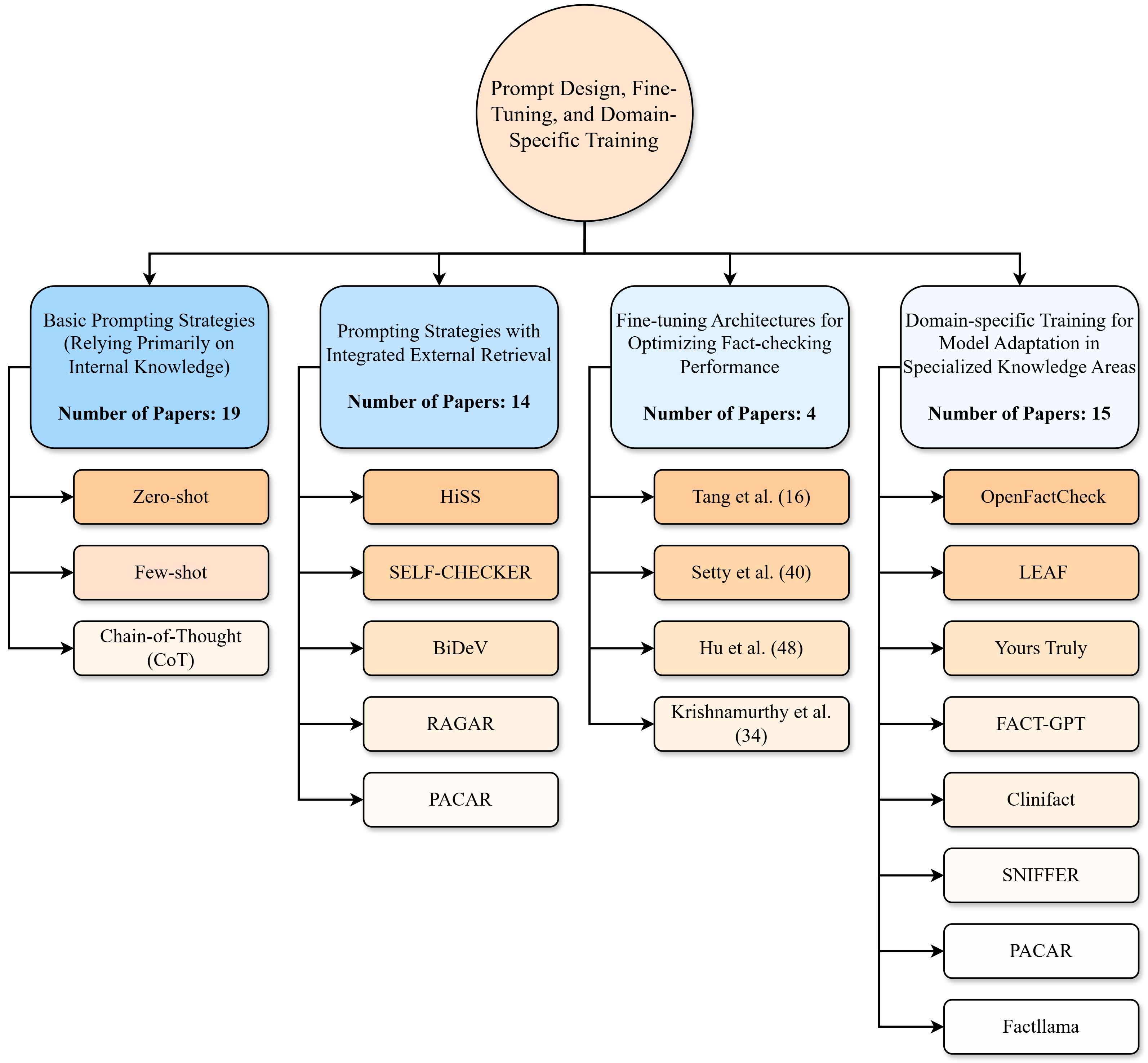

The image is a flowchart of fact-checking strategies, grouped into four main approaches: basic prompting strategies, prompting strategies with integrated external retrieval, fine-tuning architectures, and domain-specific training. Each category lists specific techniques or models and reports the number of papers associated with it.

### Components/Axes

* **Top Node:** "Prompt Design, Fine-Tuning, and Domain-Specific Training" (in a circle)

* **Level 1 Nodes (Blue Rounded Rectangles):**

    * "Basic Prompting Strategies (Relying Primarily on Internal Knowledge)" - Number of Papers: 19

    * "Prompting Strategies with Integrated External Retrieval" - Number of Papers: 14

    * "Fine-tuning Architectures for Optimizing Fact-checking Performance" - Number of Papers: 4

    * "Domain-specific Training for Model Adaptation in Specialized Knowledge Areas" - Number of Papers: 15

* **Level 2 Nodes (Orange/White Rounded Rectangles):** Specific techniques/models associated with each Level 1 category.

### Detailed Analysis

**1. Prompt Design, Fine-Tuning, and Domain-Specific Training**

* This is the root node of the flowchart.

**2. Basic Prompting Strategies (Relying Primarily on Internal Knowledge)**

* Number of Papers: 19

* Sub-categories:

    * Zero-shot (Orange)

    * Few-shot (Orange)

    * Chain-of-Thought (CoT) (White)

**3. Prompting Strategies with Integrated External Retrieval**

* Number of Papers: 14

* Sub-categories:

    * HiSS (Orange)

    * SELF-CHECKER (Orange)

    * BiDeV (Orange)

    * RAGAR (White)

    * PACAR (White)

**4. Fine-tuning Architectures for Optimizing Fact-checking Performance**

* Number of Papers: 4

* Sub-categories:

    * Tang et al. (16) (Orange)

    * Setty et al. (40) (Orange)

    * Hu et al. (48) (Orange)

    * Krishnamurthy et al. (34) (White)

**5. Domain-specific Training for Model Adaptation in Specialized Knowledge Areas**

* Number of Papers: 15

* Sub-categories:

    * OpenFactCheck (Orange)

    * LEAF (Orange)

    * Yours Truly (Orange)

    * FACT-GPT (White)

    * Clinifact (White)

    * SNIFFER (White)

    * PACAR (White)

    * Factllama (White)

### Key Observations

* The "Basic Prompting Strategies" category has the highest number of associated papers (19).

* The "Fine-tuning Architectures" category has the lowest number of associated papers (4).

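* Level 2 nodes use two fill colors (Orange and White). What the colors encode is not established here, so reading Orange as "more prevalent" and White as "less prevalent" is a guess rather than a finding.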

* PACAR appears in two categories: "Prompting Strategies with Integrated External Retrieval" and "Domain-specific Training".
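
These observations can be checked mechanically. The following is a minimal sketch that transcribes the categories, paper counts, and listed examples from the description above into a plain dictionary. The names `TAXONOMY`, `most_and_least_studied`, and `shared_examples` are illustrative and do not come from the figure, and the category labels are shortened for readability.

```python
from collections import defaultdict

# Transcribed from the flowchart description: category -> (paper count, listed examples).
# The paper count is the figure's own number; it need not equal the number of examples shown.
TAXONOMY = {
    "Basic Prompting Strategies": (
        19, ["Zero-shot", "Few-shot", "Chain-of-Thought (CoT)"]),
    "Prompting Strategies with Integrated External Retrieval": (
        14, ["HiSS", "SELF-CHECKER", "BiDeV", "RAGAR", "PACAR"]),
    "Fine-tuning Architectures": (
        4, ["Tang et al.", "Setty et al.", "Hu et al.", "Krishnamurthy et al."]),
    "Domain-specific Training": (
        15, ["OpenFactCheck", "LEAF", "Yours Truly", "FACT-GPT",
             "Clinifact", "SNIFFER", "PACAR", "Factllama"]),
}


def most_and_least_studied(taxonomy):
    """Return (category with the most papers, category with the fewest papers)."""
    ranked = sorted(taxonomy, key=lambda name: taxonomy[name][0])
    return ranked[-1], ranked[0]


def shared_examples(taxonomy):
    """Return the examples listed under more than one category, with their categories."""
    seen = defaultdict(list)
    for category, (_, examples) in taxonomy.items():
        for example in examples:
            seen[example].append(category)
    return {ex: cats for ex, cats in seen.items() if len(cats) > 1}


if __name__ == "__main__":
    print(most_and_least_studied(TAXONOMY))
    print(shared_examples(TAXONOMY))
```

Keeping the paper count separate from the example list matters here: the domain-specific category shows 8 examples but reports 15 papers, so the listed nodes appear to be a sample rather than the full set.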

### Interpretation

The flowchart provides a structured overview of different approaches to fact-checking, highlighting the relative prevalence of each strategy based on the number of published papers. The categorization helps to understand the different dimensions of fact-checking research, from basic prompting techniques to more sophisticated methods involving external knowledge retrieval, fine-tuning, and domain-specific adaptation. The presence of "PACAR" in multiple categories suggests its versatility or applicability across different fact-checking paradigms. The number of papers associated with each category could reflect the maturity or popularity of each approach within the research community.