
## Diagram: Fact-Checking Approaches Taxonomy

### Overview

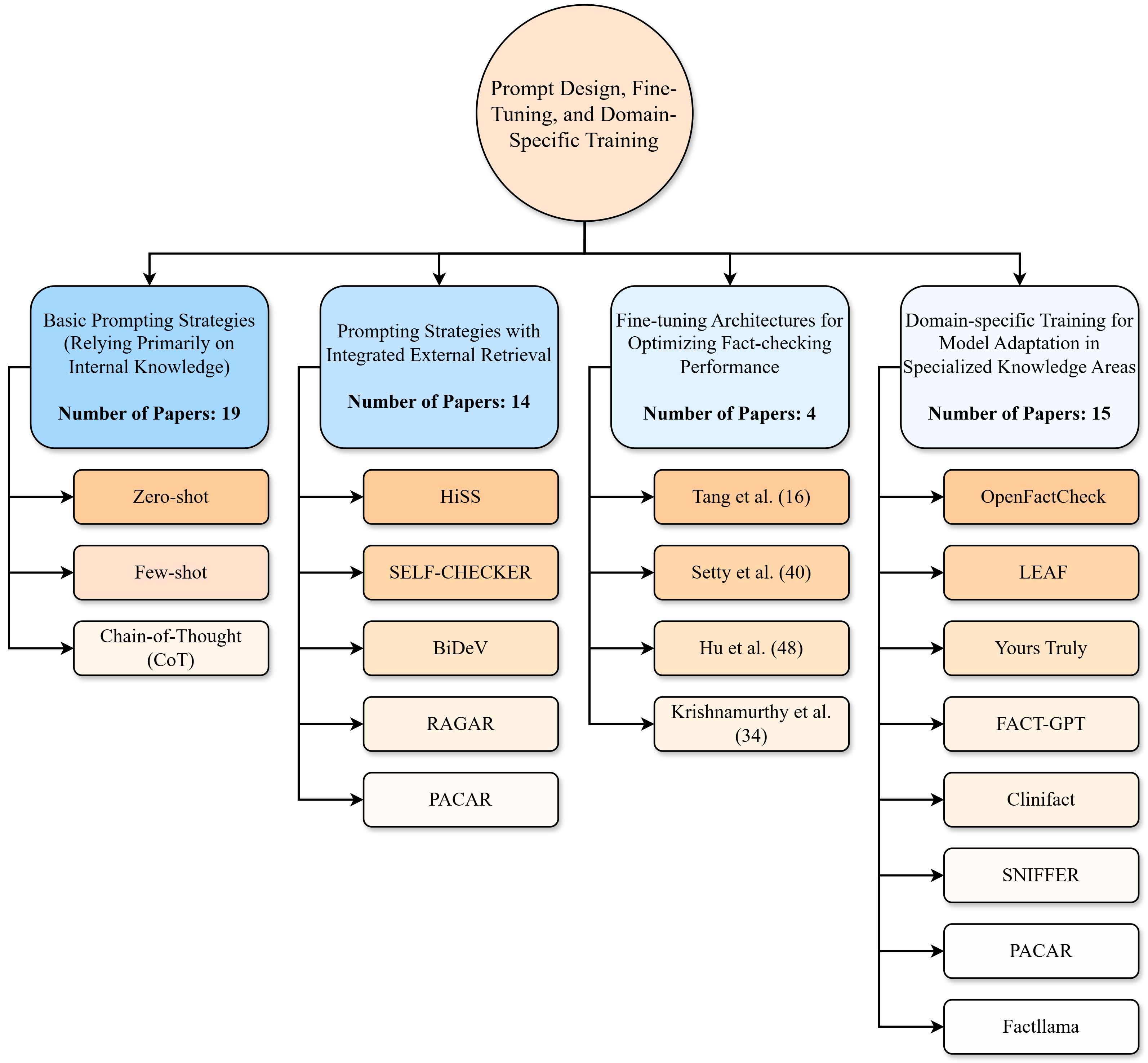

This diagram presents a taxonomy of fact-checking approaches, categorized by the training and prompting strategies they employ. It has a hierarchical structure: a broad root category ("Prompt Design, Fine-Tuning, and Domain-Specific Training") branches into four main strategies, and each strategy is further subdivided into specific methods or models. Each main branch is annotated with the number of papers it covers.

### Components/Axes

The diagram is a directed acyclic graph. The top-level node is "Prompt Design, Fine-Tuning, and Domain-Specific Training". The four main branches are:

1. "Basic Prompting Strategies (Relying Primarily on Internal Knowledge)"

2. "Prompting Strategies with Integrated External Retrieval"

3. "Fine-tuning Architectures for Optimizing Fact-checking Performance"

4. "Domain-specific Training for Model Adaptation in Specialized Knowledge Areas"

Each branch then splits into several specific approaches or models. The number of papers associated with each main branch is indicated in a box below the branch title.

### Detailed Analysis or Content Details

Here's a breakdown of the diagram's content, moving from top to bottom:

* **Prompt Design, Fine-Tuning, and Domain-Specific Training**: the root node.

* **Basic Prompting Strategies (Relying Primarily on Internal Knowledge)**: 19 papers
  * Zero-shot
  * Few-shot
  * Chain-of-Thought (CoT)

* **Prompting Strategies with Integrated External Retrieval**: 14 papers
  * HiSS
  * SELF-CHECKER
  * BiDev
  * RAGAR
  * PACAR

* **Fine-tuning Architectures for Optimizing Fact-checking Performance**: 4 papers
  * Tang et al. (16)
  * Setty et al. (40)
  * Hu et al. (48)
  * Krishnamurthy et al. (34)

* **Domain-specific Training for Model Adaptation in Specialized Knowledge Areas**: 15 papers
  * OpenFactCheck
  * LEAF
  * Yours Truly
  * FACT-GPT
  * Clinifact
  * SNIFFER
  * PACAR
  * Factllama

The arrows run from each general strategy to its specific methods. The numbers in parentheses after the author names in the fine-tuning branch (e.g., Tang et al. (16)) are most likely reference numbers in the source survey's bibliography; they are too small to be publication years.

### Key Observations

* Basic Prompting Strategies have the highest number of associated papers (19).

* Fine-tuning Architectures have the fewest associated papers (4).

* PACAR appears in two different branches, suggesting it can be used with both external retrieval and domain-specific training.

* The four branches are distinguished by where the model's fact-checking ability comes from: its internal knowledge, retrieved external evidence, task-specific fine-tuning, or training on specialized domains.
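Because the taxonomy is a small tree, the observations above can be checked mechanically. The sketch below transcribes the diagram into a Python dictionary (the shortened branch labels and the `summarize` helper are hypothetical, introduced only for illustration) and derives the branch with the most papers, the branch with the fewest, the methods listed under more than one branch, and the total paper count. The total assumes no paper is counted under two branches, which the diagram does not confirm.

```python
from collections import Counter

# Taxonomy transcribed from the diagram: branch -> (paper count, listed methods).
# Branch labels are shortened, hypothetical names for readability.
TAXONOMY: dict[str, tuple[int, list[str]]] = {
    "Basic prompting (internal knowledge)": (
        19, ["Zero-shot", "Few-shot", "Chain-of-Thought (CoT)"]),
    "Prompting with external retrieval": (
        14, ["HiSS", "SELF-CHECKER", "BiDev", "RAGAR", "PACAR"]),
    "Fine-tuning architectures": (
        4, ["Tang et al. (16)", "Setty et al. (40)",
            "Hu et al. (48)", "Krishnamurthy et al. (34)"]),
    "Domain-specific training": (
        15, ["OpenFactCheck", "LEAF", "Yours Truly", "FACT-GPT",
             "Clinifact", "SNIFFER", "PACAR", "Factllama"]),
}


def summarize(taxonomy: dict[str, tuple[int, list[str]]]) -> dict:
    """Derive the key observations from the transcribed taxonomy."""
    counts = {branch: n for branch, (n, _) in taxonomy.items()}
    # Count how many branches list each method.
    leaf_counts = Counter(m for _, methods in taxonomy.values() for m in methods)
    return {
        "most": max(counts, key=counts.get),
        "fewest": min(counts, key=counts.get),
        "shared": sorted(m for m, c in leaf_counts.items() if c > 1),
        # Upper bound on distinct papers if any paper appears in two branches.
        "total": sum(counts.values()),
    }


if __name__ == "__main__":
    print(summarize(TAXONOMY))
```

Keeping the paper counts next to the method lists mirrors the diagram's layout, so a revised version of the figure can be re-checked by editing the dictionary alone.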

### Interpretation

The diagram maps the landscape of fact-checking research with large language models. Read in order, the four branches move from basic prompting that relies on knowledge already stored in the model, to prompting that integrates external retrieval to improve accuracy, and then to fine-tuning architectures and adapting models to specialized domains. The diagram itself does not encode chronology, so this ordering suggests, rather than demonstrates, a shift from relying solely on internal model knowledge toward incorporating external sources and specialized training.

The paper counts give a rough indication of research activity in each area: basic prompting (19 papers) is the most heavily studied, while dedicated fine-tuning architectures (4 papers) have received comparatively little attention. The presence of PACAR in two branches shows that some approaches combine strategies and do not fit neatly into a single category. Overall, the diagram is a useful map of the avenues being explored to improve automated fact-checking.