## Line Chart: BLEU Score vs. Noise Level for Different Methods

### Overview

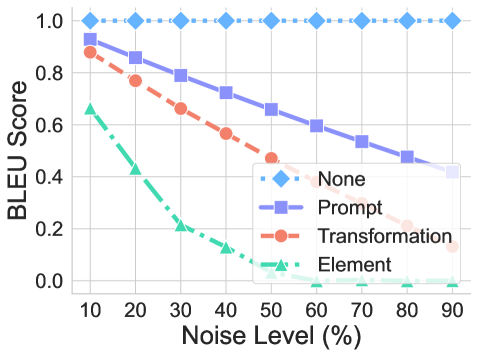

This image is a line chart comparing the performance of four different methods ("None", "Prompt", "Transformation", "Element") as measured by BLEU Score across increasing levels of noise. The chart demonstrates how each method's performance degrades as the input noise increases from 10% to 90%.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Noise Level (%)". The axis markers are at intervals of 10, starting at 10 and ending at 90.

* **Y-Axis (Vertical):** Labeled "BLEU Score". The axis scale ranges from 0.0 to 1.0, with markers at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains four entries, each with a colored line sample, marker symbol, and label:

    1. **None:** Light blue, diamond marker, dotted line.

    2. **Prompt:** Purple, square marker, solid line.

    3. **Transformation:** Orange-red, circle marker, dashed line.

    4. **Element:** Teal/green, triangle marker, dash-dot line.

### Detailed Analysis

The chart plots four distinct data series. Below is an analysis of each, including approximate data points extracted from the visual plot.

**1. Series: "None" (Light Blue, Diamond, Dotted)**

* **Trend:** Perfectly horizontal line. This series shows no degradation in performance with increasing noise.

* **Data Points (Approximate):** The BLEU Score remains constant at **1.0** for all Noise Levels from 10% to 90%.

**2. Series: "Prompt" (Purple, Square, Solid)**

* **Trend:** Approximately linear decrease. The line slopes downward steadily from left to right, losing roughly 0.07 BLEU for every 10 percentage points of added noise.

* **Data Points (Approximate):**

    * Noise 10%: BLEU Score ≈ **0.95**

    * Noise 20%: BLEU Score ≈ **0.85**

    * Noise 30%: BLEU Score ≈ **0.78**

    * Noise 40%: BLEU Score ≈ **0.72**

    * Noise 50%: BLEU Score ≈ **0.65**

    * Noise 60%: BLEU Score ≈ **0.60**

    * Noise 70%: BLEU Score ≈ **0.55**

    * Noise 80%: BLEU Score ≈ **0.48**

    * Noise 90%: BLEU Score ≈ **0.42**

**3. Series: "Transformation" (Orange-Red, Circle, Dashed)**

* **Trend:** Approximately linear decrease, steeper than the "Prompt" series: roughly 0.09 BLEU lost for every 10 percentage points of added noise.

* **Data Points (Approximate):**

    * Noise 10%: BLEU Score ≈ **0.88**

    * Noise 20%: BLEU Score ≈ **0.78**

    * Noise 30%: BLEU Score ≈ **0.65**

    * Noise 40%: BLEU Score ≈ **0.58**

    * Noise 50%: BLEU Score ≈ **0.48**

    * Noise 60%: BLEU Score ≈ **0.40**

    * Noise 70%: BLEU Score ≈ **0.30**

    * Noise 80%: BLEU Score ≈ **0.22**

    * Noise 90%: BLEU Score ≈ **0.15**

**4. Series: "Element" (Teal/Green, Triangle, Dash-Dot)**

* **Trend:** Sharply decreasing, non-linear trend. The line drops rapidly initially and then flattens near zero.

* **Data Points (Approximate):**

    * Noise 10%: BLEU Score ≈ **0.65**

    * Noise 20%: BLEU Score ≈ **0.42**

    * Noise 30%: BLEU Score ≈ **0.20**

    * Noise 40%: BLEU Score ≈ **0.12**

    * Noise 50%: BLEU Score ≈ **0.05**

    * Noise 60%: BLEU Score ≈ **0.02**

    * Noise 70%: BLEU Score ≈ **0.01**

    * Noise 80%: BLEU Score ≈ **0.00**

    * Noise 90%: BLEU Score ≈ **0.00**
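
The trend descriptions above can be checked numerically. The following is a minimal sketch that fits a least-squares line to each degrading series, using only the approximate values read off the chart. The helper name `fit_line` and the variable names are illustrative assumptions, not part of the original figure or its source.

```python
# Approximate BLEU scores read off the chart; these are visual estimates,
# not exact values from the underlying experiment.
noise = [10, 20, 30, 40, 50, 60, 70, 80, 90]
series = {
    "Prompt": [0.95, 0.85, 0.78, 0.72, 0.65, 0.60, 0.55, 0.48, 0.42],
    "Transformation": [0.88, 0.78, 0.65, 0.58, 0.48, 0.40, 0.30, 0.22, 0.15],
    "Element": [0.65, 0.42, 0.20, 0.12, 0.05, 0.02, 0.01, 0.00, 0.00],
}


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept, r_squared)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)  # squared correlation coefficient
    return slope, intercept, r_squared


fits = {name: fit_line(noise, values) for name, values in series.items()}
for name, (slope, intercept, r2) in fits.items():
    print(f"{name:15s} slope={slope:+.4f} BLEU per % noise, R^2={r2:.3f}")
```

With these estimates, "Prompt" and "Transformation" both fit a straight line closely (R² above 0.99), with "Transformation" having the more negative slope. "Element" fits a line noticeably worse, which is consistent with its non-linear, flattening shape.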

### Key Observations

1. **Baseline Performance:** The "None" method serves as a perfect baseline, maintaining a BLEU Score of 1.0 regardless of noise. This suggests it represents an ideal or uncorrupted reference.

2. **Performance Hierarchy:** At all noise levels, the performance order from best to worst is consistent: "None" > "Prompt" > "Transformation" > "Element".

3. **Varying Resilience:** The methods differ sharply in their resilience to noise. Among the three degrading methods, "Prompt" declines most gradually (≈ 0.95 at 10% noise to ≈ 0.42 at 90%), while "Element" is highly sensitive, falling from ≈ 0.65 at 10% noise to ≈ 0.12 at 40%.

4. **No Crossover:** The "Prompt" and "Transformation" lines never cross; "Prompt" stays above "Transformation" across the entire noise range, and the gap widens from ≈ 0.07 at 10% noise to ≈ 0.27 at 90%.

5. **Convergence to Zero:** The "Element" method's performance effectively reaches zero (BLEU Score ≈ 0.0) at noise levels of 80% and above.
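
These observations can be verified against the approximate readings. The sketch below checks that the ordering holds at every noise level and computes how much of its 10%-noise score each method retains at 40% noise. All values are visual estimates from the chart, and the variable names are illustrative assumptions.

```python
# Approximate BLEU scores read off the chart (visual estimates only).
noise = [10, 20, 30, 40, 50, 60, 70, 80, 90]
scores = {
    "None": [1.0] * 9,
    "Prompt": [0.95, 0.85, 0.78, 0.72, 0.65, 0.60, 0.55, 0.48, 0.42],
    "Transformation": [0.88, 0.78, 0.65, 0.58, 0.48, 0.40, 0.30, 0.22, 0.15],
    "Element": [0.65, 0.42, 0.20, 0.12, 0.05, 0.02, 0.01, 0.00, 0.00],
}

# Claimed ranking from best to worst.
order = ["None", "Prompt", "Transformation", "Element"]

# True only if each method strictly beats the next one at every noise level.
hierarchy_holds = all(
    scores[better][i] > scores[worse][i]
    for i in range(len(noise))
    for better, worse in zip(order, order[1:])
)

# Fraction of the 10%-noise score that each method still has at 40% noise.
idx_40 = noise.index(40)
retained_at_40 = {name: vals[idx_40] / vals[0] for name, vals in scores.items()}

print("Hierarchy holds at all noise levels:", hierarchy_holds)
for name in order:
    print(f"{name:15s} retains {retained_at_40[name]:.0%} of its 10%-noise score at 40% noise")
```

On these estimates the ordering holds everywhere, and "Element" keeps less than a fifth of its starting score by 40% noise, while "Prompt" keeps about three quarters.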

### Interpretation

This chart likely evaluates the robustness of different natural language processing or machine translation techniques against input corruption (noise). BLEU is a standard metric that scores machine-generated text by its n-gram overlap with one or more reference texts, on a scale from 0 to 1.
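
To make the metric concrete, here is a minimal sketch of a simplified sentence-level BLEU: clipped n-gram precisions up to 4-grams, combined by a geometric mean and multiplied by a brevity penalty. It assumes a single reference, whitespace tokenization, and no smoothing, so it is not necessarily the variant used to produce the chart. The function name `simple_bleu` is hypothetical.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count all n-grams of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate, reference, max_n=4):
    """Simplified BLEU: single reference, whitespace tokens, no smoothing."""
    cand = candidate.split()
    ref = reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        ref_counts = ngrams(ref, n)
        total = sum(cand_counts.values())
        # Clip each candidate n-gram count by its count in the reference.
        matched = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
        if total == 0 or matched == 0:
            return 0.0  # without smoothing, any zero precision zeroes the score
        log_precisions.append(math.log(matched / total))
    # Penalize candidates that are shorter than the reference.
    brevity_penalty = 1.0 if len(cand) >= len(ref) else math.exp(1 - len(ref) / len(cand))
    return brevity_penalty * math.exp(sum(log_precisions) / max_n)


reference = "the quick brown fox jumps over the lazy dog"
print(simple_bleu(reference, reference))  # identical text
print(simple_bleu("the quick brown cat jumps over the lazy dog", reference))  # one word changed
```

A text compared with itself matches every n-gram and scores exactly 1.0, while a single substituted word already lowers the score substantially because it breaks several overlapping n-grams at once.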

* **"None"** probably represents the evaluation of the original, uncorrupted text against itself, hence the perfect score. It acts as the control.

* The downward trends for the other three methods demonstrate that introducing noise (e.g., typos, word swaps, deletions) degrades the quality of their output.

* The **"Prompt"** method appears to be the most robust technique among those tested. Its shallow, roughly linear decline suggests it tolerates a significant amount of input noise, still scoring ≈ 0.42 at 90% noise.

* The **"Transformation"** method is moderately robust but consistently underperforms the "Prompt" method.

* The **"Element"** method is the least robust. Its sharp initial decline indicates it is highly dependent on precise, clean input and fails catastrophically as noise increases. This could represent a more brittle, rule-based, or fine-grained processing approach.

The key takeaway is that the choice of method has a profound impact on system performance in noisy, real-world conditions. The "Prompt" approach demonstrates superior stability, making it potentially more reliable for applications where input quality cannot be guaranteed.