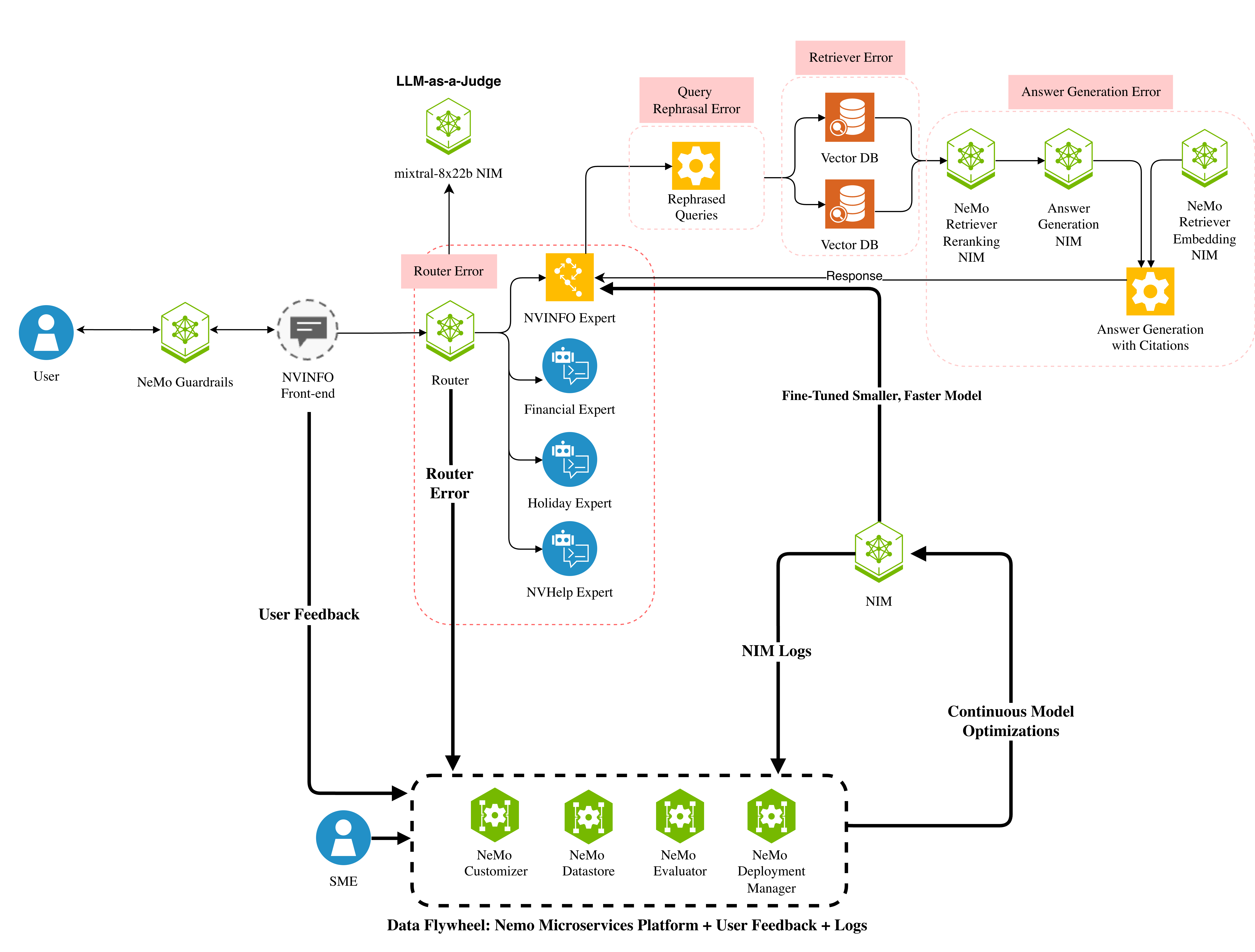

## System Architecture Diagram: NeMo-Based AI Assistant with Continuous Optimization Flywheel

### Overview

This architecture diagram shows an end-to-end, domain-specialized AI assistant built on NVIDIA NeMo microservices. It combines a modular query routing pipeline, four labeled error tracking zones, and a closed-loop data flywheel for continuous model optimization, and it targets enterprise support use cases.

### Components & Flow

The diagram is organized into 4 core regions, with directional arrows defining data/control flow:

1. **User Entry & Feedback Region (Left):**

- `User` (blue person icon, top-left) → `NeMo Guardrails` (green hexagon, safety/compliance layer) → `NVINFO Front-end` (gray chat bubble, user interface)

- `User Feedback` (black arrow) flows from `NVINFO Front-end` to `SME` (blue person icon, bottom-left) and `NeMo Customizer`

- `SME` (Subject Matter Expert) provides input to `NeMo Customizer`

2. **Query Routing & Expert Modules (Central, red dashed box labeled *Router Error*):**

- `Router` (green hexagon) receives input from `NVINFO Front-end`, and routes queries to 4 specialized expert modules (blue chat bubble icons):

  - `NVINFO Expert`

  - `Financial Expert`

  - `Holiday Expert`

  - `NVHelp Expert`

- `LLM-as-a-Judge` (green hexagon, labeled `mixtral-8x22b NIM`) is connected to `Router` for response quality validation

3. **Response Generation Pipeline (Top-Right, segmented error zones):**

- *Query Rephrasal Error* (red dashed box): `Router` → `Rephrased Queries` (yellow gear icon)

- *Retriever Error* (red dashed box): `Rephrased Queries` → two `Vector DB` instances (orange database icons)

- *Answer Generation Error* (red dashed box):

  - `Vector DB` → `NeMo Retriever Embedding NIM` (green hexagon) → `NeMo Retriever Reranking NIM` (green hexagon) → `Answer Generation NIM` (green hexagon) → `Answer Generation with Citations` (yellow gear icon)

- `Answer Generation with Citations` sends a `Response` back to `Router`

4. **Data Flywheel & Optimization (Bottom, black dashed box labeled *Data Flywheel: NeMo Microservices Platform + User Feedback + Logs*):**

- `Router` connects to `Fine-Tuned Smaller, Faster Model` (green hexagon labeled `NIM`)

- The fine-tuned model `NIM` sends `NIM Logs` to `NeMo Deployment Manager` (green hexagon)

- `NeMo Deployment Manager` sends `Continuous Model Optimizations` back to the fine-tuned model `NIM`

- `NeMo Deployment Manager` → `NeMo Evaluator` (green hexagon) → `NeMo Datastore` (green hexagon) → `NeMo Customizer` (green hexagon)

- `NeMo Customizer` receives input from `User Feedback` and `SME`, and feeds back to the routing pipeline
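The routing step in region 2 can be illustrated with a minimal sketch. The diagram does not say how `Router` chooses an expert, so keyword matching stands in for whatever model or classifier the real system uses. The expert names come from the diagram; the keyword lists, the `route` function, and the fallback to `NVINFO Expert` are assumptions made for illustration.

```python
from typing import Dict, Tuple

# Expert names are taken from the diagram. The keywords are invented
# for illustration and are not part of the described system.
EXPERT_KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "Financial Expert": ("revenue", "earnings", "stock"),
    "Holiday Expert": ("holiday", "vacation", "time off"),
    "NVHelp Expert": ("laptop", "vpn", "password"),
}

# Assumed fallback: the diagram does not specify a default expert.
DEFAULT_EXPERT = "NVINFO Expert"


def route(query: str) -> str:
    """Return the name of the expert module that should handle the query."""
    text = query.lower()
    for expert, keywords in EXPERT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return expert
    return DEFAULT_EXPERT
```

Under these assumptions, `route("When is the next company holiday?")` selects `Holiday Expert`, and a query that matches no keyword falls through to `NVINFO Expert`.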

### Detailed Analysis

- **Error Tracking Zones:** 4 distinct red dashed boxes isolate failure points in the pipeline:

  1. *Router Error*: Covers query routing and expert module selection

  2. *Query Rephrasal Error*: Covers query rephrasing step

  3. *Retriever Error*: Covers vector database retrieval

  4. *Answer Generation Error*: Covers embedding, reranking, and final answer generation

- **Model Types:** The system uses two model tiers:

  - Large judge model: `mixtral-8x22b NIM` (for quality validation)

  - Fine-tuned smaller, faster model (for low-latency response generation)

- **NeMo Microservices:** 4 core platform components enable optimization:

  - `NeMo Customizer`: Adapts models using feedback

  - `NeMo Datastore`: Stores training/feedback data

  - `NeMo Evaluator`: Validates model performance

  - `NeMo Deployment Manager`: Orchestrates model updates
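One way to read the four error tracking zones is as labels attached to failures at each pipeline stage. The sketch below shows that idea in plain Python, under the assumption that each stage is a callable and that a failure is reported with the name of its zone. The zone names come from the diagram; `ErrorZone`, `PipelineError`, `run_pipeline`, and the stub stages are hypothetical and do not correspond to any NeMo API.

```python
from enum import Enum
from typing import Callable, List, Tuple


class ErrorZone(Enum):
    """The four red dashed boxes named in the diagram."""
    ROUTER = "Router Error"
    REPHRASAL = "Query Rephrasal Error"
    RETRIEVER = "Retriever Error"
    ANSWER_GENERATION = "Answer Generation Error"


class PipelineError(Exception):
    """Wraps a stage failure with the zone it occurred in."""

    def __init__(self, zone: ErrorZone, cause: Exception):
        super().__init__(f"{zone.value}: {cause}")
        self.zone = zone
        self.cause = cause


Stage = Tuple[ErrorZone, Callable[[object], object]]


def run_pipeline(query: str, stages: List[Stage]) -> object:
    """Run the stages in order, tagging any exception with its zone."""
    value: object = query
    for zone, stage in stages:
        try:
            value = stage(value)
        except Exception as exc:
            raise PipelineError(zone, exc) from exc
    return value


def _failing_retriever(queries: object) -> object:
    # Stub that simulates a vector database outage.
    raise TimeoutError("vector DB timed out")


# Stub stages for demonstration only; real stages would call the services.
DEMO_STAGES: List[Stage] = [
    (ErrorZone.ROUTER, lambda q: ("Holiday Expert", q)),
    (ErrorZone.REPHRASAL, lambda routed: [routed[1], routed[1].lower()]),
    (ErrorZone.RETRIEVER, _failing_retriever),
    (ErrorZone.ANSWER_GENERATION, lambda docs: "answer"),
]
```

Calling `run_pipeline("When is the next holiday?", DEMO_STAGES)` raises a `PipelineError` whose `zone` is `ErrorZone.RETRIEVER`, which is the kind of per-stage attribution the dashed boxes suggest.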

### Key Observations

1. The system uses domain-specific expert routing to improve query response accuracy for specialized use cases (finance, holidays, NVINFO, and NVHelp support)

2. Error monitoring is granular, with dedicated zones for each pipeline stage to simplify debugging

3. A closed-loop data flywheel combines user feedback, SME input, and model logs to drive continuous model improvement

4. Safety/compliance is prioritized with `NeMo Guardrails` at the user entry point

5. The architecture balances speed (fine-tuned small model) and quality (LLM-as-a-Judge validation)
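The data flywheel in observation 3 can be sketched as a data-preparation step plus a retraining trigger. The diagram only shows that logs, user feedback, and SME input reach `NeMo Customizer`; how they are combined is not specified. Everything below is therefore an assumption: the `Interaction` fields, the rule that an SME correction overrides the logged response, and the `0.8` threshold are illustrative, and none of these names are NeMo APIs.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Interaction:
    """One logged exchange (hypothetical schema)."""
    query: str
    response: str
    thumbs_up: Optional[bool] = None       # user feedback, if given
    sme_correction: Optional[str] = None   # SME-provided answer, if any


def build_training_examples(logs: List[Interaction]) -> List[Dict[str, str]]:
    """Keep interactions that carry a usable label.

    Assumed policy: an SME correction takes priority over the logged
    response; otherwise only thumbs-up responses are kept.
    """
    examples: List[Dict[str, str]] = []
    for item in logs:
        if item.sme_correction is not None:
            examples.append({"prompt": item.query, "completion": item.sme_correction})
        elif item.thumbs_up:
            examples.append({"prompt": item.query, "completion": item.response})
    return examples


def should_customize(eval_score: float, threshold: float = 0.8) -> bool:
    """Trigger another customization round when the score drops below the threshold."""
    return eval_score < threshold
```

In this sketch, interactions with neither positive feedback nor an SME correction are dropped, so only labeled data would be passed on for customization.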

### Interpretation

This diagram represents an AI assistant architecture aimed at enterprise support workflows. The modular design lets additional domain-specific experts be placed behind the router, while the segmented error zones let failures be attributed to a specific pipeline stage. The data flywheel is intended to improve the system over time by feeding user feedback, SME input, and model logs back into customization. Pairing a large judge model with a fine-tuned smaller model trades off quality against latency, which suits high-volume support environments. Together, these elements target three common challenges in AI assistant deployment: domain specialization, reliability, and continuous learning.