## Diagram: Meta-Learning Parameter Adaptation Flow

### Overview

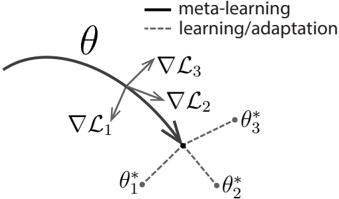

This image is a conceptual diagram illustrating the process of meta-learning and subsequent task-specific adaptation. It visually represents how a meta-learned parameter (θ) serves as a starting point for learning or adapting to multiple distinct tasks, each resulting in a specialized parameter set (θ*).

### Components/Axes

* **Legend (Top-Right):**

    * **Solid Line (—):** Labeled "meta-learning"

    * **Dashed Line (---):** Labeled "learning/adaptation"

* **Primary Elements:**

    * A solid, curved line labeled **θ** (theta), representing the meta-learned parameter or model state.

    * Three gradient symbols: **∇L₁**, **∇L₂**, **∇L₃** (nabla L sub 1, 2, 3). These represent the gradients of loss functions for three different tasks.

    * Three dashed lines originating from the end of the solid θ curve, each leading to a distinct point.

    * Three endpoint labels: **θ*₁**, **θ*₂**, **θ*₃** (theta star sub 1, 2, 3). These represent the task-specific parameters after adaptation.

* **Spatial Layout:**

    * The solid **θ** curve flows from the upper-left towards the center.

    * The gradient symbols (**∇L₁**, **∇L₂**, **∇L₃**) are positioned around the terminus of the θ curve, with arrows pointing from the curve towards them.

    * The dashed adaptation lines diverge from a common point at the end of θ, spreading out towards the bottom-right.

    * The final parameters (**θ*₁**, **θ*₂**, **θ*₃**) are located at the ends of their respective dashed lines, forming a fan-like pattern.

### Detailed Analysis

The diagram depicts a two-phase process:

1. **Meta-Learning Phase (Solid Line):** A single, shared parameter trajectory (θ) is learned. This represents the acquisition of general knowledge or a model initialization that is good for rapid adaptation.

2. **Learning/Adaptation Phase (Dashed Lines):** From the meta-learned state θ, the model adapts to specific tasks. This is shown by:

    * The computation of task-specific gradients (∇L₁, ∇L₂, ∇L₃).

    * The subsequent parameter updates along dashed paths, leading to distinct, optimized parameters for each task (θ*₁, θ*₂, θ*₃).

The arrows from the θ curve to the gradient symbols indicate that these gradients are derived from the current state of θ with respect to each task's loss function (L₁, L₂, L₃).
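The adaptation phase described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not something the diagram specifies: each task is given a toy quadratic loss L_i(θ) = ½‖θ − c_i‖², adaptation is assumed to be plain gradient descent (θ*_i = θ − α∇L_i(θ) for a single step), and the names `grad_loss`, `adapt`, `task_centers`, and the step size 0.5 are all hypothetical.

```python
# Toy setup (assumed, not from the diagram): task i has the quadratic loss
# L_i(theta) = 0.5 * ||theta - c_i||^2, so its gradient is theta - c_i.

def grad_loss(theta, center):
    """Gradient of 0.5 * ||theta - center||^2 with respect to theta."""
    return [t - c for t, c in zip(theta, center)]

def adapt(theta, center, step_size=0.5, num_steps=1):
    """Run gradient-descent steps on one task, starting from the shared theta."""
    adapted = list(theta)  # copy so the shared starting point is not modified
    for _ in range(num_steps):
        g = grad_loss(adapted, center)
        adapted = [a - step_size * gi for a, gi in zip(adapted, g)]
    return adapted

theta = [0.0, 0.0]  # shared meta-learned starting point (assumed value)
task_centers = {1: [2.0, 0.0], 2: [0.0, 2.0], 3: [-2.0, -2.0]}  # assumed task optima

# One adaptation path per task, all starting from the same theta.
adapted_params = {i: adapt(theta, c) for i, c in task_centers.items()}
for i, params in adapted_params.items():
    print(f"theta*_{i} = {params}")
```

Each call to `adapt` starts from the same `theta` and ends at a different point, which mirrors the fan of dashed lines in the figure.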

### Key Observations

* **Common Origin, Divergent Paths:** All task-specific parameters (θ*₁, θ*₂, θ*₃) originate from the same meta-learned point (θ), but follow separate adaptation paths.

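* **Gradient Direction:** The arrows labeled ∇L point away from the main θ curve, one per task. They indicate the direction of each task-specific update. In standard gradient descent the step itself is taken along the negative gradient (−∇L), so the labels are best read as naming the quantity that drives each update rather than the literal step direction.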

* **Visual Metaphor:** The solid line can be read as a "highway" of general knowledge, and the dashed lines as "local roads" branching off to task-specific solutions.

### Interpretation

This diagram is a canonical representation of **meta-learning**, also called **learning-to-learn**. It illustrates the core hypothesis that a model can learn a parameter initialization (θ) that need not be optimal for any single task but is easy to **adapt**. The value of θ is that it lies in a region of parameter space from which a few small, task-specific updates (driven by ∇L₁, ∇L₂, ∇L₃) lead to high-performing specialized models (θ*₁, θ*₂, θ*₃).

The separation into solid and dashed lines emphasizes the distinction between the slow, aggregated learning of the meta-parameters (across many tasks) and the fast, individual adaptation to new tasks. The diagram suggests efficiency: instead of learning three separate models from scratch, one learns a single, flexible starting point. This is foundational for fields like few-shot learning, where the goal is to perform well on new tasks with very little data by leveraging this meta-learned adaptability.
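The split between slow meta-learning and fast adaptation can be sketched as two nested loops. The diagram does not name a specific algorithm, so the following is an assumption-laden toy: it uses quadratic task losses L_i(θ) = ½‖θ − c_i‖² and a first-order approximation of the meta-gradient (the task gradient is evaluated at the adapted parameters, and the dependence of the adapted parameters on θ is ignored). All names (`meta_train`, `adapt`, `task_centers`) and all learning rates are hypothetical.

```python
# Toy setup (assumed, not from the diagram): task i has the loss
# L_i(theta) = 0.5 * ||theta - c_i||^2, whose gradient is theta - c_i.

def loss(theta, center):
    return 0.5 * sum((t - c) ** 2 for t, c in zip(theta, center))

def grad_loss(theta, center):
    return [t - c for t, c in zip(theta, center)]

def adapt(theta, center, inner_lr=0.5, inner_steps=1):
    """Fast phase (dashed lines): a few descent steps on a single task."""
    adapted = list(theta)
    for _ in range(inner_steps):
        g = grad_loss(adapted, center)
        adapted = [a - inner_lr * gi for a, gi in zip(adapted, g)]
    return adapted

def meta_train(task_centers, theta_init, meta_lr=0.1, iterations=500):
    """Slow phase (solid line): update the shared theta using all tasks."""
    theta = list(theta_init)
    num_tasks = len(task_centers)
    for _ in range(iterations):
        meta_grad = [0.0] * len(theta)
        for center in task_centers:
            adapted = adapt(theta, center)
            # First-order approximation: take the task gradient at the adapted
            # point and ignore how that point itself depends on theta.
            g = grad_loss(adapted, center)
            meta_grad = [m + gi / num_tasks for m, gi in zip(meta_grad, g)]
        theta = [t - meta_lr * m for t, m in zip(theta, meta_grad)]
    return theta

task_centers = [[2.0, 0.0], [0.0, 2.0], [-2.0, -2.0]]  # assumed task optima
theta = meta_train(task_centers, theta_init=[5.0, 5.0])

for i, center in enumerate(task_centers, start=1):
    adapted = adapt(theta, center)
    print(f"task {i}: loss at theta = {loss(theta, center):.3f}, "
          f"loss after one adaptation step = {loss(adapted, center):.3f}")
```

In this toy setup the shared `theta` settles near the average of the three assumed task optima. That point is not the minimizer of any single task's loss, yet one adaptation step from it lowers every task's loss, which is the behavior the diagram is meant to convey.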