
## Diagram: Federated Learning Process

### Overview

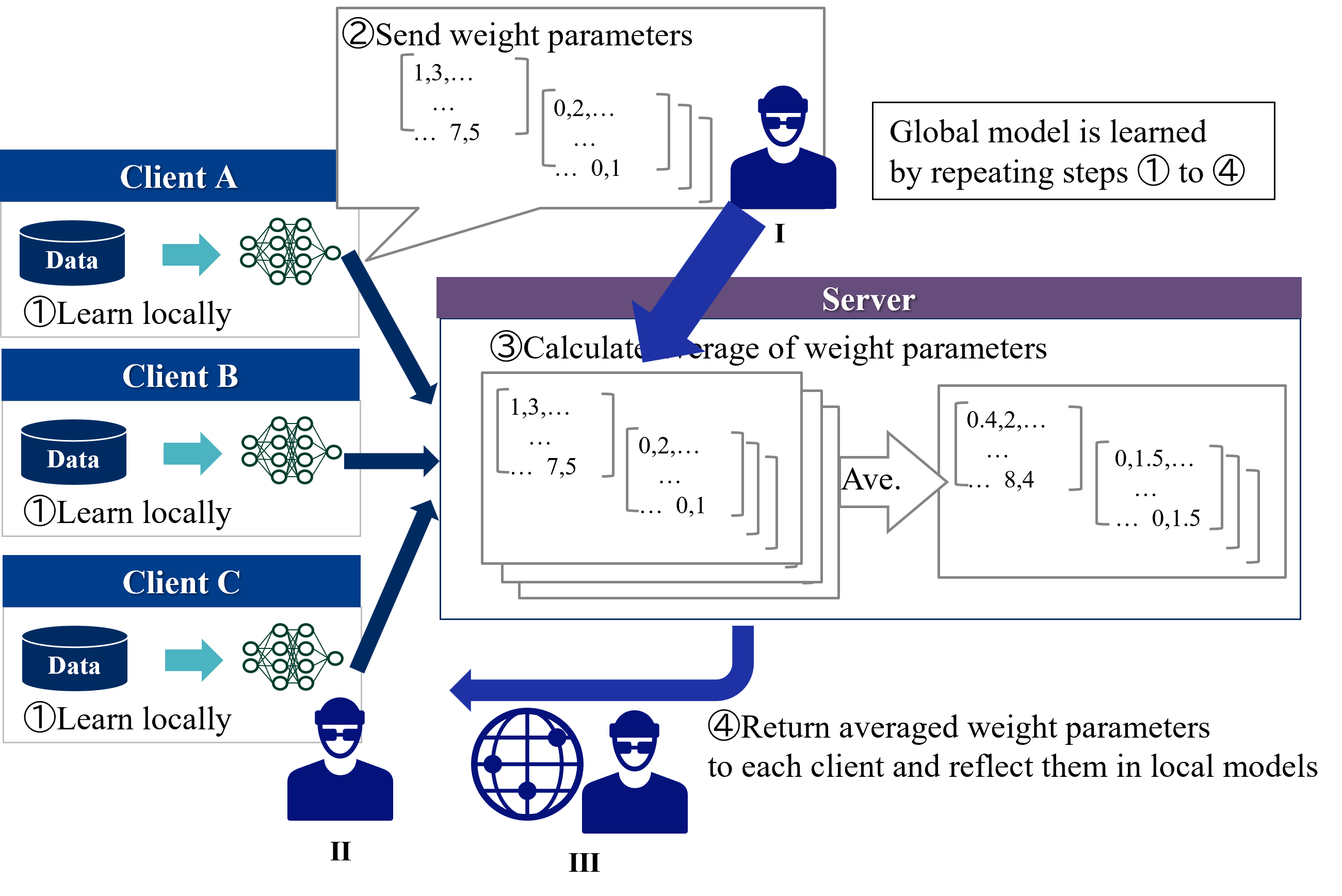

This diagram illustrates the process of Federated Learning, where a global model is trained across multiple clients without directly exchanging data. The diagram depicts three clients (A, B, and C) learning locally from their respective data and then communicating weight parameters to a central server for aggregation. The server then returns the averaged weight parameters to the clients, updating their local models. This process is repeated iteratively to improve the global model.

### Components/Axes

The diagram consists of three client nodes (Client A, Client B, Client C), a central server node, and directional arrows indicating the flow of information. Each client has a "Data" input and a "Learn locally" process represented by a brain icon. The server has a "Calculate average of weight parameters" process and a "Return averaged weight parameters" process. Numbered steps (①, ②, ③, ④) guide the process flow. There are also three user icons labeled I, II, and III.

### Detailed Analysis or Content Details

The diagram shows the following steps:

**① Learn locally:** Each client (A, B, and C) independently learns from its local data. This is represented by the "Data" input and the brain icon.

**② Send weight parameters:** Each client sends its learned weight parameters to the server. The diagram shows each client's parameters as a bracketed matrix with elided entries:

* Client A: `[1.3, ... 7.5 ... 0.2, ... 0.1]`

* Client B: `[1.3, ... 7.5 ... 0.2, ... 0.1]`

* Client C: `[1.3, ... 7.5 ... 0.2, ... 0.1]`

**③ Calculate average of weight parameters:** The server calculates the average of the received weight parameters. The averaged weight parameters are represented as matrices:

* Averaged: `[0.4,2, ... 8.4 ... 0.15, ... 0.15]`

**④ Return averaged weight parameters:** The server returns the averaged weight parameters to each client, and each client updates its local model with them.

The diagram also shows a global model being learned by repeating steps ① to ④.
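Steps ① to ④ can be sketched in a few lines of Python. This is a minimal illustration, not the diagram's actual implementation: the linear model, the gradient-descent update, the function names (`learn_locally`, `average_weights`, `federated_round`), the learning rate, and the toy datasets are all assumptions introduced here. Only the unweighted element-wise average in step ③ is taken from the diagram's labels.

```python
from typing import List, Sequence, Tuple

Weights = List[float]
# One training example: (feature vector, target value). Hypothetical data format.
Example = Tuple[Sequence[float], float]


def learn_locally(weights: Weights, data: List[Example],
                  lr: float = 0.1, epochs: int = 5) -> Weights:
    """Step 1: a client refines the weights on its own data only.

    Assumes a linear model y = w . x trained by per-example gradient descent.
    """
    w = list(weights)  # copy so the caller's weights are not modified
    for _ in range(epochs):
        for x, y in data:
            prediction = sum(wi * xi for wi, xi in zip(w, x))
            error = prediction - y
            w = [wi - lr * error * xi for wi, xi in zip(w, x)]
    return w


def average_weights(client_weights: List[Weights]) -> Weights:
    """Step 3: the server takes the element-wise mean of the received weights."""
    n = len(client_weights)
    return [sum(values) / n for values in zip(*client_weights)]


def federated_round(global_weights: Weights,
                    client_datasets: List[List[Example]]) -> Weights:
    """One pass through steps 1 to 4. Raw data never leaves a client."""
    # Steps 1 and 2: each client trains locally and sends back only its weights.
    received = [learn_locally(global_weights, data) for data in client_datasets]
    # Step 3: the server averages; step 4: the result is returned to the clients.
    return average_weights(received)


# Toy datasets for clients A, B, and C, all generated from weights [2.0, -1.0].
client_datasets = [
    [((1.0, 0.0), 2.0), ((0.0, 1.0), -1.0)],   # Client A
    [((1.0, 1.0), 1.0), ((2.0, 0.0), 4.0)],    # Client B
    [((0.0, 2.0), -2.0), ((1.0, -1.0), 3.0)],  # Client C
]

global_weights = [0.0, 0.0]
for _ in range(50):  # repeat steps 1 to 4
    global_weights = federated_round(global_weights, client_datasets)

print(global_weights)  # close to [2.0, -1.0], the weights behind the toy data
```

Each call to `federated_round` starts every client from the same global weights, which mirrors step ④ feeding the averaged parameters back into the next round of local learning.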

The user icons represent:

* I: Located on the server, near the "Global model is learned" text.

* II: Located near the bottom-left, connected to Client C.

* III: Located near the bottom-right, connected to the server.

### Key Observations

The weight parameter matrices shown for Clients A, B, and C are identical. Because the element-wise average of identical matrices is the same matrix, the averaged values in the diagram (e.g., 8.4 where the clients show 7.5, and 0.15 where they show 0.1) cannot be derived from the client values shown. The numbers are therefore most likely illustrative placeholders rather than a worked example. The diagram emphasizes the decentralized nature of the learning process, with data remaining on the client devices.
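For reference, the averaging in step ③ can be written out explicitly. The symbols $w_A$, $w_B$, $w_C$ (the three clients' weight parameters) and $\bar{w}$ (the averaged parameters) are introduced here and do not appear in the diagram, and an unweighted mean is assumed from the label "Calculate average of weight parameters":

$$
\bar{w} = \frac{1}{3}\left(w_A + w_B + w_C\right)
$$

In the special case $w_A = w_B = w_C = w$, this reduces to $\bar{w} = \frac{1}{3}(3w) = w$, so averaging identical parameters returns them unchanged.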

### Interpretation

This diagram illustrates the core idea of Federated Learning, a privacy-preserving machine learning technique. Raw data stays on each client and only model updates (weight parameters) are shared, which reduces the risk of data breaches and privacy violations. The server acts as a central coordinator that aggregates what each client has learned into a single global model, and repeating local learning followed by global aggregation lets that model improve over time without the data leaving the clients.

The numeric values in the figure are best read as illustrative rather than as a worked calculation. The user icons (I, II, and III) likely represent the individuals or entities involved in the process, such as the clients and the server administrator. The diagram is a simplified representation: real-world Federated Learning systems often involve more complex mechanisms for handling data heterogeneity, communication constraints, and security considerations.