## [Diagram]: Two-Step Process for Generating SK-Tuning Training Samples

### Overview

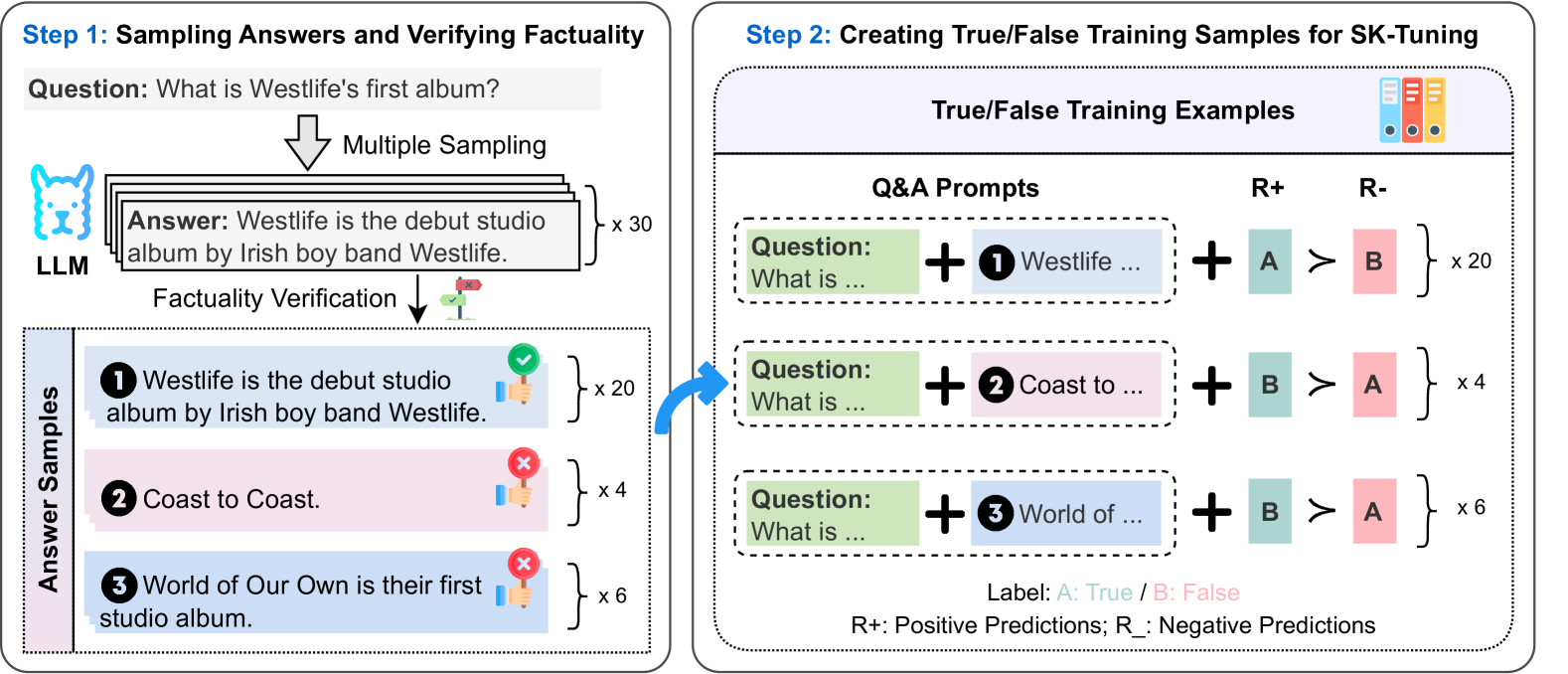

The image is a flowchart illustrating a two-step pipeline for creating labeled training data for **SK-Tuning** (a fine-tuning method). Step 1 involves sampling answers from a Large Language Model (LLM) and verifying their factuality. Step 2 uses these verified answers to generate true/false training examples.

### Components/Axes (Diagram Elements)

The diagram is divided into two main sections:

#### Step 1: Sampling Answers and Verifying Factuality

- **Question**: *"What is Westlife's first album?"* (top-left, gray box).

- **LLM (Large Language Model)**: Represented by a blue dog icon (left side).

- **Multiple Sampling**: An arrow from the question to the LLM, labeled *"Multiple Sampling"*. The LLM generates **30 answer samples** (labeled *"x 30"*).

- **Answer Samples** (bottom-left, vertical list):

  1. **Answer 1 (Correct)**: *"Westlife is the debut studio album by Irish boy band Westlife."* (green checkmark, *"x 20"* – 20 samples).

  2. **Answer 2 (Incorrect)**: *"Coast to Coast."* (red cross, *"x 4"* – 4 samples).

  3. **Answer 3 (Incorrect)**: *"World of Our Own is their first studio album."* (red cross, *"x 6"* – 6 samples).

- **Factuality Verification**: An arrow from the LLM’s answers to the answer samples, labeled *"Factuality Verification"* (with a check/cross icon: green check for correct, red cross for incorrect).
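
Step 1 can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 30 samples are written out from the counts in the diagram rather than drawn from a model, and `is_factual` is a hypothetical stand-in, since the diagram does not say how factuality is verified.

```python
from collections import Counter

# The 30 sampled answers, reconstructed from the counts shown in the diagram.
samples = (
    ["Westlife is the debut studio album by Irish boy band Westlife."] * 20
    + ["Coast to Coast."] * 4
    + ["World of Our Own is their first studio album."] * 6
)


def is_factual(answer: str) -> bool:
    """Hypothetical verifier (an assumption, not the method in the diagram).

    An answer counts as correct if it names "Westlife" as the debut album.
    """
    return answer.startswith("Westlife is the debut")


# Group identical answers, most frequent first, and attach a verdict to each.
tally = [
    (answer, count, is_factual(answer))
    for answer, count in Counter(samples).most_common()
]

n_correct = sum(count for _, count, ok in tally if ok)
n_incorrect = sum(count for _, count, ok in tally if not ok)

for answer, count, ok in tally:
    print(f"{'correct' if ok else 'incorrect':9s} x {count:2d}  {answer}")
```

Grouping identical answers before verification means each distinct answer only needs to be checked once, while its count is kept for Step 2.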

#### Step 2: Creating True/False Training Samples for SK-Tuning

- **True/False Training Examples** (right side, table-like structure):

  - **Q&A Prompts**: Each row has a *"Question: What is ..."* (green box) + a numbered answer (1, 2, 3) from Step 1.

  - **R+ (Positive Predictions)**: Green box with *"A"* (labeled *"True"* in the legend).

  - **R- (Negative Predictions)**: Red box with *"B"* (labeled *"False"* in the legend).

  - **Counts**:

    - Row 1 (Answer 1): *"x 20"* (matches Answer 1’s count).

    - Row 2 (Answer 2): *"x 4"* (matches Answer 2’s count).

    - Row 3 (Answer 3): *"x 6"* (matches Answer 3’s count).

- **Legend** (bottom-right): *"Label: A: True / B: False"* and *"R+: Positive Predictions; R-: Negative Predictions"*.
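
One way to picture a single row of the Step 2 table is as a small record holding the prompt and the two labels. The sketch below is hypothetical: the class name `TrueFalseExample`, the helper `make_example`, and the exact prompt layout are assumptions, since the diagram only shows *"Question: What is ..."* followed by a numbered answer.

```python
from dataclasses import dataclass

# Label legend from the diagram: A means True, B means False.
LABELS = {"A": "True", "B": "False"}


@dataclass(frozen=True)
class TrueFalseExample:
    prompt: str   # question plus one sampled answer
    r_plus: str   # label used as the positive prediction ("A" or "B")
    r_minus: str  # label used as the negative prediction


def make_example(question: str, answer: str, is_correct: bool) -> TrueFalseExample:
    # Assumed prompt layout; the real template is not visible in the diagram.
    prompt = f"Question: {question}\nAnswer: {answer}"
    # A correct answer makes "A" (True) the positive prediction;
    # an incorrect answer makes "B" (False) the positive prediction.
    r_plus, r_minus = ("A", "B") if is_correct else ("B", "A")
    return TrueFalseExample(prompt, r_plus, r_minus)


example = make_example("What is Westlife's first album?", "Coast to Coast.", False)
print(example.r_plus, LABELS[example.r_plus])
```

Keeping the label legend (`A`/`B`) separate from the roles (`r_plus`/`r_minus`) mirrors the two parts of the diagram's legend.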

### Detailed Analysis

- **Step 1 Flow**: The question is fed into the LLM, which generates 30 answer samples. These samples are verified for factuality, resulting in 20 correct (Answer 1) and 10 incorrect (4 + 6) answers.

- **Step 2 Flow**: Each answer sample (correct/incorrect) is used to create a training example:

  - For the **correct answer** (Answer 1), the prompt is *"Question: What is ..." + "1 Westlife ..."* with R+ (A, True) and R- (B, False), repeated 20 times.

  - For the **incorrect answers** (Answers 2 and 3), the prompts use their respective answers, with R+ (B, False) and R- (A, True), repeated 4 and 6 times, respectively.

- **Color Coding**: In the training examples, green marks the R+ side and red marks the R- side. In the answer list, a green check marks a correct answer and a red cross marks an incorrect one.
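
The Step 2 flow described above amounts to repeating each verified answer as many times as it was sampled and flipping the labels for incorrect answers. A minimal sketch, assuming plain dictionaries as the row format (the field names and the `expand` helper are illustrative, not taken from the diagram):

```python
from collections import Counter

question = "What is Westlife's first album?"

# (answer text, number of samples, verdict from Step 1), as shown in the diagram.
verified = [
    ("Westlife is the debut studio album by Irish boy band Westlife.", 20, True),
    ("Coast to Coast.", 4, False),
    ("World of Our Own is their first studio album.", 6, False),
]


def expand(question, verified):
    """Turn verified answers into one training row per original sample."""
    rows = []
    for answer, count, is_correct in verified:
        # Correct answer: R+ is "A" (True). Incorrect answer: R+ is "B" (False).
        r_plus, r_minus = ("A", "B") if is_correct else ("B", "A")
        row = {
            "question": question,
            "answer": answer,
            "r_plus": r_plus,
            "r_minus": r_minus,
        }
        # Copy the row so that each repeated example is an independent dict.
        rows.extend(dict(row) for _ in range(count))
    return rows


rows = expand(question, verified)
target_counts = Counter(row["r_plus"] for row in rows)
print(len(rows), dict(target_counts))
```

With the counts from the diagram, this produces 30 rows, 20 with `A` as the positive prediction and 10 with `B`.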

### Key Observations

- The total number of training examples (20 + 4 + 6 = 30) matches the initial sampling count.

- The legend defines two separate things: the labels (*A = True*, *B = False*) and the roles (*R+* = positive prediction, *R-* = negative prediction). Which label fills the R+ role depends on whether the answer in the prompt is correct.

- The label distribution follows the factuality of the sampled answers rather than being balanced by design: here 20 examples have *True* as the positive prediction and 10 have *False*.

### Interpretation

This diagram outlines a method to generate labeled training data for fine-tuning an LLM (SK-Tuning) by:

1. Sampling multiple answers from the LLM.

2. Verifying their factuality (correct/incorrect).

3. Creating true/false examples to train the model to recognize factually accurate responses.

The correct answer (Answer 1) yields examples whose positive prediction is *True*, while the incorrect answers (Answers 2 and 3) yield examples whose positive prediction is *False*. The approach is intended to teach the model to judge whether its own answers are factually correct. Because each distinct answer is repeated as often as it was sampled (20, 4, and 6 times), the training set mirrors the model's own answer distribution for the question.