TECHNICAL ASSET FINGERPRINT

6d3f90981cb86a1381af7329



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Efficient Layer Set Suggestion and Parallel Candidate Evaluation

### Overview

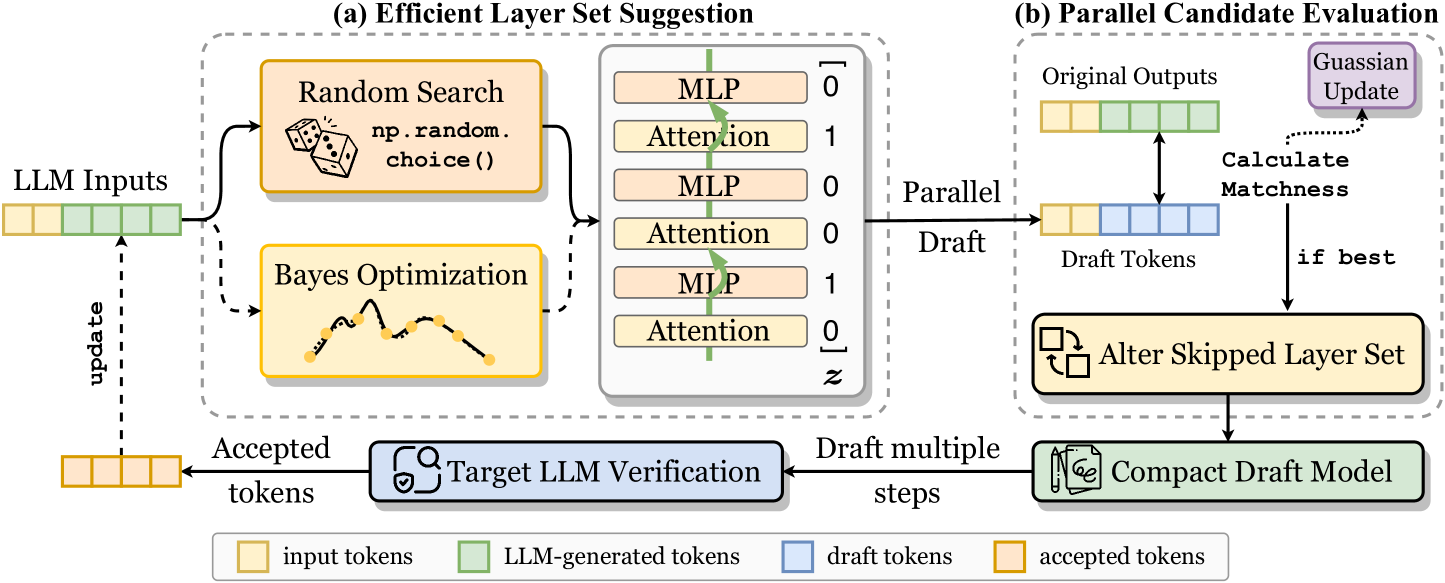

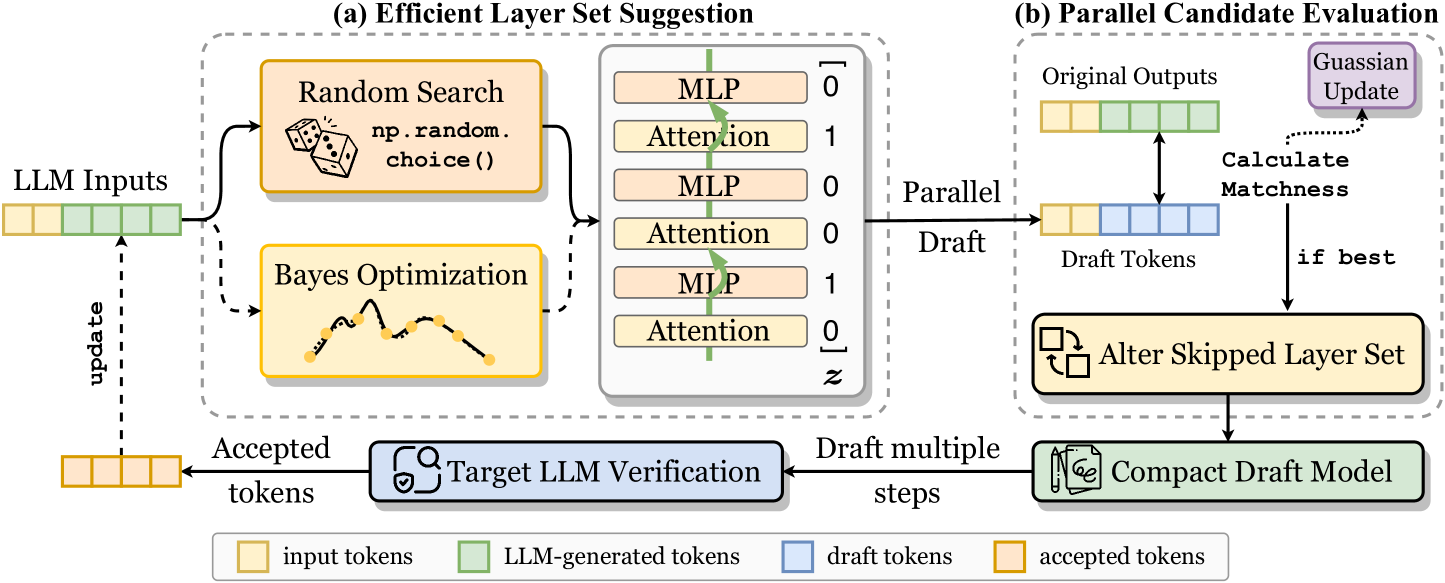

The image presents a diagram illustrating a system for efficient layer set suggestion and parallel candidate evaluation in the context of Large Language Models (LLMs). It is divided into two main parts: (a) Efficient Layer Set Suggestion and (b) Parallel Candidate Evaluation. The diagram outlines the flow of data and processes involved in generating and evaluating different layer configurations for an LLM.

### Components/Axes

**Overall Structure:** The diagram is split into two sections, (a) and (b), enclosed in dashed-line boxes.

**Section (a) - Efficient Layer Set Suggestion:**

* **LLM Inputs:** Represented by a series of alternating tan and green blocks, indicating input tokens and LLM-generated tokens.

* **Random Search:** A tan rounded rectangle containing the text "Random Search" and the code snippet "np.random.choice()", accompanied by a dice icon.

* **Bayes Optimization:** A tan rounded rectangle containing the text "Bayes Optimization" and a line graph with several data points.

* **Layer Set:** A vertical stack of alternating tan blocks labeled "MLP" and "Attention", with binary values (0 or 1) to their right, enclosed in square brackets. The bottom value is labeled 'z'.

* **Target LLM Verification:** A blue rounded rectangle containing the text "Target LLM Verification" and an icon resembling a target with a checkmark.

* **Arrows:** Solid and dashed arrows indicate the flow of data and control between components.

**Section (b) - Parallel Candidate Evaluation:**

* **Original Outputs:** A series of alternating tan and green blocks, representing original outputs.

* **Draft Tokens:** A series of blue blocks, representing draft tokens.

* **Calculate Matchness:** Text label indicating the calculation of matchness between original outputs and draft tokens.

* **Gaussian Update:** A purple rounded rectangle containing the text "Gaussian Update".

* **Alter Skipped Layer Set:** A tan rounded rectangle containing the text "Alter Skipped Layer Set" and an icon of two squares with rotating arrows.

* **Compact Draft Model:** A green rounded rectangle containing the text "Compact Draft Model" and an icon of a document with a pencil.

* **Arrows:** Solid and dotted arrows indicate the flow of data and control between components.

**Legend (Bottom):**

* Tan: input tokens

* Green: LLM-generated tokens

* Blue: draft tokens

* Tan: accepted tokens

### Detailed Analysis

**Section (a) - Efficient Layer Set Suggestion:**

1. **LLM Inputs:** A sequence of 5 blocks, alternating between tan (input tokens) and green (LLM-generated tokens).

2. **Random Search:** The "Random Search" block suggests a method for randomly selecting layer configurations. The "np.random.choice()" code snippet indicates the use of a random choice function, likely from the NumPy library.

3. **Bayes Optimization:** The "Bayes Optimization" block suggests an alternative method for selecting layer configurations using Bayesian optimization techniques. The line graph within the block likely represents the optimization process.

4. **Layer Set:** The stack of "MLP" and "Attention" layers represents one candidate layer configuration for the LLM. The binary value (0 or 1) next to each layer likely indicates whether that layer is included (1) or skipped (0) in that configuration. The 'z' at the bottom most plausibly names this binary vector as a whole rather than a single value.

* From top to bottom, the layers are: MLP (0), Attention (1), MLP (0), Attention (0), MLP (1), Attention (0).

5. **Flow:**

* The "LLM Inputs" feed into both "Random Search" and "Bayes Optimization".

* Both "Random Search" and "Bayes Optimization" contribute to the "Layer Set" configuration.

* The "Layer Set" is then passed to "Parallel Candidate Evaluation".

* "Target LLM Verification" receives "Draft multiple steps" from "Compact Draft Model" and outputs "Accepted tokens" which updates "LLM Inputs".
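The random-search half of the suggestion step can be sketched as follows. This is a minimal illustration, not the diagram's actual implementation: the diagram shows `np.random.choice()`, while this sketch swaps in Python's standard `random` module to stay dependency-free. The helper `suggest_layer_set`, the parameter `num_ones`, and the choice of two flagged layers (matching the example vector in the figure) are assumptions made for illustration.

```python
import random

# Layer order as read from the diagram, top to bottom.
LAYER_NAMES = ["MLP", "Attention", "MLP", "Attention", "MLP", "Attention"]


def suggest_layer_set(num_layers, num_ones, rng):
    """Return a binary list with exactly `num_ones` ones at random positions.

    Hypothetical helper: whether a 1 means "kept" or "skipped" is not
    confirmed by the source, so the sketch stays neutral about it.
    """
    flagged = set(rng.sample(range(num_layers), num_ones))
    return [1 if i in flagged else 0 for i in range(num_layers)]


rng = random.Random(0)  # seeded so repeated runs give the same candidates
candidates = [suggest_layer_set(len(LAYER_NAMES), 2, rng) for _ in range(4)]

for z in candidates:
    print(list(zip(LAYER_NAMES, z)))
```

Each candidate has the same shape as the figure's example vector, `[0, 1, 0, 0, 1, 0]`, and could be handed to the evaluation stage as one configuration to try.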

**Section (b) - Parallel Candidate Evaluation:**

1. **Original Outputs:** A sequence of 5 blocks, alternating between tan and green, representing the original outputs of the LLM.

2. **Draft Tokens:** A sequence of 5 blue blocks, representing the draft tokens generated by the current layer configuration.

3. **Calculate Matchness:** The "Calculate Matchness" step compares the "Original Outputs" and "Draft Tokens" to assess the quality of the draft.

4. **Gaussian Update:** The "Gaussian Update" block suggests that a Gaussian process is used to update the model based on the matchness score.

5. **Alter Skipped Layer Set:** If the draft is not the best, the "Alter Skipped Layer Set" block indicates that the layer configuration is modified.

6. **Compact Draft Model:** The "Compact Draft Model" block represents the final, optimized draft model.

7. **Flow:**

* "Original Outputs" and "Draft Tokens" are compared in "Calculate Matchness".

* "Calculate Matchness" feeds into "Gaussian Update".

* If the draft is not the best, "Calculate Matchness" feeds into "Alter Skipped Layer Set".

* "Alter Skipped Layer Set" feeds back into the "Draft Tokens".

* The "Draft Tokens" are passed to "Compact Draft Model".
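The comparison in "Calculate Matchness" and the "if best" selection can be sketched as below. The source does not define matchness, so this sketch assumes the simplest reading: the fraction of positions where a draft token equals the original output token. The function names `matchness` and `pick_best`, the token ids, and the candidate layer sets are all hypothetical.

```python
def matchness(original, draft):
    """Fraction of positions where the draft token equals the original token."""
    if len(original) != len(draft):
        raise ValueError("sequences must have the same length")
    if not original:
        return 0.0
    return sum(o == d for o, d in zip(original, draft)) / len(original)


def pick_best(original, drafts_by_layer_set):
    """Return the (layer_set, score) pair with the highest matchness."""
    best_set, best_score = None, -1.0
    for layer_set, draft in drafts_by_layer_set.items():
        score = matchness(original, draft)
        if score > best_score:
            best_set, best_score = layer_set, score
    return best_set, best_score


# Hypothetical token ids: one original sequence, two candidate drafts.
original = [11, 42, 7, 99, 5]
drafts = {
    (0, 1, 0, 0, 1, 0): [11, 42, 7, 3, 5],  # 4 of 5 positions match
    (1, 0, 0, 1, 0, 0): [11, 8, 7, 3, 2],   # 2 of 5 positions match
}

best_set, best_score = pick_best(original, drafts)
print(best_set, best_score)
```

Under this reading, a candidate replaces the current layer set only when its score beats the best seen so far; otherwise the set is altered and a new draft is produced.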

**Legend:** The legend at the bottom clarifies the color coding used for different types of tokens: input tokens (tan), LLM-generated tokens (green), draft tokens (blue), and accepted tokens (tan).

### Key Observations

* The diagram illustrates a closed-loop system for optimizing layer configurations in LLMs.

* Two methods, "Random Search" and "Bayes Optimization", are used to suggest layer configurations.

* Parallel candidate evaluation is performed to assess the quality of different layer configurations.

* The system incorporates a mechanism for updating the model based on the matchness score between original outputs and draft tokens.

### Interpretation

The diagram presents a method for efficiently exploring and optimizing the layer structure of Large Language Models. By using a combination of random search and Bayesian optimization, the system can generate a diverse set of layer configurations. The parallel candidate evaluation process allows for the simultaneous assessment of these configurations, enabling the system to quickly identify promising layer structures. The Gaussian update mechanism provides a means for refining the model based on the performance of different layer configurations. The closed-loop nature of the system allows for continuous improvement and adaptation of the LLM's layer structure. The use of draft tokens and the comparison with original outputs suggests a form of iterative refinement, where the model gradually improves its performance by exploring different layer configurations.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Efficient Layer Set Suggestion & Parallel Candidate Evaluation

### Overview

The image presents a diagram illustrating two processes: (a) Efficient Layer Set Suggestion and (b) Parallel Candidate Evaluation, both related to Large Language Model (LLM) optimization. The diagram depicts the flow of tokens through these processes, highlighting the interaction between random search, Bayesian optimization, and LLM verification, as well as the parallel evaluation of candidate models.

### Components/Axes

The diagram is divided into two main sections, labeled (a) and (b). Each section contains several components represented by boxes and arrows. A legend at the bottom of the image defines the color coding for different types of tokens:

* Yellow: input tokens

* Light Green: LLM-generated tokens

* Light Blue: draft tokens

* Light Yellow: accepted tokens

Section (a) includes:

* LLM Inputs: A block of yellow tokens.

* Random Search: A box with a dice icon and the text "np.random.choice()".

* Bayes Optimization: A box with a curve icon.

* MLP/Attention Layer Sets: A table listing layer configurations with associated binary values (0 or 1).

* Target LLM Verification: A box with a target icon.

* Accepted Tokens: A block of light yellow tokens.

Section (b) includes:

* Original Outputs: A block of light green tokens.

* Parallel Draft Tokens: A block of light blue tokens.

* Calculate Matchness: A box.

* Gaussian Update: A box.

* Alter Skipped Layer Set: A box with an edit icon.

* Compact Model: A box with a model icon.

* Draft multiple steps: A box.

Arrows indicate the flow of tokens and data between these components. Dashed arrows represent update signals.

### Detailed Analysis

**Section (a): Efficient Layer Set Suggestion**

* **LLM Inputs:** The process begins with a series of yellow "input tokens".

* **Random Search & Bayes Optimization:** These two methods operate in parallel, receiving input tokens and generating layer set suggestions.

* **Layer Sets:** The table within this section lists the following layer configurations and their binary values:

* MLP: 0

* Attention: 1

* MLP: 0

* Attention: 0

* MLP: 1

* Attention: 0

* **Target LLM Verification:** The generated layer sets are then sent to "Target LLM Verification".

* **Accepted Tokens:** Verified layer sets result in "accepted tokens" (light yellow), which are fed back into the "LLM Inputs" via a dashed "update" arrow.

**Section (b): Parallel Candidate Evaluation**

* **Original Outputs:** The process starts with "original outputs" (light green tokens).

* **Parallel Draft Tokens:** These outputs are used to generate "parallel draft tokens" (light blue).

* **Calculate Matchness:** The "matchness" between the original and draft tokens is calculated.

* **Gaussian Update:** The results of the matchness calculation are used to perform a "Gaussian Update".

* **Alter Skipped Layer Set:** Based on the update, the "skipped layer set" is altered.

* **Compact Model:** The altered layer set leads to a "compact model".

* **Draft multiple steps:** The process can be repeated in multiple steps.

* **Conditional Flow:** An "if best" arrow indicates that the process continues only if the current candidate is the best one seen so far.

### Key Observations

* The diagram highlights a closed-loop optimization process where layer sets are suggested, verified, and updated based on performance.

* The parallel evaluation of candidate models allows for efficient exploration of different layer configurations.

* The use of "draft tokens" suggests an iterative refinement process.

* The "Gaussian Update" implies a probabilistic approach to optimization.

* The diagram does not provide specific numerical data or performance metrics.
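The diagram does not say what the "Gaussian Update" computes. One plausible reading, offered only as an assumption, is a Bayesian update of a Gaussian belief about a layer set's matchness after each noisy measurement. The sketch below uses the standard normal-normal conjugate update; the function name `gaussian_update`, the prior, the noise variance, and the observed scores are all hypothetical.

```python
def gaussian_update(prior_mean, prior_var, observation, noise_var):
    """Posterior mean and variance of a Gaussian belief after one noisy observation."""
    precision = 1.0 / prior_var + 1.0 / noise_var
    post_var = 1.0 / precision
    post_mean = post_var * (prior_mean / prior_var + observation / noise_var)
    return post_mean, post_var


# Hypothetical prior belief about one layer set's matchness.
mean, var = 0.5, 0.25

# Each observed matchness score pulls the mean toward the data
# and shrinks the variance.
for observed in [0.8, 0.6, 0.8]:
    mean, var = gaussian_update(mean, var, observed, noise_var=0.25)
    print(round(mean, 4), round(var, 4))
```

A surrogate of this kind is what lets a Bayesian optimizer prefer configurations that look promising while still trying uncertain ones; a full Gaussian-process model would additionally share information between similar layer sets.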

### Interpretation

The diagram illustrates a method for efficiently optimizing LLMs by suggesting and evaluating different layer configurations. The combination of random search and Bayesian optimization allows for a balance between exploration and exploitation of the layer space. The parallel evaluation of candidate models accelerates the optimization process. The feedback loop, driven by the "Target LLM Verification" and "Gaussian Update", ensures that the model converges towards an optimal configuration. The use of different token colors clearly visualizes the flow of information and the transformation of data throughout the process. The diagram suggests a focus on model compression or efficiency, as indicated by the "Compact Model" component. The overall goal appears to be to find a layer set that maintains performance while reducing computational cost.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: LLM Layer Skipping Optimization Process

### Overview

The image is a technical flowchart illustrating a two-stage process for optimizing Large Language Model (LLM) inference by dynamically selecting which layers to skip. The system uses draft models and verification to accelerate generation while maintaining output quality. The diagram is divided into two main dashed-box sections: (a) Efficient Layer Set Suggestion and (b) Parallel Candidate Evaluation, connected by a feedback loop.

### Components/Axes

The diagram is a process flow with labeled boxes, arrows indicating data flow, and a legend.

**Main Sections:**

1. **Left Input:** "LLM Inputs" (a sequence of colored token blocks).

2. **Section (a) - Efficient Layer Set Suggestion:** A dashed box containing:

* Two optimization methods: "Random Search" (with an icon of dice and text `np.random.choice()`) and "Bayes Optimization" (with an icon of a sampled function).

* A layer selection matrix showing a stack of "MLP" and "Attention" layers, each associated with a binary value (0 or 1) in a column labeled `z`. The sequence from top to bottom is: MLP-0, Attention-1, MLP-0, Attention-0, MLP-1, Attention-0.

3. **Section (b) - Parallel Candidate Evaluation:** A dashed box containing:

* "Original Outputs" (a sequence of colored token blocks).

* "Draft Tokens" (a sequence of colored token blocks).

* A "Calculate Matchness" step comparing Original and Draft tokens.

* A "Gaussian Update" box.

* An "Alter Skipped Layer Set" box (with an icon of swapping squares).

* A "Compact Draft Model" box (with an icon of a document and pen).

4. **Feedback & Verification Loop:**

* "Target LLM Verification" box (with a shield/check icon).

* Arrows labeled "update", "Accepted tokens", and "Draft multiple steps".

5. **Legend (Bottom Center):** Defines the color coding for token types:

* Yellow box: "input tokens"

* Green box: "LLM-generated tokens"

* Blue box: "draft tokens"

* Orange box: "accepted tokens"

**Spatial Grounding:**

* The entire flow moves generally from left to right.

* Section (a) is on the left, feeding into Section (b) on the right via an arrow labeled "Parallel Draft".

* The "Target LLM Verification" is centered below the two main sections.

* The legend is positioned at the very bottom, centered horizontally.

### Detailed Analysis

**Process Flow:**

1. **Input:** "LLM Inputs" (yellow and green tokens) enter the system.

2. **Layer Suggestion (a):** The system uses either "Random Search" or "Bayes Optimization" to propose a set of layers to skip. This is represented by the binary vector `z` applied to the MLP and Attention layers. A value of `1` likely means the layer is active, `0` means it is skipped.

3. **Draft Generation:** The suggested layer set is used to create a "Parallel Draft" via a "Compact Draft Model". This generates "Draft Tokens" (blue).

4. **Evaluation (b):** The "Draft Tokens" are compared to the "Original Outputs" (green) to "Calculate Matchness".

5. **Optimization:** If the matchness is good ("if best"), the system performs a "Gaussian Update" and proceeds to "Alter Skipped Layer Set", refining the layer selection strategy.

6. **Verification & Acceptance:** The draft tokens undergo "Target LLM Verification". Tokens that pass are output as "Accepted tokens" (orange) and also fed back via an "update" arrow to inform future "LLM Inputs". The process can "Draft multiple steps" before verification.
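The verification step in the flow above can be illustrated with a greedy acceptance rule. This is a simplified sketch, assuming the target model's own token choice at every drafted position is already available (in practice obtained in a single forward pass). The function name `verify_draft` and the token ids are hypothetical, and the diagram does not specify which acceptance rule is used.

```python
def verify_draft(draft_tokens, target_tokens):
    """Greedy verification of a draft against the target model's tokens.

    Accepts the longest prefix of the draft that agrees with the target.
    At the first disagreement, the target's token is kept instead and the
    rest of the draft is discarded.
    """
    accepted = []
    for drafted, expected in zip(draft_tokens, target_tokens):
        if drafted != expected:
            accepted.append(expected)  # fall back to the target's token
            break
        accepted.append(drafted)
    return accepted


# Hypothetical token ids: the draft diverges at the third position.
draft = [5, 9, 2, 7]
target = [5, 9, 4, 1]
print(verify_draft(draft, target))  # [5, 9, 4]
```

Because every accepted token is one the target model would have produced anyway, the output matches what the full model generates on its own; the speedup comes from how many draft tokens survive each verification pass.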

**Token Color Coding (from Legend):**

* The initial "LLM Inputs" block contains 4 yellow (input) and 3 green (LLM-generated) tokens.

* The "Original Outputs" block contains 4 green tokens.

* The "Draft Tokens" block contains 4 blue tokens.

* The final "Accepted tokens" block contains 4 orange tokens.

### Key Observations

1. **Hybrid Optimization:** The system combines stochastic ("Random Search") and model-based ("Bayes Optimization") methods to find optimal layer skip patterns.

2. **Parallel Drafting:** The "Compact Draft Model" generates multiple draft tokens in parallel, which are then verified, suggesting a speculative decoding approach.

3. **Closed-Loop Learning:** The process is iterative. Results from verification ("Accepted tokens") and matchness calculation ("Gaussian Update") feed back to improve the layer suggestion and draft model.

4. **Binary Layer Control:** The core mechanism is a binary mask (`z`) applied to the transformer's MLP and Attention layers, enabling fine-grained control over the model's active computation path.
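The binary mask described above can be sketched as a forward pass that bypasses masked-out layers. The sketch assumes residual sub-layers, so a skipped layer reduces to the identity, and it follows this description's reading that `1` means active and `0` means skipped. The toy scalar "layers" and the function name `forward` are hypothetical stand-ins for real MLP and attention blocks.

```python
def forward(x, layers, z):
    """Apply each layer residually unless its mask bit is 0 (skipped)."""
    for layer, keep in zip(layers, z):
        if keep:
            x = x + layer(x)  # residual connection: skipping leaves x unchanged
    return x


# Toy stand-ins for six sub-layers: layer k simply contributes the constant k.
layers = [lambda x, k=k: k for k in (1, 2, 3, 4, 5, 6)]

z_draft = [0, 1, 0, 0, 1, 0]  # mask from the diagram: only layers 2 and 5 run
z_full = [1, 1, 1, 1, 1, 1]   # the full model runs every layer

print(forward(0, layers, z_draft))  # 7
print(forward(0, layers, z_full))   # 21
```

The draft and full models share the same layers and weights; only the mask differs, which is why no separate draft model has to be trained or stored under this reading.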

### Interpretation

This diagram depicts a sophisticated system for **accelerating LLM inference through dynamic layer skipping and speculative decoding**. The core idea is to use a smaller, faster "draft model" (which skips certain layers as per the suggested set) to generate candidate tokens quickly. These candidates are then verified in parallel by the full "target LLM". Only the accepted tokens are kept, ensuring output quality matches the full model.

The "Efficient Layer Set Suggestion" module is the brain of the operation, using optimization algorithms to learn which layers are most dispensable for a given input context, thereby maximizing speed without sacrificing accuracy. The "Parallel Candidate Evaluation" module is the engine, efficiently testing multiple draft hypotheses. The feedback loops create a self-improving system where the layer selection strategy and draft model are continuously refined based on verification outcomes.

**Significance:** This approach addresses the key trade-off in LLM deployment: speed versus quality. By intelligently skipping computation and verifying results, it promises significant reductions in latency and computational cost for applications like real-time chat, translation, and code generation, without degrading the user experience. The use of both random and Bayesian optimization suggests a robust strategy to avoid local minima in finding the optimal skip pattern.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Layer Optimization and Draft Model Evaluation Process

### Overview

The diagram illustrates a two-stage process for optimizing layer sets in a large language model (LLM) and evaluating draft models. It combines probabilistic search methods with parallel candidate evaluation, featuring explicit token tracking and model verification steps.

### Components/Axes

**Legend (bottom of diagram):**

- Orange: Input tokens

- Green: LLM-generated tokens

- Blue: Draft tokens

- Orange: Accepted tokens

**Key Components:**

1. **Efficient Layer Set Suggestion (Left Section)**

- LLM Inputs → Random Search (np.random.choice()) → Bayes Optimization (graph with optimization curve)

- Accepted tokens → Target LLM Verification (blue box with checkmark)

- Layer configuration visualization (MLP/Attention blocks with binary flags)

2. **Parallel Candidate Evaluation (Right Section)**

- Original Outputs → Parallel Draft (blue tokens)

- Calculate Matchness → Conditional Update (if best)

- Alter Skipped Layer Set → Compact Draft Model (green box)

- Gaussian Update (purple box with arrow from original outputs)

### Detailed Analysis

**Left Section Flow:**

1. Input tokens (orange) feed into dual optimization processes:

- Random Search (dice icon with "np.random.choice()")

- Bayes Optimization (graph showing optimization curve with yellow data points)

2. Accepted tokens (orange) from optimization feed into:

- Target LLM Verification (blue box with checkmark icon)

3. Layer configuration visualization shows:

- MLP blocks (orange) with binary flags (0/1)

- Attention blocks (yellow) with binary flags

- A `z` label (bottom right), which most plausibly names the vector of binary flags rather than an axis

**Right Section Flow:**

1. Original outputs (green tokens) split into:

- Parallel Draft (blue tokens)

- Gaussian Update (purple box)

2. Draft tokens flow through:

- Calculate Matchness (decision point)

- Conditional Update (if best)

3. Final output:

- Compact Draft Model (green box)

- Alter Skipped Layer Set (icon with bidirectional arrows)

### Key Observations

1. Token color consistency:

- Input/accepted tokens share orange color

- Draft tokens maintain blue throughout

- LLM-generated tokens use green

2. Optimization duality:

- Random search (purely stochastic) vs. Bayes optimization (model-based, guided by past evaluations)

3. Parallel processing:

- Simultaneous evaluation of multiple draft candidates

4. Model adaptation:

- Dynamic layer skipping based on matchness evaluation

5. Typographical correction:

- "Gaussian" instead of "Guassian" in update component

### Interpretation

This diagram represents a hybrid optimization framework combining:

1. **Layer Selection Optimization** (left):

- Uses probabilistic methods (random search) and Bayesian optimization to identify optimal layer configurations

- Verifies selections against target LLM performance

2. **Candidate Evaluation System** (right):

- Implements parallel processing of draft models

- Employs matchness calculation for quality assessment

- Features dynamic model adaptation through layer skipping

3. **Token Tracking System**:

- Color-coded token flow visualization enables monitoring of:

- Input preservation (orange)

- Draft generation (blue)

- Accepted modifications (orange)

- LLM-generated content (green)

The process suggests an iterative optimization approach where layer configurations are continuously refined through probabilistic search, parallel evaluation, and dynamic adaptation based on performance metrics. The color-coded token tracking system provides visual transparency into the model's processing pipeline, while the dual optimization methods balance exploration (random search) and exploitation (Bayes optimization) in layer selection.
