## Diagram: Efficient Layer Set Suggestion & Parallel Candidate Evaluation

### Overview

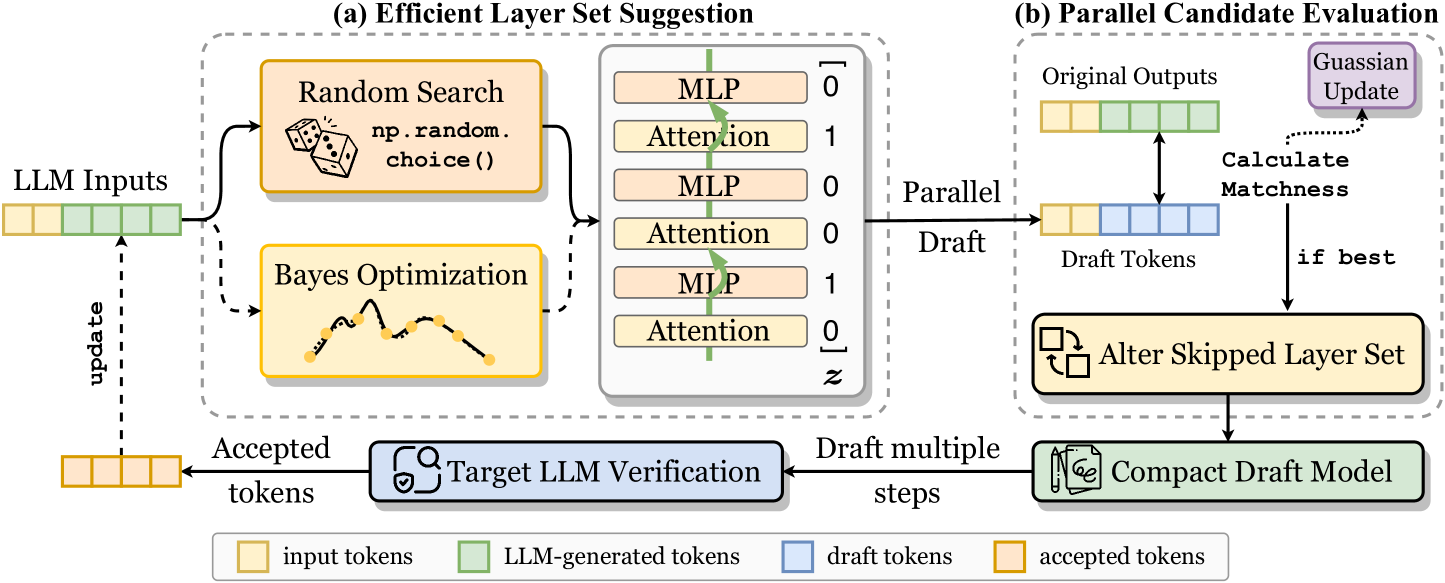

The figure shows two stages of a procedure for choosing which layers of a Large Language Model (LLM) to skip: (a) Efficient Layer Set Suggestion and (b) Parallel Candidate Evaluation. It traces the flow of tokens through both stages. In (a), random search, Bayesian optimization, and target-LLM verification interact. In (b), several candidate models are evaluated in parallel.

### Components/Axes

The diagram is divided into two main sections, labeled (a) and (b). Each section contains several components represented by boxes and arrows. A legend at the bottom of the image defines the color coding for different types of tokens:

* Yellow: input tokens

* Light Green: LLM-generated tokens

* Light Blue: draft tokens

* Light Yellow: accepted tokens

Section (a) includes:

* LLM Inputs: A block of yellow tokens.

* Random Search: A box with a dice icon and the text `np.random.choice()`.

* Bayes Optimization: A box with a curve icon.

* MLP/Attention Layer Sets: A table listing layer configurations with associated binary values (0 or 1).

* Target LLM Verification: A box with a target icon.

* Accepted Tokens: A block of light yellow tokens.
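The random-search box and the MLP/Attention table suggest that a layer set is a binary mask over sub-layers. The minimal sketch below rests on two assumptions not stated in the diagram: the mask holds one MLP and one attention entry per layer, in the table's order, and a 1 marks a skipped sub-layer. The function names `random_layer_set` and `describe` and the parameters `num_layers`, `num_skip`, and `seed` are hypothetical.

```python
from typing import List, Optional, Tuple

import numpy as np


def random_layer_set(num_layers: int, num_skip: int, seed: Optional[int] = None) -> np.ndarray:
    """Sample a binary skip mask with one MLP and one attention entry per layer.

    Assumed (hypothetical) layout: index 2*i is layer i's MLP sub-layer and
    index 2*i + 1 its attention sub-layer; a value of 1 means "skip".
    """
    total = 2 * num_layers
    if not 0 <= num_skip <= total:
        raise ValueError("num_skip must be between 0 and 2 * num_layers")
    rng = np.random.default_rng(seed)
    mask = np.zeros(total, dtype=np.int8)
    # Generator.choice is the modern counterpart of np.random.choice();
    # replace=False draws distinct sub-layer indices.
    skipped = rng.choice(total, size=num_skip, replace=False)
    mask[skipped] = 1
    return mask


def describe(mask: np.ndarray) -> List[Tuple[str, int]]:
    """Label each mask entry with its sub-layer type (MLP, Attention, MLP, ...)."""
    return [("MLP" if i % 2 == 0 else "Attention", int(v)) for i, v in enumerate(mask)]


mask = random_layer_set(num_layers=3, num_skip=2, seed=0)
print(describe(mask))
```

Sampling without replacement keeps the number of skipped sub-layers fixed, so each random suggestion has the same nominal compute budget.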

Section (b) includes:

* Original Outputs: A block of light green tokens.

* Parallel Draft Tokens: A block of light blue tokens.

* Calculate Matchness: A box.

* Gaussian Update: A box.

* Alter Skipped Layer Set: A box with an edit icon.

* Compact Model: A box with a model icon.

* Draft multiple steps: A box.

Arrows indicate the flow of tokens and data between these components. Dashed arrows represent update signals.

### Detailed Analysis

**Section (a): Efficient Layer Set Suggestion**

* **LLM Inputs:** The process begins with a series of yellow "input tokens".

* **Random Search & Bayes Optimization:** These two methods operate in parallel, receiving input tokens and generating layer set suggestions.

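* **Layer Sets:** The table in this section lists the following sub-layer entries and their binary values, in the order shown. Because section (b) refers to a "skipped layer set", a 1 most likely marks a sub-layer selected for skipping.

  * MLP: 0

  * Attention: 1

  * MLP: 0

  * Attention: 0

  * MLP: 1

  * Attention: 0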

* **Target LLM Verification:** The generated layer sets are then sent to "Target LLM Verification".

* **Accepted Tokens:** Verified layer sets result in "accepted tokens" (light yellow), which are fed back into the "LLM Inputs" via a dashed "update" arrow.
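The labels "draft tokens", "Target LLM Verification", and "accepted tokens" come from speculative decoding. In that scheme, a cheaper drafter proposes tokens and the target model keeps the longest prefix it agrees with. The minimal sketch below assumes greedy decoding. It also assumes the target model's argmax prediction at every draft position (plus one more position) is already available from a single forward pass; `target_predictions` and `verify_greedy` are hypothetical names, not from the diagram. Under those assumptions, verification reduces to a prefix comparison.

```python
from typing import List, Sequence


def verify_greedy(draft_tokens: Sequence[int], target_predictions: Sequence[int]) -> List[int]:
    """Return the tokens accepted in one greedy speculative-decoding step.

    target_predictions[i] is the target model's greedy next token given the
    context plus draft_tokens[:i]; it has one more entry than draft_tokens
    (the prediction that follows the full draft).
    """
    if len(target_predictions) != len(draft_tokens) + 1:
        raise ValueError("need one target prediction per draft position plus one")
    accepted: List[int] = []
    for draft, target in zip(draft_tokens, target_predictions):
        if draft != target:
            # First mismatch: keep the target's own token and stop.
            accepted.append(target)
            return accepted
        accepted.append(draft)
    # Every draft token matched, so the target's next prediction is kept too.
    accepted.append(target_predictions[-1])
    return accepted


print(verify_greedy([5, 7, 9], [5, 7, 2, 4]))  # [5, 7, 2]
print(verify_greedy([5, 7], [5, 7, 8]))        # [5, 7, 8]
```

Each step always yields at least one target-quality token. The better the drafter (here, presumably the layer-skipped model) agrees with the target, the more tokens are accepted per target forward pass.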

**Section (b): Parallel Candidate Evaluation**

* **Original Outputs:** The process starts with "original outputs" (light green tokens).

* **Parallel Draft Tokens:** These outputs are used to generate "parallel draft tokens" (light blue).

* **Calculate Matchness:** The "matchness" between the original and draft tokens is calculated.

* **Gaussian Update:** The results of the matchness calculation are used to perform a "Gaussian Update".

* **Alter Skipped Layer Set:** Based on the update, the "skipped layer set" is altered.

* **Compact Model:** The altered layer set leads to a "compact model".

* **Draft multiple steps:** The label indicates that drafting runs for multiple steps, rather than a single token, before candidates are compared.

* **Conditional Flow:** An "if best" arrow indicates that this path is followed only when the current candidate is the best so far.
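The diagram does not define "matchness". One plausible reading, assumed here purely for illustration, is the fraction of a candidate's draft tokens that agree with the original outputs before the first mismatch. Under greedy verification, that matching prefix is what would be accepted. The sketch below scores several candidate skip masks this way and keeps the best, mirroring the "if best" arrow. `matchness`, `select_best`, and the candidate dictionary are hypothetical.

```python
from typing import Hashable, Mapping, Optional, Sequence, Tuple


def matchness(original: Sequence[int], draft: Sequence[int]) -> float:
    """Fraction of draft tokens in the longest prefix that agrees with the original outputs."""
    if not draft:
        return 0.0
    matched = 0
    for o, d in zip(original, draft):
        if o != d:
            break
        matched += 1
    return matched / len(draft)


def select_best(
    original: Sequence[int], drafts_by_candidate: Mapping[Hashable, Sequence[int]]
) -> Tuple[Optional[Hashable], float]:
    """Score every candidate's parallel draft and return (best_candidate, best_score)."""
    best_key: Optional[Hashable] = None
    best_score = float("-inf")
    for key, draft in drafts_by_candidate.items():
        score = matchness(original, draft)
        if score > best_score:  # "if best": replace the incumbent only on strict improvement
            best_key, best_score = key, score
    return best_key, best_score


original = [11, 12, 13, 14]
drafts = {
    (0, 1, 0, 0, 1, 0): [11, 12, 99, 14],  # drafts from one hypothetical skip mask
    (1, 0, 0, 1, 0, 0): [11, 12, 13, 99],  # drafts from another
}
print(select_best(original, drafts))  # ((1, 0, 0, 1, 0, 0), 0.75)
```

A prefix-based score is used instead of position-wise agreement because tokens after the first mismatch would be discarded by verification anyway.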

### Key Observations

* The diagram highlights a closed-loop optimization process where layer sets are suggested, verified, and updated based on performance.

* The parallel evaluation of candidate models allows for efficient exploration of different layer configurations.

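* The "draft tokens", "Target LLM Verification", and "accepted tokens" labels match the terminology of speculative decoding, in which a cheaper draft model proposes tokens that the full (target) model then verifies. Here, the compact, layer-skipped model appears to act as the drafter.

* The "Gaussian Update" most likely refers to updating a Gaussian-process surrogate, the probabilistic model commonly used in Bayesian optimization. That would connect section (b) back to the Bayes Optimization box in section (a).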

* The diagram does not provide specific numerical data or performance metrics.
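Suppose "Gaussian Update" means refreshing a Gaussian-process surrogate, which is an assumption rather than something the diagram states. Then the step conditions the surrogate on newly observed (layer set, matchness) pairs, and an acquisition function picks the next layer set to try. The NumPy sketch below makes these illustrative choices, none taken from the source: an RBF kernel over binary masks, a constant prior mean equal to the average observed score, an upper-confidence-bound (UCB) acquisition, and the values of `length_scale`, `noise`, and `beta`.

```python
import numpy as np


def rbf_kernel(a: np.ndarray, b: np.ndarray, length_scale: float = 1.0) -> np.ndarray:
    """Squared-exponential kernel between every row of a and every row of b."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq_dist / length_scale**2)


def gp_posterior(x_train, y_train, x_query, length_scale=1.0, noise=1e-3):
    """Condition a GP on (mask, score) observations; return posterior mean and std at x_query."""
    x_train = np.asarray(x_train, dtype=float)
    x_query = np.asarray(x_query, dtype=float)
    y_train = np.asarray(y_train, dtype=float)
    y_mean = y_train.mean()  # constant prior mean
    k_tt = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train, length_scale)
    chol = np.linalg.cholesky(k_tt)  # k_tt = chol @ chol.T
    # alpha = k_tt^{-1} (y - y_mean), obtained by solving against the Cholesky factor twice
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - y_mean))
    mean = y_mean + k_qt @ alpha
    v = np.linalg.solve(chol, k_qt.T)
    var = 1.0 - (v**2).sum(axis=0)  # prior variance k(x, x) = 1 for the RBF kernel
    return mean, np.sqrt(np.clip(var, 0.0, None))


def suggest_next(x_train, y_train, candidates, beta=2.0):
    """Return the candidate mask with the highest upper confidence bound (mean + beta * std)."""
    candidates = np.asarray(candidates, dtype=float)
    mean, std = gp_posterior(x_train, y_train, candidates)
    return candidates[np.argmax(mean + beta * std)]


observed = np.array([[0, 1, 0, 0, 1, 0], [1, 0, 0, 1, 0, 0]])
scores = np.array([0.5, 0.75])  # hypothetical matchness values for the two masks
pool = np.array([[1, 0, 0, 1, 0, 0], [0, 0, 1, 1, 0, 0], [0, 0, 1, 0, 0, 1]])
print(suggest_next(observed, scores, pool))
```

UCB trades off exploitation (high posterior mean) against exploration (high posterior uncertainty). A large `beta` favors masks far from anything evaluated so far, which plays a role similar to the random-search branch.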

### Interpretation

The diagram illustrates a method for efficiently searching over LLM layer configurations, specifically which MLP and attention sub-layers to skip. Combining random search with Bayesian optimization balances exploration and exploitation of the layer space, and evaluating candidate models in parallel speeds up the search. The feedback loop, driven by "Target LLM Verification" and the "Gaussian Update", is meant to steer the search toward better configurations, although the diagram alone does not show whether, or how quickly, it converges. The token colors distinguish input tokens, LLM-generated outputs, draft tokens, and accepted tokens as they move through the process. The "Compact Model" component points to model compression or inference efficiency as the focus. The overall goal appears to be a layer set that preserves output quality while reducing computational cost.