## Diagram: Scaled Dot-Product Attention Mechanism

### Overview

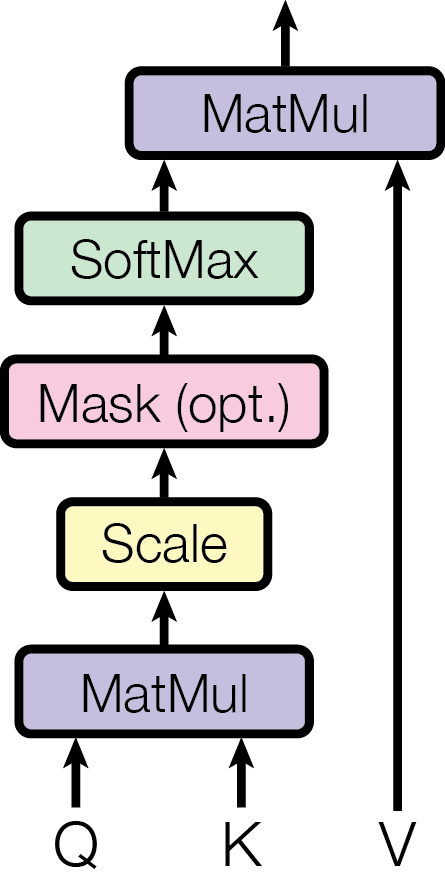

The image is a technical flowchart illustrating the computational steps of the scaled dot-product attention mechanism, a core component of transformer architectures in machine learning. It depicts a sequential data flow from inputs (Q, K, V) through several processing blocks to a final output.

### Components/Axes

The diagram consists of labeled rectangular blocks connected by directional arrows, indicating the flow of data. The components are arranged vertically from bottom to top.

**Inputs (Bottom of Diagram):**

* **Q**: Positioned at the bottom-left. Represents the Query matrix.

* **K**: Positioned at the bottom-center. Represents the Key matrix.

* **V**: Positioned at the bottom-right. Represents the Value matrix.

**Processing Blocks (from bottom to top):**

1. **MatMul** (Purple box): First matrix multiplication block. Receives inputs from **Q** and **K**.

2. **Scale** (Yellow box): Scaling operation block. Receives input from the first **MatMul**.

3. **Mask (opt.)** (Pink box): Optional masking operation block. Receives input from **Scale**.

4. **SoftMax** (Green box): Softmax normalization block. Receives input from **Mask (opt.)**.

5. **MatMul** (Purple box): Second matrix multiplication block. Receives input from **SoftMax** and directly from **V**.

**Output (Top of Diagram):**

* An upward-pointing arrow from the final **MatMul** block indicates the output of the attention mechanism.

### Detailed Analysis

The diagram explicitly details the sequence of operations for scaled dot-product attention:

1. **Initial Computation**: The Query (**Q**) and Key (**K**) matrices are combined in the first **MatMul** operation, which computes `QK^T`, the dot product of every query with every key. These are the raw (unnormalized) attention scores.

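2. **Scaling**: The result is passed to the **Scale** block. This typically involves dividing the scores by the square root of the key dimension (`√d_k`). Without this, the dot products grow in magnitude as `d_k` increases, pushing the softmax into saturated regions where gradients are very small.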

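3. **Optional Masking**: The scaled scores then pass through the **Mask (opt.)** block. This step is optional and is used to prevent attention to certain positions (e.g., future tokens in causal language modeling), typically by setting the masked scores to negative infinity (or a very large negative value) so that they receive zero or near-zero weight after the softmax.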

4. **Normalization**: The (potentially masked) scores are processed by the **SoftMax** block, which converts each row of scores (one row per query) into a probability distribution over the keys. These are the attention weights.

5. **Final Aggregation**: The attention weights from the **SoftMax** are multiplied with the Value (**V**) matrix in the final **MatMul** operation. This produces the weighted sum of values, which is the output of the attention layer.
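The five steps above can be sketched in NumPy. This is a minimal, unbatched illustration rather than a reference implementation: the function name, the argument shapes, and the boolean mask convention (`True` means "may attend") are assumptions made for the example, not details taken from the diagram.

```python
import numpy as np

def scaled_dot_product_attention(q, k, v, mask=None):
    """Sketch of the pipeline in the diagram.

    Assumed shapes: q (n_q, d_k), k (n_k, d_k), v (n_k, d_v).
    mask (assumed convention): optional boolean array (n_q, n_k),
    True where attention is allowed. Each row must allow at least
    one position, otherwise the softmax below is undefined.
    """
    d_k = q.shape[-1]
    scores = q @ k.T                                  # 1. MatMul: raw scores
    scores = scores / np.sqrt(d_k)                    # 2. Scale
    if mask is not None:                              # 3. Mask (opt.)
        scores = np.where(mask, scores, -np.inf)
    # 4. SoftMax over the key axis (max subtracted for numerical stability)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    output = weights @ v                              # 5. MatMul with V
    return output, weights

# Usage with small random inputs (sizes chosen arbitrarily)
rng = np.random.default_rng(0)
q = rng.normal(size=(2, 4))
k = rng.normal(size=(3, 4))
v = rng.normal(size=(3, 5))
output, weights = scaled_dot_product_attention(q, k, v)
print(output.shape)   # one output row per query, with the width of V
```

Note that `v` is touched only in the last line of the function body, mirroring the long arrow from **V** to the final **MatMul** in the diagram.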

**Spatial Grounding & Flow Verification:**

* The flow is strictly bottom-to-top, as indicated by the arrows.

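* The **V** input has a direct, long arrow bypassing the intermediate scaling/masking/softmax steps to connect only to the final **MatMul** block. This shows that **V** plays no part in computing the attention weights, which depend only on **Q** and **K**; **V** supplies only the content that those weights aggregate.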

* The two **MatMul** blocks are visually identical (purple) but perform different functions in the sequence: the first computes scores, the second applies weights to values.

### Key Observations

* **Modularity**: The diagram presents the mechanism as a clear pipeline of discrete, functional modules.

* **Optionality**: The "Mask (opt.)" label explicitly notes that this step is not always required, highlighting a configurable aspect of the architecture.

* **Color Coding**: Blocks are color-coded by function type (purple for matrix operations, yellow for scaling, pink for masking, green for normalization), aiding visual parsing.

* **Input Separation**: The three inputs (Q, K, V) are clearly labeled. **Q** and **K** enter together at the first **MatMul**, while **V** enters only at the final **MatMul**, emphasizing their separate roles.

### Interpretation

This diagram is a canonical representation of the scaled dot-product attention function, mathematically expressed as:

`Attention(Q, K, V) = softmax( (QK^T) / √d_k ) V`

It visually answers the question: "How do Query, Key, and Value matrices interact to produce a context-aware output?" The flow demonstrates how raw compatibility scores (`QK^T`) are refined through scaling and normalization to create attention weights, which then selectively aggregate information from the Value matrix. The optional mask component reveals the mechanism's adaptability for tasks like autoregressive generation, where future information must be hidden. This process allows a model to dynamically focus on relevant parts of an input sequence when producing each part of the output, and it is the core operation of the transformer architecture.
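As an illustration of the optional mask in the autoregressive case, the sketch below builds a causal (lower-triangular) mask and applies it before the softmax. The sequence length, dimension, and variable names are arbitrary choices for the example, and the boolean "True means visible" convention is an assumption; other implementations may use an additive mask instead.

```python
import numpy as np

n, d_k = 4, 8                       # hypothetical sequence length and key size
rng = np.random.default_rng(0)
q = rng.normal(size=(n, d_k))
k = rng.normal(size=(n, d_k))

# True where the key position is at or before the query position
causal_mask = np.tril(np.ones((n, n), dtype=bool))

scores = (q @ k.T) / np.sqrt(d_k)                  # MatMul + Scale
scores = np.where(causal_mask, scores, -np.inf)    # Mask: hide future positions
scores = scores - scores.max(axis=-1, keepdims=True)
weights = np.exp(scores)                           # exp(-inf) evaluates to 0
weights = weights / weights.sum(axis=-1, keepdims=True)

print(np.round(weights, 3))  # entries above the diagonal are zero
```

Because every row keeps its diagonal entry, no row is fully masked and the softmax stays well defined. The first position can attend only to itself, so its weight row is a single 1 followed by zeros.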