
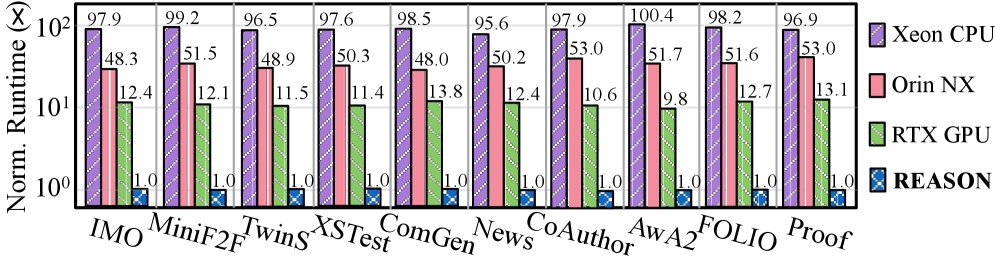

## Bar Chart: Normalized Runtime Comparison of Different Hardware

### Overview

This bar chart compares the normalized runtime (as a percentage, plotted on a logarithmic y-axis) of ten benchmark workloads (IMO, MiniF2F, Twins, XSTest, ComGen, News, CoAuthor, AwA2, FOLIO, Proof) across three hardware platforms: Xeon CPU, Orin NX, and RTX GPU. Each workload has one bar per platform, so the chart shows how runtime differs between the platforms for each workload.

### Components/Axes

* **X-axis:** Workloads - IMO, MiniF2F, Twins, XSTest, ComGen, News, CoAuthor, AwA2, FOLIO, Proof.

* **Y-axis:** Normalized Runtime (%), Logarithmic Scale. The scale ranges from 1 to 100 (10^0 to 10^2), with tick marks at 1, 10, and 100.

* **Legend:** Located in the top-right corner.
  * Xeon CPU (Blue)
  * Orin NX (Pink/Red)
  * RTX GPU (Green)
  * REASON (Dark Purple)

### Detailed Analysis

The chart consists of 10 groups of three bars each, one group per workload. The values are read from the label at the top of each bar. The legend also lists REASON, but no values for that series are recorded here.

| Workload | Xeon CPU (%) | Orin NX (%) | RTX GPU (%) |
| --- | --- | --- | --- |
| IMO | 97.9 | 48.3 | 12.4 |
| MiniF2F | 99.2 | 51.5 | 12.1 |
| Twins | 96.5 | 48.9 | 11.5 |
| XSTest | 97.6 | 50.3 | 11.4 |
| ComGen | 98.5 | 48.0 | 13.8 |
| News | 95.6 | 50.2 | 12.4 |
| CoAuthor | 97.9 | 53.0 | 10.6 |
| AwA2 | 100.4 | 51.7 | 9.8 |
| FOLIO | 98.2 | 51.6 | 12.7 |
| Proof | 96.9 | 53.0 | 13.1 |

**Trends:**

* The Xeon CPU has the highest normalized runtime in every group, between 95.6% and 100.4%.

* The Orin NX shows intermediate runtimes, typically ranging from 48% to 53%.

* The RTX GPU consistently demonstrates the lowest normalized runtime, generally between 9.8% and 13.8%.
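The ranges quoted in these trends can be checked directly against the listed bar values. The following is a minimal sketch; the list and function names (`XEON`, `ORIN`, `RTX`, `summarize`) are illustrative assumptions, and the numbers are transcribed from the values above in the order IMO, MiniF2F, Twins, XSTest, ComGen, News, CoAuthor, AwA2, FOLIO, Proof.

```python
from statistics import mean

# Normalized runtime (%) per workload, transcribed from the bar labels.
# Order: IMO, MiniF2F, Twins, XSTest, ComGen, News, CoAuthor, AwA2, FOLIO, Proof
XEON = [97.9, 99.2, 96.5, 97.6, 98.5, 95.6, 97.9, 100.4, 98.2, 96.9]
ORIN = [48.3, 51.5, 48.9, 50.3, 48.0, 50.2, 53.0, 51.7, 51.6, 53.0]
RTX = [12.4, 12.1, 11.5, 11.4, 13.8, 12.4, 10.6, 9.8, 12.7, 13.1]

SERIES = {"Xeon CPU": XEON, "Orin NX": ORIN, "RTX GPU": RTX}


def summarize(series):
    """Return {platform: (min, max, mean)} over all workloads."""
    return {name: (min(vals), max(vals), mean(vals)) for name, vals in series.items()}


SUMMARY = summarize(SERIES)
for name, (lo, hi, avg) in SUMMARY.items():
    print(f"{name}: min {lo}%, max {hi}%, mean {avg:.2f}%")
```

The three ranges do not overlap, which is why the ordering of the platforms is the same in every group.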

### Key Observations

* The RTX GPU outperforms both other platforms in every group: its runtime is roughly 7x to 10x lower than the Xeon CPU's and roughly 3.5x to 5.3x lower than the Orin NX's.

* The gap between the Xeon CPU and the Orin NX is smaller but consistent: the Orin NX runtime is about half the Xeon CPU's (a ratio of roughly 1.8x to 2.1x).

* AwA2 has the highest runtime on the Xeon CPU (100.4%).

* AwA2 has the lowest runtime on the RTX GPU (9.8%).

### Interpretation

The data suggest that, of the three platforms, the RTX GPU runs these workloads fastest, well ahead of both the Xeon CPU and the Orin NX. Because the y-axis is logarithmic, equal vertical distances correspond to equal ratios, so bar heights understate the gap and the labelled values are the better basis for comparison. The pattern holds across all ten workloads, which indicates that the advantage is not specific to any one of them.
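To make the effect of the logarithmic axis concrete, the sketch below maps a runtime to its fractional height on an axis spanning 1% to 100%. The function name `log_axis_position` is hypothetical, and the three inputs (98%, 50%, 12%) are rounded stand-ins for a typical bar on each platform, not values taken from the chart.

```python
import math


def log_axis_position(value, axis_min=1.0, axis_max=100.0):
    """Fraction of the axis height (0 = bottom, 1 = top) where `value` sits on a log10 axis."""
    span = math.log10(axis_max) - math.log10(axis_min)
    return (math.log10(value) - math.log10(axis_min)) / span


# Rounded, illustrative runtimes for a typical bar on each platform.
for label, runtime in [("Xeon CPU", 98.0), ("Orin NX", 50.0), ("RTX GPU", 12.0)]:
    print(f"{label}: {runtime}% sits at {log_axis_position(runtime):.2f} of the axis height")
```

On such an axis a tenfold change in runtime always spans the same vertical distance (half the axis here), so a bar at 12% still reaches a little over half the axis height even though its value is about an eighth of a bar at 98%.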

The high runtimes on the Xeon CPU suggest that it is not well suited to these workloads, possibly because a general-purpose CPU offers far less hardware parallelism than a GPU. The Orin NX roughly halves the Xeon CPU's runtime but still takes about four times as long as the RTX GPU.

The differences in runtime could be attributed to the parallel processing capabilities of the RTX GPU, which suit the matrix operations common in deep learning workloads. The Orin NX, assuming it refers to NVIDIA's Jetson Orin NX embedded module, pairs Arm CPU cores with an integrated GPU, so it offers some parallel processing capability but considerably less than a discrete RTX GPU.

The RTX GPU's runtimes fall between 9.8% and 13.8%, while the Xeon CPU's fall between 95.6% and 100.4%. This corresponds to a per-workload speedup of roughly 7x to 10x, from 98.5 / 13.8 ≈ 7.1 for ComGen to 100.4 / 9.8 ≈ 10.2 for AwA2. A speedup of this size could substantially affect the cost and efficiency of running these workloads.
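The speedup figure can be derived per workload by dividing the Xeon CPU runtime by the runtime on the other platform. The sketch below does this with the listed values; the names `speedups`, `RTX_VS_XEON`, and `ORIN_VS_XEON` are illustrative assumptions.

```python
WORKLOADS = ["IMO", "MiniF2F", "Twins", "XSTest", "ComGen",
             "News", "CoAuthor", "AwA2", "FOLIO", "Proof"]

# Normalized runtime (%) per workload, transcribed from the bar labels.
XEON = [97.9, 99.2, 96.5, 97.6, 98.5, 95.6, 97.9, 100.4, 98.2, 96.9]
ORIN = [48.3, 51.5, 48.9, 50.3, 48.0, 50.2, 53.0, 51.7, 51.6, 53.0]
RTX = [12.4, 12.1, 11.5, 11.4, 13.8, 12.4, 10.6, 9.8, 12.7, 13.1]


def speedups(baseline, other):
    """Per-workload speedup of `other` over `baseline`, as a runtime ratio."""
    return [b / o for b, o in zip(baseline, other)]


RTX_VS_XEON = speedups(XEON, RTX)
ORIN_VS_XEON = speedups(XEON, ORIN)

for name, rtx, orin in zip(WORKLOADS, RTX_VS_XEON, ORIN_VS_XEON):
    print(f"{name:9s} RTX GPU: {rtx:5.2f}x   Orin NX: {orin:4.2f}x")

print(f"RTX GPU range: {min(RTX_VS_XEON):.1f}x to {max(RTX_VS_XEON):.1f}x")
print(f"Orin NX range: {min(ORIN_VS_XEON):.1f}x to {max(ORIN_VS_XEON):.1f}x")
```

Computed this way, the RTX GPU ratios run from about 7.1x (ComGen) to about 10.2x (AwA2), and the Orin NX ratios stay close to 2x throughout.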