## Diagram: LLM-based Agent Architecture with Memory and Environment Interaction

### Overview

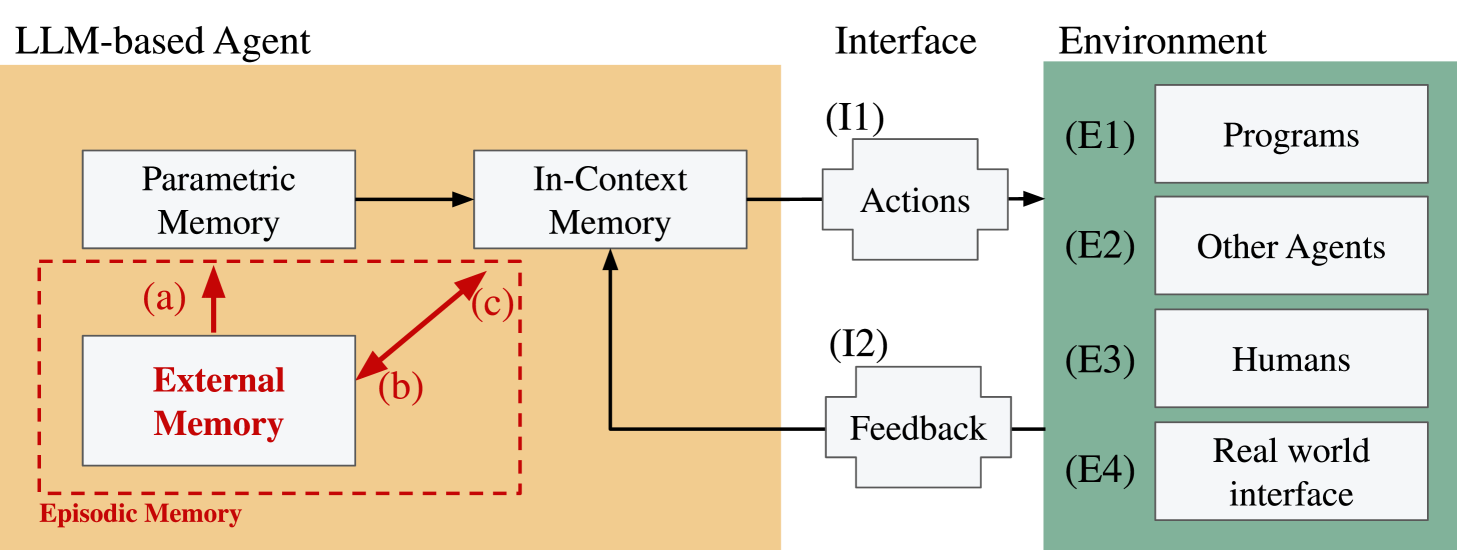

This image is a technical block diagram illustrating the architecture of a Large Language Model (LLM)-based agent. It details the agent's internal memory components, its interface for interaction, and the external environment it operates within. The diagram uses color-coded regions, labeled boxes, and directional arrows to show the flow of information and control.

### Components

The diagram is organized into three primary vertical sections, each with a distinct background color and title:

1. **LLM-based Agent (Left, Orange Background):**

    * **Parametric Memory:** A white box in the upper-left.

    * **In-Context Memory:** A white box to the right of Parametric Memory.

    * **Episodic Memory:** A red dashed-line box encompassing the lower-left area. Inside it is:

        * **External Memory:** A white box with red text.

    * **Arrows within Agent:**

        * A black arrow points from **Parametric Memory** to **In-Context Memory**.

        * Three red arrows are labeled with lowercase letters:

            * **(a)**: Points upward from **External Memory** to **Parametric Memory**.

            * **(b)**: Points diagonally upward-right from **External Memory** to **In-Context Memory**.

            * **(c)**: Points diagonally downward-left from **In-Context Memory** to **External Memory**.

2. **Interface (Center, Light Gray Background):**

    * **(I1) Actions:** A white box with a bracket-like shape on its right side. It receives an arrow from **In-Context Memory**.

    * **(I2) Feedback:** A white box with a bracket-like shape on its left side. It sends an arrow to **In-Context Memory**.

3. **Environment (Right, Green Background):**

    * Four white boxes are stacked vertically, each labeled with an (E#) identifier:

        * **(E1) Programs**

        * **(E2) Other Agents**

        * **(E3) Humans**

        * **(E4) Real world interface**

    * A black arrow points from the **(I1) Actions** box to the entire **Environment** section.

    * A black arrow points from the **(E4) Real world interface** box to the **(I2) Feedback** box.
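The arrows listed above can also be written down as a small directed edge list, which makes the connectivity easier to check than prose. This is only a sketch: the node names are copied from the diagram labels, while the tuple layout and the helper functions `successors` and `labeled` are assumptions introduced for illustration.

```python
from collections import defaultdict

# Each tuple is (source, target, label) for one arrow described in the list above.
# The (source, target, label) layout is an illustrative assumption, not part of the diagram.
EDGES = [
    ("Parametric Memory", "In-Context Memory", ""),
    ("External Memory", "Parametric Memory", "a"),
    ("External Memory", "In-Context Memory", "b"),
    ("In-Context Memory", "External Memory", "c"),
    ("In-Context Memory", "(I1) Actions", ""),
    ("(I1) Actions", "Environment", ""),
    ("(E4) Real world interface", "(I2) Feedback", ""),
    ("(I2) Feedback", "In-Context Memory", ""),
]


def successors(edges):
    """Map each source node to the list of nodes its arrows point at."""
    out = defaultdict(list)
    for src, dst, _label in edges:
        out[src].append(dst)
    return dict(out)


def labeled(edges):
    """Return only the lettered arrows, keyed by their letter."""
    return {label: (src, dst) for src, dst, label in edges if label}


if __name__ == "__main__":
    for letter, (src, dst) in sorted(labeled(EDGES).items()):
        print(f"({letter}) {src} -> {dst}")
```

Listing the edges this way shows at a glance that In-Context Memory is the only node with arrows to and from both the memory components and the interface.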

### Detailed Analysis

The diagram defines a closed-loop system for an autonomous agent.

* **Memory System:** The agent's cognition is based on three memory types:

    * **Parametric Memory:** Likely represents the fixed, pre-trained weights of the LLM.

    * **In-Context Memory:** Represents the dynamic context window or working memory used during a task.

    * **Episodic Memory (External):** An auxiliary memory system, highlighted in red, that stores experiences. It feeds **Parametric Memory** through arrow (a) and exchanges information with **In-Context Memory** in both directions through arrows (b) and (c), suggesting it can both inform and be informed by the agent's ongoing processing.

* **Interaction Flow:**

    1. The agent's **In-Context Memory** generates **Actions (I1)**.

    2. These **Actions** are applied to the **Environment**, which contains **Programs, Other Agents, Humans,** and a **Real world interface**.

    3. The environment, specifically via the **Real world interface (E4)**, generates **Feedback (I2)**.

    4. This **Feedback** is fed back into the agent's **In-Context Memory**, completing the loop and allowing for adaptation.
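The four-step interaction flow can be sketched as a short loop. The diagram specifies only the direction of the arrows, so everything concrete here is an assumption for illustration: the `Agent` class, its `act` and `observe` methods, the `echo_environment` function, and the string format of actions and feedback are hypothetical stand-ins, and `act` replaces a real LLM call with a trivial rule.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Agent:
    """Hypothetical agent that holds only the in-context memory from the diagram."""
    context: List[str] = field(default_factory=list)

    def act(self) -> str:
        # Stand-in for an LLM call: the action depends only on how much
        # feedback is currently in the context.
        return f"action-{len(self.context)}"

    def observe(self, feedback: str) -> None:
        # Step 4: feedback (I2) is appended to in-context memory.
        self.context.append(feedback)


def echo_environment(action: str) -> str:
    """Toy environment (assumption): returns feedback derived from the action."""
    return f"feedback-for-{action}"


def run_loop(agent: Agent, environment: Callable[[str], str], steps: int) -> List[str]:
    """Run the action-feedback loop for a fixed number of steps."""
    actions = []
    for _ in range(steps):
        action = agent.act()             # step 1: context produces an action (I1)
        feedback = environment(action)   # steps 2-3: environment reacts with feedback (I2)
        agent.observe(feedback)          # step 4: feedback returns to the context
        actions.append(action)
    return actions


if __name__ == "__main__":
    agent = Agent()
    print(run_loop(agent, echo_environment, steps=3))
    print(agent.context)
```

Because each action is computed from the accumulated context, later actions differ from earlier ones, which is the sense in which the loop allows adaptation.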

### Key Observations

* **Spatial Grounding:** The **Episodic Memory** block is positioned in the lower-left of the agent section, visually separate but connected. The **Interface** acts as a narrow bridge between the agent and the broad **Environment**.

* **Color Coding:** Orange denotes the agent's internal state, gray the communication layer, and green the external world. Red is used exclusively to highlight the **Episodic Memory** and its associated interactions, suggesting it is a key or novel component of this architecture.

* **Flow Direction:** The primary action-feedback loop flows from left to right (agent to environment) and then back from right to left (environment to agent). Within the agent, the red arrows show **External Memory** feeding both **Parametric Memory** (a) and **In-Context Memory** (b), with **In-Context Memory** pointing back to **External Memory** (c).

### Interpretation

This diagram presents a model of an LLM-based agent designed for interactive, real-world tasks. It goes beyond a simple input-output model by incorporating:

1. **Structured Memory:** The separation of Parametric, In-Context, and External Episodic memory suggests a design aimed at overcoming the limitations of fixed model weights and finite context windows. The External Memory likely allows for long-term learning and retrieval of past experiences.

2. **Embodied Interaction:** By explicitly including **Humans, Other Agents,** and a **Real world interface** in the Environment, the diagram frames the LLM not just as a text processor, but as an *embodied agent* that must perceive and act in a complex, social, and physical world.

3. **Closed-Loop Learning:** The Feedback (I2) pathway is critical. It indicates the agent is designed to learn from the consequences of its actions, enabling iterative improvement and adaptation, which is a fundamental requirement for autonomous systems.

The architecture implies that for an LLM to function effectively as an agent, it requires not only its internal knowledge (Parametric Memory) and current task focus (In-Context Memory) but also a dedicated system (Episodic Memory) to learn from past interactions within a dynamic environment. The red highlighting of this component underscores its importance in this proposed framework.
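One possible reading of the three red arrows is sketched below. The diagram gives only their directions, so the semantics are assumptions: arrow (c) is treated as storing context entries in external memory, arrow (b) as retrieving matching entries back into a bounded context, and arrow (a) as consolidating stored experiences into parametric memory. The class `MemorySketch`, its method names, and the use of a counter dictionary as a stand-in for model weights are all hypothetical.

```python
from typing import Dict, List


class MemorySketch:
    """Toy model of the three memory types; not an implementation of any real system."""

    def __init__(self, context_limit: int = 3) -> None:
        self.parametric: Dict[str, int] = {}  # stand-in for weights (assumption)
        self.context: List[str] = []          # in-context memory, bounded in size
        self.external: List[str] = []         # external (episodic) memory
        self.context_limit = context_limit

    def write_external(self) -> None:
        """Arrow (c), assumed meaning: copy new context entries to external memory."""
        for item in self.context:
            if item not in self.external:
                self.external.append(item)

    def retrieve(self, query: str) -> None:
        """Arrow (b), assumed meaning: pull matching entries back into the context."""
        hits = [e for e in self.external if query in e and e not in self.context]
        self.context.extend(hits)
        # Keep only the most recent entries to mimic a finite context window.
        self.context = self.context[-self.context_limit:]

    def consolidate(self) -> None:
        """Arrow (a), assumed meaning: fold stored experiences into parametric memory."""
        for entry in self.external:
            self.parametric[entry] = self.parametric.get(entry, 0) + 1


if __name__ == "__main__":
    memory = MemorySketch(context_limit=2)
    memory.context = ["door is locked", "key is under mat"]
    memory.write_external()   # (c)
    memory.context = []       # the context window is cleared between tasks
    memory.retrieve("key")    # (b)
    memory.consolidate()      # (a)
    print(memory.context, memory.parametric)
```

In this reading, the external store is what survives when the context is cleared, which matches the interpretation that it compensates for a finite context window and fixed weights.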