## Diagram: Recurrent Neural Network (RNN) Cell Architecture

### Overview

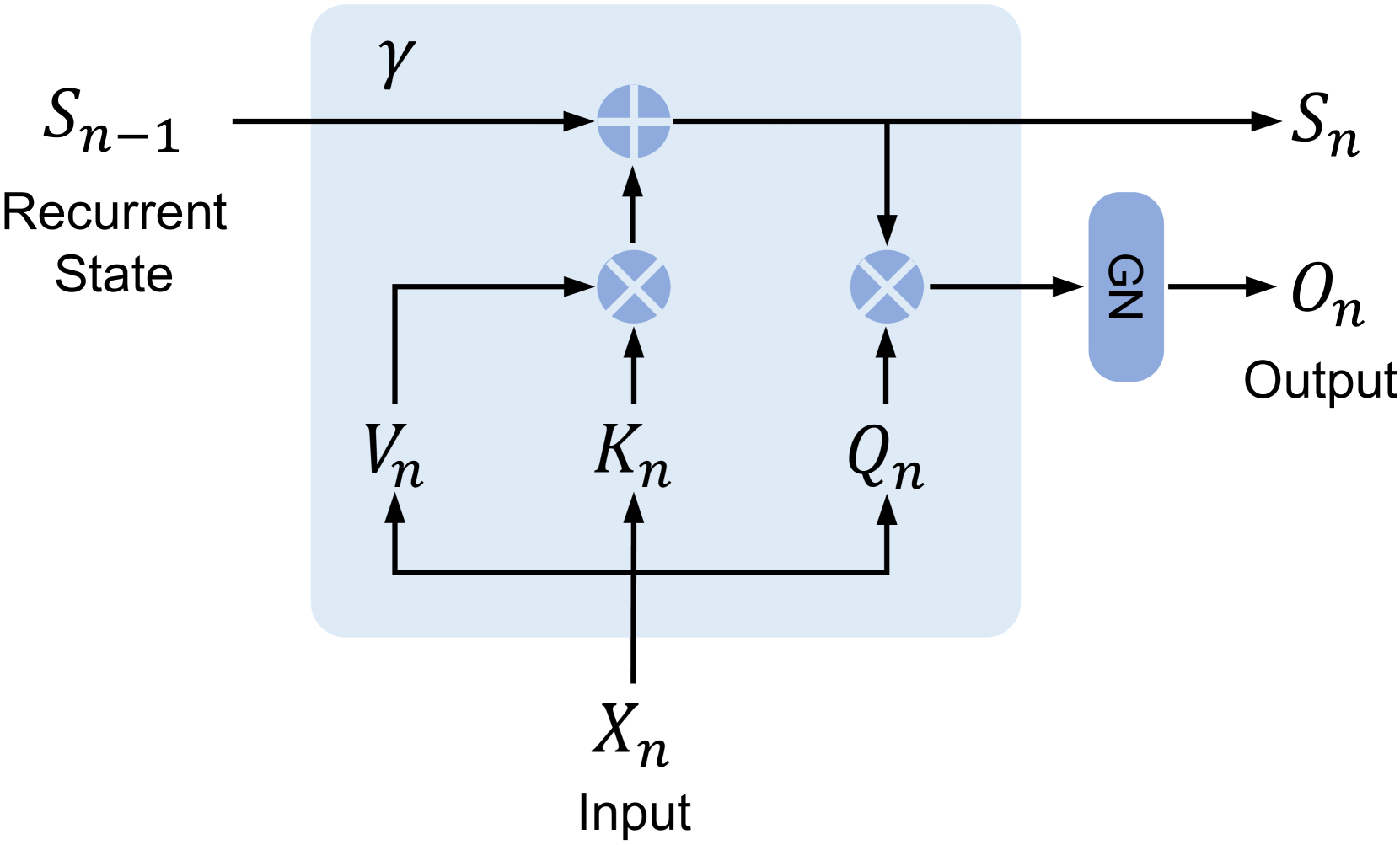

The diagram illustrates the internal structure of a single cell in a Gated Recurrent Unit (GRU) network. It shows how input data (`Xn`), previous recurrent state (`Sn-1`), and internal computations interact to produce a new recurrent state (`Sn`) and output (`On`). The blue box represents the core computational block of the GRU cell.

### Components/Axes

- **Input**: `Xn` (bottom center)

- **Recurrent State**: `Sn-1` (top-left) and `Sn` (top-right)

- **Internal Computations**:

  - `Vn` (bottom-left): Input modulation for update gate

  - `Kn` (center-left): Update gate, drawn at a ⊗ (element-wise multiplication) node

  - `Qn` (center-right): Reset gate, drawn at a ⊗ (element-wise multiplication) node

  - `GN`: Gated nonlinearity (output transformation block)

- **Output**: `On` (top-right, outside the blue box)

### Detailed Analysis

1. **Input Flow**:

   - `Xn` (input) is split into two paths:

     - Directly to `Vn` (input modulation for update gate).

     - Combined with `Sn-1` (previous state) via `Kn` (update gate) to compute `Sn` (new state).

2. **Gate Operations**:

   - **Update Gate (`Kn`)**: Controls how much of the previous state (`Sn-1`) is retained. Values near 0 keep `Sn-1`; values near 1 replace it with the candidate state.

     - Computed as `Kn = σ(Wx Xn + Wh Sn-1 + b)` (σ = sigmoid).

   - **Reset Gate (`Qn`)**: Determines how much of the previous state (`Sn-1`) feeds into the candidate state.

     - Computed as `Qn = σ(Wr Xn + Vr Sn-1 + br)`.

3. **State Update**:

   - `Vn` (input modulation) is combined with `Qn` (reset gate) to produce a candidate state:

     - `Candidate State = tanh(Wz Xn + Uz [Qn ⊗ Sn-1] + bz)`.

   - The new state `Sn` is computed as:

     - `Sn = (1 - Kn) ⊗ Sn-1 + Kn ⊗ Candidate State`.

4. **Output Generation**:

   - The new state `Sn` is passed through the Gated Nonlinearity (`GN`) to produce the output `On`:

     - `On = tanh(Sn) ⊗ Sn`.
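The four steps above can be sketched as a single forward step in NumPy. This is a minimal illustration that transcribes the listed equations, not a reference implementation: the weight names (`Wx`, `Wh`, `Wr`, `Vr`, `Wz`, `Uz`) and biases come from the formulas, while `cell_step`, `init_params`, the parameter dictionary, and the dimensions are assumptions made for the example.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(input_dim, state_dim, seed=0):
    """Hypothetical helper: small random weights and zero biases."""
    rng = np.random.default_rng(seed)

    def w(cols):
        return rng.normal(0.0, 0.1, size=(state_dim, cols))

    return {
        "Wx": w(input_dim), "Wh": w(state_dim), "b": np.zeros(state_dim),
        "Wr": w(input_dim), "Vr": w(state_dim), "br": np.zeros(state_dim),
        "Wz": w(input_dim), "Uz": w(state_dim), "bz": np.zeros(state_dim),
    }


def cell_step(x_n, s_prev, p):
    """One step of the cell, following the equations in the list above."""
    # Update gate: Kn = sigmoid(Wx Xn + Wh Sn-1 + b)
    k_n = sigmoid(p["Wx"] @ x_n + p["Wh"] @ s_prev + p["b"])
    # Reset gate: Qn = sigmoid(Wr Xn + Vr Sn-1 + br)
    q_n = sigmoid(p["Wr"] @ x_n + p["Vr"] @ s_prev + p["br"])
    # Candidate state: tanh(Wz Xn + Uz (Qn * Sn-1) + bz)
    candidate = np.tanh(p["Wz"] @ x_n + p["Uz"] @ (q_n * s_prev) + p["bz"])
    # New state: element-wise blend of previous state and candidate
    s_n = (1.0 - k_n) * s_prev + k_n * candidate
    # Output through the gated nonlinearity: On = tanh(Sn) * Sn
    o_n = np.tanh(s_n) * s_n
    return s_n, o_n


params = init_params(input_dim=3, state_dim=4)
x_n = np.array([0.5, -1.0, 2.0])
s_prev = np.zeros(4)
s_n, o_n = cell_step(x_n, s_prev, params)
```

Here `*` is element-wise multiplication (the ⊗ in the diagram) and `@` is a matrix-vector product. Taking the output formula as written, `tanh(Sn)` always has the same sign as `Sn`, so every component of `On` is non-negative.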

### Key Observations

- **Recurrent State Propagation**: The cell maintains a hidden state (`Sn`) that encodes information from previous inputs.

- **Gating Mechanisms**: The update (`Kn`) and reset (`Qn`) gates regulate information flow. When `Kn` is close to 0 the previous state passes through almost unchanged, which helps mitigate the vanishing-gradient problem of basic RNNs.

- **Output Dependency**: The output `On` is computed from the updated state (`Sn`) alone; the current input (`Xn`) influences it only through `Sn`.
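The effect of the update gate is easiest to see at its extremes. The sketch below isolates the blend `Sn = (1 - Kn) ⊗ Sn-1 + Kn ⊗ Candidate State` with hand-picked values; the function name `blend` and the numbers are illustrative assumptions, not taken from the diagram.

```python
import numpy as np


def blend(k_n, s_prev, candidate):
    # Element-wise interpolation between the previous state and the candidate
    return (1.0 - k_n) * s_prev + k_n * candidate


s_prev = np.array([0.5, -0.2])
candidate = np.array([-0.9, 0.7])

kept = blend(np.zeros(2), s_prev, candidate)        # Kn = 0: state carried over
replaced = blend(np.ones(2), s_prev, candidate)     # Kn = 1: state overwritten
mixed = blend(np.full(2, 0.5), s_prev, candidate)   # Kn = 0.5: midpoint
```

With `Kn = 0` the new state equals `Sn-1` exactly, so information (and the gradient with respect to `Sn-1`) passes through this step unscaled. That direct path is the usual explanation for why gated cells retain information over more steps than a basic RNN.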

### Interpretation

This diagram demonstrates how GRUs address the limitations of basic RNNs by using gating mechanisms to control information flow. The update gate (`Kn`) decides how much of the previous state to retain versus replace with the candidate state, while the reset gate (`Qn`) determines how much of the previous state contributes to that candidate. The Gated Nonlinearity (`GN`) then applies a non-linear transformation to the new state `Sn` to produce the output `On`.

The architecture highlights the balance between retaining relevant past information (`Sn-1`) and integrating new input (`Xn`), making GRUs effective for sequence modeling tasks like language processing or time-series prediction.
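For sequence tasks, the cell is applied once per time step, with each step's `Sn` becoming the next step's `Sn-1`. The sketch below shows only that threading of state. `unroll` and `toy_step` are hypothetical names, and `toy_step` is a deliberately simplified stand-in with a fixed update gate and no learned weights, not the cell from the diagram.

```python
import numpy as np


def unroll(step, xs, s0):
    """Apply step(x_n, s_prev) -> (s_n, o_n) along a sequence, threading the state."""
    s = s0
    states, outputs = [], []
    for x_n in xs:
        s, o = step(x_n, s)
        states.append(s)
        outputs.append(o)
    return np.stack(states), np.stack(outputs)


def toy_step(x_n, s_prev, k_n=0.5):
    # Hypothetical stand-in: constant update gate, candidate = tanh(x_n)
    candidate = np.tanh(x_n)
    s_n = (1.0 - k_n) * s_prev + k_n * candidate
    return s_n, np.tanh(s_n) * s_n


xs = np.array([[1.0], [0.0], [0.0]])  # one impulse followed by zeros
states, outputs = unroll(toy_step, xs, s0=np.zeros(1))
```

With the gate fixed at 0.5, the state left behind by the first input halves at each later step. In a trained cell the gates are computed from `Xn` and `Sn-1`, so the rate at which old information fades varies by time step and by state component.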