
## Comparison Chart: Model Performance on New vs. Pretraining Knowledge

### Overview

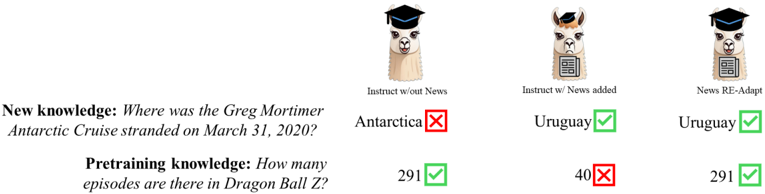

The image is a technical comparison chart evaluating three different model configurations on two distinct knowledge tasks. It uses a tabular layout with icons, text labels, and symbolic indicators (checkmarks and crosses) to show correct and incorrect answers. The chart demonstrates how integrating new information affects a model's performance on both newly learned facts and its pre-existing knowledge.

### Components/Axes

**Structure:** The image is divided into two main sections:

1. **Left Column (Questions):** Contains two knowledge questions.

2. **Right Section (Model Answers):** A 3-column by 2-row grid showing the answers from three model variants.

**Left Column - Questions:**

* **Row 1 (New knowledge):** "Where was the Greg Mortimer Antarctic Cruise stranded on March 31, 2020?" (Text is in bold and italic).

* **Row 2 (Pretraining knowledge):** "How many episodes are there in Dragon Ball Z?" (Text is in bold and italic).

**Right Section - Model Columns (Left to Right):**

Each column is headed by an alpaca icon with distinct accessories and a text label.

1. **Column 1 Header:** Icon: Alpaca wearing a graduation cap. Label: "Instruct w/out News".

2. **Column 2 Header:** Icon: Alpaca wearing a pirate hat. Label: "Instruct w/ News added".

3. **Column 3 Header:** Icon: Alpaca wearing a graduation cap, holding a document. Label: "News RE-Adapt".

**Answer Grid (Right Section):**

The grid contains text answers paired with symbolic indicators.

* **Green Checkmark (✅):** Indicates a correct answer.

* **Red Cross (❌):** Indicates an incorrect answer.

### Detailed Analysis

**Row 1: New Knowledge Task (Greg Mortimer Cruise)**

* **Question:** "Where was the Greg Mortimer Antarctic Cruise stranded on March 31, 2020?"

* **Model Answers:**

    * **Instruct w/out News:** Answer: "Antarctica". Indicator: Red Cross (❌). **Trend:** Incorrect. The model without news integration fails to answer this current events question correctly.

    * **Instruct w/ News added:** Answer: "Uruguay". Indicator: Green Checkmark (✅). **Trend:** Correct. Adding news data enables the model to answer the new knowledge question accurately.

    * **News RE-Adapt:** Answer: "Uruguay". Indicator: Green Checkmark (✅). **Trend:** Correct. The re-adapted model also correctly answers the new knowledge question.

**Row 2: Pretraining Knowledge Task (Dragon Ball Z)**

* **Question:** "How many episodes are there in Dragon Ball Z?"

* **Model Answers:**

    * **Instruct w/out News:** Answer: "291". Indicator: Green Checkmark (✅). **Trend:** Correct. The baseline instruct model correctly recalls this established fact from its pretraining data.

    * **Instruct w/ News added:** Answer: "40". Indicator: Red Cross (❌). **Trend:** Incorrect. The model that had news added answers this pretraining knowledge question incorrectly, suggesting catastrophic forgetting or interference.

    * **News RE-Adapt:** Answer: "291". Indicator: Green Checkmark (✅). **Trend:** Correct. The re-adapted model retains the correct pretraining knowledge.
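The two rows above can be restated as a small data structure, which makes the comparison across models easy to check mechanically. This is only a sketch: the answers and correctness marks are transcribed from the chart, while the names `GRID`, `summarize`, and `models_correct_on_all` are hypothetical and chosen for illustration.

```python
# Answers transcribed from the chart. Each entry is (answer text, marked correct?).
# The dictionary layout and all function names are illustrative assumptions.
GRID = {
    "Instruct w/out News": {
        "new": ("Antarctica", False),
        "pretraining": ("291", True),
    },
    "Instruct w/ News added": {
        "new": ("Uruguay", True),
        "pretraining": ("40", False),
    },
    "News RE-Adapt": {
        "new": ("Uruguay", True),
        "pretraining": ("291", True),
    },
}


def summarize(grid):
    """Return {model: number of answers the chart marks as correct}."""
    return {
        model: sum(1 for _, ok in rows.values() if ok)
        for model, rows in grid.items()
    }


def models_correct_on_all(grid):
    """Return the models whose answers are marked correct in every row."""
    return [
        model
        for model, rows in grid.items()
        if all(ok for _, ok in rows.values())
    ]


if __name__ == "__main__":
    for model, score in summarize(GRID).items():
        print(f"{model}: {score}/2 marked correct")
    print("Correct on both rows:", models_correct_on_all(GRID))
```

With only two questions, these counts describe the chart's examples and should not be read as benchmark accuracy.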

### Key Observations

1. **Performance Trade-off:** The "Instruct w/ News added" model shows a clear trade-off: it gains the ability to answer new knowledge questions but loses accuracy on specific pretraining knowledge.

2. **Recovery through Re-Adaptation:** The "News RE-Adapt" model successfully mitigates this trade-off, performing correctly on both the new knowledge task and the pretraining knowledge task.

3. **Symbolic Language:** The chart uses a consistent visual language: green checkmarks for correct, red crosses for incorrect. The alpaca icons visually differentiate the three model configurations.

4. **Spatial Layout:** The legend (model headers) is positioned at the top center. The questions are anchored on the left, with corresponding answers aligned horizontally in the grid to the right, creating a clear matrix for comparison.

### Interpretation

This chart illustrates a common challenge in machine learning: **catastrophic forgetting**. When a model is fine-tuned or updated with new information (like news data), it can overwrite or interfere with previously learned knowledge (like the episode count of Dragon Ball Z).

The data suggests:

* **"Instruct w/out News"** represents a baseline with solid pretraining knowledge but no capacity for new information.

* **"Instruct w/ News added"** demonstrates the problem: naive integration of new data improves performance on the new task but degrades it on the old one. The incorrect answer "40" for Dragon Ball Z is far from the correct "291", which points to interference with the stored fact rather than a near miss.

* **"News RE-Adapt"** is presented as a successful solution. The term "RE-Adapt" implies a specialized technique (perhaps replay, elastic weight consolidation, or a hybrid approach) designed to preserve old knowledge while learning new facts. Its correct answers on both example questions are consistent with this, although two examples alone cannot establish general performance.
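The forgetting pattern described in this section can be reproduced in a toy setting. The sketch below is not the RE-Adapt method, whose details the chart does not show. It is a hypothetical two-parameter model in which an "old" task constrains only `w0`, a "new" task constrains `w0 + w1`, and a simple quadratic anchor on `w0` (loosely in the spirit of elastic weight consolidation) stands in for a knowledge-preserving update. All function names, losses, and constants are assumptions made for illustration.

```python
def train(w, grad_fn, steps=500, lr=0.1):
    """Run plain gradient descent on a two-parameter model."""
    w0, w1 = w
    for _ in range(steps):
        g0, g1 = grad_fn(w0, w1)
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return w0, w1


def loss_old(w0, w1):
    """Toy 'pretraining' task: it is solved when w0 == 1."""
    return (w0 - 1.0) ** 2


def loss_new(w0, w1):
    """Toy 'news' task: it is solved when w0 + w1 == 3."""
    return (w0 + w1 - 3.0) ** 2


def grad_old(w0, w1):
    return 2.0 * (w0 - 1.0), 0.0


def grad_new(w0, w1):
    r = 2.0 * (w0 + w1 - 3.0)
    return r, r


def grad_new_anchored(anchor_w0, strength=1.0):
    """Gradient of the new-task loss plus a quadratic pull of w0 toward its old value."""
    def grad(w0, w1):
        g0, g1 = grad_new(w0, w1)
        return g0 + 2.0 * strength * (w0 - anchor_w0), g1
    return grad


pretrained = train((0.0, 0.0), grad_old)          # learns the old task
naive = train(pretrained, grad_new)               # new task only: w0 drifts
anchored = train(pretrained, grad_new_anchored(pretrained[0]))

if __name__ == "__main__":
    for name, w in [("pretrained", pretrained), ("naive", naive), ("anchored", anchored)]:
        print(f"{name}: old-task loss={loss_old(*w):.4f}, new-task loss={loss_new(*w):.4f}")
```

In this toy, the naive update solves the new task by moving both parameters, which raises the old-task loss, while the anchored update reaches a solution that satisfies both tasks. The outcome depends on the two tasks being jointly solvable, which is an assumption of the example and not something the chart establishes.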

The chart's purpose is to advocate for or demonstrate the effectiveness of the "RE-Adapt" method. It visually argues that simply adding new data is insufficient and can be harmful, whereas a careful re-adaptation strategy allows a model to expand its knowledge without sacrificing its existing capabilities. The use of a pop-culture fact (Dragon Ball Z) alongside a real-world event makes the technical concept of catastrophic forgetting more accessible and memorable.