\n

## Diagram: Model Performance Comparison

### Overview

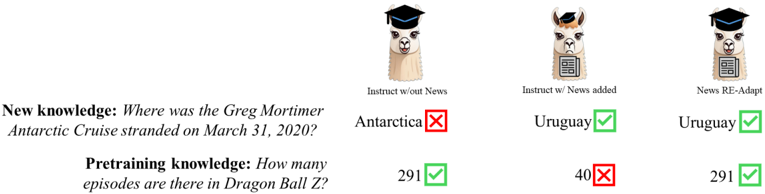

The image presents a comparative diagram illustrating the performance of three different language models ("Instruct w/out News", "Instruct w/ News added", and "News RE-Adapt") on two types of knowledge: "New knowledge" and "Pretraining knowledge". Performance is indicated by checkmarks (green for correct, red for incorrect) and numerical values. Each model is represented by an icon of a llama wearing a graduation cap, with varying additional symbols.

### Components/Axes

The diagram consists of three columns, each representing a different model. Each column is further divided into two rows, corresponding to the two knowledge types. Each knowledge type is presented as a question.

* **Model 1:** "Instruct w/out News" - Llama icon with graduation cap.

* **Model 2:** "Instruct w/ News added" - Llama icon with graduation cap and a QR code symbol.

* **Model 3:** "News RE-Adapt" - Llama icon with graduation cap and a QR code symbol.

* **Knowledge Type 1:** "New knowledge: Where was the Greg Mortimer Antarctic Cruise stranded on March 31, 2020?"

* **Knowledge Type 2:** "Pretraining knowledge: How many episodes are in Dragon Ball Z?"

* **Performance Indicator:** Green checkmark (correct), Red X (incorrect).

* **Numerical Values:** Associated with the "Pretraining knowledge" question.

### Detailed Analysis or Content Details

**Model 1: "Instruct w/out News"**

* **New Knowledge:** Answer: "Antarctica". Result: Incorrect (Red X).

* **Pretraining Knowledge:** Answer: "291". Result: Correct (Green Checkmark).

**Model 2: "Instruct w/ News added"**

* **New Knowledge:** Answer: "Uruguay". Result: Correct (Green Checkmark).

* **Pretraining Knowledge:** Answer: "40". Result: Incorrect (Red X).

**Model 3: "News RE-Adapt"**

* **New Knowledge:** Answer: "Uruguay". Result: Correct (Green Checkmark).

* **Pretraining Knowledge:** Answer: "291". Result: Correct (Green Checkmark).

### Key Observations

* The "Instruct w/out News" model fails to answer the new knowledge question correctly, while the other two models succeed.

* The "Instruct w/ News added" model incorrectly answers the pretraining knowledge question.

* The "News RE-Adapt" model correctly answers both knowledge questions.

* The numerical value for the "Pretraining knowledge" question varies between the models. The correct answer appears to be 291, as indicated by the "Instruct w/out News" and "News RE-Adapt" models.

### Interpretation

The diagram demonstrates the impact of incorporating news data into language models. The "Instruct w/ News added" and "News RE-Adapt" models perform better on the "New knowledge" question, suggesting that access to recent information improves their ability to answer questions about current events. However, adding news data ("Instruct w/ News added") can negatively impact performance on pretraining knowledge ("Dragon Ball Z" episode count), potentially due to interference or a shift in focus. The "News RE-Adapt" model appears to mitigate this issue, achieving high accuracy on both knowledge types. This suggests that the method of integrating news data is crucial for maintaining overall model performance. The numerical values associated with the "Dragon Ball Z" question highlight the potential for inaccuracies when models are not adequately trained on specific domains. The use of llamas with graduation caps is a visual metaphor for the models' learning and knowledge acquisition capabilities.