## Diagram: Simple Neural Network Model and Memory Allocation

### Overview

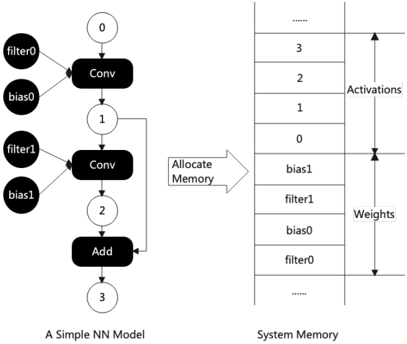

The image depicts a simplified neural network (NN) model and its corresponding memory allocation scheme. The left side shows a two-layer convolutional neural network, while the right side illustrates how the weights and activations are stored in system memory. An arrow indicates the mapping between the model and the memory allocation.

### Components/Axes

The diagram consists of the following components:

* **Neural Network Model:**

  * `filter0`, `filter1`: Input filters.

  * `bias0`, `bias1`: Input biases.

  * `Conv`: Convolutional layers (two instances).

  * `Add`: Addition operation.

  * Nodes labeled 0, 1, 2, 3 representing intermediate outputs.

* **Memory Allocation:**

  * A table representing system memory.

  * Labels "Activations" and "Weights" indicating the memory regions.

  * Entries within the table: 3, 2, 1, 0, `bias1`, `filter1`, `bias0`, `filter0`.

* **Arrow:** Indicates the allocation of memory for the NN model.

* **Text Labels:** "A Simple NN Model", "Allocate Memory", "System Memory".

### Detailed Analysis / Content Details

The neural network model consists of two convolutional layers followed by an addition operation.

* `filter0` and `bias0` are inputs to the first `Conv` layer, resulting in output node `0`.

* `filter1` and `bias1` are inputs to the second `Conv` layer, resulting in output node `1`.

* The outputs of the two `Conv` layers (nodes `0` and `1`) are added together by the `Add` operation, resulting in output node `2`.

* Node `3` is the final output of the model.
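
The connections listed above can be written down as a small graph structure. This is only a sketch: the `Op` class and its field names are hypothetical, and the tensor names are simply the labels from the diagram. The description does not say which operation produces node `3`, so it is recorded as an activation without a producer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    """Hypothetical record for one operation in the diagram."""
    kind: str      # operation label from the diagram: "Conv" or "Add"
    inputs: tuple  # names of the tensors the operation reads
    output: str    # name of the activation node the operation writes


# Operations exactly as listed in the description above.
ops = [
    Op("Conv", ("filter0", "bias0"), "0"),
    Op("Conv", ("filter1", "bias1"), "1"),
    Op("Add", ("0", "1"), "2"),
]

# Node 3 is described only as the final output; its producer is not stated.
activations = ["0", "1", "2", "3"]

# Weights are the operation inputs that are not activation nodes,
# collected in the order they first appear in the model.
weights = []
for op in ops:
    for name in op.inputs:
        if name not in activations and name not in weights:
            weights.append(name)

print(weights)
```

Splitting the tensor names into `weights` and `activations` mirrors the two memory regions shown on the right side of the diagram.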

The system memory allocation is structured as follows:

* The top portion of the memory is allocated for "Activations" and holds the entries for nodes 3, 2, 1, and 0.

* The bottom portion of the memory is allocated for "Weights" and holds `bias1`, `filter1`, `bias0`, and `filter0`.

* The arrow indicates that the activations and weights of the NN model are stored in these respective memory regions.
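
One way to picture this layout is a planner that packs tensors back to back and records an offset for each. The sketch below rests on several assumptions, since the diagram gives no addresses or sizes: the byte sizes are made up, the `plan_memory` helper is hypothetical, and offsets are assumed to grow from the bottom of the table (`filter0`) toward the top (node `3`).

```python
def plan_memory(tensors, sizes, base=0):
    """Assign each tensor a byte offset by packing them back to back.

    Returns the offset table and the first free offset after the last tensor.
    """
    offsets = {}
    cursor = base
    for name in tensors:
        offsets[name] = cursor
        cursor += sizes[name]
    return offsets, cursor


weights = ["filter0", "bias0", "filter1", "bias1"]
activations = ["0", "1", "2", "3"]

# Hypothetical sizes in bytes; the diagram does not specify any.
sizes = {
    "filter0": 64, "bias0": 16, "filter1": 64, "bias1": 16,
    "0": 256, "1": 256, "2": 256, "3": 256,
}

# Assumption: the weights region starts at offset 0 and the
# activations region starts directly after it.
weight_offsets, weights_end = plan_memory(weights, sizes)
activation_offsets, total_bytes = plan_memory(activations, sizes, base=weights_end)

# Print highest offset first, which reproduces the order of the table entries.
layout = {**weight_offsets, **activation_offsets}
table_order = list(reversed(weights + activations))
for name in table_order:
    print(f"{layout[name]:>5}  {name}")
```

With these made-up sizes the weights occupy offsets 0 to 159 and the activations follow from offset 160. A real allocator would also need to account for alignment and data types, which the diagram does not cover.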

### Key Observations

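* The memory allocation appears to be sequential: the "Activations" region is shown first, followed by the "Weights" region.

* The weights are listed in the table as `bias1`, `filter1`, `bias0`, `filter0`. Read in reverse, this is `filter0`, `bias0`, `filter1`, `bias1`, which is the order in which they appear in the NN model.

* The activations are listed in the table as 3, 2, 1, 0. Read in reverse, this is the node order 0, 1, 2, 3.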

### Interpretation

This diagram illustrates a fundamental concept in deep learning: the mapping between a neural network model and its representation in memory. The weights and activations that the NN needs at run time are each assigned a slot in system memory, and the sequential arrangement suggests a simple memory management scheme. The diagram is conceptual: it does not specify memory addresses, tensor sizes, or data types, and it conveys only the basic idea of how a simple NN model's data is organized in memory.