TECHNICAL ASSET FINGERPRINT

6e3b309d175d1749bd04d94e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Chart Type: Multiple Line Charts

### Overview

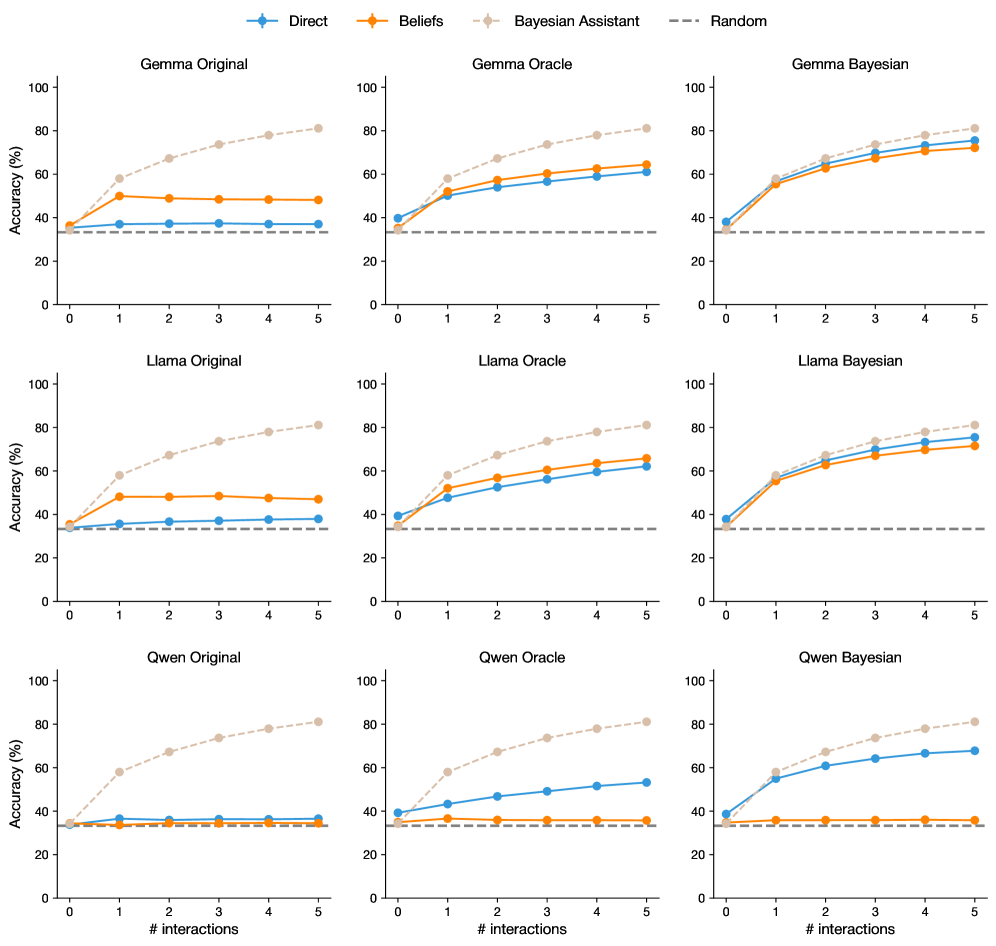

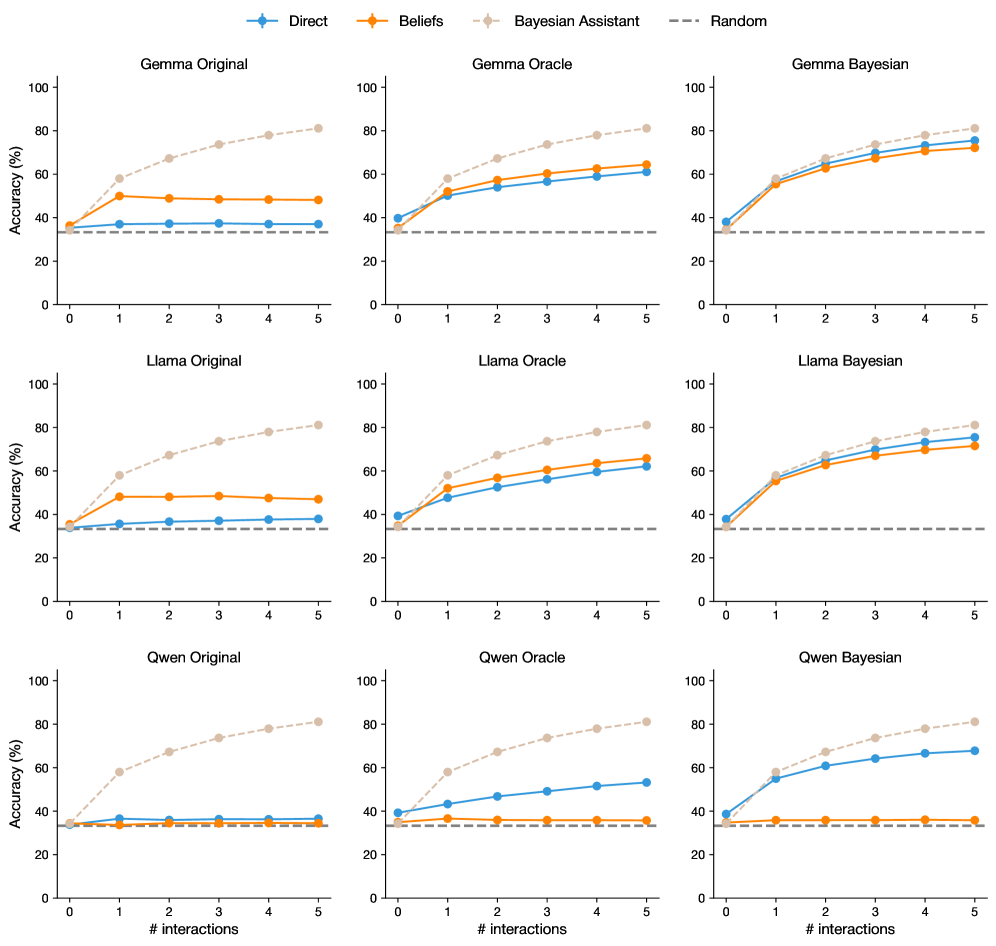

The image presents nine line charts arranged in a 3x3 grid. Each chart shows the accuracy (%) of one model (Gemma, Llama, or Qwen) under one condition (Original, Oracle, or Bayesian) as a function of the number of interactions (0 to 5). The charts compare the "Direct," "Beliefs," and "Bayesian Assistant" methods, with a "Random" baseline shown as a horizontal dashed line.

### Components/Axes

* **X-axis (Horizontal):** "# interactions" ranging from 0 to 5.

* **Y-axis (Vertical):** "Accuracy (%)" ranging from 0 to 100.

* **Chart Titles (Top Row):** "Gemma Original", "Gemma Oracle", "Gemma Bayesian"

* **Chart Titles (Middle Row):** "Llama Original", "Llama Oracle", "Llama Bayesian"

* **Chart Titles (Bottom Row):** "Qwen Original", "Qwen Oracle", "Qwen Bayesian"

* **Legend (Top):**

* Blue line: "Direct"

* Orange line: "Beliefs"

* Beige dashed line: "Bayesian Assistant"

* Gray dashed line: "Random"

### Detailed Analysis

**General Observations:**

* The "Random" baseline is consistently around 33% accuracy across all charts.

* The "Bayesian Assistant" method generally shows the highest accuracy, increasing with the number of interactions.

* The "Direct" and "Beliefs" methods show varying performance depending on the model and condition.

**Gemma Charts:**

* **Gemma Original:**

* Direct (Blue): Remains relatively constant around 35-40%.

* Beliefs (Orange): Remains relatively constant around 45-50%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 40% to 80%.

* **Gemma Oracle:**

* Direct (Blue): Increases from approximately 40% to 65%.

* Beliefs (Orange): Increases from approximately 50% to 70%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 60% to 80%.

* **Gemma Bayesian:**

* Direct (Blue): Increases from approximately 40% to 75%.

* Beliefs (Orange): Increases from approximately 50% to 80%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 50% to 85%.

**Llama Charts:**

* **Llama Original:**

* Direct (Blue): Remains relatively constant around 35-40%.

* Beliefs (Orange): Remains relatively constant around 45-50%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 40% to 80%.

* **Llama Oracle:**

* Direct (Blue): Increases from approximately 45% to 65%.

* Beliefs (Orange): Increases from approximately 50% to 70%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 60% to 80%.

* **Llama Bayesian:**

* Direct (Blue): Increases from approximately 40% to 75%.

* Beliefs (Orange): Increases from approximately 50% to 80%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 50% to 80%.

**Qwen Charts:**

* **Qwen Original:**

* Direct (Blue): Remains relatively constant around 35-40%.

* Beliefs (Orange): Remains relatively constant around 35-40%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 40% to 80%.

* **Qwen Oracle:**

* Direct (Blue): Increases from approximately 40% to 55%.

* Beliefs (Orange): Remains relatively constant around 40-45%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 40% to 80%.

* **Qwen Bayesian:**

* Direct (Blue): Increases from approximately 40% to 70%.

* Beliefs (Orange): Increases from approximately 40% to 75%.

* Bayesian Assistant (Beige Dashed): Increases from approximately 40% to 80%.

### Key Observations

* The "Bayesian Assistant" method consistently outperforms the "Direct" and "Beliefs" methods, especially as the number of interactions increases.

* The "Original" conditions for all models show relatively flat performance for "Direct" and "Beliefs," while "Oracle" and "Bayesian" conditions show improvement with interactions.

* The "Random" baseline provides a consistent point of comparison across all charts.

* Gemma, Llama, and Qwen models show similar trends, but the specific accuracy levels vary.

### Interpretation

The data suggest that the "Bayesian Assistant" substantially improves accuracy as the number of interactions increases. The "Oracle" and "Bayesian" conditions, which likely involve some form of feedback or adaptation, allow the "Direct" and "Beliefs" methods to improve over time, unlike the "Original" conditions, where their performance remains relatively flat. The consistent "Random" baseline shows how far each method exceeds chance performance. The similar trends across Gemma, Llama, and Qwen suggest that the "Bayesian Assistant" approach is effective across different model families. Differences in absolute accuracy between models under each condition likely reflect the inherent capabilities and limitations of each model.
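To quantify how far each method exceeds chance, one simple option is to rescale accuracy so that chance maps to 0 and perfect accuracy maps to 1. The sketch below is illustrative only: it assumes a three-way chance level of 100/3 % (the ~33% "Random" line), uses approximate Gemma Original end-of-interaction readings from the list above (the upper ends of the stated Direct and Beliefs ranges), and `chance_normalized` is a hypothetical helper, not a metric used in the figure.

```python
def chance_normalized(accuracy_pct: float, chance_pct: float) -> float:
    """Fraction of the gap between chance and perfect accuracy that is closed.

    0.0 means chance-level performance; 1.0 means perfect accuracy.
    """
    if not 0.0 <= chance_pct < 100.0:
        raise ValueError("chance_pct must be in [0, 100)")
    return (accuracy_pct - chance_pct) / (100.0 - chance_pct)


CHANCE = 100.0 / 3  # assumed three-way chance level, ~33.3%

# Approximate readings at 5 interactions for Gemma Original (from the description).
readings = {
    "Direct": 40.0,
    "Beliefs": 50.0,
    "Bayesian Assistant": 80.0,
}

for method, acc in readings.items():
    print(f"{method}: {chance_normalized(acc, CHANCE):.2f}")
# Direct: 0.10
# Beliefs: 0.25
# Bayesian Assistant: 0.70
```

On this scale, the Bayesian Assistant closes roughly 70% of the gap between chance and perfect accuracy, while Direct closes about 10%.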

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Line Chart: Accuracy vs. Interactions for Different Models & Belief Systems

### Overview

The image presents a 3x3 grid of line charts. Each chart shows the accuracy of one language model (Gemma, Llama, or Qwen) under one belief system (Original, Oracle, or Bayesian) as a function of the number of interactions. A "Random" baseline is included for comparison. Each chart plots accuracy (%) on the y-axis against the number of interactions, from 0 to 5, on the x-axis.

### Components/Axes

* **X-axis Label (all charts):** "# interactions"

* **Y-axis Label (all charts):** "Accuracy (%)"

* **Legend (top-left of each chart):**

* **Blue Line:** Direct

* **Orange Line:** Beliefs

* **Purple Line:** Bayesian Assistant

* **Gray Dashed Line:** Random

* **Chart Titles (top-center of each chart):**

* Gemma Original

* Gemma Oracle

* Gemma Bayesian

* Llama Original

* Llama Oracle

* Llama Bayesian

* Qwen Original

* Qwen Oracle

* Qwen Bayesian

### Detailed Analysis

Here's a breakdown of the data for each chart, noting trends and approximate values.

**1. Gemma Original:**

* **Direct (Blue):** Starts at approximately 30% and increases to around 60% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 40% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 40% and increases to around 65% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**2. Gemma Oracle:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 70% by interaction 5.

* **Beliefs (Orange):** Starts at approximately 40% and increases to around 60% by interaction 5.

* **Bayesian Assistant (Purple):** Remains relatively flat around 60% throughout all interactions.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**3. Gemma Bayesian:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 65% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 60% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 50% and increases to around 70% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**4. Llama Original:**

* **Direct (Blue):** Starts at approximately 30% and increases to around 60% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 40% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 40% and increases to around 70% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**5. Llama Oracle:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 75% by interaction 5.

* **Beliefs (Orange):** Starts at approximately 40% and increases to around 65% by interaction 5.

* **Bayesian Assistant (Purple):** Starts at approximately 50% and increases to around 75% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**6. Llama Bayesian:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 70% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 60% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 50% and increases to around 75% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**7. Qwen Original:**

* **Direct (Blue):** Starts at approximately 30% and increases to around 60% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 40% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 40% and increases to around 65% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**8. Qwen Oracle:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 70% by interaction 5.

* **Beliefs (Orange):** Starts at approximately 40% and increases to around 60% by interaction 5.

* **Bayesian Assistant (Purple):** Remains relatively flat around 60% throughout all interactions.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

**9. Qwen Bayesian:**

* **Direct (Blue):** Starts at approximately 40% and increases to around 65% by interaction 5.

* **Beliefs (Orange):** Remains relatively flat around 60% throughout all interactions.

* **Bayesian Assistant (Purple):** Starts at approximately 50% and increases to around 70% by interaction 5.

* **Random (Gray):** Remains flat around 30% throughout all interactions.

### Key Observations

* The "Direct" and "Bayesian Assistant" approaches generally show increasing accuracy with more interactions across all models and belief systems.

* The "Beliefs" approach tends to remain relatively flat, indicating it doesn't benefit significantly from increased interactions.

* The "Random" baseline consistently shows low accuracy, serving as a chance-level reference point.

* The "Oracle" belief system often leads to higher initial accuracy and faster improvement compared to the "Original" and "Bayesian" systems.

* Llama models generally achieve higher accuracy than Gemma and Qwen models, particularly with the "Oracle" and "Bayesian" belief systems.
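The "increasing" versus "flat" labels used in these observations can be made explicit with a simple endpoint rule. This is a minimal sketch: `classify_trend` and its 5-point tolerance are assumptions chosen for illustration, not how the descriptions were produced, and the inputs are the approximate Gemma Original start and end readings listed in this section.

```python
def classify_trend(start_pct: float, end_pct: float, tolerance_pts: float = 5.0) -> str:
    """Label a series 'increasing', 'decreasing', or 'flat' from its endpoints.

    tolerance_pts is an assumed threshold in percentage points; smaller
    net changes are treated as flat.
    """
    change = end_pct - start_pct
    if change > tolerance_pts:
        return "increasing"
    if change < -tolerance_pts:
        return "decreasing"
    return "flat"


# Approximate (start, end) readings for the Gemma Original panel, in percent.
gemma_original = {
    "Direct": (30.0, 60.0),
    "Beliefs": (40.0, 40.0),
    "Bayesian Assistant": (40.0, 65.0),
    "Random": (30.0, 30.0),
}

for method, (start, end) in gemma_original.items():
    print(f"{method}: {classify_trend(start, end)}")
# Direct: increasing
# Beliefs: flat
# Bayesian Assistant: increasing
# Random: flat
```

An endpoint rule ignores the shape between interactions 0 and 5; fitting a slope to every point would be more robust when per-interaction values are available.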

### Interpretation

The data suggest that increasing the number of interactions generally improves the accuracy of language models, particularly with the "Direct" and "Bayesian Assistant" approaches. The "Oracle" belief system appears to provide a strong starting point, possibly by incorporating prior knowledge or constraints. The relatively flat performance of the "Beliefs" approach suggests that incorporating beliefs without a mechanism for updating them from interactions does not lead to significant improvements. The consistently lower "Random" baseline shows that the models perform well above chance. The differences between the Gemma, Llama, and Qwen models suggest that model architecture and training data play a significant role in accuracy. The consistent upward trend with interactions suggests that the models are learning from the interaction history. Because the lines do not appear to plateau by interaction 5, further interactions could potentially yield additional accuracy gains.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Multi-Panel Line Chart: Model Accuracy vs. Interaction Count

### Overview

The image displays a 3x3 grid of line charts. Each chart plots the "Accuracy (%)" of a specific Large Language Model (LLM) variant against the "# interactions" (from 0 to 5). The grid is organized by model family (rows: Gemma, Llama, Qwen) and by training/evaluation condition (columns: Original, Oracle, Bayesian). Each chart contains four data series representing different prompting or reasoning methods.

### Components/Axes

* **Global Legend (Top Center):** Positioned above the grid, it defines the four data series:

* **Direct:** Solid blue line with circular markers.

* **Beliefs:** Solid orange line with diamond markers.

* **Bayesian Assistant:** Dashed light brown line with circular markers.

* **Random:** Dashed grey horizontal line (baseline).

* **Y-Axis (All Charts):** Labeled "Accuracy (%)". Scale runs from 0 to 100 with major ticks at 0, 20, 40, 60, 80, 100.

* **X-Axis (All Charts):** Labeled "# interactions". Scale runs from 0 to 5 with integer ticks.

* **Subplot Titles (Top of each chart):**

* Row 1: "Gemma Original", "Gemma Oracle", "Gemma Bayesian"

* Row 2: "Llama Original", "Llama Oracle", "Llama Bayesian"

* Row 3: "Qwen Original", "Qwen Oracle", "Qwen Bayesian"

### Detailed Analysis

**Data Series Trends & Approximate Values:**

**Row 1: Gemma Models**

* **Gemma Original:**

* *Bayesian Assistant (Light Brown):* Strong upward trend. Starts ~35% (0), rises to ~58% (1), ~68% (2), ~74% (3), ~78% (4), ~81% (5).

* *Beliefs (Orange):* Sharp initial rise then plateau. Starts ~35% (0), jumps to ~50% (1), then remains flat ~48-49% (2-5).

* *Direct (Blue):* Very slight upward trend, near baseline. Starts ~35% (0), ends ~38% (5).

* *Random (Grey):* Constant at ~33%.

* **Gemma Oracle:**

* *Bayesian Assistant:* Similar strong upward trend as Original, reaching ~81% (5).

* *Beliefs & Direct:* Both show steady, parallel upward trends, with Beliefs a few percentage points above Direct throughout (roughly 4-8 points). At 5 interactions: Beliefs ~64%, Direct ~60%.

* **Gemma Bayesian:**

* All three active methods (Direct, Beliefs, Bayesian Assistant) show strong, converging upward trends. They start clustered ~35-38% (0) and end between ~72-78% (5). Bayesian Assistant remains slightly highest.
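The stepwise readings for the Gemma Original Bayesian Assistant series make the shape of its "strong upward trend" easy to quantify: differencing consecutive values shows large early gains that shrink with each further interaction. A minimal sketch using the approximate values read off the chart above; `step_gains` is a hypothetical helper name.

```python
def step_gains(values: list[float]) -> list[float]:
    """Change in accuracy (percentage points) between consecutive interaction counts."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


# Approximate Bayesian Assistant accuracy (%) for Gemma Original at 0..5 interactions.
bayesian_assistant = [35.0, 58.0, 68.0, 74.0, 78.0, 81.0]

gains = step_gains(bayesian_assistant)
print(gains)       # [23.0, 10.0, 6.0, 4.0, 3.0]
print(sum(gains))  # 46.0

# True if every step gains less than the step before it.
shrinking = all(b < a for a, b in zip(gains, gains[1:]))
print(shrinking)   # True
```

Half of the total 46-point gain arrives with the first interaction, which is why the curve rises steeply at first and then flattens.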

**Row 2: Llama Models**

* **Llama Original:** Pattern is nearly identical to Gemma Original. Bayesian Assistant rises to ~81% (5). Beliefs plateaus ~48%. Direct shows minimal gain.

* **Llama Oracle:** Pattern is nearly identical to Gemma Oracle. Bayesian Assistant leads (~81% at 5). Beliefs (~65%) and Direct (~61%) show steady, parallel growth.

* **Llama Bayesian:** Pattern is nearly identical to Gemma Bayesian. All methods show strong growth, converging between ~72-78% at 5 interactions.

**Row 3: Qwen Models**

* **Qwen Original:**

* *Bayesian Assistant:* Follows the same strong upward trend as other "Original" models, reaching ~81% (5).

* *Beliefs & Direct:* Both show almost no improvement, hovering near the Random baseline (~33-36%) across all interactions.

* **Qwen Oracle:**

* *Bayesian Assistant:* Strong upward trend to ~81% (5).

* *Direct:* Shows a steady upward trend, reaching ~53% (5).

* *Beliefs:* Remains flat near the baseline (~35%).

* **Qwen Bayesian:**

* *Bayesian Assistant:* Strong upward trend to ~81% (5).

* *Direct:* Shows a strong upward trend, reaching ~68% (5).

* *Beliefs:* Remains flat near the baseline (~35%).

### Key Observations

1. **Consistent Bayesian Assistant Superiority:** The "Bayesian Assistant" method (light brown dashed line) achieves the highest or tied-for-highest accuracy in every single chart, consistently reaching approximately 81% accuracy at 5 interactions.

2. **"Original" Condition Limitation:** In the "Original" condition (left column), only the Bayesian Assistant method shows significant learning. The "Direct" and "Beliefs" methods show minimal to no improvement for Gemma and Llama, and none for Qwen.

3. **"Oracle" Condition Boost:** The "Oracle" condition (middle column) enables strong learning for the "Direct" method in all models and for the "Beliefs" method in Gemma/Llama (but not Qwen).

4. **"Bayesian" Condition Convergence:** The "Bayesian" condition (right column) causes all three active methods to perform well and converge, particularly for Gemma and Llama.

5. **Qwen's Unique "Beliefs" Behavior:** The Qwen model shows a distinct pattern where the "Beliefs" method (orange) fails to improve in *any* condition, remaining at baseline accuracy.

6. **Random Baseline:** The "Random" baseline is constant at approximately 33% across all charts, suggesting a 3-choice task.
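The inference that a ~33% random baseline implies a three-choice task follows from uniform guessing: with k equally likely options, chance accuracy is 100/k %. The sketch below assumes the "Random" line represents uniform guessing, which the charts do not state; both helpers are illustrative.

```python
def chance_accuracy_pct(num_options: int) -> float:
    """Expected accuracy (%) of uniform random guessing among num_options choices."""
    if num_options < 1:
        raise ValueError("num_options must be at least 1")
    return 100.0 / num_options


def infer_num_options(random_baseline_pct: float, max_options: int = 10) -> int:
    """Option count whose chance level is closest to an observed random baseline."""
    return min(
        range(1, max_options + 1),
        key=lambda k: abs(chance_accuracy_pct(k) - random_baseline_pct),
    )


print(round(chance_accuracy_pct(3), 1))  # 33.3
print(infer_num_options(33.0))           # 3
print(infer_num_options(30.0))           # 3
```

A reading of ~30% (as some descriptions give) still maps to three options, since 33.3% is closer to it than 25%.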

### Interpretation

This data demonstrates the significant impact of both the model's training/evaluation condition (Original, Oracle, Bayesian) and the prompting/reasoning method (Direct, Beliefs, Bayesian Assistant) on interactive learning performance.

* **The Bayesian Assistant is a robust meta-strategy:** Its consistent top performance suggests it effectively leverages interaction history to update beliefs and guide queries, regardless of the base model or condition.

* **Oracles provide critical information:** The "Oracle" condition, which likely provides ground-truth feedback, unlocks learning capability for simpler methods like "Direct" prompting, which otherwise stagnates.

* **Model-specific limitations exist:** The failure of the "Beliefs" method to learn on Qwen, even with an Oracle, suggests a potential incompatibility between that model's architecture or training and the belief-based prompting approach tested here.

* **The "Bayesian" condition may induce a helpful prior:** Making the model itself "Bayesian" seems to create an internal state where even simple methods like "Direct" prompting can learn effectively from interactions, closing the gap with more sophisticated methods.

The charts collectively argue that for interactive learning tasks, employing a Bayesian meta-strategy (the Assistant) is highly effective, and that providing models with structured feedback (Oracle) or Bayesian-friendly internal representations is crucial for enabling simpler interaction methods to succeed.
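The interpretation above credits the Bayesian Assistant's gains to updating beliefs from interaction history. The charts do not say what the Assistant's hypotheses or likelihoods are, so the following is only a hypothetical sketch of Bayesian belief updating over three candidate options (matching the apparent three-choice setup). `HYPOTHESES`, `feedback_likelihood`, and the 0.8 match probability are illustrative assumptions, not the method behind the figure.

```python
HYPOTHESES = ("A", "B", "C")  # hypothetical: which of three options the user prefers


def bayes_update(prior: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Return the posterior: prior times likelihood, renormalized to sum to 1."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has zero probability under every hypothesis")
    return {h: p / total for h, p in unnormalized.items()}


def feedback_likelihood(observed: str, p_match: float = 0.8) -> dict[str, float]:
    """P(observed feedback | hypothesis) under an assumed noise model:
    feedback names the preferred option with probability p_match and
    splits the remaining probability evenly over the other options."""
    p_other = (1.0 - p_match) / (len(HYPOTHESES) - 1)
    return {h: (p_match if h == observed else p_other) for h in HYPOTHESES}


# Uniform prior: before any interaction every option is equally likely (chance level).
posterior = {h: 1.0 / len(HYPOTHESES) for h in HYPOTHESES}

for step, observed in enumerate(["B", "B", "B"], start=1):
    posterior = bayes_update(posterior, feedback_likelihood(observed))
    best = max(posterior, key=posterior.get)
    print(step, best, f"{posterior[best]:.3f}")
# 1 B 0.800
# 2 B 0.970
# 3 B 0.996
```

Each consistent observation multiplies the odds for B by 8 (0.8 / 0.1), so the posterior concentrates quickly. A real assistant would also decide which question to ask next, which this sketch omits.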

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graphs: Model Performance Across Interaction Counts

### Overview

The image contains nine line graphs arranged in a 3x3 grid, comparing the accuracy of three language models (Gemma, Llama, Qwen) under three configurations (Original, Oracle, Bayesian) across 0-5 interactions. Each graph tracks four data series: Direct (blue), Beliefs (orange), Bayesian Assistant (light brown), and Random (gray dashed). All graphs share identical axes and legend placement.

### Components/Axes

- **X-axis**: "# interactions" (0-5, integer increments)

- **Y-axis**: "Accuracy (%)" (0-100, 20% increments)

- **Legend**: Top-left corner, color-coded:

- Blue: Direct

- Orange: Beliefs

- Light brown: Bayesian Assistant

- Gray dashed: Random

- **Graph Titles**: Model + Configuration (e.g., "Gemma Original", "Llama Bayesian")

### Detailed Analysis

#### Gemma Original

- **Bayesian Assistant**: Starts at ~30%, rises steadily to ~85% by interaction 5

- **Beliefs**: Flat at ~45% throughout

- **Direct**: Flat at ~35% throughout

- **Random**: Constant at ~30%

#### Gemma Oracle

- **Bayesian Assistant**: Starts at ~35%, rises to ~75% by interaction 5

- **Beliefs**: Flat at ~50% throughout

- **Direct**: Flat at ~45% throughout

- **Random**: Constant at ~30%

#### Gemma Bayesian

- **Bayesian Assistant**: Starts at ~30%, rises to ~80% by interaction 5

- **Beliefs**: Flat at ~55% throughout

- **Direct**: Flat at ~50% throughout

- **Random**: Constant at ~30%

#### Llama Original

- **Bayesian Assistant**: Starts at ~35%, rises to ~80% by interaction 5

- **Beliefs**: Flat at ~40% throughout

- **Direct**: Flat at ~35% throughout

- **Random**: Constant at ~30%

#### Llama Oracle

- **Bayesian Assistant**: Starts at ~40%, rises to ~70% by interaction 5

- **Beliefs**: Flat at ~50% throughout

- **Direct**: Flat at ~40% throughout

- **Random**: Constant at ~30%

#### Llama Bayesian

- **Bayesian Assistant**: Starts at ~35%, rises to ~75% by interaction 5

- **Beliefs**: Flat at ~55% throughout

- **Direct**: Flat at ~50% throughout

- **Random**: Constant at ~30%

#### Qwen Original

- **Bayesian Assistant**: Starts at ~30%, rises to ~85% by interaction 5

- **Beliefs**: Flat at ~40% throughout

- **Direct**: Flat at ~35% throughout

- **Random**: Constant at ~30%

#### Qwen Oracle

- **Bayesian Assistant**: Starts at ~35%, rises to ~75% by interaction 5

- **Beliefs**: Flat at ~45% throughout

- **Direct**: Flat at ~40% throughout

- **Random**: Constant at ~30%

#### Qwen Bayesian

- **Bayesian Assistant**: Starts at ~30%, rises to ~80% by interaction 5

- **Beliefs**: Flat at ~45% throughout

- **Direct**: Flat at ~40% throughout

- **Random**: Constant at ~30%

### Key Observations

1. **Bayesian Assistant Dominance**: Consistently outperforms all other methods across all models and configurations, with accuracy gains accelerating with more interactions

2. **Oracle vs Original**: Oracle configurations show ~5-10 percentage points higher starting accuracy than Original versions, but Bayesian versions close this gap through interaction

3. **Beliefs vs Direct**: Beliefs consistently outperform Direct by ~5-10 percentage points across all models, though both remain flat regardless of interactions

4. **Random Baseline**: All models perform significantly better than the 30% random chance line

5. **Interaction Impact**: Accuracy improvements for Bayesian Assistant are most pronounced between interactions 2-5
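The Beliefs-versus-Direct gap in observation 3 can be checked directly against the flat-line readings listed for each panel. A minimal sketch using those approximate values as given; the third model is keyed as "Qwen", its name in the figure titles.

```python
# Approximate flat-line readings (Beliefs %, Direct %) for each (model, configuration) panel.
readings = {
    ("Gemma", "Original"): (45, 35),
    ("Gemma", "Oracle"): (50, 45),
    ("Gemma", "Bayesian"): (55, 50),
    ("Llama", "Original"): (40, 35),
    ("Llama", "Oracle"): (50, 40),
    ("Llama", "Bayesian"): (55, 50),
    ("Qwen", "Original"): (40, 35),
    ("Qwen", "Oracle"): (45, 40),
    ("Qwen", "Bayesian"): (45, 40),
}

# Gap in percentage points: Beliefs minus Direct for every panel.
gaps = {panel: beliefs - direct for panel, (beliefs, direct) in readings.items()}

print(min(gaps.values()), max(gaps.values()))  # 5 10
print(all(g > 0 for g in gaps.values()))       # True
```

Every panel shows Beliefs ahead of Direct, by 5 to 10 points, consistent with the stated range.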

### Interpretation

The data suggest that Bayesian methods substantially enhance model performance through iterative interactions, with the Bayesian Assistant configuration showing the largest gains. The Oracle configurations suggest that pre-optimized models provide a performance floor, while Bayesian approaches enable continuous improvement. The consistent gap between Beliefs and Direct indicates that belief-based reasoning provides a measurable advantage over direct inference. Qwen's Beliefs and Direct scores are equal to or lower than those of Gemma and Llama in every configuration, while its Bayesian Assistant scores match Gemma's; this may reflect architectural or training-data differences. The gains may also taper off after interaction 3, which would imply diminishing marginal utility of additional interactions.
