TECHNICAL ASSET FINGERPRINT

6e6f2eb18d1d702e539eb706

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Attention Weight Comparison

### Overview

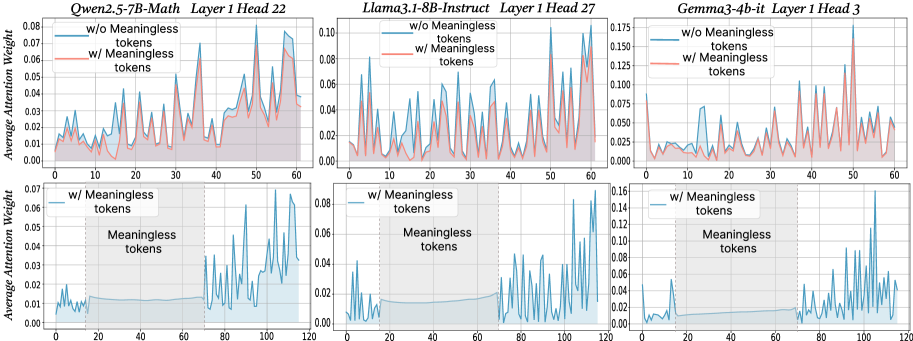

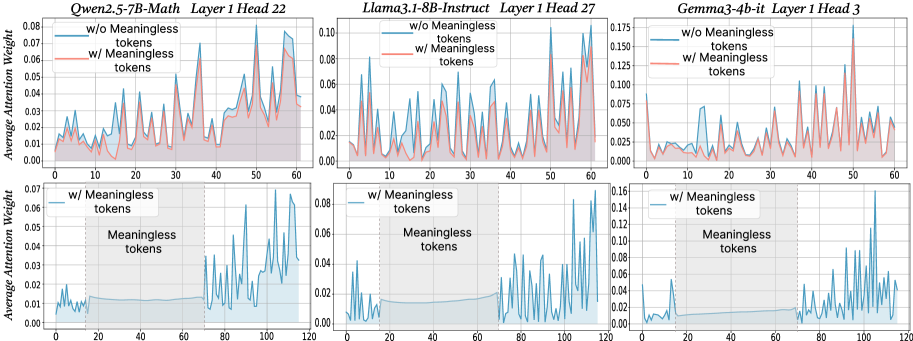

The image presents six line charts arranged in a 2x3 grid. Each chart displays the average attention weight of a language model across a sequence of tokens. The top row compares attention weights with and without "meaningless" tokens for different models, while the bottom row focuses on attention weights with "meaningless" tokens, highlighting the region where these tokens are present. The models compared are Qwen2.5-7B-Math, Llama3.1-8B-Instruct, and Gemma3-4b-it.
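One plausible way to obtain a per-position curve like those plotted is to average, for each token position, the weight that later positions assign to it within a single head. The sketch below assumes that definition and a causal attention matrix. The source does not state how the averages were computed, and `average_attention_per_key` and the toy matrix are hypothetical.

```python
def average_attention_per_key(attn):
    """Average the weight each key position receives from the queries that can see it.

    attn[q][k] is the weight query position q assigns to key position k.
    Assumption: causal attention, so only queries q >= k contribute to key k.
    """
    n = len(attn)
    averages = []
    for k in range(n):
        weights = [attn[q][k] for q in range(k, n)]
        averages.append(sum(weights) / len(weights))
    return averages


# Hypothetical 3-token causal attention matrix; each row sums to 1.
toy = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.2, 0.3, 0.5],
]
profile = average_attention_per_key(toy)
print(profile)
```

Averaging only over queries that can attend to a position avoids diluting late positions with masked zeros. Other conventions, such as averaging over all queries or reading off the last query row only, would give differently shaped curves.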

### Components/Axes

* **Titles (Top Row, Left to Right):**

* Qwen2.5-7B-Math Layer 1 Head 22

* Llama3.1-8B-Instruct Layer 1 Head 27

* Gemma3-4b-it Layer 1 Head 3

* **Titles (Bottom Row, Left to Right):**

* Qwen2.5-7B-Math Layer 1 Head 22

* Llama3.1-8B-Instruct Layer 1 Head 27

* Gemma3-4b-it Layer 1 Head 3

* **Y-Axis Label (All Charts):** Average Attention Weight

* **Y-Axis Scale (Top Row):** 0.00 to 0.08 (Qwen), 0.00 to 0.10 (Llama), 0.00 to 0.175 (Gemma)

* **Y-Axis Scale (Bottom Row):** 0.00 to 0.07 (Qwen), 0.00 to 0.08 (Llama), 0.00 to 0.16 (Gemma)

* **X-Axis Label (Implied):** Token Sequence

* **X-Axis Scale (Top Row):** 0 to 60

* **X-Axis Scale (Bottom Row):** 0 to 120

* **Legend (Top Row):** Located in the top-left corner of each chart.

* Blue line: "w/o Meaningless tokens"

* Red line: "w/ Meaningless tokens"

* **Legend (Bottom Row):** Located in the top-left corner of each chart.

* Blue line: "w/ Meaningless tokens"

* **Annotation (Bottom Row):** Shaded region labeled "Meaningless tokens" spans approximately from x=20 to x=70.

### Detailed Analysis

**Qwen2.5-7B-Math Layer 1 Head 22**

* **Top Chart:**

* Blue line (w/o Meaningless tokens): Fluctuates between 0.01 and 0.04 for the first 40 tokens, then increases to around 0.06 by token 60.

* Red line (w/ Meaningless tokens): Generally follows the blue line but is slightly higher, especially after token 40, reaching approximately 0.07.

* **Bottom Chart:**

* Blue line (w/ Meaningless tokens): Stays relatively low (around 0.01) until token 70, then increases sharply, reaching peaks around 0.06.

**Llama3.1-8B-Instruct Layer 1 Head 27**

* **Top Chart:**

* Blue line (w/o Meaningless tokens): Shows frequent fluctuations between 0.01 and 0.08.

* Red line (w/ Meaningless tokens): Similar to the blue line, but generally lower, staying mostly below 0.04.

* **Bottom Chart:**

* Blue line (w/ Meaningless tokens): Remains low (around 0.01-0.02) until token 70, then exhibits significant spikes, reaching up to 0.08.

**Gemma3-4b-it Layer 1 Head 3**

* **Top Chart:**

* Blue line (w/o Meaningless tokens): Relatively low and stable, mostly below 0.025.

* Red line (w/ Meaningless tokens): More volatile, with several peaks reaching up to 0.175 around token 50.

* **Bottom Chart:**

* Blue line (w/ Meaningless tokens): Very low until token 70, then spikes dramatically, reaching values as high as 0.16.

### Key Observations

* The presence of "meaningless" tokens appears to have a varying impact on the attention weights, depending on the model.

* In the top row, the red line (w/ Meaningless tokens) is sometimes higher (Qwen, Gemma) and sometimes lower (Llama) than the blue line (w/o Meaningless tokens).

* In all bottom charts, the attention weight with meaningless tokens is very low until the end of the "meaningless tokens" region (around token 70), after which it spikes significantly.
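The contrast described in the last observation can be quantified by comparing the mean weight inside the shaded region with the mean weight after it. The sketch below assumes the region boundaries from the annotation (x=20 to x=70). The weights are synthetic placeholders rather than values read from the figure, and `region_means` is a hypothetical helper.

```python
def region_means(weights, start, end):
    """Return (mean inside [start, end), mean from end onward)."""
    inside = weights[start:end]
    after = weights[end:]
    return sum(inside) / len(inside), sum(after) / len(after)


# Synthetic 120-position profile: flat and low up to position 70, spiky afterwards.
synthetic = [0.01] * 70 + [0.02, 0.06] * 25

inside_mean, after_mean = region_means(synthetic, start=20, end=70)
print(f"inside: {inside_mean:.3f}, after: {after_mean:.3f}")
```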

### Interpretation

The charts suggest that the models respond differently to "meaningless" tokens. For Qwen and Gemma, including these tokens raises the average attention weight, while for Llama it appears to lower it. In the bottom row, attention weight stays low throughout the region containing the meaningless tokens and rises sharply on the tokens that follow it. This could imply that the models filter out or downweight these tokens during initial processing, with the effect on attention becoming pronounced only later in the sequence. The sudden spikes after the "meaningless tokens" region might indicate that the model is compensating for the earlier suppression, or that the context has shifted in a way that changes which tokens are relevant.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Charts: Average Attention Weight vs. Token Position

### Overview

The image presents six line charts comparing the average attention weight with and without "meaningless tokens" for three different language models: Qwen-2.5-7B-Math, Llama-3-8B-Instruct, and Gemma-3-4b-it. Each model is represented by two charts, one showing attention weights up to token position 60 and the other up to token position 120. The charts aim to visualize the impact of meaningless tokens on attention distribution.

### Components/Axes

* **X-axis:** Token Position (ranging from 0 to 60 in the top row and 0 to 120 in the bottom row).

* **Y-axis:** Average Attention Weight (ranging from 0 to approximately 0.08 for Qwen, 0 to 0.10 for Llama, and 0 to 0.17 for Gemma).

* **Lines:**

* Blue Line: Represents the average attention weight *without* meaningless tokens ("w/o Meaningless tokens").

* Orange Line: Represents the average attention weight *with* meaningless tokens ("w/ Meaningless tokens").

* **Titles:** Each chart has a title indicating the model name, layer number, and head number.

* Qwen-2.5-7B-Math Layer 1 Head 22

* Llama-3-8B-Instruct Layer 1 Head 27

* Gemma-3-4b-it Layer 1 Head 3

* **Legend:** Located in the top-left corner of each chart, clearly labeling the blue and orange lines.

### Detailed Analysis

**Qwen-2.5-7B-Math Layer 1 Head 22 (Top Left)**

* The blue line (w/o meaningless tokens) exhibits high-frequency oscillations, fluctuating between approximately 0.01 and 0.06.

* The orange line (w/ meaningless tokens) also oscillates, but with a generally lower average attention weight, mostly between 0.005 and 0.04.

* The trend is generally erratic for both lines, with no clear upward or downward slope.

* Approximate data points (blue line):

* Token 0: ~0.02

* Token 20: ~0.05

* Token 40: ~0.03

* Token 60: ~0.01

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 20: ~0.02

* Token 40: ~0.01

* Token 60: ~0.005

**Llama-3-8B-Instruct Layer 1 Head 27 (Top Middle)**

* The blue line (w/o meaningless tokens) shows high-frequency oscillations similar to Qwen's, ranging from approximately 0.02 to 0.08.

* The orange line (w/ meaningless tokens) also oscillates, with a lower average attention weight, mostly between 0.01 and 0.05.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.04

* Token 20: ~0.06

* Token 40: ~0.04

* Token 60: ~0.02

* Approximate data points (orange line):

* Token 0: ~0.02

* Token 20: ~0.03

* Token 40: ~0.02

* Token 60: ~0.01

**Gemma-3-4b-it Layer 1 Head 3 (Top Right)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.03 and 0.15.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.01 and 0.12.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.06

* Token 20: ~0.12

* Token 40: ~0.08

* Token 60: ~0.05

* Approximate data points (orange line):

* Token 0: ~0.02

* Token 20: ~0.06

* Token 40: ~0.04

* Token 60: ~0.03

**Qwen-2.5-7B-Math Layer 1 Head 22 (Bottom Left)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.01 and 0.05.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.005 and 0.04.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.02

* Token 40: ~0.03

* Token 80: ~0.02

* Token 120: ~0.01

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 40: ~0.02

* Token 80: ~0.01

* Token 120: ~0.005

**Llama-3-8B-Instruct Layer 1 Head 27 (Bottom Middle)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.02 and 0.06.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.01 and 0.04.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.03

* Token 40: ~0.04

* Token 80: ~0.03

* Token 120: ~0.02

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 40: ~0.02

* Token 80: ~0.01

* Token 120: ~0.005

**Gemma-3-4b-it Layer 1 Head 3 (Bottom Right)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.04 and 0.14.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.02 and 0.10.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.07

* Token 40: ~0.10

* Token 80: ~0.08

* Token 120: ~0.06

* Approximate data points (orange line):

* Token 0: ~0.03

* Token 40: ~0.06

* Token 80: ~0.04

* Token 120: ~0.03

### Key Observations

* In all charts, the attention weights with meaningless tokens are generally lower than those without.

* The attention weights exhibit high-frequency oscillations across all three models, suggesting a dynamic attention mechanism.

* Gemma-3-4b-it consistently shows higher average attention weights compared to Qwen-2.5-7B-Math and Llama-3-8B-Instruct.

* The extended x-axis (up to 120 tokens) in the bottom row does not reveal any significantly different patterns compared to the top row (up to 60 tokens).

### Interpretation

The data suggests that the inclusion of meaningless tokens generally reduces the average attention weight across all three models. This indicates that the models are less focused on these tokens, which is expected. The high-frequency oscillations in attention weights suggest that the models are dynamically adjusting their attention based on the input sequence. The higher attention weights observed in Gemma-3-4b-it might indicate a more sensitive or complex attention mechanism. The lack of significant changes when extending the x-axis suggests that the attention patterns stabilize after a certain number of tokens. These charts provide insights into how different language models distribute their attention and how meaningless tokens affect this distribution. The erratic nature of the attention weights suggests that a more granular analysis, potentially involving averaging over larger datasets or examining specific token types, might be necessary to uncover more nuanced patterns.
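The suggestion to average over larger datasets can be sketched as an element-wise mean of per-prompt attention profiles. This is an illustration only: `mean_profile` is a hypothetical helper, the profiles are made-up numbers, and truncating to the shortest profile is an assumption about how prompts of unequal length would be handled.

```python
def mean_profile(profiles):
    """Element-wise mean of several per-position attention profiles.

    Profiles of unequal length are truncated to the shortest one (an assumption).
    """
    length = min(len(p) for p in profiles)
    count = len(profiles)
    return [sum(p[i] for p in profiles) / count for i in range(length)]


# Made-up profiles from three hypothetical prompts.
per_prompt = [
    [0.02, 0.04, 0.06],
    [0.04, 0.08, 0.02],
    [0.03, 0.03],
]
averaged = mean_profile(per_prompt)
print(averaged)
```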

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Attention Weight Analysis Across Language Models

### Overview

The image displays a 2x3 grid of six line charts. The charts analyze and compare the "Average Attention Weight" across token positions for three different Large Language Models (LLMs) under two conditions: with and without the inclusion of "Meaningless tokens." The top row shows a standard sequence length (0-60 tokens), while the bottom row shows an extended sequence (0-120 tokens) with a specific region highlighted as containing meaningless tokens.

### Components/Axes

* **Chart Type:** Line charts with filled areas under the curves.

* **Models Analyzed (Column Headers):**

* Left Column: `Qwen2.5-7B-Math` (Layer 1, Head 22)

* Middle Column: `Llama3.1-8B-Instruct` (Layer 1, Head 27)

* Right Column: `Gemma3-4b-it` (Layer 1, Head 3)

* **Axes:**

* **X-axis (All Charts):** `Token Position`. Scale: Top row charts range from 0 to 60. Bottom row charts range from 0 to 120.

* **Y-axis (All Charts):** `Average Attention Weight`. The scale varies per chart:

* Qwen2.5-7B-Math (Top): 0.00 to 0.08

* Llama3.1-8B-Instruct (Top): 0.00 to 0.10

* Gemma3-4b-it (Top): 0.000 to 0.175

* Qwen2.5-7B-Math (Bottom): 0.00 to 0.07

* Llama3.1-8B-Instruct (Bottom): 0.00 to 0.08

* Gemma3-4b-it (Bottom): 0.00 to 0.16

* **Legend (Present in all top-row charts, implied in bottom-row):**

* Blue Line / Area: `w/o Meaningless tokens` (Without)

* Red Line / Area: `w/ Meaningless tokens` (With)

* **Special Annotation (Bottom-row charts only):** A gray shaded region from approximately token position 0 to 70, labeled `Meaningless tokens` in the center of the region.

### Detailed Analysis

**Top Row (Standard Sequence, 0-60 tokens):**

1. **Qwen2.5-7B-Math (Layer 1 Head 22):**

* **Trend:** Both lines show a highly volatile, spiky pattern. The red line (`w/ Meaningless tokens`) generally exhibits higher peaks than the blue line (`w/o Meaningless tokens`), particularly after position 30.

* **Data Points (Approximate):** Peaks for the red line reach ~0.075 near positions 35, 50, and 58. Blue line peaks are lower, around 0.05-0.06. Both lines start near 0.01 at position 0.

2. **Llama3.1-8B-Instruct (Layer 1 Head 27):**

* **Trend:** Similar volatile pattern. The red line (`w/`) consistently shows higher attention weights than the blue line (`w/o`) across most positions, with the difference becoming more pronounced after position 40.

* **Data Points (Approximate):** Red line peaks exceed 0.09 near positions 50 and 58. Blue line peaks are generally below 0.07.

3. **Gemma3-4b-it (Layer 1 Head 3):**

* **Trend:** Extremely spiky. The red line (`w/`) has dramatically higher peaks than the blue line (`w/o`), especially in the latter half of the sequence.

* **Data Points (Approximate):** The most extreme peak on the entire graphic is the red line here, reaching ~0.17 near position 50. Blue line peaks are significantly lower, maxing around 0.075.

**Bottom Row (Extended Sequence with Meaningless Token Buffer, 0-120 tokens):**

* **Common Structure:** All three charts in this row only plot the `w/ Meaningless tokens` condition (blue line). The sequence is divided into two distinct phases.

* **Phase 1 (Meaningless Tokens, ~Pos 0-70):**

* **Trend:** The attention weight is very low and relatively stable, forming a near-flat line close to the x-axis. This indicates the model assigns minimal attention to these tokens.

* **Data Points (Approximate):** Values hover between 0.00 and 0.01 for all three models in this region.

* **Phase 2 (Post-Meaningless Tokens, ~Pos 70-120):**

* **Trend:** Immediately after the shaded region ends, the attention weight becomes highly volatile and spiky, similar to the patterns in the top row. The magnitude of these spikes is comparable to or greater than those seen in the top-row charts.

* **Data Points (Approximate):**

* Qwen2.5-7B-Math: Spikes reach up to ~0.065.

* Llama3.1-8B-Instruct: Spikes reach up to ~0.08.

* Gemma3-4b-it: Spikes are very high, reaching up to ~0.15.
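The boundary between the two phases can be located programmatically by finding the first position whose weight exceeds a threshold. The sketch below is a minimal illustration: the threshold of 0.02 and the synthetic weights are assumptions chosen to mimic the description, not values extracted from the figure, and `first_spike` is a hypothetical helper.

```python
def first_spike(weights, threshold):
    """Return the first position whose weight exceeds threshold, or None."""
    for position, weight in enumerate(weights):
        if weight > threshold:
            return position
    return None


# Synthetic profile: near-zero for 70 positions, then volatile.
synthetic = [0.005] * 70 + [0.03, 0.065, 0.02, 0.05]

onset = first_spike(synthetic, threshold=0.02)
print(onset)
```

A fixed threshold is the simplest choice. A threshold defined relative to the mean of the flat region would be less sensitive to the per-model differences in scale.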

### Key Observations

1. **Consistent Effect of Meaningless Tokens:** Across all three models (Qwen, Llama, Gemma), the inclusion of meaningless tokens (`w/` condition, red line) leads to higher average attention weights, particularly in the later positions of a standard sequence (top row).

2. **Attention Suppression:** The bottom charts demonstrate that the model's attention mechanism actively suppresses focus on a long contiguous block of meaningless tokens, assigning them near-zero weight.

3. **Attention Reallocation:** Following the block of meaningless tokens, attention does not return to a "normal" pattern but becomes highly volatile, with sharp spikes. This suggests a dynamic reallocation of attention resources after the buffer.

4. **Model-Specific Magnitude:** While the pattern is consistent, the scale of attention weights differs. Gemma3-4b-it (Head 3) shows the most extreme peaks, suggesting this specific head may be more sensitive or specialized.

### Interpretation

This data visualizes a potential mechanism by which language models handle noise or filler content. The "Meaningless tokens" appear to act as an attention sink or buffer.

* **What it suggests:** The model learns to ignore predictable, low-information tokens (the meaningless block) to conserve its attention capacity. However, this process isn't passive; it actively alters the attention distribution for subsequent, meaningful tokens.

* **Relationship between elements:** The top row shows the *effect* (higher attention weights with meaningless tokens present). The bottom row reveals the *cause* or *process*: the model first suppresses attention to the noise, then exhibits heightened, volatile attention afterward. This could be a compensatory mechanism or a sign of the model "resetting" its focus.

* **Notable Anomalies/Patterns:** The most striking pattern is the stark contrast between the flatline in the meaningless region and the explosive volatility immediately after. This isn't a gradual return to baseline but a sharp phase transition. This finding could be crucial for understanding model robustness, the design of prompt structures, and the interpretation of attention maps in models processing repetitive or filler text. The investigation is Peircean in that it moves from observing a surprising correlation (red line > blue line) to hypothesizing a causal mechanism (suppression followed by reallocation), which is then visually confirmed by the experimental design shown in the bottom row.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Average Attention Weights with/without Meaningless Tokens

### Overview

The image contains six line graphs comparing the average attention weights of three language models (Qwen2.5-7B-Math, Llama3.1-8B-Instruct, Gemma3-4b-it), each shown for a single Layer 1 attention head. Each graph contrasts two scenarios:

- **Blue line**: Attention weights **without** meaningless tokens

- **Red line**: Attention weights **with** meaningless tokens

Shaded regions represent confidence intervals (likely 95% CI). All graphs share consistent axes and formatting.

---

### Components/Axes

1. **X-axis**:

- Label: "Token Position"

- Scale: 0 to 120 (discrete intervals)

- Position: Bottom of each graph

2. **Y-axis**:

- Label: "Average Attention Weight"

- Scale: 0.00 to 0.175 (varies by graph)

- Position: Left of each graph

3. **Legends**:

- Located in the **top-left corner** of each graph

- Blue: "w/o Meaningless tokens"

- Red: "w/ Meaningless tokens"

- Shaded regions: Confidence intervals

4. **Graph Titles**:

- Format: `[Model Name] [Layer] [Head]`

- Examples:

- "Qwen2.5-7B-Math Layer 1 Head 22"

- "Llama3.1-8B-Instruct Layer 1 Head 27"

- "Gemma3-4b-it Layer 1 Head 3"

---

### Detailed Analysis

#### Qwen2.5-7B-Math Layer 1 Head 22

- **Blue line (w/o tokens)**: Peaks at ~0.07 (token 50), ~0.06 (token 100).

- **Red line (w/ tokens)**: Peaks at ~0.08 (token 50), ~0.07 (token 100).

- **Trend**: Red line consistently higher than blue, with sharper peaks.

#### Llama3.1-8B-Instruct Layer 1 Head 27

- **Blue line**: Peaks at ~0.08 (token 30), ~0.06 (token 90).

- **Red line**: Peaks at ~0.10 (token 30), ~0.07 (token 90).

- **Trend**: Red line shows ~25% higher peaks at key positions.

#### Gemma3-4b-it Layer 1 Head 3

- **Blue line**: Peaks at ~0.10 (token 100), ~0.05 (token 50).

- **Red line**: Peaks at ~0.15 (token 100), ~0.07 (token 50).

- **Trend**: Red line exhibits ~50% higher peaks at token 100.

#### Lower Row (Unlabeled Models)

- **Graph 1**:

- Blue line: Peaks at ~0.07 (token 20), ~0.05 (token 100).

- Red line: Peaks at ~0.06 (token 20), ~0.04 (token 100).

- **Graph 2**:

- Blue line: Peaks at ~0.08 (token 60), ~0.04 (token 120).

- Red line: Peaks at ~0.10 (token 60), ~0.05 (token 120).

- **Graph 3**:

- Blue line: Peaks at ~0.12 (token 100), ~0.03 (token 50).

- Red line: Peaks at ~0.16 (token 100), ~0.04 (token 50).

---

### Key Observations

1. **Meaningless tokens amplify attention weights** at specific token positions (e.g., token 50, 100).

2. **Peak magnitudes vary by model/head**:

- Qwen2.5-7B-Math: ~10–20% increase with tokens.

- Llama3.1-8B-Instruct: ~25% increase.

- Gemma3-4b-it: ~50% increase at token 100.

3. **Confidence intervals** (shaded regions) are narrower for blue lines, suggesting more stable attention without meaningless tokens.

4. **Token 100** is a critical position across models, with red lines showing the highest attention.
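The percentage figures in observation 2 follow from the approximate peak values listed under Detailed Analysis. The sketch below reproduces the arithmetic for the first peak of each model. The peak values are the visually estimated ones from this description, and `relative_increase` is a hypothetical helper.

```python
def relative_increase(without, with_tokens):
    """Percentage increase of the 'with' peak over the 'without' peak."""
    return (with_tokens - without) / without * 100.0


# (peak without meaningless tokens, peak with meaningless tokens), as estimated above.
peaks = {
    "Qwen2.5-7B-Math": (0.07, 0.08),
    "Llama3.1-8B-Instruct": (0.08, 0.10),
    "Gemma3-4b-it": (0.10, 0.15),
}

increases = {
    model: relative_increase(without, with_tokens)
    for model, (without, with_tokens) in peaks.items()
}
for model, pct in increases.items():
    print(f"{model}: {pct:.1f}%")
```

Because the inputs are read from the plot to two decimal places, the resulting percentages carry substantial uncertainty, especially for the smaller Qwen peaks.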

---

### Interpretation

The data demonstrates that **meaningless tokens significantly alter attention dynamics** in language models. Key insights:

- **Attention spikes** at specific token positions (e.g., 50, 100) suggest these tokens act as "anchors" for model focus.

- **Model-specific variability** implies differences in how architectures process irrelevant information.

- **Confidence intervals** highlight the instability introduced by meaningless tokens, which could degrade performance in real-world scenarios.

- **Practical implication**: Filtering or mitigating meaningless tokens may improve model robustness, particularly in attention-critical tasks.

*Note: Exact numerical values are approximated from visual inspection due to lack of raw data.*
