## Residual Network Block Diagram: Architecture Overview

### Overview

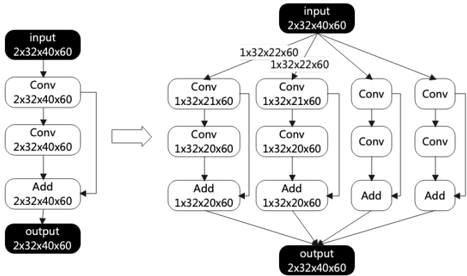

The diagram illustrates a residual network (ResNet) block architecture, showing the flow of data through convolutional layers, addition operations, and dimensional transformations. The structure emphasizes skip connections (shortcut paths) that merge with expanded feature maps to produce the final output.

### Components

- **Input**: `2x32x40x60` (batch size, channels, height, width)

- **Conv Layers**:

  - Left path: Two `Conv` operations maintaining `2x32x40x60` dimensions.

  - Right path: Four parallel branches of `Conv` operations that reduce height while keeping width at 60:

    - `1x32x22x60` → `1x32x20x60` in each branch (via two `Conv` layers).

- **Add Operations**: Element-wise addition of feature maps.

- **Output**: `2x32x40x60` (matches input dimensions via skip connection).
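The shape changes listed above follow from the standard convolution output-size formula. The sketch below is a minimal illustration, assuming 3x3 kernels with stride 1; the diagram does not state kernel sizes, strides, or padding, so those values and the helper name `conv_out_size` are hypothetical.

```python
def conv_out_size(size: int, kernel: int, stride: int = 1,
                  padding: int = 0, dilation: int = 1) -> int:
    """Output length along one spatial axis of a convolution."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


# Assumed kernel size 3 and stride 1 (not stated in the diagram).
same_height = conv_out_size(40, kernel=3, padding=1)     # padded: height preserved
reduced_height = conv_out_size(22, kernel=3, padding=0)  # unpadded: height shrinks by 2
same_width = conv_out_size(60, kernel=3, padding=1)      # padded: width preserved

print(same_height, reduced_height, same_width)
```

Under these assumptions, a padded convolution keeps 40 at 40, while an unpadded one along the height axis takes 22 to 20, which is consistent with the shapes labeled in the right path.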

### Detailed Analysis

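1. **Left Path (Shortcut)**:

   - Input → `Conv(2x32x40x60)` → `Conv(2x32x40x60)` → `Add` → Output.

   - Dimensions remain unchanged at `2x32x40x60`. Because this path contains two `Conv` operations, it is not a pure identity mapping; the convolutions must be shape-preserving (for example, through padding).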

2. **Right Path (Expansion)**:

   - Input splits into four branches:

     - **Branch 1**: `Conv(1x32x22x60)` → `Conv(1x32x20x60)` → `Add` → `1x32x20x60`.

     - **Branch 2**: `Conv(1x32x22x60)` → `Conv(1x32x20x60)` → `Add` → `1x32x20x60`.

     - **Branch 3**: `Conv(1x32x22x60)` → `Conv(1x32x20x60)` → `Add` → `1x32x20x60`.

     - **Branch 4**: `Conv(1x32x22x60)` → `Conv(1x32x20x60)` → `Add` → `1x32x20x60`.

   - Branches merge via element-wise addition to produce `1x32x20x60`.

3. **Final Merge**:

   - Right path output (`1x32x20x60`) is upsampled or transformed (implied but not explicitly labeled) to match the left path's `2x32x40x60` dimensions.

   - Merged with left path output via `Add` to produce final `2x32x40x60` output.
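The final merge only works if the two operands of `Add` have compatible shapes. The following sketch checks this with a small broadcasting helper; `broadcast_shape` is a hypothetical function written for illustration, and it assumes NumPy-style broadcasting semantics, which the diagram does not confirm.

```python
from math import prod


def broadcast_shape(a, b):
    """Return the broadcast shape of two equal-rank shapes, or None if incompatible."""
    if len(a) != len(b):
        return None
    out = []
    for x, y in zip(a, b):
        if x == y or y == 1:
            out.append(x)
        elif x == 1:
            out.append(y)
        else:
            return None  # sizes differ and neither is 1
    return tuple(out)


left = (2, 32, 40, 60)   # left path output
right = (1, 32, 20, 60)  # right path output as labeled in the diagram

# Heights 40 and 20 are incompatible, so a direct Add is not possible.
direct = broadcast_shape(left, right)

# After restoring height to 40 (assumed transformation), the batch axis broadcasts.
restored = broadcast_shape(left, (1, 32, 40, 60))

# Four tensors of the right-path shape hold as many elements as the output.
same_element_count = 4 * prod(right) == prod(left)

print(direct, restored, same_element_count)
```

The direct addition is rejected, which is why some unlabeled transformation must sit between the right path and the final `Add`. The matching element count shows only that stacking four `1x32x20x60` tensors would also fill a `2x32x40x60` output; the diagram does not indicate which mechanism is used.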

### Key Observations

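- **Dimensional Consistency**: The left path preserves the input dimensions (`2x32x40x60`). The right path reduces height (40→22→20) while keeping 32 channels and width 60; its tensors have a leading (batch) dimension of 1 rather than 2, which is not a change in channel depth.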

- **Skip Connection**: The shortcut path gives gradients a more direct route through the network, which mitigates vanishing gradient issues.

- **Feature Aggregation**: Four parallel `Conv` branches suggest feature diversification before merging.
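The gradient-flow point can be illustrated with a toy model. The sketch below assumes a chain of scalar layers, each with the same small local derivative; it is not a model of the block in the diagram, and `chain_gradient` is a hypothetical helper.

```python
def chain_gradient(local_grads, residual: bool) -> float:
    """Multiply per-layer derivatives along a chain of scalar layers.

    Plain layer:    y = F(x)      -> dy/dx = F'(x)
    Residual layer: y = x + F(x)  -> dy/dx = 1 + F'(x)
    """
    total = 1.0
    for g in local_grads:
        total *= (1.0 + g) if residual else g
    return total


# 50 layers, each with an assumed local derivative F'(x) = 0.01.
grads = [0.01] * 50
plain = chain_gradient(grads, residual=False)  # product of small factors
resid = chain_gradient(grads, residual=True)   # each factor is at least 1

print(plain, resid)
```

In the plain chain the product collapses toward zero, while the residual chain keeps every factor at or above 1 in this setup, so the gradient reaching the early layers does not vanish.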

### Interpretation

This ResNet block demonstrates the core innovation of residual networks: **learning residual functions** instead of raw outputs. The skip connection (the left path) carries the input forward while the right path learns a transformation that is added to it, so deeper architectures can be trained without the accuracy degradation seen in plain stacked networks. The four-branch design on the right may capture complementary features, although all four branches share the same tensor shapes in the diagram, and the implied final transformation restores the spatial resolution needed to merge with the shortcut path. This pattern underlies very deep networks such as the 152-layer ResNet-152.
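For reference, the residual formulation can be written in its usual form. The symbols $x$, $y$, $\mathcal{F}$, and $W$ are introduced here for illustration and do not appear in the diagram:

$$
y = \mathcal{F}(x; W) + x
$$

Here $x$ is the block input, $\mathcal{F}$ is the transformation learned by the convolutional path with weights $W$, and $y$ is the block output. If the best mapping for a block is close to the identity, the weights only need to drive $\mathcal{F}(x; W)$ toward zero, which is generally easier than fitting an identity mapping with a stack of nonlinear layers. The addition requires $\mathcal{F}(x; W)$ and $x$ to have the same shape, which is why the output here matches the input's `2x32x40x60`.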