
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


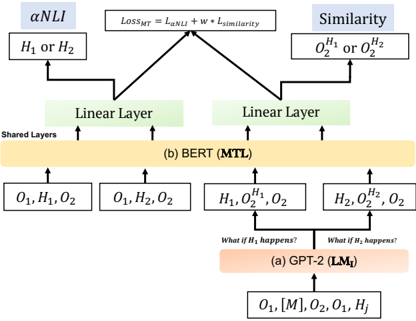

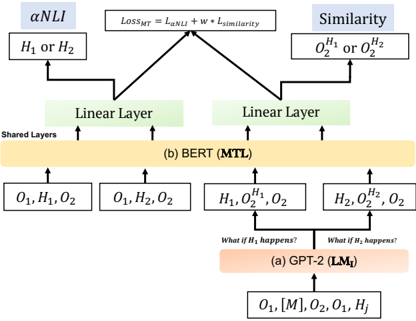

## Diagram: Model Architecture Comparison

### Overview

The image presents a diagram of a two-stage architecture that combines GPT-2 (LM₁) and BERT (MTL). It illustrates how the two models process input and generate outputs, highlighting the flow of information and the layers involved. The diagram focuses on how the architecture handles the αNLI (abductive Natural Language Inference) and Similarity tasks.

### Components/Axes

* **Title:** The diagram labels its two components (a) GPT-2 (LM₁) and (b) BERT (MTL).

* **Top:** The loss function `LOSS_MT = L_αNLI + w * L_similarity` appears at the top; it is the combined loss used for multi-task learning.

* **Left Branch:** Represents the αNLI task, outputting `H₁ or H₂`.

* **Right Branch:** Represents the Similarity task, outputting `O₂^H₁ or O₂^H₂`.

* **Middle Section:** Shows the shared layers and the BERT (MTL) model.

* **Bottom Section:** Shows the GPT-2 (LM₁) model and its input.

* **Arrows:** Indicate the flow of information.

* **Boxes:** Represent layers or data representations.

### Detailed Analysis

1. **GPT-2 (LM₁) - Bottom Section:**

* Input: `O₁, [M], O₂, O₁, Hⱼ`

* Process: The input goes into the GPT-2 (LM₁) model, labeled as `(a) GPT-2 (LM₁)`.

* Conditional Statements: Two branches emerge from the GPT-2 block, labeled "What if H₁ happens?" and "What if H₂ happens?".

* Outputs: These branches feed into the BERT (MTL) layer with inputs `H₁, O₂^H₁, O₂` and `H₂, O₂^H₂, O₂` respectively.

2. **BERT (MTL) - Middle Section:**

* Input from GPT-2: `H₁, O₂^H₁, O₂` and `H₂, O₂^H₂, O₂`

* Input directly: `O₁, H₁, O₂` and `O₁, H₂, O₂`

* Process: The inputs are processed by the BERT (MTL) model, labeled as `(b) BERT (MTL)`. This layer is marked as "Shared Layers".

* Outputs: The BERT layer feeds into two "Linear Layer" blocks.

3. **Linear Layers:**

* Two "Linear Layer" blocks receive input from the BERT (MTL) layer.

* One linear layer feeds the αNLI task and the other feeds the Similarity task.

4. **αNLI and Similarity - Top Section:**

* αNLI: Outputs `H₁ or H₂`.

* Similarity: Outputs `O₂^H₁ or O₂^H₂`.

* Loss Function: The outputs of these tasks are combined using the loss function `LOSS_MT = L_αNLI + w * L_similarity`.
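The loss at the top of the diagram can be made concrete with a minimal sketch that computes `LOSS_MT = L_αNLI + w * L_similarity` for one example. The diagram does not say how each task loss is computed, so the sketch assumes that each task is a two-way choice (H₁ vs H₂, and O₂^H₁ vs O₂^H₂). It also assumes that each choice is scored by its linear layer and trained with softmax cross-entropy. The function names are hypothetical.

```python
import math


def two_way_cross_entropy(score_1: float, score_2: float, gold: int) -> float:
    """Softmax cross-entropy over two candidate scores; gold is 0 or 1 (assumed loss form)."""
    # log-sum-exp with max subtraction for numerical stability
    m = max(score_1, score_2)
    log_z = m + math.log(math.exp(score_1 - m) + math.exp(score_2 - m))
    gold_score = score_1 if gold == 0 else score_2
    return log_z - gold_score


def multitask_loss(nli_scores, nli_gold, sim_scores, sim_gold, w=1.0):
    """LOSS_MT = L_aNLI + w * L_similarity, with both terms as two-way cross-entropy."""
    l_anli = two_way_cross_entropy(*nli_scores, nli_gold)
    l_sim = two_way_cross_entropy(*sim_scores, sim_gold)
    return l_anli + w * l_sim


# With equal scores each task contributes ln 2, so the total is (1 + w) * ln 2
loss = multitask_loss((0.0, 0.0), 0, (0.0, 0.0), 1, w=0.5)
print(round(loss, 4))  # 1.0397
```

Setting `w = 0` reduces the objective to the αNLI loss alone. Larger `w` gives the Similarity task more influence.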

### Key Observations

* The diagram illustrates a multi-task learning approach where GPT-2 generates conditional inputs for BERT.

* BERT provides the layers shared by both tasks. It processes both the direct inputs and the inputs built from GPT-2's output.

* The final loss function combines the losses from the αNLI and Similarity tasks.

### Interpretation

The diagram demonstrates a model architecture that leverages both GPT-2 and BERT for the αNLI and Similarity tasks. GPT-2 generates one conditional input per hypothesis (H₁ or H₂), letting the model explore what would follow under each scenario. BERT then processes these inputs, along with the direct inputs, to perform the αNLI and Similarity tasks. The combined loss function trains the shared layers on both tasks at once. This architecture suggests a way to combine the strengths of different pre-trained models for improved performance on complex NLP tasks.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite


## Diagram: Multi-Task Learning Framework with BERT and GPT-2

### Overview

This diagram illustrates a multi-task learning (MTL) framework that uses both BERT and GPT-2 models. The framework computes a total loss, `LOSS_MT`, which is a weighted sum of two components: `L_αNLI` and `L_similarity`. BERT receives two kinds of input: sequences built directly from the observations and hypotheses, and sequences built from GPT-2's outputs. From these it produces distinct outputs for the αNLI (abductive Natural Language Inference) task and a similarity task.

### Components/Axes

This diagram does not contain traditional axes or scales. The components are represented by labeled boxes and arrows indicating data flow.

**Key Components and Labels:**

* **Top Section:**

* **αNLI (Blue Box):** Represents the output or task related to Natural Language Inference.

* **Similarity (Blue Box):** Represents the output or task related to similarity.

* **LOSS_MT = L_αNLI + W * L_similarity (White Box):** This box defines the total loss function, indicating it's a weighted sum of the NLI loss (`L_αNLI`) and the similarity loss (`L_similarity`), where `W` is a weighting factor.

* **H₁ or H₂ (White Box):** Represents the αNLI prediction, a choice between hypothesis 1 and hypothesis 2.

* **O₂<sup>H₁</sup> or O₂<sup>H₂</sup> (White Box):** Represents the Similarity prediction, a choice between the second observations generated under hypothesis 1 and hypothesis 2.

* **Middle Section:**

* **Linear Layer (Green Boxes, two instances):** These are processing layers that take input from the BERT (MTL) component and transform it before feeding into the αNLI and Similarity tasks.

* **Shared Layers (Text Label):** Indicates that the layers of the BERT (MTL) component are shared by both tasks.

* **(b) BERT (MTL) (Orange Box):** Represents the BERT model configured for Multi-Task Learning. It receives various combinations of inputs.

* **Bottom Section:**

* **Input Boxes to BERT (MTL):**

* `O₁, H₁, O₂`

* `O₁, H₂, O₂`

* `H₁, O₂<sup>H₁</sup>, O₂`

* `H₂, O₂<sup>H₂</sup>, O₂`

* **(a) GPT-2 (LMᵢ) (Orange Box):** Represents the GPT-2 model acting as a Language Model (LMᵢ). It receives an initial input and branches out based on conditional questions.

* **Input Box to GPT-2 (LMᵢ):**

* `O₁, [M], O₂, O₁, Hⱼ`

* **Conditional Questions:**

* "What if H₁ happens?" (Text Label)

* "What if H₂ happens?" (Text Label)

**Arrows:** Arrows indicate the direction of data flow between components.

### Detailed Analysis or Content Details

The diagram outlines a process where:

1. **GPT-2 (LMᵢ)** processes an initial input `O₁, [M], O₂, O₁, Hⱼ`.

2. Based on the processing of GPT-2, two conditional paths emerge: "What if H₁ happens?" and "What if H₂ happens?". Each path appears to produce a second observation conditioned on its hypothesis, O₂<sup>H₁</sup> or O₂<sup>H₂</sup>.

3. These conditional outputs from GPT-2, along with other inputs, are fed into **BERT (MTL)**. Specifically, the diagram shows four distinct input combinations to BERT:

* `O₁, H₁, O₂`

* `O₁, H₂, O₂`

* `H₁, O₂<sup>H₁</sup>, O₂`

* `H₂, O₂<sup>H₂</sup>, O₂`

It's implied that the "What if H₁ happens?" condition influences the inputs `H₁, O₂<sup>H₁</sup>, O₂`, and similarly for "What if H₂ happens?" influencing `H₂, O₂<sup>H₂</sup>, O₂`.

4. The **BERT (MTL)** component, operating on shared layers, produces outputs that are then passed through two separate **Linear Layers**.

5. One **Linear Layer** takes input from BERT and produces an output that feeds into the **αNLI** task, represented by `H₁ or H₂`.

6. The other **Linear Layer** takes input from BERT and produces an output that feeds into the **Similarity** task, represented by `O₂<sup>H₁</sup> or O₂<sup>H₂</sup>`.

7. Finally, the outputs from these two paths are used to calculate the total loss `LOSS_MT = L_αNLI + W * L_similarity`.
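The four BERT inputs listed in steps 1 to 3 can be assembled as plain text. This sketch makes two assumptions: a BERT-style `[SEP]` separator between segments, and invented example sentences; neither comes from the diagram. The sketch keeps the direct sequences and the GPT-2-conditioned sequences apart. Pairing the direct sequences with αNLI and the conditioned ones with Similarity is only a reading of the output boxes.

```python
from typing import List, Tuple

SEP = " [SEP] "  # assumed separator; the diagram only shows comma-separated segments


def build_bert_inputs(o1: str, o2: str, h1: str, h2: str,
                      o2_h1: str, o2_h2: str) -> Tuple[List[str], List[str]]:
    """Return (direct_inputs, conditioned_inputs) for one example.

    o2_h1 / o2_h2 stand for O2^H1 / O2^H2, the second observation that
    GPT-2 generated when asked "What if H1 / H2 happens?".
    """
    # Direct sequences: O1, Hj, O2
    direct_inputs = [SEP.join([o1, h, o2]) for h in (h1, h2)]
    # GPT-2-conditioned sequences: Hj, O2^Hj, O2
    conditioned_inputs = [SEP.join([h, g, o2]) for h, g in ((h1, o2_h1), (h2, o2_h2))]
    return direct_inputs, conditioned_inputs


direct, conditioned = build_bert_inputs(
    o1="Ann went hiking.", o2="She came home soaked.",
    h1="It started to rain.", h2="She bought a new map.",
    o2_h1="She got caught in a downpour.", o2_h2="She found a new trail.")
print(direct[0])  # Ann went hiking. [SEP] It started to rain. [SEP] She came home soaked.
```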

### Key Observations

* **Modular Design:** The framework clearly separates the roles of GPT-2 and BERT, with GPT-2 potentially handling initial sequence generation or contextualization, and BERT performing multi-task learning on enriched inputs.

* **Conditional Processing:** The "What if H₁ happens?" and "What if H₂ happens?" labels suggest a conditional or counterfactual reasoning mechanism within the GPT-2 component that influences the subsequent BERT processing.

* **Task Specialization:** The two linear layers act as task-specific heads, adapting the shared BERT representations for the distinct αNLI and Similarity tasks.

* **Loss Combination:** The final loss function explicitly combines losses from two different tasks, a hallmark of multi-task learning, aiming to improve generalization by learning related tasks simultaneously.

### Interpretation

This diagram depicts a sophisticated neural network architecture designed for multi-task learning, likely in the domain of natural language understanding. The framework leverages the strengths of both GPT-2 and BERT.

* **GPT-2's Role:** GPT-2, often used for generative tasks, appears to be employed here to generate or condition inputs based on hypothetical scenarios (`H₁` or `H₂`). This suggests a capability to explore different linguistic possibilities or generate context that is then fed into BERT. The notation `LMᵢ` implies it's acting as a language model, possibly providing contextual embeddings or sequences. The input `O₁, [M], O₂, O₁, Hⱼ` to GPT-2 suggests it's processing a sequence of tokens, where `[M]` might represent a mask or a special token.

* **BERT's Role:** BERT, known for its strong performance in understanding contextual relationships, acts as the core multi-task learning backbone. It receives diverse inputs, potentially enriched by GPT-2's conditional processing, and its shared layers learn representations beneficial for both downstream tasks. The different input combinations to BERT (`O₁, H₁, O₂`, `O₁, H₂, O₂`, etc.) indicate that the model is trained on various configurations of observations (`O`) and hypotheses (`H`).

* **Task Integration:** The two linear layers serve as bridges, transforming the general-purpose BERT representations into task-specific outputs. In the αNLI (abductive NLI) task, the model chooses which of two hypotheses, `H₁` or `H₂`, better explains how observation `O₁` leads to observation `O₂`. This is a two-way choice rather than the entailment/contradiction/neutral labeling of standard NLI. The Similarity task outputs O₂<sup>H₁</sup> or O₂<sup>H₂</sup>, so it probably judges which generated observation is closer to the actual `O₂`.

* **Learning Objective:** The `LOSS_MT` equation signifies that the model is optimized to perform well on both the αNLI and similarity tasks simultaneously. By learning these related tasks together, the model can potentially achieve better performance and generalization than if trained on each task in isolation. The weighting factor `W` allows for tuning the relative importance of each task during training.

In essence, this framework appears to be designed to handle complex natural language tasks that require understanding context, generating hypothetical scenarios, and performing inference or similarity judgments, all within a unified learning objective. The conditional processing by GPT-2 adds a layer of flexibility, allowing the model to explore different linguistic pathways before BERT extracts shared representations for multiple tasks.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Multi-Task Learning Architecture with BERT and GPT-2

### Overview

The image depicts a diagram illustrating a multi-task learning (MTL) architecture combining BERT and GPT-2 models. The diagram shows the flow of data through these models for two tasks: αNLI (abductive Natural Language Inference) and Similarity. It highlights the BERT layers shared between the two tasks and the loss function used for multi-task learning.

### Components/Axes

The diagram consists of the following components:

* **BERT (MTL):** Represented by a large, light-orange rectangular block labeled "(b) BERT (MTL)". This block signifies the shared layers of the BERT model used for multi-task learning.

* **GPT-2 (LM<sub>T</sub>):** Represented by a large, light-red rectangular block labeled "(a) GPT-2 (LM<sub>T</sub>)". This block signifies the GPT-2 model used for language modeling.

* **Linear Layers:** Two green rectangular blocks labeled "Linear Layer" positioned above the BERT block. These layers are specific to each task.

* **Tasks:** Two tasks are represented:

* **αNLI:** Labeled "αNLI" in a light-blue rectangular block.

* **Similarity:** Labeled "Similarity" in a light-blue rectangular block.

* **Input Data:** Various input sequences are represented as:

* O<sub>1</sub>, H<sub>1</sub>, O<sub>2</sub>

* O<sub>1</sub>, H<sub>2</sub>, O<sub>2</sub>

* H<sub>1</sub>, O<sub>2</sub><sup>H<sub>1</sub></sup>, O<sub>2</sub>

* H<sub>2</sub>, O<sub>2</sub><sup>H<sub>2</sub></sup>, O<sub>2</sub>

* O<sub>1</sub>, [M], O<sub>2</sub>, O<sub>1</sub>, H<sub>j</sub>

* **Loss Function:** A rectangular block labeled "Loss<sub>MT</sub> = L<sub>αNLI</sub> + W * L<sub>similarity</sub>".

* **Questions:** Two questions are written above the GPT-2 block: "What if H<sub>1</sub> happens?" and "What if H<sub>2</sub> happens?".

### Detailed Analysis or Content Details

The diagram illustrates the following data flow:

1. **GPT-2 Branch:**

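* Input: O<sub>1</sub>, [M], O<sub>2</sub>, O<sub>1</sub>, H<sub>j</sub> is fed into the GPT-2 model.

* Output: For each hypothesis, GPT-2 generates a second observation, O<sub>2</sub><sup>H<sub>1</sub></sup> or O<sub>2</sub><sup>H<sub>2</sub></sup>.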

* Questions: The questions "What if H<sub>1</sub> happens?" and "What if H<sub>2</sub> happens?" are posed, suggesting conditional generation or reasoning.

2. **BERT Branch:**

* Input: Four different input sequences are fed into the BERT model: O<sub>1</sub>, H<sub>1</sub>, O<sub>2</sub>; O<sub>1</sub>, H<sub>2</sub>, O<sub>2</sub>; H<sub>1</sub>, O<sub>2</sub><sup>H<sub>1</sub></sup>, O<sub>2</sub>; H<sub>2</sub>, O<sub>2</sub><sup>H<sub>2</sub></sup>, O<sub>2</sub>.

* Shared Layers: These inputs pass through the shared layers of the BERT model.

* Linear Layers: The outputs from the shared layers are then fed into two separate linear layers, one for each task.

3. **Tasks and Loss Function:**

* αNLI: The output of the first linear layer is used for the αNLI task, whose prediction is H<sub>1</sub> or H<sub>2</sub>.

* Similarity: The output of the second linear layer is used for the Similarity task, whose prediction is O<sub>2</sub><sup>H<sub>1</sub></sup> or O<sub>2</sub><sup>H<sub>2</sub></sup>.

* Loss Function: The overall loss function (Loss<sub>MT</sub>) is a weighted sum of the αNLI loss (L<sub>αNLI</sub>) and the similarity loss (L<sub>similarity</sub>), with 'W' being the weight for the similarity loss.
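The split between shared layers and task-specific linear layers can be sketched without any framework. In this toy version, a single `tanh` layer stands in for the shared BERT layers; that substitution is an assumption made so the sketch runs dependency-free. Each head emits one score per input sequence. All class and method names are hypothetical.

```python
import math
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def linear(x: Vector, weights: Matrix, bias: Vector) -> Vector:
    """y = W x + b, with one row of W per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class SharedEncoderTwoHeads:
    """Toy model: one shared layer feeding two task-specific linear heads."""

    def __init__(self, in_dim: int, hidden: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # Shared parameters (stand-in for the BERT (MTL) layers)
        self.shared_w, self.shared_b = random_matrix(hidden, in_dim, rng), [0.0] * hidden
        # Task-specific heads, one scalar score each
        self.anli_w, self.anli_b = random_matrix(1, hidden, rng), [0.0]
        self.sim_w, self.sim_b = random_matrix(1, hidden, rng), [0.0]

    def encode(self, x: Vector) -> Vector:
        # Shared representation used by both heads
        return [math.tanh(v) for v in linear(x, self.shared_w, self.shared_b)]

    def anli_score(self, x: Vector) -> float:
        return linear(self.encode(x), self.anli_w, self.anli_b)[0]

    def similarity_score(self, x: Vector) -> float:
        return linear(self.encode(x), self.sim_w, self.sim_b)[0]


model = SharedEncoderTwoHeads(in_dim=4, hidden=3)
x = [0.1, 0.2, 0.3, 0.4]  # stand-in for an encoded input sequence
print(model.anli_score(x), model.similarity_score(x))
```

Both heads call `encode`, so training on either task's loss would update `shared_w`. Only the head weights are specific to one task.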

### Key Observations

* The diagram emphasizes that the BERT layers are shared by both tasks, which is a key characteristic of multi-task learning.

* The GPT-2 model appears to generate a second observation under each hypothesis (H<sub>1</sub> and H<sub>2</sub>). This observation is then used as input to the BERT model for the αNLI and Similarity tasks.

* The loss function explicitly shows the weighting between the two tasks, allowing for control over the relative importance of each task during training.

### Interpretation

This diagram illustrates a multi-task learning approach that leverages the strengths of both BERT and GPT-2. GPT-2 generates the observation that would follow each hypothesis, while BERT performs the downstream αNLI and similarity tasks. The shared layers in BERT allow for knowledge transfer between the two tasks, potentially improving performance on both. The weighted loss function provides a mechanism for balancing the contributions of each task to the overall learning process. The questions posed above the GPT-2 block suggest a focus on counterfactual reasoning or exploring different scenarios. This architecture is likely designed to improve the robustness and generalization ability of the model by training it on multiple related tasks simultaneously. The [M] in the GPT-2 input is likely a special marker token; the diagram does not define it.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Multi-Task Learning Architecture with BERT and GPT-2

### Overview

The image is a technical flowchart illustrating a neural network architecture for multi-task learning (MTL). It depicts a pipeline where a GPT-2 model generates one hypothetical outcome per hypothesis. A shared BERT-based encoder then processes these outcomes to perform two distinct tasks: αNLI (abductive Natural Language Inference) and a Similarity task. The architecture is trained using a combined loss function.

### Components/Axes

The diagram is structured vertically, flowing from bottom (input) to top (output/loss). It contains the following labeled components, arranged spatially:

**Bottom Region (Input & Hypothesis Generation):**

* **Input Sequence:** `O₁, [M], O₂, O₁, H_j` (Located at the very bottom center).

* **Model Block (a):** Labeled `(a) GPT-2 (LM_t)`. This is an orange rectangular block.

* **Hypothesis Branches:** Two arrows point upward from the GPT-2 block, each associated with a text query:

* Left arrow: `What if H₂ happens?`

* Right arrow: `What if H₂ happens?` (Note: The text is identical for both arrows in the image).

* **Branch Outputs:** These arrows lead to two boxes:

* Left box: `H₁, O₂^{H₁}, O₂`

* Right box: `H₂, O₂^{H₂}, O₂`

**Middle Region (Shared Encoder):**

* **Model Block (b):** A large yellow rectangular block labeled `(b) BERT (MTL)`. The text `Shared Layers` is written vertically along its left edge.

* **Input Feeds:** Four arrows feed into the BERT block from below:

1. From the far left: `O₁, H₁, O₂`

2. From the center-left: `O₁, H₂, O₂`

3. From the center-right: `H₁, O₂^{H₁}, O₂` (from the left GPT-2 output box)

4. From the far right: `H₂, O₂^{H₂}, O₂` (from the right GPT-2 output box)

* **Linear Layers:** Above the BERT block, two separate green rectangular blocks are labeled `Linear Layer`. Each receives an arrow from the BERT block.

**Top Region (Tasks & Loss):**

* **Task 1 (αNLI):** Located at the top left.

* A light blue box labeled `αNLI`.

* Below it, a white box containing `H₁ or H₂`.

* An arrow connects the left Linear Layer to this task.

* **Task 2 (Similarity):** Located at the top right.

* A light blue box labeled `Similarity`.

* Below it, a white box containing `O₂^{H₁} or O₂^{H₂}`.

* An arrow connects the right Linear Layer to this task.

* **Loss Function:** Centered at the very top.

* A white box containing the equation: `Loss_MTL = L_{αNLI} + w * L_{Similarity}`.

* Arrows from both the αNLI and Similarity task boxes point to this loss function box.

### Detailed Analysis

The diagram explicitly details the data flow and transformations:

1. **Input:** The process starts with a sequence containing observations (`O₁`, `O₂`), a special token (`[M]`), and a hypothesis (`H_j`).

2. **Conditional Generation:** The GPT-2 model (`LM_t`) is queried once per hypothesis ("What if `H_j` happens?") and generates the conditional observations `O₂^{H₁}` and `O₂^{H₂}`. The hypotheses `H₁` and `H₂` themselves are part of the input rather than generated.

3. **Shared Encoding:** Four different input combinations are constructed and fed into the shared BERT encoder:

* `(O₁, H₁, O₂)`

* `(O₁, H₂, O₂)`

* `(H₁, O₂^{H₁}, O₂)`

* `(H₂, O₂^{H₂}, O₂)`

4. **Task-Specific Processing:** The encoded representations from BERT are passed through separate linear layers for each downstream task.

5. **Task Outputs:**

* The **αNLI** task appears to perform inference, deciding between hypotheses `H₁` or `H₂`.

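* The **Similarity** task chooses between the conditional observations `O₂^{H₁}` and `O₂^{H₂}`, presumably by judging which one is closer to the observed `O₂`, which appears in both of its input sequences.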

6. **Multi-Task Optimization:** The model is trained jointly by minimizing a weighted sum of the losses from both tasks, as defined by the equation `Loss_MTL = L_{αNLI} + w * L_{Similarity}`, where `w` is a weighting hyperparameter.
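The generation step can be sketched as building one prompt per hypothesis in the `O₁, [M], O₂, O₁, H_j` layout and passing it to a text generator. Three details are assumptions: the space-joined layout, the literal `[M]` string, and the `generate` callable. In practice `generate` would wrap a GPT-2 model, but this sketch uses a stub so it runs without one.

```python
from typing import Callable, Dict, List


def counterfactual_prompt(o1: str, o2: str, h: str, marker: str = "[M]") -> str:
    """Lay out O1, [M], O2, O1, H_j as in the diagram (space-joined by assumption)."""
    return " ".join([o1, marker, o2, o1, h])


def generate_o2_per_hypothesis(o1: str, o2: str, hypotheses: List[str],
                               generate: Callable[[str], str]) -> Dict[str, str]:
    """Ask "What if H_j happens?" once per hypothesis; the continuation plays O2^{H_j}."""
    return {h: generate(counterfactual_prompt(o1, o2, h)) for h in hypotheses}


def stub_generate(prompt: str) -> str:
    # Stand-in for a GPT-2 call so the sketch runs without a model
    return "<continuation of: " + prompt + ">"


o2_given_h = generate_o2_per_hypothesis(
    "Ann went hiking.", "She came home soaked.",
    ["It started to rain.", "She bought a new map."], stub_generate)
print(counterfactual_prompt("Ann went hiking.", "She came home soaked.", "It started to rain."))
# Ann went hiking. [M] She came home soaked. Ann went hiking. It started to rain.
```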

### Key Observations

* **Identical Query Text:** The two queries generated from the GPT-2 block are both labeled `What if H₂ happens?`. This is likely a labeling error in the diagram, as the outputs (`H₁` and `H₂`) suggest the queries should be distinct (e.g., "What if H₁ happens?" and "What if H₂ happens?").

* **Asymmetric Task Inputs:** The αNLI task receives the raw hypotheses (`H₁ or H₂`), while the Similarity task receives the generated conditional observations (`O₂^{H₁} or O₂^{H₂}`). This indicates the tasks operate on different aspects of the generated data.

* **Central Role of BERT:** The BERT model is explicitly labeled as "Shared Layers," forming the core encoder for all input variations before task-specific heads are applied.

* **Explicit Loss Formulation:** The multi-task learning objective is clearly defined with a weighted linear combination of the individual task losses.

### Interpretation

This diagram represents a sophisticated **multi-task learning framework designed for counterfactual or hypothetical reasoning**. The architecture suggests a pipeline where:

1. **GPT-2 acts as a "scenario simulator."** Given the observations (`O₁, O₂`) and a hypothesis `H_j`, it produces the consequence `O₂^{H_j}` that would follow if `H_j` happened.

2. **BERT serves as a "universal reasoner."** Its shared layers are tasked with understanding the relationships between observations and hypotheses in multiple formats—both direct inference (for αNLI) and comparison of outcomes (for Similarity).

3. **The joint training (MTL) encourages the shared BERT encoder to learn robust, general-purpose representations** that are useful both for determining which hypothesis is more plausible (αNLI) and for judging which generated outcome better matches the observed `O₂` (Similarity). The weighting parameter `w` allows balancing the importance of these two complementary objectives.

The overall goal appears to be building a model that can not only reason about "what happened" but also simulate and evaluate "what could have happened," a key capability for advanced question answering, explanation generation, and causal reasoning. The potential labeling error in the GPT-2 queries is a minor inconsistency in an otherwise clear technical schematic.
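One way to picture the Similarity task is as picking the hypothesis whose generated outcome `O₂^{H_j}` best matches the observed `O₂`. The sketch below uses word-set Jaccard overlap purely as a stand-in. In the diagram, this judgment is made by a learned linear layer on top of BERT, and the example sentences here are invented.

```python
import re
from typing import Dict


def token_jaccard(a: str, b: str) -> float:
    """Jaccard overlap of lowercase word sets (a crude stand-in for a learned scorer)."""
    ta = set(re.findall(r"[a-z']+", a.lower()))
    tb = set(re.findall(r"[a-z']+", b.lower()))
    if not ta and not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def pick_by_outcome(o2: str, o2_given_h: Dict[str, str]) -> str:
    """Return the hypothesis whose generated O2^H is most similar to the observed O2."""
    return max(o2_given_h, key=lambda h: token_jaccard(o2_given_h[h], o2))


observed_o2 = "She came home soaked."
generated = {
    "It started to rain.": "She came home soaked and cold.",
    "She bought a new map.": "She found a new trail.",
}
print(pick_by_outcome(observed_o2, generated))  # It started to rain.
```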


EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm


## Diagram Type: Flowchart

### Overview

The image is a flowchart of a multi-task model that combines GPT-2 and BERT to perform two tasks, αNLI and Similarity. It shows the relationship between the model's components and how they interact.

### Components/Axes

- **αNLI**: The abductive Natural Language Inference task, which chooses the more plausible of two hypotheses.

- **H1 or H2**: The two candidate hypotheses; the αNLI output is one of them.

- **O1, O2**: The two observations that the hypotheses must explain.

- **Linear Layer**: A task-specific layer that maps the shared representation to a task output.

- **Shared Layers**: Layers that are shared between the two tasks.

- **BERT (MTL)**: BERT trained with multi-task learning; it provides the shared layers.

- **GPT-2 (LM1)**: GPT-2 used as a language model to generate the second observation under each hypothesis.

### Detailed Analysis or Content Details

The flowchart shows GPT-2 generating a second observation for each hypothesis, H1 and H2. BERT's shared layers then encode both the direct sequences (O1, H1 or H2, O2) and the GPT-2-conditioned sequences. Two linear layers produce the αNLI output (H1 or H2) and the Similarity output (the O2 generated for H1 or for H2).

### Key Observations

- Similarity is only one of two tasks; the other, αNLI, selects between hypotheses H1 and H2.

- The shared layers serve both tasks, while each linear layer serves a single task.

- GPT-2 generates inputs for BERT, and BERT produces the predictions for both tasks.

### Interpretation

The diagram suggests that the model learns abductive inference and similarity judgment jointly. GPT-2 imagines what would follow under each hypothesis, and the shared BERT layers learn representations useful for both tasks.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Multi-Task Learning Architecture Combining GPT-2 and BERT

### Overview

The diagram illustrates a hybrid machine learning architecture combining GPT-2 (LM₁) and BERT (MTL), with components for αNLI (abductive Natural Language Inference), similarity scoring, and multi-task learning (MTL). The architecture includes shared layers, linear layers, hypothesis-specific outputs, and a composite loss function.

### Components/Axes

1. **Core Components**:

- **Shared Layers**: The BERT (MTL) layers shared by the αNLI and Similarity tasks

- **Linear Layers**: Two separate linear transformation modules (one per task)

- **αNLI**: Abductive Natural Language Inference task

- **Similarity**: Similarity scoring mechanism

- **Loss_MT**: Composite loss function (L_αNLI + w * L_similarity)

2. **Input/Output Flows**:

- **Direct BERT inputs**: O₁, H₁, O₂ and O₁, H₂, O₂

- **Hypothesis Variants**: H₁ or H₂ (the αNLI output, and the condition for each GPT-2 branch)

- **Model Inputs**:

- GPT-2 (LM₁) input: O₁, [M], O₂, O₁, H_j

- BERT (MTL) inputs built from GPT-2 output: H₁, O₂^H₁, O₂; H₂, O₂^H₂, O₂

3. **Key Elements**:

- **Conditional Processing**: "What if H₁ happens?" and "What if H₂ happens?" decision nodes

- **Output Modifiers**: O₂^H₁/O₂^H₂ (the second observation generated under each hypothesis)

- **Loss Function**: Weighted combination of αNLI loss and similarity loss

### Detailed Analysis

1. **Shared Layers**:

- Process all four BERT input sequences (O₁, H₁, O₂; O₁, H₂, O₂; H₁, O₂^H₁, O₂; H₂, O₂^H₂, O₂)

- Serve as the common encoder for both tasks

2. **Linear Layers**:

- Two distinct linear transformation modules

- One for the αNLI task

- One for the Similarity task

3. **αNLI Component**:

- Outputs a choice of H₁ or H₂

- Feeds into Loss_MT calculation

4. **Similarity Scoring**:

- Outputs a choice of O₂^H₁ or O₂^H₂

- Contributes to Loss_MT via weighted term (w * L_similarity)

5. **Hypothesis-Specific Paths**:

- **H₁ Path**:

- GPT-2 answers "What if H₁ happens?" and generates O₂^H₁

- BERT reads H₁, O₂^H₁, O₂

- **H₂ Path**:

- GPT-2 answers "What if H₂ happens?" and generates O₂^H₂

- BERT reads H₂, O₂^H₂, O₂

6. **Model Roles**:

- **GPT-2 (LM₁)**: Reads O₁, [M], O₂, O₁, H_j and generates the hypothesis-specific observation O₂^H_j

- **BERT (MTL)**: Reads the hypothesis-specific sequences alongside the direct sequences and feeds both task heads

### Key Observations

1. **Architectural Integration**:

- Shared layers enable parameter efficiency

- Linear layers provide task-specific adaptations

2. **Multi-Task Capabilities**:

- αNLI component trains abductive reasoning (choosing the more plausible explanation)

- Similarity scoring presumably compares each generated outcome with the observed O₂

3. **Conditional Processing**:

- Model behavior adapts based on hypothesis (H₁/H₂)

- GPT-2 produces a separate O₂ for each hypothesis path

4. **Loss Function Design**:

- Balances abductive inference (L_αNLI) with similarity judgment (L_similarity)

- Weight parameter (w) controls similarity loss contribution
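The effect of `w` follows directly from the loss definition. The symbols θ_shared (the BERT (MTL) parameters) and θ_αNLI, θ_sim (the two linear layers) are introduced here for illustration. Because each task loss depends only on the shared layers and its own head, the gradients split as follows:

```latex
\begin{aligned}
\mathcal{L}_{MT} &= \mathcal{L}_{\alpha NLI} + w\,\mathcal{L}_{similarity} \\
\nabla_{\theta_{shared}} \mathcal{L}_{MT} &= \nabla_{\theta_{shared}} \mathcal{L}_{\alpha NLI} + w\,\nabla_{\theta_{shared}} \mathcal{L}_{similarity} \\
\nabla_{\theta_{\alpha NLI}} \mathcal{L}_{MT} &= \nabla_{\theta_{\alpha NLI}} \mathcal{L}_{\alpha NLI} \\
\nabla_{\theta_{sim}} \mathcal{L}_{MT} &= w\,\nabla_{\theta_{sim}} \mathcal{L}_{similarity}
\end{aligned}
```

So `w` scales how strongly the Similarity task pulls on the shared layers. Each linear layer receives gradient only from its own task's loss.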

### Interpretation

This architecture demonstrates a sophisticated approach to multi-task learning in NLP:

1. **Efficiency Through Sharing**: One BERT encoder serves both tasks, so the tasks share the encoder's parameters

2. **Abductive Reasoning**: The αNLI component focuses on choosing the most plausible explanation for a pair of observations

3. **Hypothesis-Aware Processing**: The model can adapt its output based on different linguistic hypotheses

4. **Composite Training Objective**: The loss function balances multiple objectives, suggesting a focus on both abductive inference and comparison of generated outcomes

The diagram reveals a hybrid approach that combines the strengths of transformer-based models (GPT-2's generative capabilities and BERT's bidirectional understanding) with custom components for specific NLP tasks. The conditional processing paths indicate the model's ability to handle different linguistic scenarios, while the shared architecture components suggest efficient parameter utilization.
