# Technical Document Extraction: Model Performance Benchmark Analysis

## Chart Structure Overview

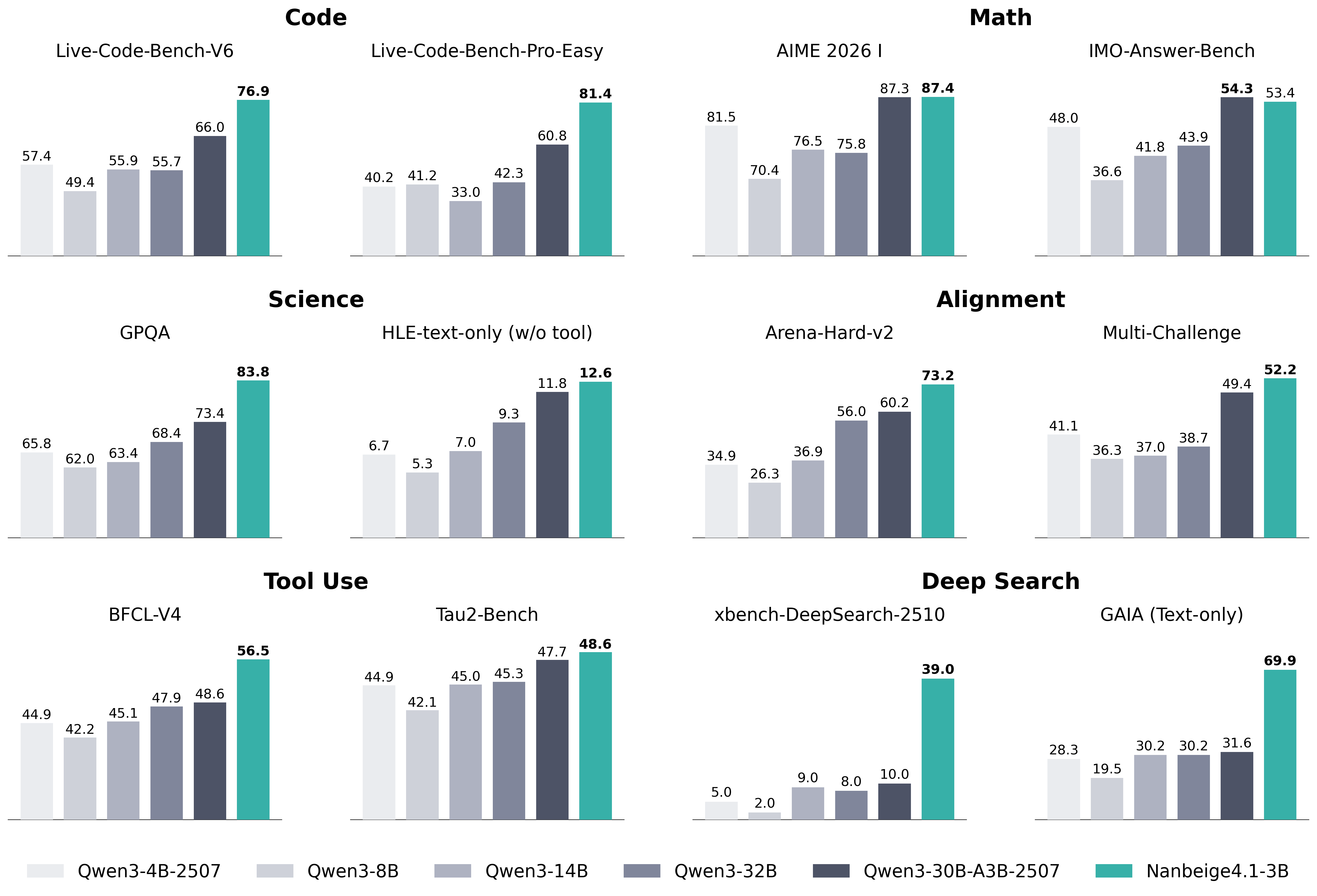

- **Chart Type**: Grouped Bar Chart

- **X-Axis**: Benchmarks (12 total, grouped into 6 categories)

- **Y-Axis**: Performance Scores (0-100 scale)

- **Legend**: Model Identifiers with Color Codes (6 models)

- **Legend Position**: Bottom of chart

## Legend Details

| Color Code     | Model Identifier   |
|----------------|--------------------|
| Lightest Gray  | Qwen3-4B-2507      |
| Medium Gray    | Qwen3-8B           |
| Dark Gray      | Qwen3-14B          |
| Very Dark Gray | Qwen3-32B          |
| Black          | Qwen3-30B-A3B-2507 |
| Teal           | Nanbeige4.1-3B     |

## Benchmark Categories (X-Axis)

1. Code

   - Live-Code-Bench-V6

   - Live-Code-Bench-Pro-Easy

2. Math

   - AIME 2026

   - IMO-Answer-Bench

3. Science

   - GPQA

   - HLE-text-only (w/o tool)

4. Alignment

   - Arena-Hard-v2

   - Multi-Challenge

5. Tool Use

   - BFCL-V4

   - Tau-Bench

6. Deep Search

   - xbench-DeepSearch-2510

   - GAIA (Text-only)

## Data Extraction Protocol

1. **Spatial Grounding**: All bars ordered left-to-right per legend sequence

2. **Color Verification**: Cross-referenced legend colors with bar colors

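3. **Trend Analysis**:

   - Scores generally rise from left to right within each group, and the rightmost bar (Nanbeige4.1-3B) is usually the tallest

   - Exceptions occur wherever a model scores lower than the one to its left; most notably, Qwen3-4B-2507 outscores Qwen3-8B on 11 of 12 benchmarks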
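The left-to-right trend check can be sketched in a few lines of Python. This is only an illustration: the helper name `find_decreases` is hypothetical, and the two example rows are copied from the GPQA and Live-Code-Bench-Pro-Easy rows of the Full Data Table.

```python
# Models in legend order, which is also the left-to-right bar order.
MODELS = [
    "Qwen3-4B-2507", "Qwen3-8B", "Qwen3-14B",
    "Qwen3-32B", "Qwen3-30B-A3B-2507", "Nanbeige4.1-3B",
]


def find_decreases(scores, models=MODELS):
    """Return (left_model, right_model) pairs where the score drops.

    Hypothetical helper: an empty list means the bars never decrease
    from left to right for that benchmark.
    """
    return [
        (models[i], models[i + 1])
        for i in range(len(scores) - 1)
        if scores[i + 1] < scores[i]
    ]


# Rows copied from the Full Data Table.
gpqa = [65.8, 62.0, 63.4, 68.4, 73.4, 83.8]
lcb_pro_easy = [40.2, 41.2, 33.0, 42.3, 60.8, 81.4]

print(find_decreases(gpqa))          # drop from Qwen3-4B-2507 to Qwen3-8B
print(find_decreases(lcb_pro_easy))  # drop from Qwen3-8B to Qwen3-14B
```

Returning the offending model pairs, rather than a single boolean, makes each exception easy to list alongside the chart.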

## Full Data Table

| Benchmark                | Qwen3-4B-2507 | Qwen3-8B | Qwen3-14B | Qwen3-32B | Qwen3-30B-A3B-2507 | Nanbeige4.1-3B |
|--------------------------|---------------|----------|-----------|-----------|--------------------|----------------|
| Live-Code-Bench-V6       | 57.4          | 49.4     | 55.9      | 55.7      | 66.0               | 76.9           |
| Live-Code-Bench-Pro-Easy | 40.2          | 41.2     | 33.0      | 42.3      | 60.8               | 81.4           |
| AIME 2026                | 81.5          | 70.4     | 76.5      | 75.8      | 87.3               | 87.4           |
| IMO-Answer-Bench         | 48.0          | 36.6     | 41.8      | 43.9      | 54.3               | 53.4           |
| GPQA                     | 65.8          | 62.0     | 63.4      | 68.4      | 73.4               | 83.8           |
| HLE-text-only (w/o tool) | 6.7           | 5.3      | 7.0       | 9.3       | 11.8               | 12.6           |
| Arena-Hard-v2            | 34.9          | 26.3     | 36.9      | 56.0      | 60.2               | 73.2           |
| Multi-Challenge          | 41.1          | 36.3     | 37.0      | 38.7      | 49.4               | 52.2           |
| BFCL-V4                  | 44.9          | 42.2     | 45.1      | 47.9      | 48.6               | 56.5           |
| Tau-Bench                | 44.9          | 42.1     | 45.0      | 45.3      | 47.7               | 48.6           |
| xbench-DeepSearch-2510   | 5.0           | 2.0      | 9.0       | 8.0       | 10.0               | 39.0           |
| GAIA (Text-only)         | 28.3          | 19.5     | 30.2      | 30.2      | 31.6               | 69.9           |
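For downstream checks, the table can be held in a plain Python dictionary. The sketch below is one possible representation, not part of the original extraction: the names `MODELS`, `SCORES`, and `leader` are illustrative, and the values are copied from the table above.

```python
from collections import Counter

# Column order of the table (legend order).
MODELS = [
    "Qwen3-4B-2507", "Qwen3-8B", "Qwen3-14B",
    "Qwen3-32B", "Qwen3-30B-A3B-2507", "Nanbeige4.1-3B",
]

# One row per benchmark, values in the same order as MODELS.
SCORES = {
    "Live-Code-Bench-V6":       [57.4, 49.4, 55.9, 55.7, 66.0, 76.9],
    "Live-Code-Bench-Pro-Easy": [40.2, 41.2, 33.0, 42.3, 60.8, 81.4],
    "AIME 2026":                [81.5, 70.4, 76.5, 75.8, 87.3, 87.4],
    "IMO-Answer-Bench":         [48.0, 36.6, 41.8, 43.9, 54.3, 53.4],
    "GPQA":                     [65.8, 62.0, 63.4, 68.4, 73.4, 83.8],
    "HLE-text-only (w/o tool)": [6.7, 5.3, 7.0, 9.3, 11.8, 12.6],
    "Arena-Hard-v2":            [34.9, 26.3, 36.9, 56.0, 60.2, 73.2],
    "Multi-Challenge":          [41.1, 36.3, 37.0, 38.7, 49.4, 52.2],
    "BFCL-V4":                  [44.9, 42.2, 45.1, 47.9, 48.6, 56.5],
    "Tau-Bench":                [44.9, 42.1, 45.0, 45.3, 47.7, 48.6],
    "xbench-DeepSearch-2510":   [5.0, 2.0, 9.0, 8.0, 10.0, 39.0],
    "GAIA (Text-only)":         [28.3, 19.5, 30.2, 30.2, 31.6, 69.9],
}


def leader(benchmark):
    """Return the model with the highest score on the given benchmark."""
    row = SCORES[benchmark]
    return MODELS[row.index(max(row))]


# Map each benchmark to its top model, then count wins per model.
leaders = {name: leader(name) for name in SCORES}
wins = Counter(leaders.values())

for name, model in leaders.items():
    print(f"{name}: {model}")
print(dict(wins))
```

Keeping each row as a list in legend order mirrors the chart layout, so an index into a row corresponds directly to a bar position.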

## Key Observations

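1. **Performance Trends**:

   - Nanbeige4.1-3B posts the highest score on 11 of 12 benchmarks; the one exception is IMO-Answer-Bench

   - Qwen3-30B-A3B-2507 is the strongest Qwen3 model on every benchmark

   - Nanbeige4.1-3B performs best in Deep Search: it scores 39.0 on xbench-DeepSearch-2510, where Qwen3-4B-2507 scores 5.0 and no Qwen3 model exceeds 10.0

2. **Notable Outliers**:

   - HLE-text-only (w/o tool): all models score low (5.3 to 12.6), so the gap is small in absolute terms (7.3 points) but large in relative terms (about 2.4x)

   - GAIA (Text-only): Nanbeige4.1-3B (69.9) scores about 2.5x as high as Qwen3-4B-2507 (28.3)

3. **Model Specialization**:

   - Qwen3-32B outscores the three smaller Qwen3 models (4B, 8B, 14B) in Science (GPQA, 68.4) and Alignment (Arena-Hard-v2, 56.0), but trails both Qwen3-30B-A3B-2507 and Nanbeige4.1-3B on those benchmarks

   - Qwen3-30B-A3B-2507 is most competitive in Math, where it leads IMO-Answer-Bench (54.3 vs. 53.4) and nearly ties on AIME 2026 (87.3 vs. 87.4); in Code it trails Nanbeige4.1-3B by 10.9 and 20.6 points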
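The relative and absolute gaps behind the outlier observations can be recomputed directly from the Full Data Table. The snippet below is a minimal sketch with illustrative variable names; only the GAIA (Text-only) and HLE-text-only values it needs are copied in.

```python
# GAIA (Text-only) scores for the two models being compared.
gaia = {"Qwen3-4B-2507": 28.3, "Nanbeige4.1-3B": 69.9}

# HLE-text-only (w/o tool) scores for all six models, in legend order.
hle = [6.7, 5.3, 7.0, 9.3, 11.8, 12.6]

# Relative gap on GAIA: how many times higher Nanbeige4.1-3B scores.
gaia_ratio = gaia["Nanbeige4.1-3B"] / gaia["Qwen3-4B-2507"]

# Absolute and relative gap between the best and worst HLE scores.
hle_spread = max(hle) - min(hle)
hle_ratio = max(hle) / min(hle)

print(f"GAIA ratio: {gaia_ratio:.2f}x")        # 2.47x
print(f"HLE spread: {hle_spread:.1f} points")  # 7.3 points
print(f"HLE ratio:  {hle_ratio:.2f}x")         # 2.38x
```

Reporting both the spread and the ratio matters for low-scoring benchmarks such as HLE, where a few points of difference can still be a large multiple.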

## Language Notes

- All chart text is in English; no non-English content was detected