TECHNICAL ASSET FINGERPRINT

6ec31ba5aefe520f47a6706f

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

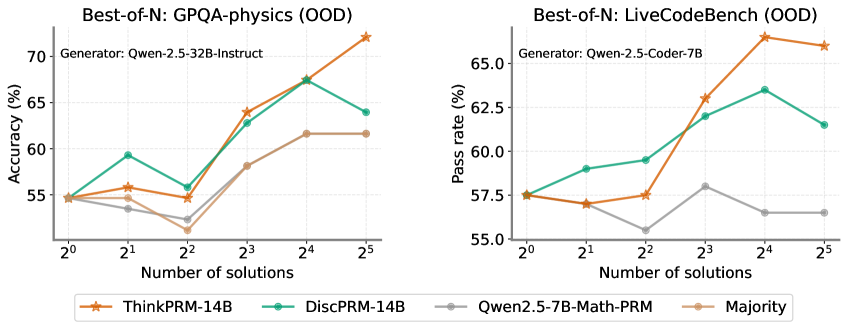

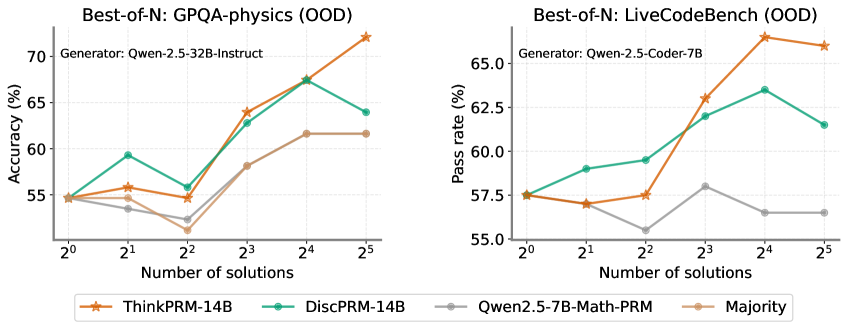

## Line Charts: Best-of-N Performance on GPQA-physics and LiveCodeBench (OOD)

### Overview

The image presents two line charts comparing the performance of different models on the GPQA-physics and LiveCodeBench datasets, both evaluated in an Out-of-Distribution (OOD) setting. The charts show how accuracy (for GPQA-physics) and pass rate (for LiveCodeBench) change with an increasing number of solutions considered ("Best-of-N"). The models compared are ThinkPRM-14B, DiscPRM-14B, Qwen2.5-7B-Math-PRM, and a "Majority" baseline.
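As a minimal sketch of what "Best-of-N" means here, assuming a scoring function that assigns each candidate solution a number: the selector keeps the highest-scoring of the first N sampled solutions. The names `best_of_n` and `toy_score` are hypothetical, and the length-based score is only a stand-in for a real verifier such as a PRM.

```python
from typing import Callable, Sequence


def best_of_n(solutions: Sequence[str], score: Callable[[str], float], n: int) -> str:
    """Return the highest-scoring solution among the first n candidates."""
    if n < 1 or n > len(solutions):
        raise ValueError("n must be between 1 and the number of solutions")
    return max(solutions[:n], key=score)


def toy_score(solution: str) -> float:
    # Stand-in scorer (assumption): longer strings score higher.
    return float(len(solution))


pool = ["a", "abc", "ab", "abcd"]
# Selection can only change when a better-scored candidate enters the pool.
picks = [best_of_n(pool, toy_score, n) for n in (1, 2, 4)]
print(picks)
```

With a perfect scorer the selected solution can only get better as N grows; the dips in the charts are what an imperfect scorer would produce.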

### Components/Axes

**Left Chart (GPQA-physics):**

* **Title:** Best-of-N: GPQA-physics (OOD)

* **Generator:** Qwen-2.5-32B-Instruct

* **Y-axis:** Accuracy (%) - Scale ranges from 55 to 70.

* **X-axis:** Number of solutions - Logarithmic scale with values 2<sup>0</sup>, 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>, 2<sup>5</sup>.

* **Legend:** Located at the bottom of the chart.

  * ThinkPRM-14B (Brown-Orange with star marker)

  * DiscPRM-14B (Teal with circle marker)

  * Qwen2.5-7B-Math-PRM (Gray with circle marker)

  * Majority (Tan with no marker)

**Right Chart (LiveCodeBench):**

* **Title:** Best-of-N: LiveCodeBench (OOD)

* **Generator:** Qwen-2.5-Coder-7B

* **Y-axis:** Pass rate (%) - Scale ranges from 55.0 to 65.0.

* **X-axis:** Number of solutions - Logarithmic scale with values 2<sup>0</sup>, 2<sup>1</sup>, 2<sup>2</sup>, 2<sup>3</sup>, 2<sup>4</sup>, 2<sup>5</sup>.

* **Legend:** Located at the bottom of the left chart, shared between both charts.

  * ThinkPRM-14B (Brown-Orange with star marker)

  * DiscPRM-14B (Teal with circle marker)

  * Qwen2.5-7B-Math-PRM (Gray with circle marker)

  * Majority (Tan with no marker)

### Detailed Analysis

**Left Chart (GPQA-physics):**

* **ThinkPRM-14B (Brown-Orange):** Starts at approximately 55% accuracy at 2<sup>0</sup> solutions, shows a generally upward trend, reaching approximately 72% at 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 55%), (2<sup>1</sup>, 56%), (2<sup>2</sup>, 55%), (2<sup>3</sup>, 64%), (2<sup>4</sup>, 67%), (2<sup>5</sup>, 72%)

* **DiscPRM-14B (Teal):** Starts at approximately 55% accuracy at 2<sup>0</sup> solutions, increases to approximately 59% at 2<sup>1</sup> solutions, dips to approximately 56% at 2<sup>2</sup> solutions, then increases to approximately 67% at 2<sup>4</sup> solutions, and decreases to approximately 64% at 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 55%), (2<sup>1</sup>, 59%), (2<sup>2</sup>, 56%), (2<sup>3</sup>, 64%), (2<sup>4</sup>, 67%), (2<sup>5</sup>, 64%)

* **Qwen2.5-7B-Math-PRM (Gray):** Starts at approximately 55% accuracy at 2<sup>0</sup> solutions, decreases to approximately 53% at 2<sup>1</sup> solutions, decreases to approximately 52% at 2<sup>2</sup> solutions, then increases to approximately 58% at 2<sup>3</sup> solutions, and plateaus at approximately 62% at 2<sup>4</sup> and 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 55%), (2<sup>1</sup>, 53%), (2<sup>2</sup>, 52%), (2<sup>3</sup>, 58%), (2<sup>4</sup>, 62%), (2<sup>5</sup>, 62%)

* **Majority (Tan):** Starts at approximately 55% accuracy at 2<sup>0</sup> solutions, increases to approximately 56% at 2<sup>1</sup> solutions, decreases to approximately 52% at 2<sup>2</sup> solutions, then increases to approximately 62% at 2<sup>3</sup> solutions, and plateaus at approximately 62% at 2<sup>4</sup> and 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 55%), (2<sup>1</sup>, 56%), (2<sup>2</sup>, 52%), (2<sup>3</sup>, 62%), (2<sup>4</sup>, 62%), (2<sup>5</sup>, 62%)

**Right Chart (LiveCodeBench):**

* **ThinkPRM-14B (Brown-Orange):** Starts at approximately 57.5% pass rate at 2<sup>0</sup> solutions, shows a generally upward trend, reaching approximately 67% at 2<sup>4</sup> solutions, and decreases to approximately 66.5% at 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 57.5%), (2<sup>1</sup>, 57%), (2<sup>2</sup>, 57%), (2<sup>3</sup>, 61%), (2<sup>4</sup>, 67%), (2<sup>5</sup>, 66.5%)

* **DiscPRM-14B (Teal):** Starts at approximately 58% pass rate at 2<sup>0</sup> solutions, increases to approximately 59% at 2<sup>1</sup> solutions, 60% at 2<sup>2</sup> solutions, and 63% at 2<sup>3</sup> solutions, peaks at approximately 63.5% at 2<sup>4</sup> solutions, and decreases to approximately 61% at 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 58%), (2<sup>1</sup>, 59%), (2<sup>2</sup>, 60%), (2<sup>3</sup>, 63%), (2<sup>4</sup>, 63.5%), (2<sup>5</sup>, 61%)

* **Qwen2.5-7B-Math-PRM (Gray):** Starts at approximately 57.5% pass rate at 2<sup>0</sup> solutions, decreases to approximately 57% at 2<sup>1</sup> solutions, decreases to approximately 56% at 2<sup>2</sup> solutions, then increases to approximately 59% at 2<sup>3</sup> solutions, and falls back to approximately 56% at 2<sup>4</sup> and 2<sup>5</sup> solutions.

  * (2<sup>0</sup>, 57.5%), (2<sup>1</sup>, 57%), (2<sup>2</sup>, 56%), (2<sup>3</sup>, 59%), (2<sup>4</sup>, 56%), (2<sup>5</sup>, 56%)

* **Majority (Tan):** The "Majority" baseline is not present in the LiveCodeBench chart.
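The approximate readings listed above can be summarized per series. The sketch below transcribes those values as they appear in this description (they are visual estimates, not exact data) and computes the change from N=1 to N=32 and the N at which each series peaks; `gain` and `peak_n` are hypothetical helper names.

```python
NS = [2 ** k for k in range(6)]  # 1, 2, 4, 8, 16, 32

# Approximate accuracy (%) readings for GPQA-physics, as listed above.
GPQA = {
    "ThinkPRM-14B": [55, 56, 55, 64, 67, 72],
    "DiscPRM-14B": [55, 59, 56, 64, 67, 64],
    "Qwen2.5-7B-Math-PRM": [55, 53, 52, 58, 62, 62],
    "Majority": [55, 56, 52, 62, 62, 62],
}

# Approximate pass rate (%) readings for LiveCodeBench (no Majority series).
LCB = {
    "ThinkPRM-14B": [57.5, 57, 57, 61, 67, 66.5],
    "DiscPRM-14B": [58, 59, 60, 63, 63.5, 61],
    "Qwen2.5-7B-Math-PRM": [57.5, 57, 56, 59, 56, 56],
}


def gain(series):
    """Change in percentage points between N=1 and N=32."""
    return series[-1] - series[0]


def peak_n(series):
    """Smallest N at which the series reaches its maximum."""
    return NS[series.index(max(series))]


for chart, data in (("GPQA-physics", GPQA), ("LiveCodeBench", LCB)):
    for name, series in data.items():
        print(f"{chart:14s} {name:20s} gain={gain(series):+5.1f} peak at N={peak_n(series)}")
```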

### Key Observations

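* **GPQA-physics:** ThinkPRM-14B reaches the highest accuracy at 2<sup>5</sup> solutions (approximately 72%, against approximately 64% for DiscPRM-14B). At smaller sample counts the two are close: by the values listed above, DiscPRM-14B is ahead at 2<sup>1</sup> and 2<sup>2</sup>, and the two are level at 2<sup>3</sup> and 2<sup>4</sup>. Qwen2.5-7B-Math-PRM and Majority both finish at approximately 62%, with no gain from 2<sup>4</sup> to 2<sup>5</sup> solutions.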

* **LiveCodeBench:** ThinkPRM-14B shows the highest pass rate, especially at higher numbers of solutions. DiscPRM-14B initially performs well but plateaus and decreases slightly at 2<sup>5</sup> solutions. Qwen2.5-7B-Math-PRM shows the lowest performance.

* **OOD Setting:** Both benchmarks are labeled Out-of-Distribution (OOD) in the chart titles. The charts show no in-distribution results, so any gap relative to in-distribution performance cannot be read from them.

### Interpretation

The charts show how "Best-of-N" selection affects results on two distinct tasks: physics question answering (GPQA-physics) and code generation (LiveCodeBench). In each chart the generator is fixed (Qwen-2.5-32B-Instruct and Qwen-2.5-Coder-7B respectively), so the curves compare methods for choosing among sampled solutions rather than the generators themselves. Considering more solutions improves results substantially for ThinkPRM-14B (roughly 55% to 72% on GPQA-physics and 57.5% to 66.5% on LiveCodeBench), while the other methods gain less; on LiveCodeBench, Qwen2.5-7B-Math-PRM ends slightly below its starting point. The OOD label in both titles indicates that the benchmarks are treated as out-of-distribution in this evaluation. The charts alone do not reveal why the methods differ; differences in training data or model design are possible explanations. The "Majority" baseline in GPQA-physics provides a reference point for the added value of the other methods. Its absence from the LiveCodeBench chart suggests it was not applicable or relevant for that task.
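One plausible reading of the missing baseline, stated here as an assumption rather than something the charts confirm, is that majority voting needs final answers that can be compared for equality. A minimal sketch, with `majority_vote` as a hypothetical helper:

```python
from collections import Counter


def majority_vote(final_answers):
    """Return the most frequent final answer; ties go to the answer seen first."""
    if not final_answers:
        raise ValueError("need at least one answer")
    return Counter(final_answers).most_common(1)[0][0]


# Multiple-choice style answers collapse into a few comparable labels.
print(majority_vote(["B", "A", "B", "C"]))

# Sampled programs are rarely textually identical, so every "answer" gets one vote
# and the vote degenerates to picking the first sample.
programs = ["def f(x): return x+1", "def f(y): return y + 1", "f = lambda x: x + 1"]
print(majority_vote(programs) == programs[0])
```

Under this assumption, a multiple-choice benchmark fits the voting scheme naturally, while free-form code would need an extra equivalence check before votes could be counted.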

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Best-of-N Performance Comparison

### Overview

The image presents two line charts comparing the performance of different language models on two out-of-distribution (OOD) datasets: GPQA-physics and LiveCodeBench. The charts plot performance metrics (Accuracy for GPQA-physics and Pass Rate for LiveCodeBench) against the number of solutions generated by the models.

### Components/Axes

Both charts share the following components:

* **X-axis:** "Number of solutions" with markers at 2⁰, 2¹, 2², 2³, 2⁴, and 2⁵.

* **Y-axis:** The left chart displays "Accuracy (%)", ranging from approximately 54% to 72%. The right chart displays "Pass rate (%)", ranging from approximately 55% to 67%.

* **Legend:** Located at the bottom of each chart, identifying the different models/strategies:

  * ThinkPRM-14B (Orange, dashed line)

  * DiscPRM-14B (Green, solid line)

  * Qwen2.5-7B-Math-PRM (Gray, dashed-dotted line)

  * Majority (Gray, solid line)

* **Title:** Each chart has a title indicating the dataset being evaluated:

  * Left Chart: "Best-of-N: GPQA-physics (OOD)"

  * Right Chart: "Best-of-N: LiveCodeBench (OOD)"

* **Generator:** Each chart also indicates the generator used:

  * Left Chart: "Generator: Qwen-2.5-32B-Instruct"

  * Right Chart: "Generator: Qwen-2.5-Coder-7B"

### Detailed Analysis or Content Details

**Chart 1: GPQA-physics (OOD)**

* **ThinkPRM-14B (Orange):** Starts at approximately 54.5% at 2⁰, increases steadily to around 68% at 2⁴, and peaks at approximately 71.5% at 2⁵.

* **DiscPRM-14B (Green):** Begins at approximately 56% at 2⁰, rises to around 64% at 2³, then declines to approximately 62% at 2⁵.

* **Qwen2.5-7B-Math-PRM (Gray, dashed-dotted):** Starts at approximately 55% at 2⁰, increases to around 61% at 2⁴, and remains relatively stable at approximately 61% at 2⁵.

* **Majority (Gray, solid):** Starts at approximately 54% at 2⁰, increases to around 58% at 2³, and remains relatively stable at approximately 58% at 2⁵.

**Chart 2: LiveCodeBench (OOD)**

* **ThinkPRM-14B (Orange):** Starts at approximately 57.5% at 2⁰, increases to around 66% at 2⁴, and declines slightly to approximately 65% at 2⁵.

* **DiscPRM-14B (Green):** Begins at approximately 59% at 2⁰, rises to around 64% at 2³, and declines to approximately 62% at 2⁵.

* **Qwen2.5-7B-Math-PRM (Gray, dashed-dotted):** Starts at approximately 57.5% at 2⁰, increases to around 61% at 2³, and declines to approximately 58% at 2⁵.

* **Majority (Gray, solid):** Starts at approximately 55% at 2⁰, increases to around 59% at 2³, and remains relatively stable at approximately 58% at 2⁵.

### Key Observations

* In both charts, ThinkPRM-14B generally outperforms the other models, especially at higher numbers of solutions (2⁴ and 2⁵).

* DiscPRM-14B shows an initial increase in performance but then plateaus or declines.

* Qwen2.5-7B-Math-PRM and Majority consistently perform at a lower level than ThinkPRM-14B and DiscPRM-14B.

* The performance gap between the models tends to widen as the number of solutions increases.

### Interpretation

The data suggests that generating more solutions can improve performance on both GPQA-physics and LiveCodeBench. ThinkPRM-14B appears to be the most effective selection strategy and benefits most from additional solutions. The decline for DiscPRM-14B after 2³ might indicate diminishing returns: with more candidates, there are more chances for an incorrect solution to receive the top score. The relatively stable performance of the Majority baseline suggests that simply selecting the most frequent answer doesn't yield the same benefits as selection with a stronger scoring model. The two charts use different generators (Qwen-2.5-32B-Instruct for GPQA-physics and Qwen-2.5-Coder-7B for LiveCodeBench), different benchmarks, and different metrics, so their absolute values are not directly comparable and the charts do not isolate the effect of the generator. The "Best-of-N" approach, where multiple solutions are generated and the best one is selected, is a promising technique for improving the performance of language models on challenging tasks. The OOD nature of the datasets highlights the importance of evaluating models on data that differs from their training distribution.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Best-of-N Performance Comparison

### Overview

The image displays two side-by-side line charts comparing the performance of different AI models/methods on two distinct out-of-distribution (OOD) benchmarks as the number of generated solutions increases. The left chart measures accuracy on GPQA-physics, and the right chart measures pass rate on LiveCodeBench. A shared legend at the bottom identifies four data series.

### Components/Axes

**Common Elements:**

* **X-Axis (Both Charts):** Labeled "Number of solutions". It uses a logarithmic scale with base 2, marked at points: 2⁰ (1), 2¹ (2), 2² (4), 2³ (8), 2⁴ (16), 2⁵ (32).

* **Legend (Bottom Center):** Contains four entries with corresponding line colors and markers:

  * **ThinkPRM-14B:** Orange line with star markers.

  * **DiscPRM-14B:** Teal/Green line with circle markers.

  * **Qwen2.5-7B-Math-PRM:** Gray line with circle markers.

  * **Majority:** Light brown/Tan line with circle markers.

**Left Chart: GPQA-physics (OOD)**

* **Title:** "Best-of-N: GPQA-physics (OOD)"

* **Subtitle:** "Generator: Qwen-2.5-32B-Instruct"

* **Y-Axis:** Labeled "Accuracy (%)". Scale ranges from 55 to 70, with major ticks at 55, 60, 65, 70.

**Right Chart: LiveCodeBench (OOD)**

* **Title:** "Best-of-N: LiveCodeBench (OOD)"

* **Subtitle:** "Generator: Qwen-2.5-Coder-7B"

* **Y-Axis:** Labeled "Pass rate (%)". Scale ranges from 55.0 to 65.0, with major ticks at 55.0, 57.5, 60.0, 62.5, 65.0.

### Detailed Analysis

**Left Chart: GPQA-physics (OOD) - Accuracy (%)**

* **ThinkPRM-14B (Orange, Stars):** Shows a strong, generally upward trend. Starts at ~55% (2⁰), rises to ~56% (2¹), dips slightly to ~55% (2²), then climbs sharply to ~64% (2³), ~68% (2⁴), and peaks at ~72% (2⁵).

* **DiscPRM-14B (Teal, Circles):** Shows volatility. Starts at ~55% (2⁰), jumps to ~59% (2¹), drops to ~56% (2²), rises to ~63% (2³), peaks at ~67% (2⁴), then falls to ~64% (2⁵).

* **Qwen2.5-7B-Math-PRM (Gray, Circles):** Shows a shallow, fluctuating trend. Starts at ~55% (2⁰), dips to ~54% (2¹), drops further to ~52% (2²), rises to ~58% (2³), then plateaus at ~62% for both 2⁴ and 2⁵.

* **Majority (Tan, Circles):** Follows a path nearly identical to Qwen2.5-7B-Math-PRM. Starts at ~55% (2⁰), dips to ~54% (2¹), drops to ~53% (2²), rises to ~58% (2³), then plateaus at ~62% for both 2⁴ and 2⁵.

**Right Chart: LiveCodeBench (OOD) - Pass rate (%)**

* **ThinkPRM-14B (Orange, Stars):** Shows a strong upward trend with a late plateau. Starts at ~57.5% (2⁰), dips slightly to ~57% (2¹), rises to ~57.5% (2²), jumps to ~63% (2³), peaks at ~66% (2⁴), then slightly decreases to ~65.5% (2⁵).

* **DiscPRM-14B (Teal, Circles):** Shows a steady rise then a fall. Starts at ~57.5% (2⁰), rises to ~59% (2¹), ~59.5% (2²), ~62% (2³), peaks at ~63.5% (2⁴), then falls to ~61.5% (2⁵).

* **Qwen2.5-7B-Math-PRM (Gray, Circles):** Shows a volatile, low trend. Starts at ~57.5% (2⁰), dips to ~57% (2¹), drops to a low of ~55.5% (2²), rises to ~58% (2³), then falls and plateaus at ~56.5% for both 2⁴ and 2⁵.

* **Majority (Tan, Circles):** Not plotted on this chart. The legend entry exists, but no corresponding tan line is visible in the right chart's plot area.

### Key Observations

1. **Dominant Performer:** ThinkPRM-14B (orange) is the top performer on both benchmarks, especially at higher solution counts (N=16, 32). Its performance scales most effectively with increased sampling.

2. **Performance Crossover:** On the GPQA-physics chart, DiscPRM-14B (teal) initially outperforms ThinkPRM at N=2 and N=4, but is overtaken at N=8 and beyond.

3. **Plateauing Effect:** Both Qwen2.5-7B-Math-PRM and the Majority method on the left chart show a clear performance plateau from N=16 to N=32, suggesting diminishing returns for these methods with more samples.

4. **Anomaly in Right Chart:** The "Majority" baseline, while present in the legend, has no visible data line on the LiveCodeBench chart. This could indicate missing data or that its performance was outside the plotted y-axis range.

5. **Volatility:** DiscPRM-14B shows more performance volatility (sharp rises and falls) compared to the steadier climb of ThinkPRM-14B.
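The crossover in observation 2 can be checked against the approximate GPQA-physics readings given in the detailed analysis. This is a small sketch over those estimates; `crossover_n` is a hypothetical helper that returns the smallest N from which one series stays strictly above another through the last point.

```python
NS = [1, 2, 4, 8, 16, 32]

# Approximate GPQA-physics accuracy (%) readings from the detailed analysis.
THINK_PRM = [55, 56, 55, 64, 68, 72]
DISC_PRM = [55, 59, 56, 63, 67, 64]


def crossover_n(a, b, ns):
    """Smallest N from which a stays strictly above b up to the last point, else None."""
    result = None
    # Walk backwards from the largest N and stop at the first point where a is not ahead.
    for n, x, y in zip(reversed(ns), reversed(a), reversed(b)):
        if x > y:
            result = n
        else:
            break
    return result


print(crossover_n(THINK_PRM, DISC_PRM, NS))
print(crossover_n(DISC_PRM, THINK_PRM, NS))
```

On these readings ThinkPRM-14B leads from N=8 onward, while DiscPRM-14B never holds a lead through N=32.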

### Interpretation

The data demonstrates the effectiveness of the "Best-of-N" sampling strategy, where generating multiple solutions and selecting the best one improves performance. However, the benefit is highly dependent on the underlying model or method used for scoring/selecting the "best" solution.

* **ThinkPRM-14B** appears to be a robust scoring model, as its associated accuracy/pass rate scales reliably with more candidate solutions. This suggests it is good at identifying higher-quality solutions from a larger pool.

* The **plateau** for simpler methods (like Majority voting or the Qwen-based PRM) indicates a ceiling to their improvement. They may lack the discriminative power to effectively leverage additional samples beyond a certain point.

* The **differences between the two charts** (e.g., ThinkPRM-14B is still improving at N=32 on GPQA-physics but dips slightly after N=16 on LiveCodeBench) indicate that scaling behavior is benchmark-dependent. A method that works well for physics QA may not transfer perfectly to code generation tasks.

* The **missing Majority line** on the right chart is a critical data gap. It prevents a full comparison on the LiveCodeBench task, leaving open the question of whether simple majority voting is effective for code generation pass rates.
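The plateau described above can be made concrete with a tolerance check on the approximate GPQA-physics readings. In this sketch, `plateau_start` and the 0.5-point tolerance are assumptions chosen for illustration; a result equal to the largest N means no plateau was detected.

```python
NS = [1, 2, 4, 8, 16, 32]

# Approximate GPQA-physics accuracy (%) readings from the detailed analysis.
QWEN_PRM = [55, 54, 52, 58, 62, 62]
MAJORITY = [55, 54, 53, 58, 62, 62]
THINK_PRM = [55, 56, 55, 64, 68, 72]


def plateau_start(values, ns, tol=0.5):
    """Smallest N from which every later value stays within tol of the final value."""
    final = values[-1]
    start = ns[-1]
    # Extend the plateau backwards until a value leaves the tolerance band.
    for n, v in zip(reversed(ns), reversed(values)):
        if abs(v - final) <= tol:
            start = n
        else:
            break
    return start


for name, series in (("Qwen2.5-7B-Math-PRM", QWEN_PRM),
                     ("Majority", MAJORITY),
                     ("ThinkPRM-14B", THINK_PRM)):
    print(name, plateau_start(series, NS))
```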

In summary, the charts argue for the use of advanced process reward models (like ThinkPRM) over simpler baselines when employing Best-of-N scaling, as they provide better and more consistent performance gains, particularly in out-of-distribution scenarios.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Best-of-N Performance Comparison (OOD Tasks)

### Overview

The image contains two line graphs comparing the performance of different AI models on out-of-distribution (OOD) tasks. The left graph evaluates physics problem-solving accuracy using the GPQA-physics benchmark, while the right graph measures code generation pass rates using the LiveCodeBench benchmark. Both graphs plot performance against the number of generated solutions (2⁰ to 2⁵).

### Components/Axes

**Left Graph (GPQA-physics):**

- **X-axis**: Number of solutions (2⁰ to 2⁵)

- **Y-axis**: Accuracy (%) [50-70% range]

- **Legend**:

  - Orange stars: ThinkPRM-14B

  - Teal circles: DiscPRM-14B

  - Gray line: Qwen2.5-7B-Math-PRM

  - Brown circles: Majority baseline

**Right Graph (LiveCodeBench):**

- **X-axis**: Number of solutions (2⁰ to 2⁵)

- **Y-axis**: Pass rate (%) [55-65% range]

- **Legend**:

  - Orange stars: ThinkPRM-14B

  - Teal circles: DiscPRM-14B

  - Gray line: Qwen2.5-7B-Math-PRM

  - Brown circles: Majority baseline

### Detailed Analysis

**Left Graph Trends:**

1. **ThinkPRM-14B** (orange):

   - Starts at ~55% (2⁰), peaks at ~70% (2⁴), then drops to ~68% (2⁵)

   - Shows strongest improvement with increasing solutions

2. **DiscPRM-14B** (teal):

   - Follows similar trajectory: 55% → 64% → 68% → 63% (2⁵)

   - Decline from ~68% to ~63% at 2⁵ suggests diminishing returns from additional samples

3. **Qwen2.5-7B-Math-PRM** (gray):

   - Starts at ~54%, peaks at ~61% (2⁴), drops to ~58% (2⁵)

   - Less consistent improvement than ThinkPRM-14B and DiscPRM-14B

4. **Majority** (brown):

   - Flat line at ~54-55% across all solution counts

**Right Graph Trends:**

1. **ThinkPRM-14B** (orange):

   - Starts at ~55%, peaks at ~67.5% (2⁴), drops to ~66% (2⁵)

   - Most significant improvement among methods

2. **DiscPRM-14B** (teal):

   - 55% → 59% → 62% → 63% → 61% (2⁵)

   - Steady improvement with minor regression at 2⁵

3. **Qwen2.5-7B-Math-PRM** (gray):

   - Starts at ~54%, peaks at ~57.5% (2³), drops to ~53% (2⁵)

   - Early peak suggests limited scalability

4. **Majority** (brown):

   - Flat line at ~54-55% across all solution counts

### Key Observations

1. **Performance Scaling**: The three PRM-scored methods improve with more solutions up to 2³ or 2⁴ and then decline at 2⁵; the Majority baseline stays flat

2. **Model Effectiveness**:

   - ThinkPRM-14B consistently outperforms others in both tasks

   - DiscPRM-14B shows strong second-place performance

   - Majority baseline remains stagnant

3. **Task-Specific Behavior**:

   - PRM models show more pronounced scaling in physics tasks

   - Code generation tasks exhibit more stable improvement curves

4. **Diminishing Returns**: ThinkPRM-14B, DiscPRM-14B, and Qwen2.5-7B-Math-PRM all score lower at 2⁵ than at their peaks, suggesting that additional samples stop helping beyond 2³ to 2⁴ for these methods

### Interpretation

The data indicates that ThinkPRM-14B and DiscPRM-14B achieve better results on these OOD tasks than Qwen2.5-7B-Math-PRM (itself a PRM, math-specialized according to its name) and simple majority voting. The scaling pattern up to 2⁴ solutions suggests that sampling more candidate solutions gives the scoring model more chances to find a correct one. At 2⁵ the three PRM-scored methods lose ground, which may reflect incorrect solutions being ranked highest more often as the pool grows. The gap between the PRM-scored methods and the flat Majority baseline highlights the importance of the selection method in handling OOD tasks. The drop at 2⁵ for the PRM-scored methods warrants further investigation into optimal sampling strategies for complex reasoning tasks.
