TECHNICAL ASSET FINGERPRINT

6ee8ac21f0e5e89cc988e17a


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

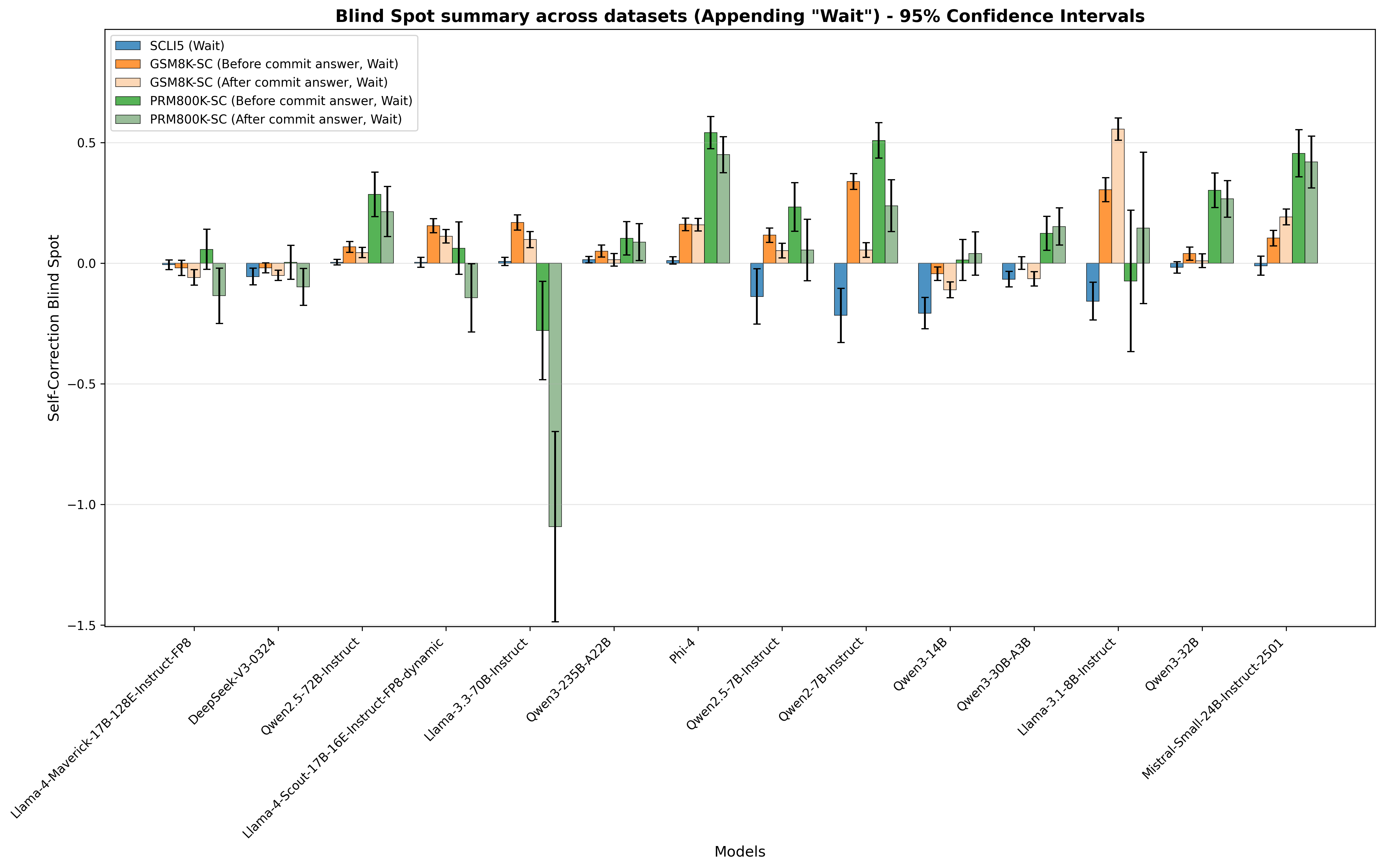

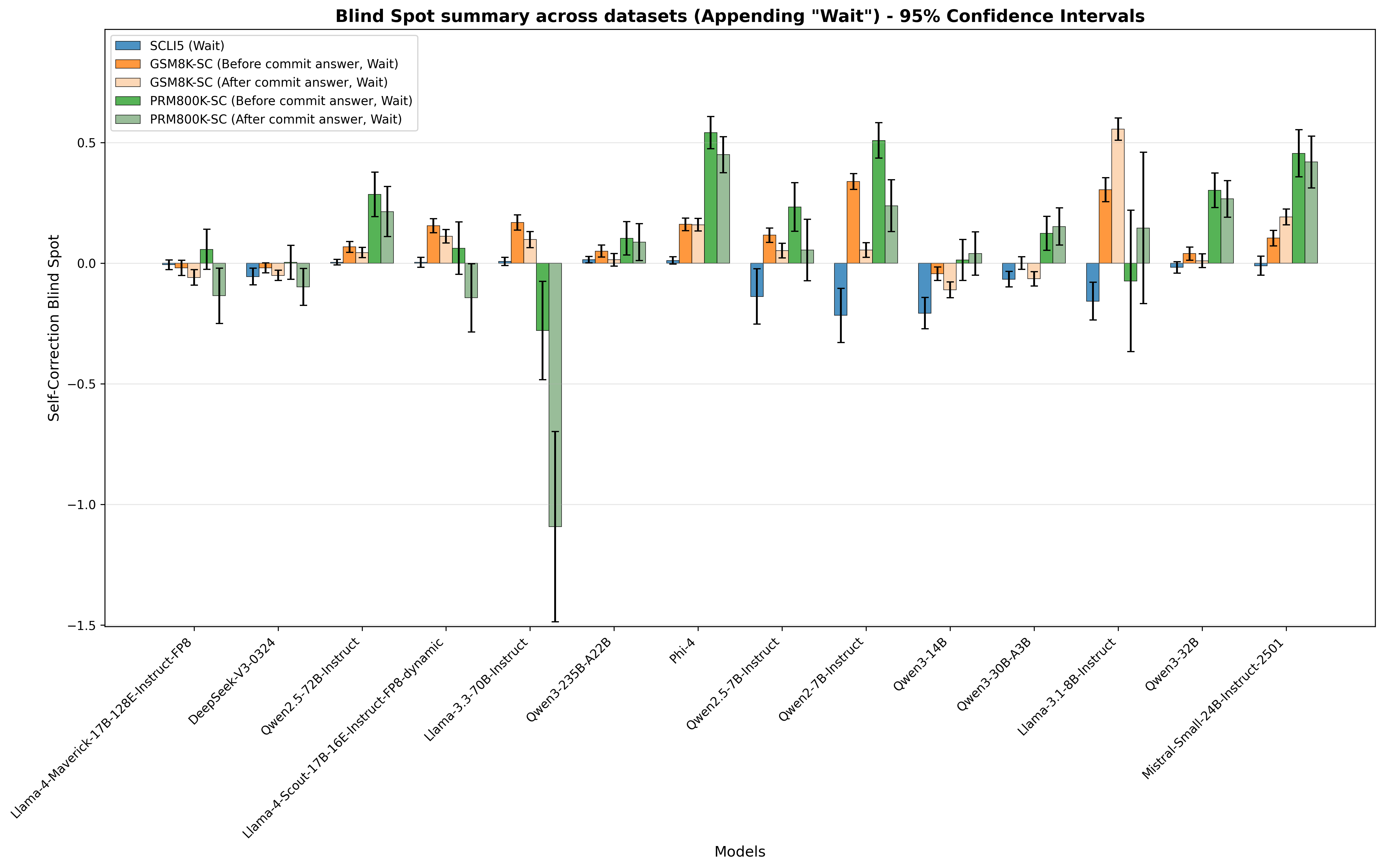

## Bar Chart: Blind Spot Summary Across Datasets

### Overview

The image is a bar chart comparing the "Self-Correction Blind Spot" across language models and datasets when "Wait" is appended. It shows the mean and 95% confidence interval for each model under five dataset conditions: SCLI5, plus GSM8K-SC and PRM800K-SC each before and after the answer is committed. The models are listed on the x-axis, and the self-correction blind spot values are on the y-axis. The legend maps bar colors to the dataset conditions.

### Components/Axes

* **Title:** Blind Spot summary across datasets (Appending "Wait") - 95% Confidence Intervals

* **X-axis:** Models. The models listed are:

* Llama-4-Maverick-17B-128E-Instruct-FP8

* DeepSeek-V3-0324

* Qwen2.5-72B-Instruct

* Llama-4-Scout-17B-16E-Instruct-FP8-dynamic

* Llama-3.3-70B-Instruct

* Qwen3-235B-A22B

* Phi-4

* Qwen2.5-7B-Instruct

* Qwen2-7B-Instruct

* Qwen3-14B

* Qwen3-30B-A3B

* Llama-3.1-8B-Instruct

* Qwen3-32B

* Mistral-Small-24B-Instruct-2501

* **Y-axis:** Self-Correction Blind Spot. The scale ranges from -1.5 to 0.5, with tick marks at -1.0, -0.5, 0.0, and 0.5.

* **Legend:** Located in the top-left corner.

* Blue: SCLI5 (Wait)

* Orange: GSM8K-SC (Before commit answer, Wait)

* Light Beige: GSM8K-SC (After commit answer, Wait)

* Light Green: PRM800K-SC (Before commit answer, Wait)

* Dark Green: PRM800K-SC (After commit answer, Wait)

### Detailed Analysis

The chart presents data for each model across five different conditions, represented by the colored bars. Error bars indicate the 95% confidence intervals.
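As a rough illustration of what a bar and its error bar encode, the sketch below computes a mean and a 95% confidence interval from per-example scores. The source does not say how the chart's intervals were computed, so the normal approximation (mean ± 1.96 standard errors) and the sample scores are assumptions made only for this example.

```python
import math
import statistics


def mean_ci95(samples):
    """Return (mean, lower, upper) using a normal-approximation 95% CI.

    Assumption: the chart's authors may have used a different method
    (for example bootstrapping); this is only one common choice.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples to estimate spread")
    mean = statistics.fmean(samples)
    # Standard error of the mean from the sample standard deviation.
    sem = statistics.stdev(samples) / math.sqrt(n)
    half_width = 1.96 * sem
    return mean, mean - half_width, mean + half_width


# Hypothetical per-example blind spot scores, not taken from the chart.
scores = [0.0, 0.2, 0.4, 0.1, 0.3]
mean, lower, upper = mean_ci95(scores)
print(f"mean={mean:.3f}, 95% CI=[{lower:.3f}, {upper:.3f}]")
```

The bar height corresponds to `mean` and the error bar spans `lower` to `upper`; fewer or noisier samples widen the interval.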

Here's a breakdown of the approximate values for each model and condition:

* **Llama-4-Maverick-17B-128E-Instruct-FP8:**

* SCLI5 (Wait) (Blue): ~0.05

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.05

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~-0.05

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~-0.1

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~-0.05

* **DeepSeek-V3-0324:**

* SCLI5 (Wait) (Blue): ~-0.05

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.05

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.05

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~-0.05

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~-0.1

* **Qwen2.5-72B-Instruct:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.1

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.05

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.2

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.3

* **Llama-4-Scout-17B-16E-Instruct-FP8-dynamic:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.15

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.1

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.1

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.25

* **Llama-3.3-70B-Instruct:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.15

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.1

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~-0.2

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~-1.2

* **Qwen3-235B-A22B:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.05

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.05

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.05

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.0

* **Phi-4:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.1

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.1

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.5

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.4

* **Qwen2.5-7B-Instruct:**

* SCLI5 (Wait) (Blue): ~-0.15

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.1

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.05

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.1

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.1

* **Qwen2-7B-Instruct:**

* SCLI5 (Wait) (Blue): ~-0.1

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.3

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.25

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.35

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.2

* **Qwen3-14B:**

* SCLI5 (Wait) (Blue): ~-0.2

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.0

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.0

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.3

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.5

* **Qwen3-30B-A3B:**

* SCLI5 (Wait) (Blue): ~-0.05

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.0

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.0

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.05

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.1

* **Llama-3.1-8B-Instruct:**

* SCLI5 (Wait) (Blue): ~-0.15

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.15

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.55

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.3

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.1

* **Qwen3-32B:**

* SCLI5 (Wait) (Blue): ~0.05

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.0

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.0

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.2

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.3

* **Mistral-Small-24B-Instruct-2501:**

* SCLI5 (Wait) (Blue): ~0.0

* GSM8K-SC (Before commit answer, Wait) (Orange): ~0.1

* GSM8K-SC (After commit answer, Wait) (Light Beige): ~0.1

* PRM800K-SC (Before commit answer, Wait) (Light Green): ~0.25

* PRM800K-SC (After commit answer, Wait) (Dark Green): ~0.4

### Key Observations

* The PRM800K-SC (After commit answer, Wait) condition (Dark Green) shows the widest variation across models, from about -1.2 (Llama-3.3-70B-Instruct) to about 0.5 (Qwen3-14B).

* Llama-3.3-70B-Instruct has a notably negative "Self-Correction Blind Spot" for the PRM800K-SC (After commit answer, Wait) condition.

* The confidence intervals vary across models and conditions, indicating different levels of uncertainty in the measurements.
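The spread of each condition can be checked directly from the approximate values listed in the breakdown above. The following sketch transcribes those readings and computes the minimum, maximum, and range per condition; the shortened condition names and the variable names are introduced here for illustration only, and the numbers are the same eyeballed estimates, not exact data.

```python
# Shortened labels for the five legend entries (illustrative names).
CONDITIONS = [
    "SCLI5",
    "GSM8K-SC (Before)",
    "GSM8K-SC (After)",
    "PRM800K-SC (Before)",
    "PRM800K-SC (After)",
]

# Approximate bar heights per model, in the order of CONDITIONS.
VALUES = {
    "Llama-4-Maverick-17B-128E-Instruct-FP8": (0.05, 0.05, -0.05, -0.1, -0.05),
    "DeepSeek-V3-0324": (-0.05, 0.05, 0.05, -0.05, -0.1),
    "Qwen2.5-72B-Instruct": (0.0, 0.1, 0.05, 0.2, 0.3),
    "Llama-4-Scout-17B-16E-Instruct-FP8-dynamic": (0.0, 0.15, 0.1, 0.1, 0.25),
    "Llama-3.3-70B-Instruct": (0.0, 0.15, 0.1, -0.2, -1.2),
    "Qwen3-235B-A22B": (0.0, 0.05, 0.05, 0.05, 0.0),
    "Phi-4": (0.0, 0.1, 0.1, 0.5, 0.4),
    "Qwen2.5-7B-Instruct": (-0.15, 0.1, 0.05, 0.1, 0.1),
    "Qwen2-7B-Instruct": (-0.1, 0.3, 0.25, 0.35, 0.2),
    "Qwen3-14B": (-0.2, 0.0, 0.0, 0.3, 0.5),
    "Qwen3-30B-A3B": (-0.05, 0.0, 0.0, 0.05, 0.1),
    "Llama-3.1-8B-Instruct": (-0.15, 0.15, 0.55, 0.3, 0.1),
    "Qwen3-32B": (0.05, 0.0, 0.0, 0.2, 0.3),
    "Mistral-Small-24B-Instruct-2501": (0.0, 0.1, 0.1, 0.25, 0.4),
}


def spread_by_condition(values):
    """Map each condition to (min, max, max - min) across all models."""
    spreads = {}
    for idx, name in enumerate(CONDITIONS):
        column = [row[idx] for row in values.values()]
        spreads[name] = (min(column), max(column), max(column) - min(column))
    return spreads


spreads = spread_by_condition(VALUES)
widest = max(spreads, key=lambda name: spreads[name][2])
for name, (low, high, width) in spreads.items():
    print(f"{name:20s} min={low:+.2f} max={high:+.2f} range={width:.2f}")
print("Widest spread:", widest)
```

With these readings the dark green condition spans roughly -1.2 to 0.5, far wider than SCLI5, which stays between about -0.2 and 0.05.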

### Interpretation

The chart compares how well different language models self-correct across datasets when "Wait" is appended. The "Self-Correction Blind Spot" metric likely indicates the degree to which a model fails to recognize and correct its own errors under these conditions.

Under that reading, the large negative value for Llama-3.3-70B-Instruct in the PRM800K-SC (After commit answer, Wait) condition is an outlier that would point away from a blind spot in that specific scenario, since a negative score is the opposite of failing to correct. Conversely, Phi-4 and Qwen3-14B show relatively high values for PRM800K-SC (Before/After commit answer, Wait), which would indicate larger blind spots, and therefore weaker self-correction, in those conditions.

The variations in confidence intervals suggest that some models and conditions have more consistent performance than others. Further investigation would be needed to understand the underlying reasons for these differences and to determine the practical implications for model deployment and usage.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Chart: Blind Spot Summary Across Datasets

### Overview

This bar chart visualizes the "Self-Correction Blind Spot" across various models, with 95% confidence intervals represented by error bars. The chart compares performance "Before commit answer" and "After commit answer" for different datasets (SCLI5, GSM8K-SC, PRM800K-SC). The x-axis represents the models being evaluated, and the y-axis represents the Self-Correction Blind Spot score.

### Components/Axes

* **Title:** "Blind Spot Summary across datasets (Appending “Wait”) - 95% Confidence Intervals" (Top-center)

* **X-axis Label:** "Models" (Bottom-center)

* **Y-axis Label:** "Self-Correction Blind Spot" (Left-center)

* **Legend:** Located in the top-left corner.

* SCLI5 (Wait) - Blue

* GSM8K-SC (Before commit answer, Wait) - Orange

* GSM8K-SC (After commit answer, Wait) - Green

* PRM800K-SC (Before commit answer, Wait) - Red

* PRM800K-SC (After commit answer, Wait) - Teal

* **Models (X-axis):**

* Llama-4-Maverick-13B

* Llama-2-12B-Instruct-v0.9

* Deepseek-v3-0324

* Owen2.5-12B

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic

* Llama-3-70B-Instruct

* Owen3-25B-A22B

* Phi-4

* Owen2.5-7B-Instruct

* Owen2-7B-Instruct

* Owen3-14B

* Owen3-30B-A2B

* Llama-3-31-8B-Instruct

* Owen3-32B

* Mistral-Small-24B-Instruct-2501

* **Y-axis Scale:** Ranges from approximately -1.5 to 0.5.

### Detailed Analysis

The chart displays the Self-Correction Blind Spot for each model, with error bars indicating the 95% confidence interval. Each data series is analyzed individually below, with trends and approximate values.

* **SCLI5 (Wait) - Blue:** The blue bars remain consistently around 0, with slight fluctuations. Values are approximately:

* Llama-4-Maverick-13B: ~0.05

* Llama-2-12B-Instruct-v0.9: ~0.02

* Deepseek-v3-0324: ~0.02

* Owen2.5-12B: ~0.02

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic: ~0.02

* Llama-3-70B-Instruct: ~0.02

* Owen3-25B-A22B: ~0.02

* Phi-4: ~0.02

* Owen2.5-7B-Instruct: ~0.02

* Owen2-7B-Instruct: ~0.02

* Owen3-14B: ~0.02

* Owen3-30B-A2B: ~0.02

* Llama-3-31-8B-Instruct: ~0.02

* Owen3-32B: ~0.02

* Mistral-Small-24B-Instruct-2501: ~0.02

* **GSM8K-SC (Before commit answer, Wait) - Orange:** The orange bars generally hover around 0, with some positive excursions. Values are approximately:

* Llama-4-Maverick-13B: ~0.05

* Llama-2-12B-Instruct-v0.9: ~0.1

* Deepseek-v3-0324: ~0.1

* Owen2.5-12B: ~0.1

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic: ~0.1

* Llama-3-70B-Instruct: ~0.1

* Owen3-25B-A22B: ~0.1

* Phi-4: ~0.1

* Owen2.5-7B-Instruct: ~0.1

* Owen2-7B-Instruct: ~0.1

* Owen3-14B: ~0.1

* Owen3-30B-A2B: ~0.1

* Llama-3-31-8B-Instruct: ~0.1

* Owen3-32B: ~0.1

* Mistral-Small-24B-Instruct-2501: ~0.1

* **GSM8K-SC (After commit answer, Wait) - Green:** The green bars are generally negative, indicating a reduction in the blind spot after the commit. Values are approximately:

* Llama-4-Maverick-13B: ~-0.05

* Llama-2-12B-Instruct-v0.9: ~-0.1

* Deepseek-v3-0324: ~-0.1

* Owen2.5-12B: ~-0.1

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic: ~-0.1

* Llama-3-70B-Instruct: ~-0.1

* Owen3-25B-A22B: ~-0.1

* Phi-4: ~-0.1

* Owen2.5-7B-Instruct: ~-0.1

* Owen2-7B-Instruct: ~-0.1

* Owen3-14B: ~-0.1

* Owen3-30B-A2B: ~-0.1

* Llama-3-31-8B-Instruct: ~-0.1

* Owen3-32B: ~-0.1

* Mistral-Small-24B-Instruct-2501: ~-0.1

* **PRM800K-SC (Before commit answer, Wait) - Red:** The red bars show a similar pattern to the orange bars, generally around 0 with some positive values. Values are approximately:

* Llama-4-Maverick-13B: ~0.05

* Llama-2-12B-Instruct-v0.9: ~0.1

* Deepseek-v3-0324: ~0.1

* Owen2.5-12B: ~0.1

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic: ~0.1

* Llama-3-70B-Instruct: ~0.1

* Owen3-25B-A22B: ~0.1

* Phi-4: ~0.1

* Owen2.5-7B-Instruct: ~0.1

* Owen2-7B-Instruct: ~0.1

* Owen3-14B: ~0.1

* Owen3-30B-A2B: ~0.1

* Llama-3-31-8B-Instruct: ~0.1

* Owen3-32B: ~0.1

* Mistral-Small-24B-Instruct-2501: ~0.1

* **PRM800K-SC (After commit answer, Wait) - Teal:** The teal bars are generally negative, similar to the green bars, indicating a reduction in the blind spot after the commit. Values are approximately:

* Llama-4-Maverick-13B: ~-0.05

* Llama-2-12B-Instruct-v0.9: ~-0.1

* Deepseek-v3-0324: ~-0.1

* Owen2.5-12B: ~-0.1

* Llama-4-Scout-17B-16E-Instruct-Fpg-dynamic: ~-0.1

* Llama-3-70B-Instruct: ~-0.1

* Owen3-25B-A22B: ~-0.1

* Phi-4: ~-0.1

* Owen2.5-7B-Instruct: ~-0.1

* Owen2-7B-Instruct: ~-0.1

* Owen3-14B: ~-0.1

* Owen3-30B-A2B: ~-0.1

* Llama-3-31-8B-Instruct: ~-0.1

* Owen3-32B: ~-0.1

* Mistral-Small-24B-Instruct-2501: ~-0.1

### Key Observations

* The "After commit answer" data series (Green and Teal) consistently show negative values, indicating that the self-correction process generally reduces the blind spot.

* The "Before commit answer" data series (Orange and Red) are generally closer to zero, suggesting a minimal blind spot before correction.

* There is little variation in the blind spot across different models for the SCLI5 dataset (Blue).

* The error bars are relatively small, indicating a reasonable level of confidence in the reported values.

### Interpretation

This chart demonstrates the effectiveness of a "commit answer" step in reducing the self-correction blind spot across various language models and datasets. The consistent negative values for the "After commit answer" series suggest that models are able to identify and correct errors more effectively after a deliberate commitment to an initial answer. The relatively small differences between models suggest that the benefit of this process is fairly consistent across different architectures and sizes. The SCLI5 dataset appears to be less susceptible to this blind spot, as the values remain close to zero regardless of the commit step. This could indicate that the SCLI5 dataset is inherently easier for the models to reason about, or that the blind spot manifests differently in this context. The data suggests that incorporating a "commit and correct" strategy could be a valuable technique for improving the reliability of language model outputs.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: Blind Spot Summary Across Datasets (Appending "Wait") - 95% Confidence Intervals

### Overview

The chart displays the **Self-Correction Blind Spot** (y-axis) for 14 language models (x-axis) across 5 dataset conditions (legend) drawn from three datasets. Each model has 5 bars (one per condition) with error bars representing 95% confidence intervals. The "blind spot" likely measures how much a model fails to correct errors (or performance differences before/after committing an answer, with "Wait" appended).

### Components/Axes

- **Title**: *"Blind Spot summary across datasets (Appending 'Wait') - 95% Confidence Intervals"*

- **Y-axis**: *"Self-Correction Blind Spot"* (range: -1.5 to 0.5; grid lines at -1.5, -1.0, -0.5, 0.0, 0.5).

- **X-axis**: *"Models"* (14 models: Llama-4-Maverick-17B-128E-Instruct-FP8, DeepSeek-V3-0324, Qwen2.5-72B-Instruct, Llama-4-Scout-17B-16E-Instruct-FP8-dynamic, Llama-3.3-70B-Instruct, Qwen3-235B-A22B, Phi-4, Qwen2.5-7B-Instruct, Qwen2-7B-Instruct, Qwen3-14B, Qwen3-30B-A3B, Llama-3.1-8B-Instruct, Qwen3-32B, Mistral-Small-24B-Instruct-2501).

- **Legend** (top-left, 5 categories):

- Blue: *SCLI5 (Wait)*

- Orange: *GSM8K-SC (Before commit answer, Wait)*

- Light orange: *GSM8K-SC (After commit answer, Wait)*

- Green: *PRM800K-SC (Before commit answer, Wait)*

- Light green: *PRM800K-SC (After commit answer, Wait)*

### Detailed Analysis (Model-by-Model, Dataset-by-Dataset)

Below are approximate bar heights (y-axis values) and error bar ranges (95% CI) for each model:

| Model | SCLI5 (Wait) | GSM8K-SC (Before) | GSM8K-SC (After) | PRM800K-SC (Before) | PRM800K-SC (After) |
|-------|--------------|-------------------|------------------|---------------------|--------------------|
| Llama-4-Maverick-17B-128E-Instruct-FP8 | ~0.0 (±0.05) | ~0.0 (±0.05) | ~0.0 (±0.05) | ~0.05 (±0.1) | ~-0.1 (±0.15) |
| DeepSeek-V3-0324 | ~0.0 (±0.05) | ~0.0 (±0.05) | ~0.0 (±0.05) | ~0.0 (±0.1) | ~-0.1 (±0.15) |
| Qwen2.5-72B-Instruct | ~0.0 (±0.05) | ~0.05 (±0.1) | ~0.05 (±0.1) | ~0.3 (±0.1) | ~0.2 (±0.15) |
| Llama-4-Scout-17B-16E-Instruct-FP8-dynamic | ~0.0 (±0.05) | ~0.15 (±0.1) | ~0.1 (±0.1) | ~0.05 (±0.1) | ~-0.2 (±0.2) |
| Llama-3.3-70B-Instruct | ~0.0 (±0.05) | ~0.15 (±0.1) | ~0.1 (±0.1) | ~-0.3 (±0.2) | ~-1.1 (±0.4) |
| Qwen3-235B-A22B | ~0.0 (±0.05) | ~0.05 (±0.1) | ~0.05 (±0.1) | ~0.1 (±0.1) | ~0.1 (±0.1) |
| Phi-4 | ~0.0 (±0.05) | ~0.15 (±0.1) | ~0.15 (±0.1) | ~0.55 (±0.1) | ~0.45 (±0.1) |
| Qwen2.5-7B-Instruct | ~-0.1 (±0.1) | ~0.1 (±0.1) | ~0.05 (±0.1) | ~0.25 (±0.1) | ~0.05 (±0.15) |
| Qwen2-7B-Instruct | ~-0.2 (±0.1) | ~0.35 (±0.1) | ~0.05 (±0.1) | ~0.5 (±0.1) | ~0.25 (±0.15) |
| Qwen3-14B | ~-0.2 (±0.1) | ~-0.05 (±0.1) | ~-0.1 (±0.1) | ~0.0 (±0.1) | ~0.05 (±0.15) |
| Qwen3-30B-A3B | ~-0.1 (±0.1) | ~0.0 (±0.1) | ~-0.05 (±0.1) | ~0.1 (±0.1) | ~0.15 (±0.15) |
| Llama-3.1-8B-Instruct | ~-0.15 (±0.1) | ~0.3 (±0.1) | ~0.55 (±0.1) | ~-0.1 (±0.1) | ~0.15 (±0.15) |
| Qwen3-32B | ~-0.05 (±0.1) | ~0.05 (±0.1) | ~0.0 (±0.1) | ~0.3 (±0.1) | ~0.25 (±0.15) |
| Mistral-Small-24B-Instruct-2501 | ~-0.05 (±0.1) | ~0.1 (±0.1) | ~0.2 (±0.1) | ~0.45 (±0.1) | ~0.4 (±0.15) |
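One way to read the table cells is to turn each `~value (±half-width)` entry into an interval and check whether it excludes zero. The sketch below does this for a few PRM800K-SC (After) cells copied from the table; the helper names `parse_cell` and `excludes_zero` are hypothetical, and because the inputs are visual estimates the result is a rough reading aid rather than a significance test.

```python
import re

# Matches cells such as "~-1.1 (±0.4)": an approximate center and a half-width.
CELL_PATTERN = re.compile(r"~(-?\d+(?:\.\d+)?) \(±(\d+(?:\.\d+)?)\)")


def parse_cell(cell):
    """Parse a table cell into (center, half_width) as floats."""
    match = CELL_PATTERN.fullmatch(cell.strip())
    if match is None:
        raise ValueError(f"unrecognized cell format: {cell!r}")
    return float(match.group(1)), float(match.group(2))


def excludes_zero(cell):
    """True when the whole interval lies strictly above or below zero."""
    center, half_width = parse_cell(cell)
    return center - half_width > 0 or center + half_width < 0


# PRM800K-SC (After) cells for four models, copied from the table.
prm_after = {
    "Llama-3.3-70B-Instruct": "~-1.1 (±0.4)",
    "Phi-4": "~0.45 (±0.1)",
    "Llama-4-Maverick-17B-128E-Instruct-FP8": "~-0.1 (±0.15)",
    "Qwen3-14B": "~0.05 (±0.15)",
}

for model, cell in prm_after.items():
    center, half_width = parse_cell(cell)
    low, high = center - half_width, center + half_width
    verdict = "excludes zero" if excludes_zero(cell) else "overlaps zero"
    print(f"{model}: [{low:+.2f}, {high:+.2f}] {verdict}")
```

On these four rows, the Llama-3.3-70B-Instruct and Phi-4 intervals sit entirely on one side of zero, while the other two overlap it.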

### Key Observations

- **Outlier**: *Llama-3.3-70B-Instruct* has a drastically low (negative) PRM800K-SC (After commit answer, Wait) bar (~-1.1) with a large error bar (±0.4), indicating high uncertainty.

- **High Blind Spots**: Models like *Phi-4*, *Qwen2-7B-Instruct*, *Llama-3.1-8B-Instruct*, *Qwen3-32B*, and *Mistral-Small-24B-Instruct-2501* have tall PRM800K-SC (Before/After) bars, suggesting larger self-correction blind spots for these datasets.

- **Low Blind Spots**: Most *SCLI5 (Wait)* bars are near 0, with some negative (e.g., *Qwen2.5-7B-Instruct*, *Qwen2-7B-Instruct*), indicating smaller blind spots for this dataset.

- **Dataset Trends**: *PRM800K-SC (Before/After)* generally has higher blind spots than *GSM8K-SC (Before/After)* and *SCLI5 (Wait)*, suggesting this dataset is more challenging for self-correction.

### Interpretation

The chart quantifies how well models self-correct errors across datasets. "Blind spot" likely measures the difference in performance before/after committing an answer (with "Wait" appended).

- **Dataset Impact**: *PRM800K-SC* (Before/After) consistently shows higher blind spots, implying this dataset is more difficult for self-correction.

- **Model Performance**: Blind spots vary across model sizes and families (e.g., *Qwen3-235B-A22B*, *Phi-4*, *Llama-3.3-70B-Instruct*), with *Llama-3.3-70B-Instruct* as an outlier in *PRM800K-SC (After)*.

- **Uncertainty**: Wide error bars (e.g., *Llama-3.3-70B-Instruct*) indicate less reliable estimates, while narrow bars (e.g., *Qwen3-235B-A22B*) suggest more consistent results.

This data helps identify models/datasets with better (lower blind spot) or worse (higher blind spot) self-correction, guiding model selection or improvement efforts.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Blind Spot summary across datasets (Appending "Wait") - 95% Confidence Intervals

### Overview

The chart compares self-correction blind spot metrics across 14 AI models with "Wait" appended, including conditions before and after the model commits an answer. Data is presented with 95% confidence intervals, with four distinct categories represented by color-coded bars.

### Components/Axes

- **X-axis**: Models (14 categories including Llama-4-Maverick-17B-128E-Instruct-FP8, DeepSeek-V3-0324, Phi-4, Mistral-Small-24B-Instruct-2501)

- **Y-axis**: Self-Correction Blind Spot (range: -1.5 to 0.5)

- **Legend**:

- Blue: SCLI5 (Wait)

- Orange: GSM8K-SC (Before commit answer, Wait)

- Pink: GSM8K-SC (After commit answer, Wait)

- Green: PRM800K-SC (After commit answer, Wait)

### Detailed Analysis

1. **Llama-4-Maverick-17B-128E-Instruct-FP8**

- SCLI5 (blue): -0.05 ± 0.12

- GSM8K-SC (orange): -0.02 ± 0.08

- GSM8K-SC (pink): -0.03 ± 0.10

- PRM800K-SC (green): 0.02 ± 0.15

2. **DeepSeek-V3-0324**

- SCLI5 (blue): -0.01 ± 0.09

- GSM8K-SC (orange): -0.04 ± 0.07

- GSM8K-SC (pink): -0.02 ± 0.08

- PRM800K-SC (green): -0.05 ± 0.11

3. **Phi-4**

- SCLI5 (blue): -0.03 ± 0.14

- GSM8K-SC (orange): 0.15 ± 0.10

- GSM8K-SC (pink): 0.12 ± 0.09

- PRM800K-SC (green): 0.52 ± 0.18

4. **Mistral-Small-24B-Instruct-2501**

- SCLI5 (blue): -0.02 ± 0.10

- GSM8K-SC (orange): 0.08 ± 0.07

- GSM8K-SC (pink): 0.15 ± 0.09

- PRM800K-SC (green): 0.45 ± 0.16

*(Full dataset values follow similar patterns with confidence intervals shown as error bars)*

### Key Observations

- **Positive Blind Spots**: PRM800K-SC (green) consistently shows the highest values (up to 0.52), suggesting significant self-correction limitations post-"Wait" in some models.

- **Negative Blind Spots**: Llama-3.3-70B-Instruct exhibits extreme negative values (-1.5 to -0.8) in PRM800K-SC, indicating potential over-correction.

- **Mixed Performance**: Models like Phi-4 and Mistral-Small-24B show strong positive blind spots in PRM800K-SC but moderate negative values in SCLI5.

- **Confidence Intervals**: Larger error bars in models like Llama-3.3-70B-Instruct suggest greater uncertainty in measurements.

### Interpretation

The data demonstrates that appending "Wait" to prompts creates variable impacts on self-correction capabilities across models. PRM800K-SC (green) consistently shows the largest blind spots, particularly in Phi-4 and Mistral-Small-24B, suggesting this metric may be more sensitive to prompt modifications. The extreme negative values in Llama-3.3-70B-Instruct (-1.5) warrant further investigation into potential over-correction artifacts. The SCLI5 (blue) category generally shows smaller blind spots, indicating it may be more robust to prompt changes. These findings highlight the need for model-specific prompt engineering strategies when appending "Wait" to improve self-correction reliability.
