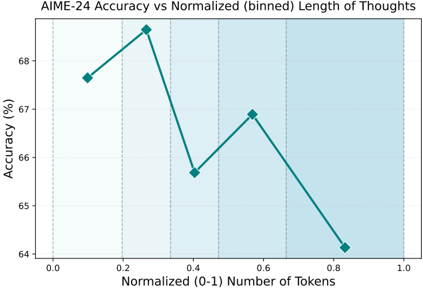

## Line Chart: AIME-24 Accuracy vs Normalized (binned) Length of Thoughts

### Overview

This line chart plots a model's accuracy (presumably on the AIME-24 benchmark) against the normalized, binned length of its "thoughts," measured in tokens. The relationship is non-monotonic: accuracy peaks at a relatively short normalized length (about 0.25) and is lowest in the longest bin (about 0.85).

### Components/Axes

* **Title:** "AIME-24 Accuracy vs Normalized (binned) Length of Thoughts" (Top center).

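* **Y-Axis:** Labeled "Accuracy (%)". Major tick marks are labeled at 64, 65, 66, 67, and 68. The estimated peak value (~68.6%) sits slightly above the top labeled tick, so the plotted range appears to extend a little beyond 68.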

* **X-Axis:** Labeled "Normalized (0-1) Number of Tokens". The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Data Series:** A single series represented by a teal-colored line with diamond-shaped markers. There is no separate legend, as there is only one data series.

* **Background:** The chart area has a light blue background with faint vertical grid lines aligned with the x-axis tick marks.

### Detailed Analysis

The data series consists of five distinct points connected by straight lines. The approximate coordinates (x, y) for each point, reading from left to right, are:

1. **Point 1:** (0.1, ~67.6%)

2. **Point 2:** (0.25, ~68.6%) - This is the peak accuracy.

3. **Point 3:** (0.4, ~65.7%)

4. **Point 4:** (0.55, ~66.9%)

5. **Point 5:** (0.85, ~64.2%) - This is the lowest accuracy.

**Trend Verification:** The line alternates in direction. It rises from Point 1 to Point 2 (about +1.0 percentage point), falls sharply to Point 3 (about -2.9), recovers to Point 4 (about +1.2), and falls again to Point 5 (about -2.7). The last decline is spread over a wider x interval (0.55 to 0.85), so it is less steep per unit of x than the drop from Point 2 to Point 3. The net change from the peak (Point 2) to the final point (Point 5) is a decline of about 4.4 percentage points.
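The segment-by-segment reading above can be checked with a short calculation. The following is a minimal sketch that assumes the approximate coordinates listed earlier; the values are visual estimates from the chart, not exact data, and the names `points` and `segment_changes` are illustrative.

```python
# Approximate (x, y) values read off the chart; these are estimates, not source data.
points = [(0.10, 67.6), (0.25, 68.6), (0.40, 65.7), (0.55, 66.9), (0.85, 64.2)]


def segment_changes(pts):
    """Return (x_start, x_end, delta_y, slope) for each consecutive pair of points."""
    out = []
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        delta = round(y1 - y0, 1)            # change in accuracy, percentage points
        slope = round(delta / (x1 - x0), 1)  # change per unit of normalized length
        out.append((x0, x1, delta, slope))
    return out


segments = segment_changes(points)
for x0, x1, delta, slope in segments:
    direction = "up" if delta > 0 else "down"
    print(f"{x0:.2f} -> {x1:.2f}: {direction} {abs(delta):.1f} points "
          f"(slope {slope:+.1f} per unit x)")
```

Comparing the slopes rather than the raw differences accounts for the uneven spacing of the bins on the x-axis.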

### Key Observations

1. **Peak Performance:** The highest accuracy (~68.6%) is achieved at a normalized token length of approximately 0.25.

2. **Non-Monotonic Relationship:** Accuracy does not increase or decrease steadily with thought length. There is a local minimum at x=0.4 and a local maximum at x=0.55.

3. **Significant Drop-off:** From the peak (x=0.25) to the final data point (x=0.85), accuracy falls by approximately 4.4 percentage points overall. The largest drop between adjacent points is about 2.9 percentage points, from x=0.25 to x=0.4.

4. **Range of Performance:** Across the observed range of normalized token lengths (0.1 to 0.85), accuracy varies by about 4.4 percentage points (from ~64.2% to ~68.6%).
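The observations above (peak, local extrema, and overall range) can be reproduced from the estimated points. This is a sketch under the same assumption that the coordinates are approximate readings from the chart; `local_extrema` is a hypothetical helper written for this illustration.

```python
# Approximate (x, y) values read off the chart; these are estimates, not source data.
points = [(0.10, 67.6), (0.25, 68.6), (0.40, 65.7), (0.55, 66.9), (0.85, 64.2)]


def local_extrema(pts):
    """Label interior points that lie strictly above or below both neighbors."""
    found = []
    for i in range(1, len(pts) - 1):
        prev_y, y, next_y = pts[i - 1][1], pts[i][1], pts[i + 1][1]
        if y > prev_y and y > next_y:
            found.append((pts[i][0], "max"))
        elif y < prev_y and y < next_y:
            found.append((pts[i][0], "min"))
    return found


peak = max(points, key=lambda p: p[1])     # highest estimated accuracy
lowest = min(points, key=lambda p: p[1])   # lowest estimated accuracy
spread = round(peak[1] - lowest[1], 1)     # range in percentage points
extrema = local_extrema(points)

print("peak:", peak)
print("lowest:", lowest)
print("spread:", spread)
print("interior extrema:", extrema)
```

The endpoints are excluded from the extrema check because each has only one neighbor; the point at x=0.25 is both the global peak and an interior local maximum.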

### Interpretation

The data suggest that, for the AIME-24 task, there is an optimal "length of thought" or reasoning process, represented here by token count. Performance is best when the model's reasoning is fairly short to moderate in length (around 25% of the normalized maximum). The shortest bin (x=0.1) scores slightly lower (~67.6%), which may indicate that very short thoughts are insufficient for complex problem-solving, although this is still the second-highest value on the chart and the gap is only about 1 percentage point. Conversely, the longest thoughts (x=0.85) are associated with the lowest accuracy (~64.2%), potentially indicating inefficiency, the introduction of noise, or a loss of focus in the model's reasoning process. The local recovery at x=0.55 is an interesting anomaly; it could represent a secondary, less optimal strategy or simply noise in the binned data. The primary takeaway is that more reasoning (in terms of raw token length) does not monotonically translate to better performance on this benchmark; there is a "sweet spot."