## Diagram: Claim Verification Pipeline Flowchart

### Overview

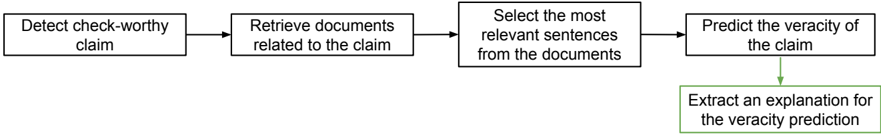

The image shows a linear flowchart of a five-step process for automated claim verification (fact-checking). Rectangular process boxes are connected by directional arrows that indicate the order of operations. The final step is visually distinguished by a green outline and a green incoming arrow.

### Components/Axes

The diagram consists of five rectangular boxes. The first four run left to right in a horizontal row, and the fifth sits directly below the fourth. All text is in English.

1. **Box 1 (Far Left):** Contains the text "Detect check-worthy claim".

2. **Box 2 (Center-Left):** Contains the text "Retrieve documents related to the claim".

3. **Box 3 (Center):** Contains the text "Select the most relevant sentences from the documents".

4. **Box 4 (Center-Right):** Contains the text "Predict the veracity of the claim".

5. **Box 5 (Bottom-Right, below Box 4):** Contains the text "Extract an explanation for the veracity prediction". This box has a green outline.

**Flow Arrows:**

* A standard black arrow points from Box 1 to Box 2.

* A standard black arrow points from Box 2 to Box 3.

* A standard black arrow points from Box 3 to Box 4.

* A green arrow points downward from Box 4 to Box 5.
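The boxes and arrows listed above can be written down as a small graph, which makes the "single chain" structure easy to check mechanically. This is a minimal sketch: the names `BOXES`, `ARROWS`, and `is_linear_chain` are illustrative and do not come from the diagram.

```python
# Box labels and arrow colors as transcribed from the diagram.
BOXES = {
    1: "Detect check-worthy claim",
    2: "Retrieve documents related to the claim",
    3: "Select the most relevant sentences from the documents",
    4: "Predict the veracity of the claim",
    5: "Extract an explanation for the veracity prediction",
}
# Each arrow is (source box, target box, color).
ARROWS = [(1, 2, "black"), (2, 3, "black"), (3, 4, "black"), (4, 5, "green")]


def is_linear_chain(boxes, arrows):
    """Return True if the arrows form one path that visits every box once."""
    successors = {}
    targets = set()
    for src, dst, _color in arrows:
        if src in successors or dst in targets:
            return False  # a box with two outgoing or two incoming arrows
        successors[src] = dst
        targets.add(dst)
    starts = [box for box in boxes if box not in targets]
    if len(starts) != 1:
        return False  # no unique entry point, e.g. because of a loop
    visited = []
    node = starts[0]
    while node is not None and node not in visited:
        visited.append(node)
        node = successors.get(node)
    return len(visited) == len(boxes)


chain_ok = is_linear_chain(BOXES, ARROWS)
```

Adding a hypothetical arrow from Box 5 back to Box 1, or a shortcut from Box 2 to Box 4, makes the check fail, which is the sense in which the diagram has no loops or branches.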

### Detailed Analysis

The five boxes correspond to the following pipeline stages:

1. **Input Stage:** The system begins by identifying a claim that is worthy of verification ("check-worthy").

2. **Information Retrieval:** It then searches for and gathers source documents pertinent to that specific claim.

3. **Evidence Selection:** From the retrieved documents, the system selects the most relevant sentences to serve as evidence.

4. **Analysis & Judgment:** Using the selected evidence, the system makes a prediction about the claim's truthfulness (its "veracity").

5. **Output Generation:** As a final, distinct step, the system extracts an explanation that justifies the veracity prediction made in the previous step.
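The five stages above can be sketched as a chain of function calls in which each stage consumes the output of the previous one. The diagram does not specify any implementation, so everything here is an assumption: the `Verdict` container, the `run_pipeline` signature, and the placeholder stage functions with made-up data exist only to show the data flow.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Verdict:
    claim: str
    evidence: List[str]
    label: str
    explanation: str


def run_pipeline(
    text: str,
    detect: Callable[[str], str],
    retrieve: Callable[[str], List[str]],
    select: Callable[[str, List[str]], List[str]],
    predict: Callable[[str, List[str]], str],
    explain: Callable[[str, List[str], str], str],
) -> Verdict:
    """Run the five stages strictly in order, with no loops or branches."""
    claim = detect(text)                           # Box 1
    documents = retrieve(claim)                    # Box 2
    evidence = select(claim, documents)            # Box 3
    label = predict(claim, evidence)               # Box 4
    explanation = explain(claim, evidence, label)  # Box 5
    return Verdict(claim, evidence, label, explanation)


# Placeholder stages with made-up data; a real system would plug in models here.
verdict = run_pipeline(
    "Speaker: the bridge opened in 1990.",
    detect=lambda text: text.split(": ", 1)[-1],
    retrieve=lambda claim: ["The bridge opened in 1995. Tickets cost more now."],
    select=lambda claim, docs: [
        s.strip() for d in docs for s in d.split(".") if "bridge" in s
    ],
    # Fixed toy rule, not a real veracity model.
    predict=lambda claim, evidence: "refuted" if evidence else "not enough info",
    explain=lambda claim, evidence, label: f"{label}, based on: {evidence[0]}",
)
```

Passing the stages in as callables keeps the orchestration identical to the diagram while leaving each box free to be replaced independently.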

### Key Observations

* The process is strictly linear and feed-forward; there are no feedback loops or decision branches shown.

* The final step ("Extract an explanation...") is visually set apart with a green color scheme (outline and connecting arrow), suggesting it is a key output or a secondary, explanatory phase of the process.

* The language is precise and technical, using terms like "check-worthy," "veracity," and "relevant sentences," indicating a formal, likely academic or engineering, context for the system design.

### Interpretation

This flowchart models a standard pipeline for automated fact-checking or claim verification systems, common in natural language processing (NLP) and artificial intelligence research. The sequence reflects a logical investigative methodology: identify the question, gather sources, find evidence, make a judgment, and explain the reasoning.

The separation of "Predict the veracity" and "Extract an explanation" into two boxes is notable. It suggests an architecture in which the core prediction model (possibly a "black box" such as a neural network) is followed by a separate, possibly more interpretable, module that produces a rationale. Such a design would address the need for explainability and transparency in AI systems, especially those that make judgments about truth. The green highlighting suggests that the explanation is treated as a deliberate output of the verification process rather than an afterthought, although the diagram itself does not state why the step is highlighted.
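One way to picture this separation is a post hoc explainer that sees only the claim, the evidence, and the predicted label, never the predictor's internals. The sketch below is an assumption for illustration: the token-overlap heuristic stands in for whatever extraction method a real system would use, and `ToyPredictor` and `extract_explanation` are hypothetical names.

```python
import re
from typing import List


def _tokens(text: str) -> set:
    """Lowercased alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ToyPredictor:
    """Stand-in for an opaque veracity model; always returns one label."""

    def predict(self, claim: str, evidence: List[str]) -> str:
        return "refuted"


def extract_explanation(claim: str, evidence: List[str], label: str) -> str:
    """Pick the evidence sentence that shares the most tokens with the claim."""
    if not evidence:
        return f"{label}: no evidence available"
    claim_tokens = _tokens(claim)
    best = max(evidence, key=lambda s: len(_tokens(s) & claim_tokens))
    return f"{label}: {best}"


claim = "The bridge opened in 1990."
evidence = ["Ticket prices rose last year.", "The bridge opened in 1995."]
label = ToyPredictor().predict(claim, evidence)
explanation = extract_explanation(claim, evidence, label)
```

Because the explainer depends only on the predictor's output, either component can be swapped without changing the other, which is the modularity the two-box layout hints at.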