
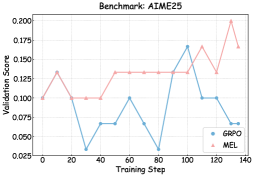

## Line Chart: Benchmark: AIME25

### Overview

The image is a line chart comparing the validation score performance of two models, labeled "GRPO" and "MEL," over the course of training steps on a benchmark titled "AIME25." The chart displays two distinct line series with markers, plotted against a grid.

### Components/Axes

* **Chart Title:** "Benchmark: AIME25" (centered at the top).

* **Y-Axis:** Labeled "Validation_Score". The scale runs from 0.025 to 0.200, with major tick marks at intervals of 0.025 (0.025, 0.050, 0.075, 0.100, 0.125, 0.150, 0.175, 0.200).

* **X-Axis:** Labeled "Training_Step". The scale runs from 0 to 140, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100, 120, 140).

* **Legend:** Located in the bottom-right corner of the plot area.

  * **GRPO:** Represented by a blue line with circular markers.

  * **MEL:** Represented by a red line with triangular markers.

* **Grid:** A light gray grid is present, aligning with the major ticks on both axes.

### Detailed Analysis

**Data Series: GRPO (Blue Line, Circle Markers)**

* **Trend:** The GRPO line exhibits high volatility, characterized by sharp peaks and deep troughs throughout the training steps. There is no consistent upward or downward trend; performance fluctuates dramatically.

* **Approximate Data Points:**

  * Step 0: ~0.100

  * Step 20: ~0.035 (sharp drop)

  * Step 40: ~0.075 (recovery)

  * Step 60: ~0.100 (peak)

  * Step 80: ~0.040 (sharp drop)

  * Step 100: ~0.165 (highest peak)

  * Step 120: ~0.100 (drop)

  * Step 140: ~0.060 (final point)

**Data Series: MEL (Red Line, Triangle Markers)**

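* **Trend:** The MEL line is more stable and trends upward overall. After an initial rise at step 20, it dips back to its starting value at step 40 and holds there through step 60. It then climbs in two stages, at step 80 and again at step 120, reaching its highest values in the final steps.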

* **Approximate Data Points:**

  * Step 0: ~0.100

  * Step 20: ~0.130 (rise)

  * Step 40: ~0.100 (dip)

  * Step 60: ~0.100 (plateau)

  * Step 80: ~0.130 (rise)

  * Step 100: ~0.130 (plateau)

  * Step 120: ~0.165 (peak, tied with GRPO's peak)

  * Step 140: ~0.165 (final point, maintains peak)
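
One way to put a number on the difference in volatility between the two series is the mean absolute change between consecutive checkpoints. The sketch below assumes the approximate values listed above, which are visual estimates read off the chart rather than logged metrics. The helper name `mean_abs_change` is hypothetical.

```python
# Approximate validation scores at steps 0, 20, ..., 140.
# These are visual estimates from the chart, not logged metrics.
grpo = [0.100, 0.035, 0.075, 0.100, 0.040, 0.165, 0.100, 0.060]
mel = [0.100, 0.130, 0.100, 0.100, 0.130, 0.130, 0.165, 0.165]


def mean_abs_change(scores):
    """Average absolute difference between consecutive checkpoints."""
    deltas = [abs(b - a) for a, b in zip(scores, scores[1:])]
    return sum(deltas) / len(deltas)


grpo_volatility = mean_abs_change(grpo)
mel_volatility = mean_abs_change(mel)
print(f"GRPO: {grpo_volatility:.3f}, MEL: {mel_volatility:.3f}")
```

With these estimates, GRPO moves by roughly 0.060 per 20-step interval on average, against roughly 0.018 for MEL.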

### Key Observations

1. **Performance Crossover:** The two models start at the same validation score (~0.100). MEL leads at step 20 (~0.130 vs ~0.035) and again at step 40, and the two are tied at step 60 (~0.100). GRPO leads only at step 100, its single dramatic peak (~0.165 vs MEL's ~0.130).

2. **Volatility vs. Stability:** GRPO's performance is highly unstable, ranging from approximately 0.035 to 0.165 (a spread of ~0.130). MEL's performance is more consistent, ranging from approximately 0.100 to 0.165 (a spread of ~0.065, half as wide).

3. **Final Performance:** At the final recorded step (140), MEL (~0.165) outperforms GRPO (~0.060) by about 0.105.

4. **Peak Alignment:** Both models achieve their highest observed validation score of ~0.165, but at different times (GRPO at step 100, MEL at steps 120 & 140).
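
The observations above can be checked against the approximate data points with a few lines of Python. This is a minimal sketch that assumes the visual estimates listed in the Detailed Analysis section; the variable and function names are illustrative.

```python
# Approximate values read off the chart; not exact logged metrics.
steps = [0, 20, 40, 60, 80, 100, 120, 140]
grpo = [0.100, 0.035, 0.075, 0.100, 0.040, 0.165, 0.100, 0.060]
mel = [0.100, 0.130, 0.100, 0.100, 0.130, 0.130, 0.165, 0.165]


def spread(scores):
    """Difference between the best and worst score, rounded to chart precision."""
    return round(max(scores) - min(scores), 3)


# Steps at which GRPO is strictly ahead of MEL, and steps at which they tie.
grpo_ahead = [s for s, g, m in zip(steps, grpo, mel) if g > m]
tied = [s for s, g, m in zip(steps, grpo, mel) if g == m]

# Gap between the two series at the last recorded step.
final_gap = round(mel[-1] - grpo[-1], 3)

print("GRPO ahead at steps:", grpo_ahead)
print("Tied at steps:", tied)
print("Spread (GRPO, MEL):", spread(grpo), spread(mel))
print("Final gap (MEL - GRPO):", final_gap)
```

The exact-equality test for ties works here only because both series use the same rounded estimates. With real logged scores, a tolerance-based comparison would be more appropriate.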

### Interpretation

The chart suggests a difference in the training dynamics of the two models on the AIME25 benchmark. **MEL** improves more steadily: it never falls below its starting score of ~0.100, and it holds its highest score (~0.165) across the last two recorded steps. **GRPO**, in contrast, is erratic. Possible causes include overfitting to specific training batches, sensitivity to hyperparameters, or an unstable optimization process, but the chart alone cannot distinguish among them. GRPO does reach a high score (~0.165 at step 100), but it does not sustain it.

The data implies that for this specific task, MEL is the more dependable model: its score at the end of training is also its best. GRPO's volatility makes its score at any given step hard to predict, and its final score (~0.060) is below its starting point. Because the chart shows a single curve per model, these conclusions apply to this run and may not generalize.