## Bar Chart: Model Performance Comparison (ECE and AUROC)

### Overview

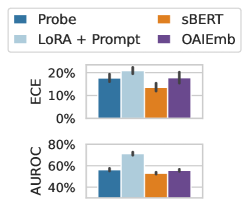

The image displays two vertically stacked bar charts comparing the performance of four different models or methods across two evaluation metrics: ECE (Expected Calibration Error) and AUROC (Area Under the Receiver Operating Characteristic Curve). The charts include error bars, indicating variability or confidence intervals for each measurement.

### Components/Axes

* **Legend:** Located at the top-left of the image. It defines four categories with associated colors:

    * **Probe** (Dark Blue)

    * **LoRA + Prompt** (Light Blue)

    * **sBERT** (Orange)

    * **OAIEmb** (Purple)

* **Top Chart (ECE):**

    * **Y-axis Label:** "ECE"

    * **Y-axis Scale:** Percentage, ranging from 0% to 20%, with major ticks at 0%, 10%, and 20%.

    * **X-axis:** Implicitly represents the four model categories from the legend. No explicit x-axis labels are present below the bars.

* **Bottom Chart (AUROC):**

    * **Y-axis Label:** "AUROC"

    * **Y-axis Scale:** Percentage, ranging from 40% to 80%, with major ticks at 40%, 60%, and 80%.

    * **X-axis:** Implicitly represents the same four model categories as the top chart.

### Detailed Analysis

**ECE Chart (Top):**

* **Trend Verification:** The bars for "Probe" and "LoRA + Prompt" are visually taller than those for "sBERT" and "OAIEmb". The error bars for "LoRA + Prompt" appear slightly larger than the others.

* **Data Points (Approximate):**

    * **Probe (Dark Blue):** ~18%

    * **LoRA + Prompt (Light Blue):** ~19%

    * **sBERT (Orange):** ~14%

    * **OAIEmb (Purple):** ~16%

**AUROC Chart (Bottom):**

* **Trend Verification:** The bar for "LoRA + Prompt" is distinctly the tallest. The bars for "Probe", "sBERT", and "OAIEmb" are of similar, lower height. The error bar for "LoRA + Prompt" is notably larger than the others.

* **Data Points (Approximate):**

    * **Probe (Dark Blue):** ~55%

    * **LoRA + Prompt (Light Blue):** ~65%

    * **sBERT (Orange):** ~50%

    * **OAIEmb (Purple):** ~52%

### Key Observations

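1. **Performance Trade-off:** "LoRA + Prompt" achieves the highest (best) AUROC (~65%) but also the highest (worst) ECE (~19%) among the four methods. This suggests a potential trade-off between discrimination ability (AUROC) and calibration (ECE), although its ECE is only about one percentage point above that of "Probe" (~18%), a gap that is small relative to the precision of values read off the chart.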

2. **Relative Rankings:** The ranking of models is not consistent across metrics. "Probe" is second-best on AUROC (~55%) but only third-best on ECE (~18%). "sBERT" has the lowest ECE (best calibration) but also the lowest AUROC (worst discrimination). "OAIEmb" is second-best on ECE (~16%) and third-best on AUROC (~52%).

3. **Variability:** The "LoRA + Prompt" method shows the largest error bars, particularly in the AUROC chart, indicating greater variance or uncertainty in its performance estimate compared to the other methods.
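As a quick check on the rankings, the approximate values listed under Detailed Analysis can be sorted per metric. This is a minimal Python sketch: the dictionary names and the `rank` helper are illustrative, and the numbers are visual estimates from the chart, not reported results.

```python
# Approximate values (in percent) read off the chart; visual estimates only.
ece_pct = {"Probe": 18, "LoRA + Prompt": 19, "sBERT": 14, "OAIEmb": 16}
auroc_pct = {"Probe": 55, "LoRA + Prompt": 65, "sBERT": 50, "OAIEmb": 52}


def rank(values, higher_is_better):
    """Return method names ordered from best to worst for one metric."""
    return sorted(values, key=values.get, reverse=higher_is_better)


# ECE: lower is better. AUROC: higher is better.
ece_ranking = rank(ece_pct, higher_is_better=False)
auroc_ranking = rank(auroc_pct, higher_is_better=True)

print("ECE (best to worst):  ", ece_ranking)
# ['sBERT', 'OAIEmb', 'Probe', 'LoRA + Prompt']
print("AUROC (best to worst):", auroc_ranking)
# ['LoRA + Prompt', 'Probe', 'OAIEmb', 'sBERT']
```

Because the inputs are eyeballed to roughly one percentage point, close pairs such as Probe and LoRA + Prompt on ECE could swap places under a more precise reading.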

### Interpretation

This chart likely comes from a machine learning or natural language processing study evaluating different techniques (probing, fine-tuning with LoRA and prompts, sentence-BERT embeddings, and OpenAI embeddings) on a classification task.

* **ECE (Expected Calibration Error)** measures how well a model's predicted probabilities match the actual correctness likelihood. A lower ECE is better, meaning the model is well-calibrated (e.g., when it predicts 70% confidence, it is correct about 70% of the time). The data suggests that simpler embedding methods (`sBERT`, `OAIEmb`) may be better calibrated than more complex adaptation methods (`LoRA + Prompt`).

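* **AUROC** measures the model's ability to distinguish between classes. A higher AUROC is better, and 50% corresponds to chance-level ranking. Here, `LoRA + Prompt` (~65%) demonstrates the strongest discriminative power, which is often the primary goal in many applications, while `sBERT` (~50%) and `OAIEmb` (~52%) sit close to chance.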
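To make the two metrics concrete, here is a minimal pure-Python sketch of how each could be computed for a binary task. The function names, the equal-width binning, and the default of 10 bins are assumptions chosen for illustration; the chart does not say how the underlying study computed ECE or AUROC.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted average of |accuracy - mean confidence| per bin.

    confidences: predicted probabilities in [0, 1] for the chosen answer.
    correct: booleans, True where the prediction was right.
    n_bins: number of equal-width bins (an assumed, commonly used default).
    """
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, is_correct in zip(confidences, correct):
        # Clamp so that conf == 1.0 falls into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, is_correct))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece


def auroc(scores, labels):
    """AUROC as the probability that a random positive outscores a random
    negative, counting ties as one half (the Mann-Whitney formulation)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data: always 90% confident but right only half the time.
overconfident = expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5)
print(round(overconfident, 3))  # approximately 0.4

# Toy data: three of the four positive/negative pairs are ordered correctly.
print(auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))  # 0.75
```

The pairwise AUROC loop is quadratic in the number of examples, which is fine for illustration; a rank-based implementation would be preferable for large evaluation sets.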

The key takeaway is that the choice of method involves a balance. If reliable probability estimates are crucial (e.g., for risk assessment), `sBERT` might be preferable despite its weaker discrimination. If maximizing discrimination is the sole objective, `LoRA + Prompt` is the best choice, albeit with less reliable confidence scores and higher performance variance. Note that AUROC measures how well the scores rank examples and is not the same as classification accuracy. The `Probe` method sits between these extremes: second-best on AUROC and third-best on ECE.