TECHNICAL ASSET FINGERPRINT

707bb78ba34001340f9c32e6



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

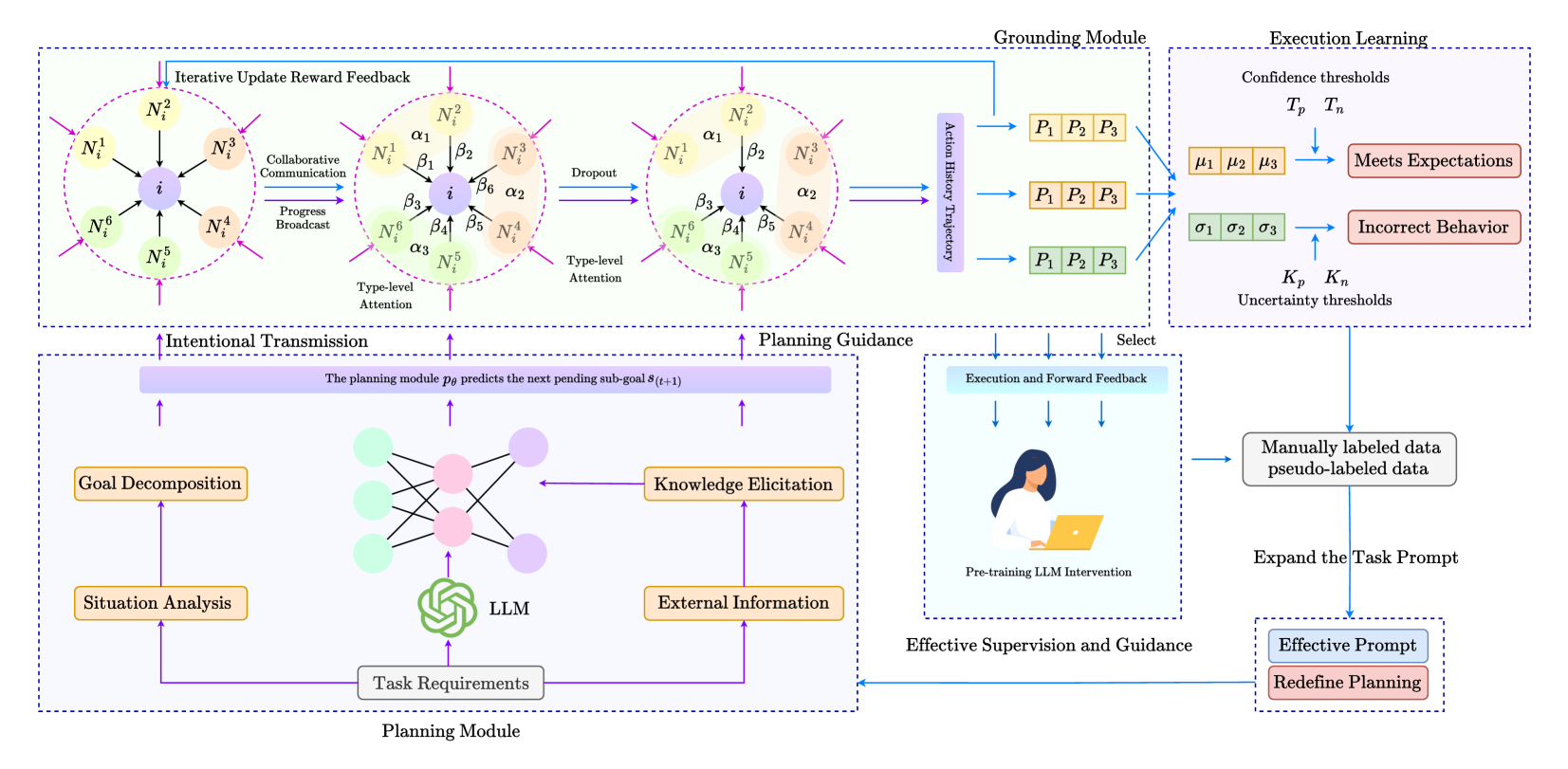

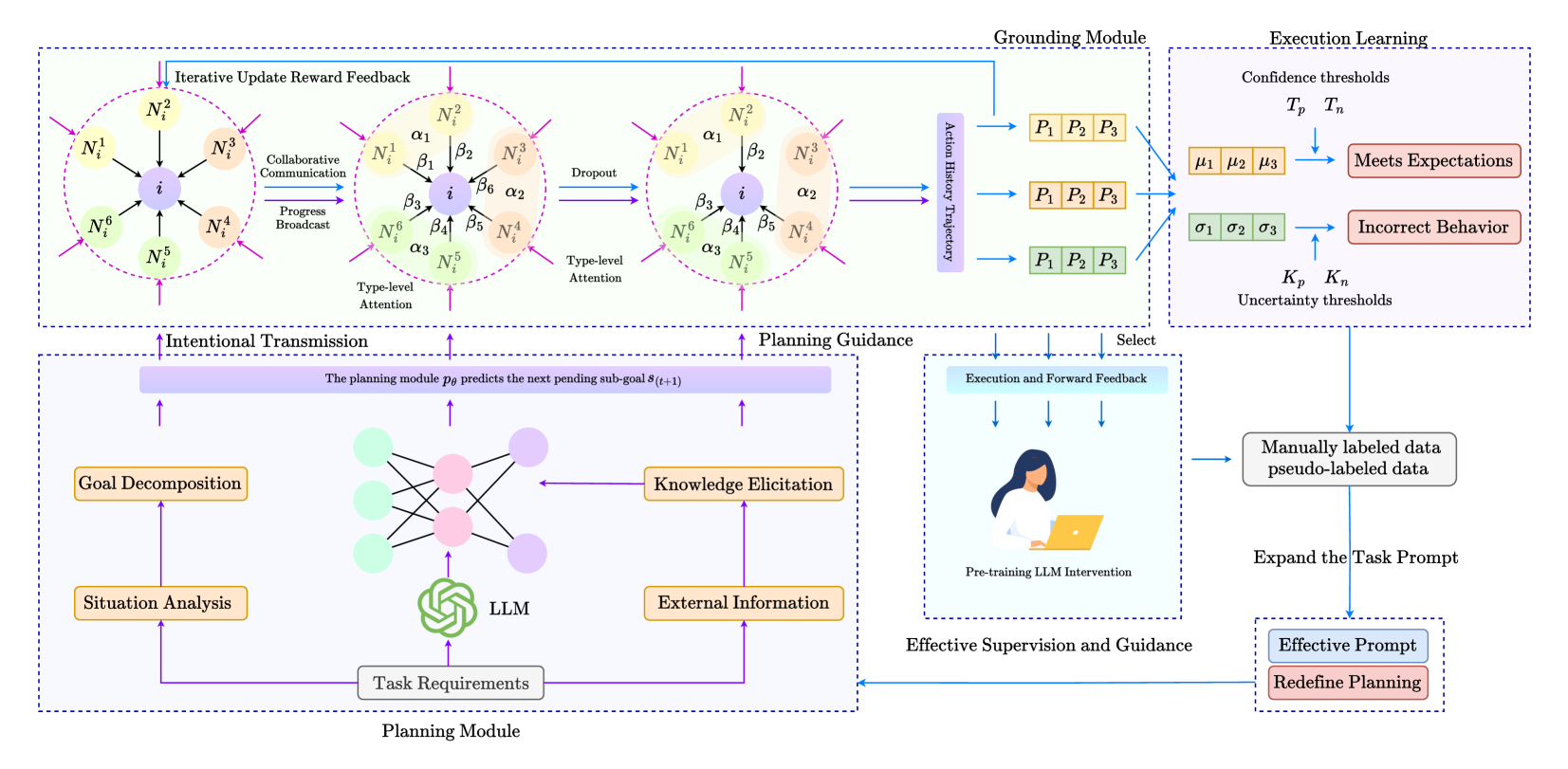

## System Diagram: Enhanced LLM Planning and Execution

### Overview

The image presents a system diagram illustrating an enhanced Large Language Model (LLM) planning and execution framework. It details the interaction between a Planning Module, a Grounding Module, and an Execution Learning component, emphasizing iterative feedback loops and attention mechanisms.

### Components/Axes

* **Modules:**

    * Planning Module (bottom): Responsible for task decomposition, knowledge elicitation, and generating plans based on task requirements.

    * Grounding Module (top-right): Connects the LLM's plans to real-world actions, using action history trajectories.

    * Execution Learning (top-right): Evaluates the execution of plans, identifies incorrect behaviors, and refines the system through feedback.

* **Processes:**

    * Iterative Update Reward Feedback (top): Refines the attention mechanisms based on the outcomes of actions.

    * Intentional Transmission (bottom-center): Represents the flow of information from the Planning Module to guide the Grounding Module.

    * Planning Guidance (top-center): Directs the Grounding Module based on the Planning Module's output.

    * Effective Supervision and Guidance (bottom-center): Provides human intervention and feedback to improve the LLM's performance.

* **Nodes:**

    * Nodes labeled N<sub>i</sub><sup>1</sup> to N<sub>i</sub><sup>6</sup> represent different aspects or features considered by the attention mechanisms.

    * Nodes labeled α<sub>1</sub> to α<sub>3</sub> and β<sub>1</sub> to β<sub>6</sub> represent attention weights or parameters.

* **Data Flow:**

    * Purple arrows indicate the flow of information and control signals between modules and processes.

    * Blue arrows indicate feedback loops and refinement processes.

### Detailed Analysis

* **Planning Module (Bottom):**

    * Inputs: Task Requirements (gray rectangle), Situation Analysis (orange rectangle), External Information (orange rectangle).

    * Processes: Goal Decomposition (orange rectangle), Knowledge Elicitation (orange rectangle).

    * Core: LLM (green stylized icon).

    * Output: The planning module p<sub>θ</sub> predicts the next pending sub-goal s<sub>(t+1)</sub> (lavender rectangle).

* **Attention Mechanisms (Top-Center):**

    * Collaborative Communication: Nodes N<sub>i</sub><sup>1</sup> to N<sub>i</sub><sup>6</sup> communicate with a central node 'i' (purple).

    * Type-level Attention: Nodes N<sub>i</sub><sup>1</sup> to N<sub>i</sub><sup>6</sup> are associated with attention weights α<sub>1</sub> to α<sub>3</sub> and β<sub>1</sub> to β<sub>6</sub>.

    * Dropout: Represents a regularization technique where some nodes are randomly dropped during training.

* **Grounding Module (Top-Right):**

    * Input: Action History Trajectory (purple rectangle).

    * Process: Transforms plans into actions P<sub>1</sub>, P<sub>2</sub>, P<sub>3</sub> (orange and green rectangles).

* **Execution Learning (Top-Right):**

    * Confidence Thresholds: T<sub>p</sub>, T<sub>n</sub>.

    * Evaluation: Compares predicted outcomes (μ<sub>1</sub>, μ<sub>2</sub>, μ<sub>3</sub> - orange rectangles; σ<sub>1</sub>, σ<sub>2</sub>, σ<sub>3</sub> - green rectangles) with actual results.

    * Outcomes:

        * Meets Expectations (red rectangle).

        * Incorrect Behavior (red rectangle).

    * Uncertainty Thresholds: K<sub>p</sub>, K<sub>n</sub>.

* **Feedback Loops:**

    * Iterative Update Reward Feedback: Refines the attention mechanisms based on the outcomes of actions.

    * Execution and Forward Feedback: Provides feedback to the Planning Module.

    * Effective Supervision and Guidance: Allows for human intervention to correct and refine the LLM's behavior.

    * Expand the Task Prompt: Modifies the task prompt based on the LLM's performance.

    * Redefine Planning: Adjusts the planning strategy based on the LLM's performance.

* **Effective Supervision and Guidance (Bottom-Center):**

    * Includes a visual representation of a person using a laptop, labeled "Pre-training LLM Intervention".
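The attention bullets above name type-level attention weights (α, β) and dropout over the neighbors of node `i`, but the diagram gives no formulas. The following is a minimal sketch under stated assumptions, not the figure's actual method: it assumes the six neighbors are grouped into three types, that β weights come from a softmax over neighbors within a type, that α weights come from a softmax over types, and that dropout simply removes neighbors before the weights are renormalized. The function name, arguments, and grouping are all hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def type_level_attention(neighbor_feats, neighbor_types, node_scores,
                         type_scores, drop_prob=0.0, rng=None):
    """Aggregate neighbor features for a central node i (illustrative only).

    neighbor_feats: one feature vector per neighbor N_i^j
    neighbor_types: assumed type index of each neighbor
    node_scores:    raw score per neighbor, turned into beta_j within its type
    type_scores:    raw score per type, turned into alpha_k across types
    drop_prob:      probability of dropping each neighbor
    """
    rng = rng or random.Random(0)
    keep = [rng.random() >= drop_prob for _ in neighbor_feats]
    dim = len(neighbor_feats[0])

    summaries, active_scores = [], []
    for k in range(len(type_scores)):
        members = [j for j, t in enumerate(neighbor_types) if t == k and keep[j]]
        if not members:
            continue  # every neighbor of this type was dropped
        betas = softmax([node_scores[j] for j in members])
        summaries.append([
            sum(b * neighbor_feats[j][d] for b, j in zip(betas, members))
            for d in range(dim)
        ])
        active_scores.append(type_scores[k])

    if not summaries:
        return [0.0] * dim  # all neighbors dropped
    alphas = softmax(active_scores)
    return [sum(a * s[d] for a, s in zip(alphas, summaries)) for d in range(dim)]
```

Because both levels use weights that sum to one, the result is a convex combination of the surviving neighbor features. Standard dropout instead rescales activations by `1 / (1 - p)`; renormalizing through the softmax is a simplification chosen here to keep the sketch short.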

### Key Observations

* The diagram emphasizes the iterative nature of the planning and execution process, with multiple feedback loops for refinement.

* Attention mechanisms play a crucial role in focusing the LLM's resources on the most relevant aspects of the task.

* Human intervention is incorporated to provide supervision and guidance, ensuring the LLM's behavior aligns with desired outcomes.

* The system incorporates mechanisms for handling uncertainty and correcting incorrect behaviors.

### Interpretation

The diagram illustrates a sophisticated framework for enhancing LLM planning and execution. By incorporating attention mechanisms, feedback loops, and human supervision, the system aims to improve the LLM's ability to generate effective plans and execute them successfully in real-world scenarios. The iterative nature of the process allows the LLM to learn from its mistakes and refine its planning strategies over time. The inclusion of a Grounding Module bridges the gap between the LLM's abstract plans and concrete actions, enabling it to interact with the environment effectively. The system's ability to handle uncertainty and correct incorrect behaviors makes it more robust and reliable.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Iterative Planning and Execution Framework

### Overview

This diagram illustrates an iterative planning and execution framework, likely for a Large Language Model (LLM) based agent. The framework consists of a Planning Module and a Grounding Module, with iterative feedback loops between them and an Execution Learning component. The diagram emphasizes the interplay between goal decomposition, knowledge elicitation, planning guidance, and execution monitoring.

### Components/Axes

The diagram is segmented into three main modules:

* **Planning Module (Bottom):** Contains "Situation Analysis", "Task Requirements", "Goal Decomposition", "LLM", "Knowledge Elicitation", and "External Information".

* **Intentional Transmission/Planning Guidance (Center):** Connects the Planning Module to the Grounding Module. Includes elements like "Type-level Attention", "Dropout", and "Iterative Update Reward Feedback".

* **Grounding Module (Right):** Contains "Execution Learning", "Action History Trajectory", and "Confidence/Uncertainty Thresholds".

Key labels and text elements include:

* "Iterative Update Reward Feedback"

* "Collaborative Communication"

* "Progress Broadcast"

* "Type-level Attention" (appears twice, with slight variations)

* "Dropout"

* "Intentional Transmission"

* "Planning Guidance" - "The planning module predicts the next pending sub-goal s(t+1)"

* "Action History Trajectory"

* "Confidence thresholds: T<sub>p</sub>, T<sub>n</sub>"

* "Meets Expectations: μ<sub>1</sub>, μ<sub>2</sub>, μ<sub>3</sub>"

* "Incorrect Behavior: σ<sub>1</sub>, σ<sub>2</sub>, σ<sub>3</sub>"

* "Uncertainty thresholds: K<sub>p</sub>, K<sub>n</sub>"

* "Manually labeled data" and "pseudo-labeled data"

* "Expand the Task Prompt"

* "Effective Supervision and Guidance"

* "Effective Prompt"

* "Redefine Planning"

* Nodes labeled N<sup>1</sup> through N<sup>6</sup> (appearing multiple times)

* Nodes labeled α<sub>1</sub> through α<sub>3</sub> (appearing multiple times)

* Nodes labeled β<sub>1</sub> through β<sub>3</sub> (appearing multiple times)

* "Pre-training LLM Intervention"

### Detailed Analysis or Content Details

The diagram depicts a cyclical process.

**Planning Module:**

* "Situation Analysis" and "Task Requirements" feed into the "LLM".

* The LLM outputs to "Goal Decomposition" and "Knowledge Elicitation".

* "Knowledge Elicitation" incorporates "External Information".

* Both "Goal Decomposition" and "Knowledge Elicitation" contribute to the "Intentional Transmission" stage.

**Intentional Transmission/Planning Guidance:**

* The "Intentional Transmission" stage involves a network of nodes (N<sup>1</sup>-N<sup>6</sup>, α<sub>1</sub>-α<sub>3</sub>, β<sub>1</sub>-β<sub>3</sub>) arranged in two parallel pathways.

* The left pathway shows nodes N<sup>1</sup>, N<sup>2</sup>, N<sup>3</sup>, N<sup>4</sup>, N<sup>5</sup>, N<sup>6</sup> connected by arrows.

* The right pathway shows nodes N<sup>1</sup>, N<sup>2</sup>, N<sup>3</sup>, N<sup>4</sup>, N<sup>5</sup>, N<sup>6</sup> connected by arrows.

* "Type-level Attention" and "Dropout" are applied within these pathways.

* "Iterative Update Reward Feedback" provides input to this stage.

**Grounding Module:**

* "Planning Guidance" feeds into the "Action History Trajectory".

* The "Action History Trajectory" is represented as a series of states P<sub>1</sub>, P<sub>2</sub>, P<sub>3</sub>, repeated three times.

* "Execution Learning" uses these states to determine "Meets Expectations" (μ<sub>1</sub>, μ<sub>2</sub>, μ<sub>3</sub>) or "Incorrect Behavior" (σ<sub>1</sub>, σ<sub>2</sub>, σ<sub>3</sub>) based on "Confidence" and "Uncertainty" thresholds.

* The "Execution Learning" component then influences the "Expand the Task Prompt" and "Redefine Planning" stages, completing the cycle.

* "Pre-training LLM Intervention" provides input to "Effective Supervision and Guidance".
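The description above says Execution Learning separates "Meets Expectations" from "Incorrect Behavior" using confidence and uncertainty thresholds, but the diagram does not state the decision rule. Here is a minimal sketch, assuming that μ is a mean confidence score and σ an uncertainty estimate for each step, and that a step is labeled only when its uncertainty is low enough. The `Thresholds` class, `evaluate_step`, and the `"undecided"` outcome are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Thresholds:
    t_pos: float  # T_p: minimum confidence to accept a step (assumed meaning)
    t_neg: float  # T_n: maximum confidence to reject a step (assumed meaning)
    k_pos: float  # K_p: maximum uncertainty allowed when accepting
    k_neg: float  # K_n: maximum uncertainty allowed when rejecting


def evaluate_step(mu, sigma, th):
    """Classify one step from its confidence (mu) and uncertainty (sigma)."""
    if mu >= th.t_pos and sigma <= th.k_pos:
        return "meets_expectations"
    if mu <= th.t_neg and sigma <= th.k_neg:
        return "incorrect_behavior"
    # Neither confident nor certain enough: leave the step unlabeled.
    return "undecided"


def evaluate_trajectory(stats, th):
    """Apply evaluate_step to a list of (mu, sigma) pairs, e.g. for P1..P3."""
    return [evaluate_step(mu, sigma, th) for mu, sigma in stats]
```

For example, `evaluate_trajectory([(0.9, 0.05), (0.1, 0.05), (0.9, 0.3)], Thresholds(0.8, 0.2, 0.1, 0.1))` labels the first step as meeting expectations, the second as incorrect behavior, and leaves the third undecided because its uncertainty exceeds `k_pos`.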

### Key Observations

* The diagram emphasizes iterative refinement through feedback loops.

* The parallel pathways in the "Intentional Transmission" stage suggest exploration of multiple planning options.

* The use of confidence and uncertainty thresholds indicates a probabilistic approach to execution monitoring.

* The integration of manually labeled data with pseudo-labeled data suggests a semi-supervised learning approach.

* The diagram is highly conceptual and does not provide specific numerical data.
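The semi-supervised reading in the observations above can be made concrete with a small sketch of how manually labeled and pseudo-labeled examples might be combined into one training set. This is an assumption-laden illustration rather than the procedure behind the figure: `build_training_set`, its arguments, and the default cut-offs of 0.9 and 0.1 are hypothetical, and the uncertainty thresholds are left out for brevity.

```python
def build_training_set(manual, unlabeled_scores, t_pos=0.9, t_neg=0.1):
    """Merge manual labels with pseudo-labels chosen by confidence.

    manual:           list of (example_id, label) pairs with trusted labels
    unlabeled_scores: dict mapping example_id to a model score in [0, 1]
    Returns a list of (example_id, label, source) triples.
    """
    dataset = [(ex, label, "manual") for ex, label in manual]
    manual_ids = {ex for ex, _ in manual}
    for ex, score in unlabeled_scores.items():
        if ex in manual_ids:
            continue  # a manual label always takes precedence
        if score >= t_pos:
            dataset.append((ex, 1, "pseudo"))
        elif score <= t_neg:
            dataset.append((ex, 0, "pseudo"))
        # Scores between the two cut-offs are too ambiguous to pseudo-label.
    return dataset
```

Keeping the `source` field makes it possible to weight or audit pseudo-labeled examples separately from manually labeled ones.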

### Interpretation

This diagram represents a sophisticated framework for controlling an LLM-based agent. The core idea is to move beyond simple prompt engineering and towards a more robust, iterative planning and execution process. The "Planning Module" acts as the agent's reasoning engine, decomposing goals and leveraging external knowledge. The "Grounding Module" provides a mechanism for evaluating the agent's actions and providing feedback, allowing it to learn from its mistakes and refine its plans.

The "Intentional Transmission" stage, with its parallel pathways and attention mechanisms, suggests a process of exploring multiple potential plans and selecting the most promising one. The use of confidence and uncertainty thresholds in the "Execution Learning" component indicates a nuanced understanding of the agent's capabilities and limitations.

The diagram highlights the importance of both supervised learning (through manually labeled data) and semi-supervised learning (through pseudo-labeled data) in training the agent. The feedback loops between the "Grounding Module" and the "Planning Module" suggest a continuous learning process, where the agent constantly adapts its plans based on its experiences.

The diagram is a high-level overview and does not provide details on the specific algorithms or techniques used in each component. However, it provides a valuable conceptual framework for understanding how an LLM-based agent can be designed to perform complex tasks in a reliable and efficient manner. The diagram suggests a system designed for continual improvement and adaptation, rather than a static, pre-programmed solution.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: Multi-Agent Planning and Execution Learning Framework

### Overview

This image is a technical system architecture diagram illustrating a multi-component framework for AI agent planning, grounding, execution learning, and human-in-the-loop supervision. The diagram is divided into four primary modules connected by data and feedback flows. The overall aesthetic uses a clean, academic style with color-coded components (orange, green, purple, blue) and directional arrows to indicate information flow.

### Components/Axes

The diagram is segmented into four major dashed-line boxes, each representing a core module:

1. **Top-Left Module: Grounding Module**

* **Sub-components:** Three circular diagrams representing iterative communication and attention among agent nodes.

* **Labels within circles:** Nodes labeled `N_i^1`, `N_i^2`, `N_i^3`, `N_i^4`, `N_i^5`, `N_i^6` surrounding a central node `i`.

* **Connection Labels:** Greek letters `α₁`, `α₂`, `α₃`, `β₁`, `β₂`, `β₃`, `β₄`, `β₅`, `β₆` on the connecting lines between nodes.

* **Process Labels:** "Iterative Update Reward Feedback", "Collaborative Communication", "Progress Broadcast", "Dropout", "Type-level Attention".

* **Output:** An "Action History Trajectory" block leading to three sets of `P₁ P₂ P₃` blocks (colored orange, yellow, green).

2. **Top-Right Module: Execution Learning**

* **Inputs:** The three `P₁ P₂ P₃` blocks from the Grounding Module.

* **Key Elements:**

* "Confidence thresholds" labeled `Tₚ`, `Tₙ`.

* "Uncertainty thresholds" labeled `Kₚ`, `Kₙ`.

* A set of orange boxes labeled `μ₁`, `μ₂`, `μ₃`.

* A set of green boxes labeled `σ₁`, `σ₂`, `σ₃`.

* **Decision Blocks:** "Meets Expectations" (orange outline) and "Incorrect Behavior" (green outline).

* **Flow:** Arrows indicate that the `P` blocks are evaluated against thresholds to produce either expectation-meeting or incorrect behavior outputs.

3. **Bottom-Left Module: Planning Module**

* **Central Element:** A green "LLM" icon (resembling the OpenAI logo) connected to a neural network diagram.

* **Input Blocks:** "Task Requirements" (grey), "Situation Analysis" (orange), "Goal Decomposition" (orange).

* **Process Blocks:** "Knowledge Elicitation" (orange), "External Information" (orange).

* **Core Function Text:** A purple bar states: "The planning module `p_θ` predicts the next pending sub-goal `s_(t+1)`".

* **Output:** Labeled "Intentional Transmission" and "Planning Guidance", with arrows pointing up to the Grounding Module.

4. **Bottom-Right Module: Effective Supervision and Guidance**

* **Central Element:** An illustration of a person at a laptop, labeled "Pre-training LLM Intervention".

* **Input:** "Execution and Forward Feedback" from the Execution Learning module.

* **Data Sources:** "Manually labeled data" and "pseudo-labeled data".

* **Process:** "Expand the Task Prompt".

* **Output Blocks:** "Effective Prompt" (blue) and "Redefine Planning" (red).

* **Feedback Loop:** A blue arrow labeled "Select" feeds back into the Execution Learning module. Another arrow feeds back into the Planning Module.

### Detailed Analysis

**Flow and Connections:**

1. The **Planning Module** receives "Task Requirements" and uses an LLM to perform situation analysis, goal decomposition, and knowledge elicitation. It predicts the next sub-goal (`s_(t+1)`) and sends "Intentional Transmission" and "Planning Guidance" to the Grounding Module.

2. The **Grounding Module** processes this guidance through a multi-agent communication protocol. Three stages are shown:

* Stage 1: Initial collaborative communication and progress broadcast among nodes (`N_i^1` to `N_i^6`).

* Stage 2: Application of "Type-level Attention" (weights `α` and `β`).

* Stage 3: A "Dropout" operation, resulting in a refined attention state.

* This process generates an "Action History Trajectory" which outputs three sequences of actions (`P₁ P₂ P₃`).

3. The **Execution Learning** module evaluates these action sequences. It uses confidence (`Tₚ`, `Tₙ`) and uncertainty (`Kₚ`, `Kₙ`) thresholds. The evaluation produces metrics (`μ` series, `σ` series) and classifies outcomes as "Meets Expectations" or "Incorrect Behavior".

4. The **Effective Supervision and Guidance** module takes the execution feedback. A human-in-the-loop ("Pre-training LLM Intervention") uses manually and pseudo-labeled data to "Expand the Task Prompt". This generates an "Effective Prompt" and helps "Redefine Planning", creating a feedback loop that refines both the Execution Learning selection criteria and the Planning Module itself.
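The four-step flow above can be sketched as a control loop. This is a hedged skeleton only: the diagram does not define any interfaces, so `run_episode`, its callable parameters, and the `"DONE"` sentinel are hypothetical, and each module is reduced to a plain function supplied by the caller.

```python
from typing import Callable, List, Tuple


def run_episode(
    task: str,
    plan_next: Callable[[str, List[str]], str],  # Planning Module: predicts s_(t+1)
    ground: Callable[[str], List[str]],          # Grounding Module: sub-goal -> actions
    evaluate: Callable[[List[str]], bool],       # Execution Learning: meets expectations?
    supervise: Callable[[str, str], str],        # Supervision: returns an expanded prompt
    max_steps: int = 5,
) -> Tuple[str, List[str]]:
    """Run plan -> ground -> evaluate -> supervise until done or out of steps."""
    prompt = task
    completed: List[str] = []
    for _ in range(max_steps):
        sub_goal = plan_next(prompt, completed)
        if sub_goal == "DONE":  # hypothetical sentinel meaning no sub-goal is pending
            break
        actions = ground(sub_goal)
        if evaluate(actions):
            completed.append(sub_goal)
        else:
            # Incorrect behavior: expand the prompt, then replan the same sub-goal.
            prompt = supervise(prompt, sub_goal)
    return prompt, completed
```

Passing the modules in as callables keeps the loop independent of any particular LLM or environment, so each stage can be replaced by a stub when testing the control flow.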

**Spatial Grounding:**

* The **Legend/Color Code** is implicit but consistent:

* **Orange:** Associated with planning, goals, and positive outcomes ("Meets Expectations").

* **Green:** Associated with the core LLM and negative outcomes ("Incorrect Behavior").

* **Purple:** Associated with core predictive functions and attention mechanisms.

* **Blue:** Associated with feedback, supervision, and prompt engineering.

* The **Execution Learning** module is positioned in the top-right quadrant.

* The **Planning Module** is in the bottom-left quadrant.

* The **Grounding Module** spans the top-left and top-center.

* The **Supervision** module is in the bottom-right quadrant.

### Key Observations

1. **Closed-Loop System:** The diagram explicitly shows a closed-loop system where execution outcomes directly inform and refine the planning and prompting strategies.

2. **Human-in-the-Loop:** The inclusion of "Pre-training LLM Intervention" and manual data labeling indicates a hybrid system that combines automated learning with human oversight.

3. **Multi-Agent Attention:** The Grounding Module details a sophisticated attention mechanism (`α`, `β` weights) among multiple nodes (`N_i`), suggesting a distributed or ensemble approach to action selection.

4. **Threshold-Based Evaluation:** Execution outcomes are classified by comparing each result against confidence and uncertainty thresholds (`T`, `K`) rather than by a single pass/fail check, allowing for more nuanced performance assessment.

5. **Prompt Engineering as a Control Lever:** The system uses "Expand the Task Prompt" and "Effective Prompt" as key mechanisms for supervision, highlighting the central role of prompt design in guiding the LLM's behavior.

### Interpretation

This diagram outlines a comprehensive framework for building more reliable and adaptable LLM-based agents. The core innovation appears to be the integration of three critical layers:

1. **Strategic Planning (Planning Module):** Where high-level goals are decomposed using an LLM.

2. **Tactical Grounding (Grounding Module):** Where plans are translated into coordinated actions through a multi-agent attention mechanism, adding robustness.

3. **Operational Learning (Execution Learning & Supervision):** Where actions are evaluated against real-world outcomes, and failures are used to systematically improve the system—either by adjusting internal thresholds or by refining the prompts that guide the core LLM.

The framework addresses key challenges in LLM deployment: the "grounding problem" (connecting plans to executable actions) and the "alignment problem" (ensuring actions meet expectations). By creating a feedback loop from execution failure back to prompt and plan redefinition, the system aims for continuous, supervised improvement. The presence of both manual and pseudo-labeled data suggests a practical approach to scaling supervision. This architecture would be relevant for complex, multi-step tasks where initial LLM plans require validation and iterative refinement in a dynamic environment.


EXPERT: jina-vlm VERSION 2

RUNTIME: jina-vlm

INTEL_VERIFIED

## Diagram Type: Flowchart

### Overview

The diagram illustrates a complex system involving multiple components and processes. It appears to be a flowchart that outlines the steps and interactions within a system, possibly related to machine learning or artificial intelligence.

### Components/Axes

- **Grounding Module**: This is the central part of the diagram, where various elements are connected.

- **Execution Learning**: This section shows the process of learning and execution.

- **Planning Guidance**: This part involves planning and guidance.

- **Intentional Transmission**: This section deals with the transmission of intentions.

- **Goal Decomposition**: This involves breaking down goals into smaller parts.

- **Situation Analysis**: This section analyzes the current situation.

- **Knowledge Elicitation**: This involves eliciting knowledge.

- **External Information**: This section deals with external information.

- **Task Requirements**: This section outlines the task requirements.

- **LLM**: This stands for Large Language Model, which is integrated into the system.

- **Manually labeled data**: This section shows the use of labeled data for training.

- **Pseudo-labeled data**: This section shows the use of pseudo-labeled data for training.

- **Effective Supervision and Guidance**: This section deals with effective supervision and guidance.

- **Redefine Planning**: This section involves redefining planning.

### Detailed Analysis or Content Details

- **Grounding Module**: This module is connected to various other components, indicating its central role in the system.

- **Execution Learning**: This section shows the process of learning and execution, with arrows indicating the flow of information.

- **Planning Guidance**: This part involves planning and guidance, with arrows indicating the flow of information.

- **Intentional Transmission**: This section deals with the transmission of intentions, with arrows indicating the flow of information.

- **Goal Decomposition**: This involves breaking down goals into smaller parts, with arrows indicating the flow of information.

- **Situation Analysis**: This section analyzes the current situation, with arrows indicating the flow of information.

- **Knowledge Elicitation**: This involves eliciting knowledge, with arrows indicating the flow of information.

- **External Information**: This section deals with external information, with arrows indicating the flow of information.

- **Task Requirements**: This section outlines the task requirements, with arrows indicating the flow of information.

- **LLM**: This stands for Large Language Model, which is integrated into the system, with arrows indicating the flow of information.

- **Manually labeled data**: This section shows the use of labeled data for training, with arrows indicating the flow of information.

- **Pseudo-labeled data**: This section shows the use of pseudo-labeled data for training, with arrows indicating the flow of information.

- **Effective Supervision and Guidance**: This section deals with effective supervision and guidance, with arrows indicating the flow of information.

- **Redefine Planning**: This section involves redefining planning, with arrows indicating the flow of information.

### Key Observations

- The diagram is complex, with multiple components and processes.

- The Grounding Module is central to the system.

- The Execution Learning, Planning Guidance, Intentional Transmission, Goal Decomposition, Situation Analysis, Knowledge Elicitation, External Information, Task Requirements, LLM, Manually labeled data, Pseudo-labeled data, Effective Supervision and Guidance, and Redefine Planning are all interconnected.

- The diagram uses arrows to indicate the flow of information between components.

### Interpretation

The diagram depicts a system of interconnected components, with the Grounding Module at its center and arrows indicating the flow of information between components. The system appears to be designed to learn and execute tasks based on the input of these components. The use of an LLM and manually labeled data suggests that the system learns from human input, and the Redefine Planning mechanism indicates that it is designed to adapt to changing circumstances.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Multi-Stage AI System Architecture with Human-in-the-Loop Supervision

### Overview

The diagram illustrates a complex AI system architecture divided into four interconnected modules: Planning Module, Grounding Module, Execution Learning, and Effective Supervision and Guidance. The system emphasizes iterative feedback loops, human oversight, and adaptive learning through confidence/uncertainty thresholds.

### Components/Axes

1. **Planning Module** (Bottom Left)

- **Goal Decomposition**: Breaks down high-level goals into sub-goals

- **Situation Analysis**: Evaluates current state/context

- **Task Requirements**: Defines operational constraints

- **LLM (Large Language Model)**: Core processing unit

- **Knowledge Elicitation**: Extracts domain-specific knowledge

- **External Information**: Integrates real-world data

- **Intentional Transmission**: Predicts next sub-goal (s(t+1))

2. **Grounding Module** (Top Left)

- **Iterative Update Reward Feedback**: Adjusts performance metrics

- **Collaborative Communication**: Enables agent coordination

- **Progress Broadcast**: Shares status updates

- **Type-level Attention**: Focuses on critical elements

- **Dropout**: Introduces stochasticity for robustness

- **Action History Trajectory**: Tracks past decisions

3. **Execution Learning** (Top Right)

- **Confidence Thresholds (T_p, T_n)**: Determines action validity

- **Uncertainty Thresholds (K_p, K_n)**: Manages risk assessment

- **Meets Expectations**: Successful outcomes

- **Incorrect Behavior**: Failure cases

4. **Effective Supervision and Guidance** (Bottom Right)

- **Pre-training LLM Intervention**: Human-guided model training

- **Manually labeled data**: Ground truth dataset

- **Pseudo-labeled data**: Automated annotations

- **Expand the Task Prompt**: Enhances task understanding

- **Effective Prompt**: Optimized input formulation

- **Redefine Planning**: Iterative strategy adjustment

### Detailed Analysis

- **Flow Direction**:

- Planning → Grounding → Execution → Supervision

- Feedback loops connect Execution Learning back to Planning Module

- Human intervention occurs at multiple stages (pre-training, supervision)

- **Color Coding**:

- Blue: Intentional Transmission/Planning Guidance

- Purple: Collaborative Communication/Action History

- Green: Type-level Attention/Effective Supervision

- Orange: Knowledge Elicitation/External Information

- **Key Connections**:

- Planning Module's LLM output feeds into Grounding Module's Type-level Attention

- Execution Learning's confidence thresholds influence Planning Module's sub-goal prediction

- Human supervision directly impacts all modules through data labeling and prompt engineering

### Key Observations

1. **Iterative Nature**: The system emphasizes continuous improvement through feedback loops

2. **Human-AI Collaboration**: Multiple points of human intervention (pre-training, supervision, prompt engineering)

3. **Risk Management**: Explicit confidence/uncertainty thresholds suggest safety-critical applications

4. **Modular Design**: Clear separation of concerns between planning, execution, and supervision

### Interpretation

This architecture represents a sophisticated AI system designed for complex, dynamic environments requiring:

1. **Adaptive Planning**: Continuous sub-goal prediction and adjustment

2. **Robust Execution**: Confidence-based decision making with uncertainty quantification

3. **Human Oversight**: Critical intervention points for training and supervision

4. **Collaborative Intelligence**: Agent coordination through progress broadcasting

The system's strength lies in its ability to balance autonomous operation with human guidance, particularly through the integration of manually labeled data and pseudo-labeling techniques. The confidence/uncertainty thresholds suggest applications where safety and reliability are paramount, such as autonomous systems or medical diagnostics.

The diagram implies a Peircean investigative approach where the AI system continuously tests hypotheses (sub-goals) against reality (execution feedback), refining its understanding through iterative cycles of action and observation.
