## Diagram: Multi-Stage AI System Architecture with Human-in-the-Loop Supervision

### Overview

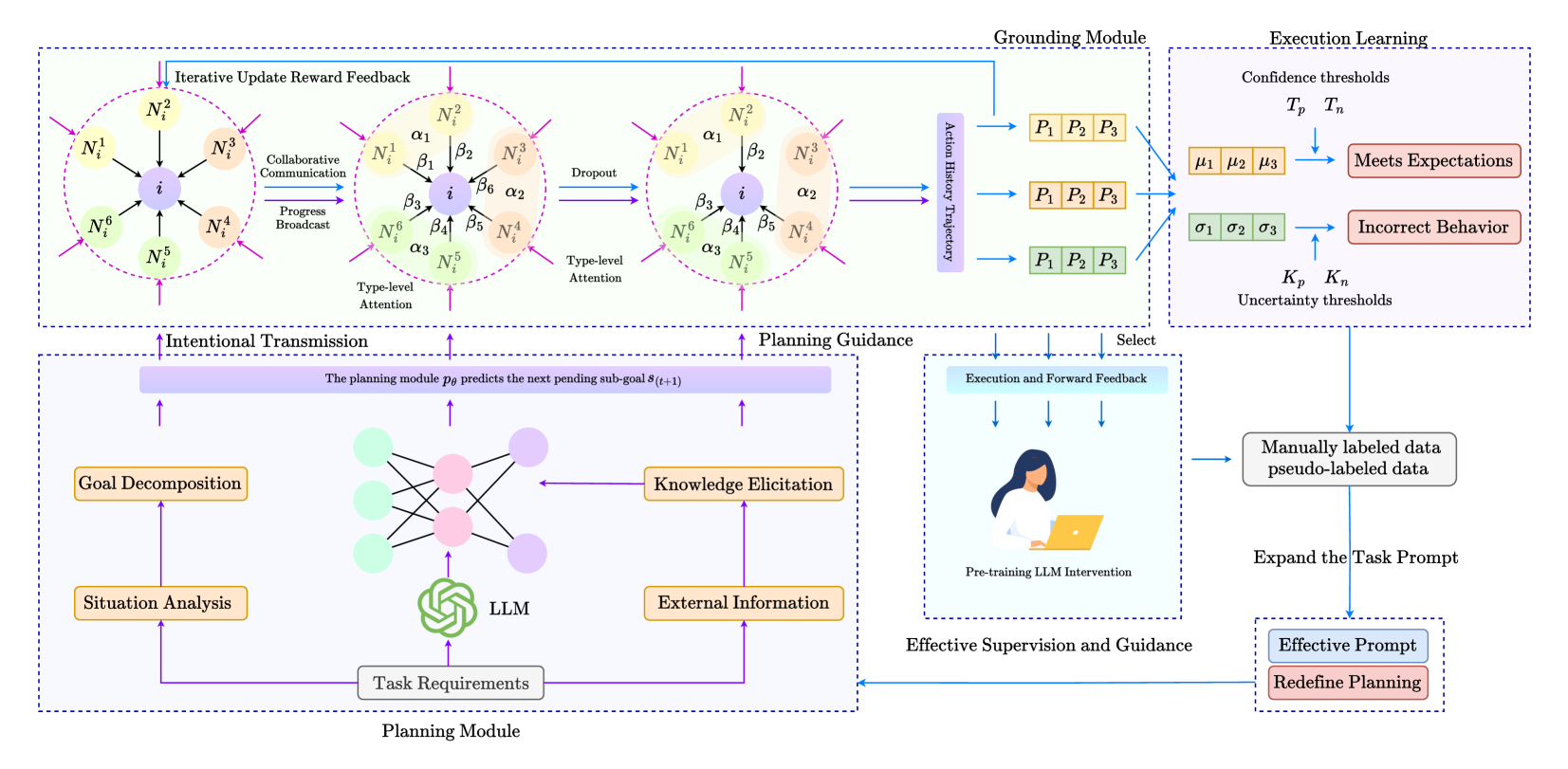

The diagram shows an AI system architecture made up of four interconnected modules: Planning Module, Grounding Module, Execution Learning, and Effective Supervision and Guidance. It emphasizes iterative feedback loops, human oversight, and adaptive learning gated by confidence and uncertainty thresholds.

### Components

1. **Planning Module** (Bottom Left)

- **Goal Decomposition**: Breaks down high-level goals into sub-goals

- **Situation Analysis**: Evaluates current state/context

- **Task Requirements**: Defines operational constraints

- **LLM (Large Language Model)**: Core processing unit

- **Knowledge Elicitation**: Extracts domain-specific knowledge

- **External Information**: Integrates real-world data

- **Intentional Transmission**: Predicts next sub-goal (s(t+1))

2. **Grounding Module** (Top Left)

- **Iterative Update Reward Feedback**: Adjusts performance metrics

- **Collaborative Communication**: Enables agent coordination

- **Progress Broadcast**: Shares status updates

- **Type-level Attention**: Focuses on critical elements

- **Dropout**: Introduces stochasticity for robustness

- **Action History Trajectory**: Tracks past decisions

3. **Execution Learning** (Top Right)

- **Confidence Thresholds (T_p, T_n)**: Determine action validity

- **Uncertainty Thresholds (K_p, K_n)**: Manage risk assessment

- **Meets Expectations**: Successful outcomes

- **Incorrect Behavior**: Failure cases

4. **Effective Supervision and Guidance** (Bottom Right)

- **Pre-training LLM Intervention**: Human-guided model training

- **Manually labeled data**: Ground truth dataset

- **Pseudo-labeled data**: Automated annotations

- **Expand the Task Prompt**: Enhances task understanding

- **Effective Prompt**: Optimized input formulation

- **Redefine Planning**: Iterative strategy adjustment
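The component list names Dropout and a pair of uncertainty thresholds, but it does not say how uncertainty is measured. One common approach, assumed here and not confirmed by the diagram, is to run several stochastic passes and treat the spread of the scores as the uncertainty. The sketch below illustrates that idea; `estimate_confidence` and `make_noisy_predictor` are hypothetical names, and the noisy predictor is only a stand-in for a real model evaluated with dropout enabled.

```python
import random
import statistics
from typing import Callable, Tuple


def estimate_confidence(predict: Callable[[], float], passes: int = 20) -> Tuple[float, float]:
    """Return (mean confidence, uncertainty) over repeated stochastic passes.

    Uncertainty is the population standard deviation of the sampled scores.
    This is an assumed definition, not one taken from the diagram.
    """
    samples = [predict() for _ in range(passes)]
    return statistics.mean(samples), statistics.pstdev(samples)


def make_noisy_predictor(base: float, noise: float, seed: int = 0) -> Callable[[], float]:
    """Hypothetical stand-in for a model evaluated with dropout left on."""
    rng = random.Random(seed)

    def predict() -> float:
        # Clamp to [0, 1] so the result still reads as a confidence score.
        return min(1.0, max(0.0, base + rng.uniform(-noise, noise)))

    return predict
```

The resulting pair could then be compared against T_p/T_n and K_p/K_n, whatever exact semantics the original system assigns to them.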

### Detailed Analysis

- **Flow Direction**:

  - Planning → Grounding → Execution → Supervision

  - Feedback loops connect Execution Learning back to Planning Module

  - Human intervention occurs at multiple stages (pre-training, supervision)

- **Color Coding**:

  - Blue: Intentional Transmission/Planning Guidance

  - Purple: Collaborative Communication/Action History

  - Green: Type-level Attention/Effective Supervision

  - Orange: Knowledge Elicitation/External Information

- **Key Connections**:

  - Planning Module's LLM output feeds into Grounding Module's Type-level Attention

  - Execution Learning's confidence thresholds influence Planning Module's sub-goal prediction

  - Human supervision directly impacts all modules through data labeling and prompt engineering
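The flow described above (Planning → Grounding → Execution → Supervision, with a feedback edge back to Planning) can be sketched as a simple control loop. This is a minimal illustration under stated assumptions: every name here (`LoopState`, `plan`, `ground`, `supervise`, `run`) is hypothetical, the `execute` callback stands in for whatever produces a confidence score, and a single threshold replaces the diagram's four thresholds for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LoopState:
    goal: str
    prompt: str
    history: List[str] = field(default_factory=list)  # action history trajectory
    revisions: int = 0  # how often supervision redefined the plan


def plan(state: LoopState) -> str:
    """Planning: propose the next sub-goal from the prompt and history."""
    return f"{state.prompt}: sub-goal {len(state.history) + 1}"


def ground(sub_goal: str, state: LoopState) -> str:
    """Grounding: turn the sub-goal into a concrete action and record it."""
    action = f"do[{sub_goal}]"
    state.history.append(action)
    return action


def supervise(state: LoopState) -> None:
    """Supervision: expand the task prompt so the next plan differs."""
    state.prompt = f"{state.prompt} (expanded)"
    state.revisions += 1


def run(state: LoopState, execute: Callable[[str], float],
        threshold: float, max_steps: int) -> LoopState:
    for _ in range(max_steps):
        action = ground(plan(state), state)
        confidence = execute(action)  # Execution: assumed score in [0, 1]
        if confidence < threshold:
            supervise(state)  # feedback edge back to planning
    return state
```

Keeping each stage as a separate function mirrors the modular layout of the diagram; the only shared object is the state that carries the prompt and the action history between stages.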

### Key Observations

1. **Iterative Nature**: The system emphasizes continuous improvement through feedback loops

2. **Human-AI Collaboration**: Multiple points of human intervention (pre-training, supervision, prompt engineering)

3. **Risk Management**: Explicit confidence/uncertainty thresholds suggest safety-critical applications

4. **Modular Design**: Clear separation of concerns between planning, grounding, execution, and supervision

### Interpretation

This architecture targets complex, dynamic environments that require:

1. **Adaptive Planning**: Continuous sub-goal prediction and adjustment

2. **Robust Execution**: Confidence-based decision making with uncertainty quantification

3. **Human Oversight**: Critical intervention points for training and supervision

4. **Collaborative Intelligence**: Agent coordination through progress broadcasting

The system's strength lies in balancing autonomous operation with human guidance, particularly by combining manually labeled data with pseudo-labeled data. The confidence and uncertainty thresholds point to applications where safety and reliability are paramount, such as autonomous systems or medical diagnostics.
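One plausible reading of the four thresholds, offered here as an assumption rather than something the diagram states, is that they gate which predictions become pseudo-labels: a prediction is accepted as a positive label when its confidence is at least T_p and its uncertainty at most K_p, as a negative label when its confidence is at most T_n and its uncertainty at most K_n, and is otherwise left for manual labeling. The sketch below encodes that rule; the `Thresholds` class, the `pseudo_label` function, and the default values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Thresholds:
    # Illustrative defaults only; the diagram gives no numeric values.
    t_p: float = 0.9   # minimum confidence for a positive pseudo-label
    t_n: float = 0.1   # maximum confidence for a negative pseudo-label
    k_p: float = 0.05  # maximum uncertainty for a positive pseudo-label
    k_n: float = 0.05  # maximum uncertainty for a negative pseudo-label


def pseudo_label(confidence: float, uncertainty: float,
                 th: Thresholds = Thresholds()) -> Optional[int]:
    """Return 1 or 0 for an accepted pseudo-label, or None to defer to a human."""
    if confidence >= th.t_p and uncertainty <= th.k_p:
        return 1
    if confidence <= th.t_n and uncertainty <= th.k_n:
        return 0
    return None  # ambiguous or too uncertain: route to manual labeling
```

Under this reading, items that return `None` are the natural candidates for the manually labeled set, which is one way the two data sources could complement each other.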

The diagram implies a Peircean model of inquiry: the system treats each sub-goal as a hypothesis, tests it against execution feedback, and refines its plan through repeated cycles of action and observation.