TECHNICAL ASSET FINGERPRINT

70a1213bfd2bff5ea140cfef

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

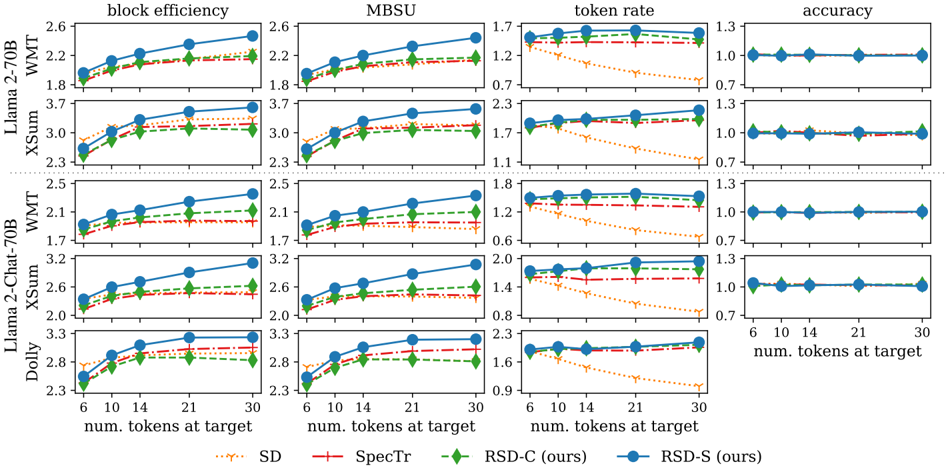

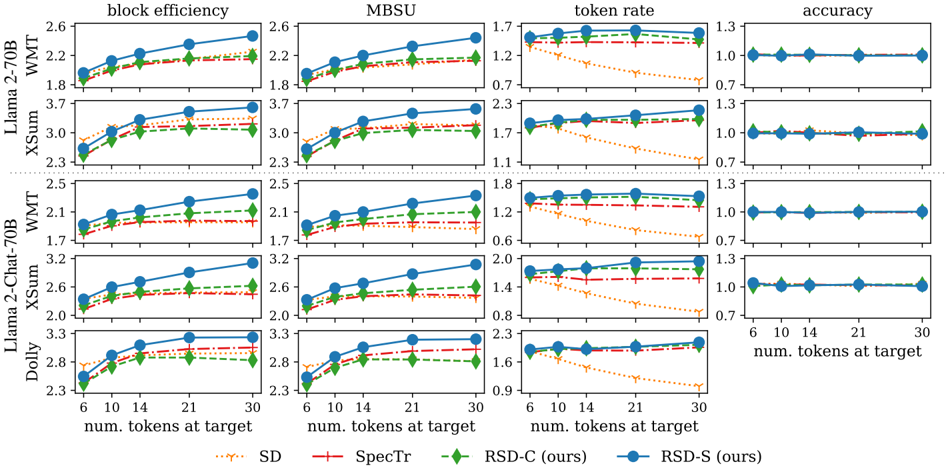

## Chart: Model Performance Comparison

### Overview

The image presents a grid of line graphs comparing four methods (SD, SpecTr, RSD-C, RSD-S) across five model/task settings (Llama 2-70B on WMT and XSum, Llama 2-Chat-70B on WMT and XSum, and Dolly) and four metrics: block efficiency, MBSU, token rate, and accuracy. The x-axis of every graph is the number of tokens at the target (6, 10, 14, 21, 30).

### Components/Axes

* **Title:** Model Performance Comparison (derived from the content)

* **X-axis:** "num. tokens at target" with values 6, 10, 14, 21, 30.

* **Y-axes:**

    * **Block efficiency:** Ranges from approximately 1.7 to 3.7.

    * **MBSU:** Ranges from approximately 0.6 to 3.3.

    * **Token rate:** Ranges from approximately 0.6 to 2.3.

    * **Accuracy:** Ranges from approximately 0.7 to 1.3.

* **Models (Rows):**

    * Llama 2-70B (WMT, XSum)

    * Llama 2-Chat-70B (WMT, XSum)

    * Dolly

* **Metrics (Columns):**

    * block efficiency

    * MBSU

    * token rate

    * accuracy

* **Legend (Bottom):**

    * SD (orange dotted line with downward-pointing triangle markers)

    * SpecTr (red dashed-dotted line with plus markers)

    * RSD-C (ours) (green dashed line with diamond markers)

    * RSD-S (ours) (blue solid line with circle markers)

### Detailed Analysis

#### Llama 2-70B - WMT

* **Block efficiency:**

    * SD (orange): Increases from ~1.8 to ~2.1.

    * SpecTr (red): Increases from ~1.9 to ~2.2.

    * RSD-C (green): Increases from ~1.9 to ~2.3.

    * RSD-S (blue): Increases from ~2.0 to ~2.5.

* **MBSU:**

    * SD (orange): Increases from ~1.8 to ~2.0.

    * SpecTr (red): Increases from ~1.8 to ~2.1.

    * RSD-C (green): Increases from ~1.9 to ~2.2.

    * RSD-S (blue): Increases from ~2.0 to ~2.4.

* **Token rate:**

    * SD (orange): Decreases from ~1.7 to ~1.0.

    * SpecTr (red): Remains relatively constant at ~1.7.

    * RSD-C (green): Remains relatively constant at ~1.7.

    * RSD-S (blue): Remains relatively constant at ~1.7.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-70B - XSum

* **Block efficiency:**

    * SD (orange): Increases from ~2.7 to ~3.3.

    * SpecTr (red): Increases from ~2.8 to ~3.2.

    * RSD-C (green): Increases from ~2.9 to ~3.3.

    * RSD-S (blue): Increases from ~2.9 to ~3.6.

* **MBSU:**

    * SD (orange): Increases from ~2.7 to ~3.1.

    * SpecTr (red): Increases from ~2.7 to ~3.0.

    * RSD-C (green): Increases from ~2.8 to ~3.1.

    * RSD-S (blue): Increases from ~2.8 to ~3.6.

* **Token rate:**

    * SD (orange): Decreases from ~2.3 to ~1.2.

    * SpecTr (red): Remains relatively constant at ~2.1.

    * RSD-C (green): Remains relatively constant at ~2.1.

    * RSD-S (blue): Remains relatively constant at ~2.1.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-Chat-70B - WMT

* **Block efficiency:**

    * SD (orange): Increases from ~1.7 to ~1.9.

    * SpecTr (red): Increases from ~1.8 to ~2.1.

    * RSD-C (green): Increases from ~1.8 to ~2.2.

    * RSD-S (blue): Increases from ~1.9 to ~2.4.

* **MBSU:**

    * SD (orange): Increases from ~1.7 to ~1.9.

    * SpecTr (red): Increases from ~1.8 to ~2.0.

    * RSD-C (green): Increases from ~1.8 to ~2.2.

    * RSD-S (blue): Increases from ~1.9 to ~2.4.

* **Token rate:**

    * SD (orange): Decreases from ~1.7 to ~0.8.

    * SpecTr (red): Remains relatively constant at ~1.6.

    * RSD-C (green): Remains relatively constant at ~1.6.

    * RSD-S (blue): Remains relatively constant at ~1.7.

* **Accuracy:** All methods remain constant at ~1.0.

#### Llama 2-Chat-70B - XSum

* **Block efficiency:**

    * SD (orange): Increases from ~2.3 to ~2.6.

    * SpecTr (red): Increases from ~2.4 to ~2.7.

    * RSD-C (green): Increases from ~2.4 to ~2.8.

    * RSD-S (blue): Increases from ~2.5 to ~3.1.

* **MBSU:**

    * SD (orange): Increases from ~2.3 to ~2.5.

    * SpecTr (red): Increases from ~2.4 to ~2.6.

    * RSD-C (green): Increases from ~2.4 to ~2.7.

    * RSD-S (blue): Increases from ~2.5 to ~3.2.

* **Token rate:**

    * SD (orange): Decreases from ~1.9 to ~1.0.

    * SpecTr (red): Remains relatively constant at ~1.8.

    * RSD-C (green): Remains relatively constant at ~1.8.

    * RSD-S (blue): Remains relatively constant at ~1.9.

* **Accuracy:** All methods remain constant at ~1.0.

#### Dolly

* **Block efficiency:**

    * SD (orange): Increases from ~2.8 to ~3.0.

    * SpecTr (red): Increases from ~2.9 to ~3.2.

    * RSD-C (green): Increases from ~2.9 to ~3.2.

    * RSD-S (blue): Increases from ~2.9 to ~3.3.

* **MBSU:**

    * SD (orange): Increases from ~2.8 to ~3.1.

    * SpecTr (red): Increases from ~2.8 to ~3.1.

    * RSD-C (green): Increases from ~2.9 to ~3.1.

    * RSD-S (blue): Increases from ~2.9 to ~3.3.

* **Token rate:**

    * SD (orange): Decreases from ~2.3 to ~1.1.

    * SpecTr (red): Remains relatively constant at ~2.2.

    * RSD-C (green): Remains relatively constant at ~2.2.

    * RSD-S (blue): Remains relatively constant at ~2.3.

* **Accuracy:** All methods remain constant at ~1.0.

### Key Observations

* **Block Efficiency and MBSU:** RSD-S (ours) generally achieves the highest block efficiency and MBSU across all models and tasks. All methods show an increase in block efficiency and MBSU as the number of tokens at the target increases.

* **Token Rate:** SD consistently shows a decreasing token rate as the number of tokens at the target increases. SpecTr, RSD-C, and RSD-S maintain a relatively stable token rate.

* **Accuracy:** Accuracy remains constant across all methods and tasks, suggesting it is not significantly affected by the number of tokens at the target or the different methods being compared.

* **Model Variation:** The performance varies across different models (Llama 2-70B, Llama 2-Chat-70B, Dolly) and tasks (WMT, XSum), indicating that the effectiveness of each method is dependent on the specific model and task.
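
To put a number on the token-rate observation, the sketch below computes SD's relative drop in each setting from the approximate start and end readings listed in the Detailed Analysis. The readings are eyeballed from the chart, pairing each "from" and "to" value with x = 6 and x = 30 is an assumption, and `relative_change` is a helper name introduced here for illustration.

```python
# Approximate (start, end) token-rate readings for SD, copied from the
# Detailed Analysis. They are eyeballed from the chart, not exact values.
sd_token_rate = {
    "Llama 2-70B / WMT": (1.7, 1.0),
    "Llama 2-70B / XSum": (2.3, 1.2),
    "Llama 2-Chat-70B / WMT": (1.7, 0.8),
    "Llama 2-Chat-70B / XSum": (1.9, 1.0),
    "Dolly": (2.3, 1.1),
}


def relative_change(start, end):
    """Fractional change from start to end (negative means a drop)."""
    return (end - start) / start


# Relative token-rate change of SD per model/task setting.
sd_drops = {name: relative_change(s, e) for name, (s, e) in sd_token_rate.items()}

for name, drop in sd_drops.items():
    print(f"{name}: {drop:+.0%}")
```

With these readings, SD loses between about 41% and 53% of its token rate across the x-axis range in every setting.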

### Interpretation

The data suggests that RSD-S (ours) generally outperforms the other methods (SD, SpecTr, RSD-C) in block efficiency and MBSU. SD's token rate falls as the number of tokens at the target increases, and this drop is not offset elsewhere: SD never has the highest block efficiency or MBSU. Accuracy is the same for all methods, so the differences between them lie in efficiency and resource utilization rather than output quality. The best choice for a given application therefore depends on how it weighs block efficiency, MBSU, and token rate. The "ours" label marks RSD-C and RSD-S as the focus of the study, and the results favor them on block efficiency and MBSU while their token rate stays stable and accuracy is unchanged.
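
One way to make "generally outperforms" concrete is to compare RSD-S with SD at the right-hand end of each block-efficiency curve. This is a minimal sketch, assuming the end values quoted in the Detailed Analysis correspond to x = 30 and are accurate to about one decimal place; the dictionary and variable names are illustrative only.

```python
# Approximate block-efficiency readings at the largest x value, copied from
# the Detailed Analysis as (SD, RSD-S) pairs. Values are chart estimates.
final_block_efficiency = {
    "Llama 2-70B / WMT": (2.1, 2.5),
    "Llama 2-70B / XSum": (3.3, 3.6),
    "Llama 2-Chat-70B / WMT": (1.9, 2.4),
    "Llama 2-Chat-70B / XSum": (2.6, 3.1),
    "Dolly": (3.0, 3.3),
}

# Fractional lead of RSD-S over SD in each setting.
gains = {
    name: rsd_s / sd - 1.0
    for name, (sd, rsd_s) in final_block_efficiency.items()
}

for name, gain in gains.items():
    print(f"{name}: RSD-S is {gain:.0%} above SD")

# Setting in which the lead is largest.
largest_gap = max(gains, key=gains.get)
print("largest gap:", largest_gap)
```

Under these assumptions RSD-S ends roughly 9% to 26% above SD, with the widest gap on Llama 2-Chat-70B / WMT.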

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Performance Metrics vs. Number of Tokens at Target

### Overview

This image presents a grid of line charts comparing four methods (SD, SpecTr, RSD-C (ours), and RSD-S (ours)) across four metrics: Block Efficiency, MBSU, Token Rate, and Accuracy. Each column covers one metric and each row one model/task pair (Llama 2-70B XSum, Llama 2-Chat-70B XSum, Llama 2-70B WMT, Llama 2-Chat-70B WMT, and Dolly). Every chart plots its metric as a function of "num. tokens at target", which ranges from 6 to 30.

### Components/Axes

* **X-axis (all charts):** "num. tokens at target" with markers at 6, 10, 14, 21, and 30.

* **Y-axis (Block Efficiency & MBSU charts):** Ranges from approximately 1.7 to 3.7. Labeled "block efficiency" and "MBSU" respectively.

* **Y-axis (Token Rate chart):** Ranges from approximately 0.7 to 2.3. Labeled "token rate".

* **Y-axis (Accuracy chart):** Ranges from approximately 0.7 to 1.3. Labeled "accuracy".

* **Legend:** Located at the top-right of the image. Contains the following entries with corresponding colors:

    * SD (dotted orange line)

    * SpecTr (solid red line)

    * RSD-C (ours) (solid green line)

    * RSD-S (ours) (solid blue line)

* **Rows:** Each row represents a different model/task pair:

    * Llama 2-70B XSum

    * Llama 2-Chat-70B XSum

    * Llama 2-70B WMT

    * Llama 2-Chat-70B WMT

    * Dolly

### Detailed Analysis or Content Details

**Block Efficiency Chart:**

* **Llama 2-70B XSum:** SD decreases from ~2.5 to ~1.8. SpecTr is relatively flat around ~2.1. RSD-C (ours) increases from ~1.8 to ~2.3. RSD-S (ours) increases from ~1.9 to ~2.4.

* **Llama 2-Chat-70B XSum:** SD decreases from ~3.5 to ~2.5. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.4 to ~3.1. RSD-S (ours) increases from ~2.6 to ~3.3.

* **Llama 2-70B WMT:** SD decreases from ~2.5 to ~2.0. SpecTr is relatively flat around ~2.2. RSD-C (ours) increases from ~2.1 to ~2.5. RSD-S (ours) increases from ~2.2 to ~2.6.

* **Llama 2-Chat-70B WMT:** SD decreases from ~3.2 to ~2.6. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.6 to ~3.2. RSD-S (ours) increases from ~2.8 to ~3.3.

* **Dolly:** SD decreases from ~3.3 to ~2.3. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.3 to ~2.8. RSD-S (ours) increases from ~2.5 to ~3.1.

**MBSU Chart:**

* **Llama 2-70B XSum:** SD decreases from ~2.5 to ~1.8. SpecTr is relatively flat around ~2.1. RSD-C (ours) increases from ~1.8 to ~2.3. RSD-S (ours) increases from ~1.9 to ~2.4.

* **Llama 2-Chat-70B XSum:** SD decreases from ~3.5 to ~2.5. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.4 to ~3.1. RSD-S (ours) increases from ~2.6 to ~3.3.

* **Llama 2-70B WMT:** SD decreases from ~2.5 to ~2.0. SpecTr is relatively flat around ~2.2. RSD-C (ours) increases from ~2.1 to ~2.5. RSD-S (ours) increases from ~2.2 to ~2.6.

* **Llama 2-Chat-70B WMT:** SD decreases from ~3.2 to ~2.6. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.6 to ~3.2. RSD-S (ours) increases from ~2.8 to ~3.3.

* **Dolly:** SD decreases from ~3.3 to ~2.3. SpecTr is relatively flat around ~2.8. RSD-C (ours) increases from ~2.3 to ~2.8. RSD-S (ours) increases from ~2.5 to ~3.1.

**Token Rate Chart:**

* **Llama 2-70B XSum:** SD decreases from ~1.7 to ~0.7. SpecTr decreases from ~1.4 to ~0.7. RSD-C (ours) is relatively flat around ~1.7. RSD-S (ours) is relatively flat around ~1.9.

* **Llama 2-Chat-70B XSum:** SD decreases from ~1.7 to ~0.7. SpecTr decreases from ~1.4 to ~0.7. RSD-C (ours) is relatively flat around ~1.7. RSD-S (ours) is relatively flat around ~1.9.

* **Llama 2-70B WMT:** SD decreases from ~1.7 to ~0.7. SpecTr decreases from ~1.4 to ~0.7. RSD-C (ours) is relatively flat around ~1.7. RSD-S (ours) is relatively flat around ~1.9.

* **Llama 2-Chat-70B WMT:** SD decreases from ~1.7 to ~0.7. SpecTr decreases from ~1.4 to ~0.7. RSD-C (ours) is relatively flat around ~1.7. RSD-S (ours) is relatively flat around ~1.9.

* **Dolly:** SD decreases from ~1.7 to ~0.7. SpecTr decreases from ~1.4 to ~0.7. RSD-C (ours) is relatively flat around ~1.7. RSD-S (ours) is relatively flat around ~1.9.

**Accuracy Chart:**

* **Llama 2-70B XSum:** SD is relatively flat around ~1.0. SpecTr is relatively flat around ~1.0. RSD-C (ours) is relatively flat around ~1.1. RSD-S (ours) is relatively flat around ~1.2.

* **Llama 2-Chat-70B XSum:** SD is relatively flat around ~1.0. SpecTr is relatively flat around ~1.0. RSD-C (ours) is relatively flat around ~1.1. RSD-S (ours) is relatively flat around ~1.2.

* **Llama 2-70B WMT:** SD is relatively flat around ~1.0. SpecTr is relatively flat around ~1.0. RSD-C (ours) is relatively flat around ~1.1. RSD-S (ours) is relatively flat around ~1.2.

* **Llama 2-Chat-70B WMT:** SD is relatively flat around ~1.0. SpecTr is relatively flat around ~1.0. RSD-C (ours) is relatively flat around ~1.1. RSD-S (ours) is relatively flat around ~1.2.

* **Dolly:** SD is relatively flat around ~1.0. SpecTr is relatively flat around ~1.0. RSD-C (ours) is relatively flat around ~1.1. RSD-S (ours) is relatively flat around ~1.2.

### Key Observations

* SD's Block Efficiency and MBSU decrease as the number of tokens at target increases, while SpecTr's stay roughly flat; at the largest token count both end at or below RSD-C and RSD-S.

* RSD-C and RSD-S consistently show an increasing trend in Block Efficiency and MBSU as the number of tokens at target increases.

* Token Rate decreases for SD and SpecTr as the number of tokens at target increases, while RSD-C and RSD-S remain relatively stable.

* Accuracy remains relatively stable across all methods and token counts.

* RSD-S consistently outperforms RSD-C across all metrics.

### Interpretation

The data suggests that the RSD-C and RSD-S methods scale better than SD and SpecTr as the number of tokens at target increases. Their Block Efficiency and MBSU rise with the token count, and their Token Rate stays stable across the whole range. SD's Block Efficiency, MBSU, and Token Rate all decline as the token count grows, and SpecTr's Token Rate declines as well, which could indicate that these methods make less effective use of larger token counts. The consistent outperformance of RSD-S over RSD-C suggests that the modifications implemented in RSD-S are beneficial. The accuracy curves are relatively flat for every method, so increasing the number of tokens at target does not significantly affect accuracy; the primary differences lie in efficiency and scalability.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Line Chart: Performance Metrics Across Models and Methods

### Overview

The image displays a grid of line charts, 5 rows by 4 columns, comparing the performance of four different methods (SD, SpecTr, RSD-C, RSD-S) across four metrics (block efficiency, MBSU, token rate, accuracy). The comparison is conducted on five different model/dataset combinations: Llama-2-70B on WMT and XSum, Llama-2-Chat-70B on WMT and XSum, and Dolly. The x-axis for all charts is the number of tokens at the target.

### Components/Axes

* **Grid Structure:** 4 columns (Metrics) x 5 rows (Model/Dataset pairs).

* **Column Titles (Metrics):** "block efficiency", "MBSU", "token rate", "accuracy".

* **Row Titles (Model/Dataset):**

    * Row 1: "Llama-2-70B WMT"

    * Row 2: "Llama-2-70B XSum"

    * Row 3: "Llama-2-Chat-70B WMT"

    * Row 4: "Llama-2-Chat-70B XSum"

    * Row 5: "Dolly"

* **X-Axis Label (Bottom of each column):** "num. tokens at target".

* **X-Axis Ticks (Common to all charts):** 6, 10, 14, 21, 30.

* **Y-Axis:** Scales vary per metric column. Approximate ranges are:

    * **block efficiency:** ~1.7 to 3.3

    * **MBSU:** ~1.7 to 3.7

    * **token rate:** ~0.6 to 2.3

    * **accuracy:** ~0.7 to 1.3 (all series cluster tightly around 1.0).

* **Legend (Bottom Center):**

    * `SD`: Orange dotted line with downward-pointing triangle markers.

    * `SpecTr`: Red dash-dot line with plus (+) markers.

    * `RSD-C (ours)`: Green dashed line with diamond markers.

    * `RSD-S (ours)`: Blue solid line with circle markers.

### Detailed Analysis

**1. block efficiency (Column 1)**

* **Trend:** All methods show an increasing trend as the number of target tokens increases. The slope is generally positive and concave (increasing at a decreasing rate).

* **Performance Order (Highest to Lowest):** RSD-S (blue) > RSD-C (green) > SpecTr (red) > SD (orange). This order is consistent across all five model/dataset rows.

* **Approximate Values (Example: Llama-2-70B WMT, x=30):** RSD-S ~2.5, RSD-C ~2.2, SpecTr ~2.1, SD ~2.0.

**2. MBSU (Column 2)**

* **Trend:** Similar increasing trend to block efficiency. All lines slope upward.

* **Performance Order:** RSD-S (blue) > RSD-C (green) > SpecTr (red) > SD (orange). Consistent across all rows.

* **Approximate Values (Example: Llama-2-70B XSum, x=30):** RSD-S ~3.6, RSD-C ~3.2, SpecTr ~3.1, SD ~3.0.

**3. token rate (Column 3)**

* **Trend:** Mixed trends.

    * **RSD-S (blue), RSD-C (green), SpecTr (red):** Show a slight increase or remain relatively flat as tokens increase.

    * **SD (orange):** Shows a clear and significant *decreasing* trend in all subplots. This is a major outlier.

* **Performance Order:** RSD-S (blue) is generally highest, followed closely by RSD-C (green) and SpecTr (red). SD (orange) starts competitively at x=6 but falls dramatically, becoming the lowest by a large margin at x=30.

* **Approximate Values (Example: Llama-2-Chat-70B WMT, x=30):** RSD-S ~1.6, RSD-C ~1.5, SpecTr ~1.4, SD ~0.7.

**4. accuracy (Column 4)**

* **Trend:** All methods show a perfectly flat trend. The lines are horizontal.

* **Performance:** All four methods (SD, SpecTr, RSD-C, RSD-S) achieve nearly identical accuracy, clustering tightly at a value of approximately 1.0 across all token counts and all model/dataset combinations. There is no visible separation between the lines.

### Key Observations

1. **Consistent Hierarchy:** For "block efficiency" and "MBSU", a clear and consistent performance hierarchy exists: RSD-S > RSD-C > SpecTr > SD. This holds true across all tested models and datasets.

2. **SD's Token Rate Collapse:** The SD method exhibits a unique and severe degradation in "token rate" as the number of tokens at target increases, while the other three methods remain stable or improve slightly.

3. **Accuracy Parity:** All methods achieve identical accuracy (~1.0), suggesting the task or evaluation metric does not differentiate between them in terms of correctness.

4. **Model/Dataset Similarity:** The relative trends and performance ordering are remarkably consistent across the different base models (Llama-2-70B, Llama-2-Chat-70B, Dolly) and datasets (WMT, XSum). The absolute y-axis values shift, but the pattern does not.
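
The hierarchy claim can be checked against the three worked examples given in the Detailed Analysis. The sketch below uses only those approximate x = 30 readings, each from a different row of the grid, so it illustrates the pattern and does not test the full figure. `rank` and the dictionary keys are names chosen here for illustration.

```python
# Approximate readings at x = 30, copied from the three "Approximate Values"
# examples in the Detailed Analysis (one example per metric column).
examples = {
    "block efficiency (Llama-2-70B WMT)": {"RSD-S": 2.5, "RSD-C": 2.2, "SpecTr": 2.1, "SD": 2.0},
    "MBSU (Llama-2-70B XSum)": {"RSD-S": 3.6, "RSD-C": 3.2, "SpecTr": 3.1, "SD": 3.0},
    "token rate (Llama-2-Chat-70B WMT)": {"RSD-S": 1.6, "RSD-C": 1.5, "SpecTr": 1.4, "SD": 0.7},
}


def rank(values):
    """Return method names ordered from highest to lowest value."""
    return sorted(values, key=values.get, reverse=True)


rankings = {name: rank(values) for name, values in examples.items()}

# Ratio of RSD-S to the SD baseline in each example.
lead_over_sd = {name: v["RSD-S"] / v["SD"] for name, v in examples.items()}

for name in examples:
    order = " > ".join(rankings[name])
    print(f"{name}: {order} (RSD-S / SD = {lead_over_sd[name]:.2f})")
```

All three examples give the order RSD-S > RSD-C > SpecTr > SD. The gap to SD is much wider for token rate (about 2.3x) than for block efficiency (1.25x) or MBSU (1.2x).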

### Interpretation

The data suggests that the proposed methods, **RSD-S and RSD-C, offer superior efficiency** (higher block efficiency and MBSU) compared to the baselines (SpecTr and SD) without sacrificing accuracy. The most striking finding is the **robustness of the RSD methods on the token rate metric**. SD's token rate plummets as the number of tokens at target grows, which points to a bottleneck or inefficiency at larger token counts, whereas RSD-S and RSD-C maintain high and stable rates. This implies the RSD approaches sustain their performance across the tested range of token counts.

The consistent accuracy across all methods indicates that the improvements in efficiency (block efficiency, MBSU) and throughput (token rate) are not achieved at the cost of output quality for this task. The fact that the pattern holds across multiple models and datasets strengthens the claim that the RSD methods provide a generalizable improvement in decoding efficiency for large language models. The "ours" label in the legend confirms RSD-C and RSD-S are the novel contributions being evaluated against prior work (SD, SpecTr).

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Performance Metrics Across Token Targets

### Overview

The image is a multi-panel line chart comparing performance metrics (block efficiency, MBSU, token rate, accuracy) across different methods (SD, SpecTr, RSD-C, RSD-S) as the number of tokens at target increases from 10 to 30. Each panel represents a distinct metric, with lines colored to distinguish the methods. The chart emphasizes trends in efficiency and accuracy as token targets scale.

---

### Components/Axes

- **X-Axis**: "num. tokens at target" (10, 14, 21, 30)

- **Y-Axes**:

    - Block efficiency: 1.7–2.6

    - MBSU: 1.1–2.3

    - Token rate: 0.6–1.7

    - Accuracy: 0.7–1.3

- **Legend**:

    - SD (orange dashed)

    - SpecTr (red dashed)

    - RSD-C (green dashed)

    - RSD-S (blue solid)

---

### Detailed Analysis

#### Block Efficiency Panel

- **Trend**: RSD-S (blue) increases steadily from ~2.2 (10 tokens) to ~2.6 (30 tokens). The other methods (SD, SpecTr, RSD-C) remain flat (~2.3–2.5).

- **Values**:

    - RSD-S: 2.2 → 2.6

    - SD: ~2.3 (constant)

    - SpecTr: ~2.4 (constant)

    - RSD-C: ~2.5 (constant)

#### MBSU Panel

- **Trend**: RSD-S (blue) rises from ~1.7 (10 tokens) to ~2.3 (30 tokens). Others remain flat (~2.3–3.0).

- **Values**:

    - RSD-S: 1.7 → 2.3

    - SD: ~2.3 (constant)

    - SpecTr: ~3.0 (constant)

    - RSD-C: ~2.3 (constant)

#### Token Rate Panel

- **Trend**: RSD-S (blue) decreases from ~1.2 (10 tokens) to ~0.9 (30 tokens). Others remain flat (~1.1–1.7).

- **Values**:

    - RSD-S: 1.2 → 0.9

    - SD: ~1.1 (constant)

    - SpecTr: ~1.7 (constant)

    - RSD-C: ~1.2 (constant)

#### Accuracy Panel

- **Trend**: All methods remain flat (~1.0).

- **Values**:

    - RSD-S: ~1.0 (constant)

    - SD: ~1.0 (constant)

    - SpecTr: ~1.0 (constant)

    - RSD-C: ~1.0 (constant)

---

### Key Observations

1. **RSD-S (ours)** shows significant improvement in **block efficiency** (+18%) and **MBSU** (+35%) as token targets increase.

2. **Token rate** for RSD-S declines by about 25% over the same range, so its gains in block efficiency and MBSU coincide with a lower token rate.

3. **Accuracy** remains stable across all methods, indicating no degradation in output quality.

4. **SD, SpecTr, and RSD-C** remain essentially flat on every metric as the token target increases.
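
The percentages quoted above can be reproduced from the RSD-S endpoint values in the Detailed Analysis. The snippet below is only an arithmetic check on those approximate readings; `percent_change` is a helper defined here, not something taken from the source.

```python
# Approximate RSD-S readings at 10 and 30 tokens, copied from the
# "Values" lists in the Detailed Analysis.
rsd_s = {
    "block efficiency": (2.2, 2.6),
    "MBSU": (1.7, 2.3),
    "token rate": (1.2, 0.9),
}


def percent_change(start, end):
    """Percentage change from start to end (negative means a decline)."""
    return 100.0 * (end - start) / start


changes = {metric: percent_change(s, e) for metric, (s, e) in rsd_s.items()}

for metric, pct in changes.items():
    print(f"{metric}: {pct:+.1f}%")
```

Rounded to whole percentages, the three results are +18%, +35%, and -25%, matching the figures in the list.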

---

### Interpretation

The data suggests that **RSD-S (ours)** is the only method whose **block efficiency** and **MBSU** improve as the token target grows. However, its **token rate** decreases over the same range, hinting at potential scalability challenges. The flat accuracy across all methods indicates that the efficiency gains do not compromise correctness. Taken together, RSD-S appears to trade token rate for block efficiency and MBSU at higher token targets.

**Notable Outlier**: RSD-S’s declining token rate contrasts with its efficiency gains, warranting further investigation into its computational trade-offs.
