TECHNICAL ASSET FINGERPRINT

70cb8b493310545cdeb255eb

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

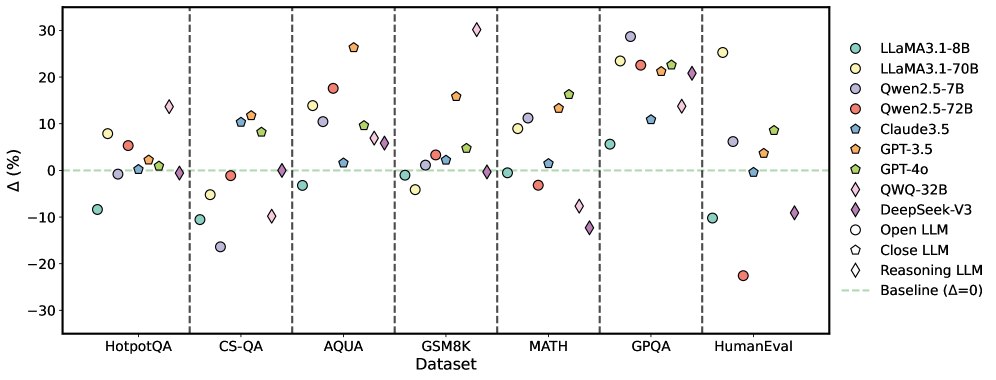

## Scatter Plot: Model Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) across different datasets. The y-axis represents the percentage difference (Δ) from a baseline, and the x-axis represents the datasets. Each model is represented by a unique color and marker.

### Components/Axes

* **X-axis:** "Dataset" with categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** "Δ (%)" with a numerical scale ranging from -30 to 30, with increments of 10.

* **Legend:** Located on the right side of the plot, mapping colors and markers to specific LLMs:

    * Light Blue Circle: LLaMA3.1-8B

    * Yellow Circle: LLaMA3.1-70B

    * Light Purple Circle: Qwen2.5-7B

    * Red Circle: Qwen2.5-72B

    * Dark Blue Pentagon: Claude3.5

    * Orange Pentagon: GPT-3.5

    * Green Pentagon: GPT-4o

    * Yellow Diamond: QWQ-32B

    * Dark Purple Diamond: DeepSeek-V3

    * White Circle: Open LLM

    * White Pentagon: Close LLM

    * White Diamond: Reasoning LLM

    * Dashed Light Green Line: Baseline (Δ=0)

* Vertical dashed lines separate the datasets.

### Detailed Analysis

**HotpotQA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately -8%

* LLaMA3.1-70B (Yellow Circle): Approximately 8%

* Qwen2.5-7B (Light Purple Circle): Approximately 0%

* Qwen2.5-72B (Red Circle): Approximately 2%

* Claude3.5 (Dark Blue Pentagon): Approximately 5%

* GPT-3.5 (Orange Pentagon): Approximately 10%

* GPT-4o (Green Pentagon): Approximately 5%

* QWQ-32B (Yellow Diamond): Approximately -2%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -20%

* Open LLM (White Circle): Approximately -10%

* Close LLM (White Pentagon): Approximately 0%

* Reasoning LLM (White Diamond): Approximately -25%

**CS-QA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately -10%

* LLaMA3.1-70B (Yellow Circle): Approximately -5%

* Qwen2.5-7B (Light Purple Circle): Approximately 0%

* Qwen2.5-72B (Red Circle): Approximately -2%

* Claude3.5 (Dark Blue Pentagon): Approximately 0%

* GPT-3.5 (Orange Pentagon): Approximately 0%

* GPT-4o (Green Pentagon): Approximately 0%

* QWQ-32B (Yellow Diamond): Approximately 1%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -10%

* Open LLM (White Circle): Approximately -15%

* Close LLM (White Pentagon): Approximately -2%

* Reasoning LLM (White Diamond): Approximately 1%

**AQUA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 2%

* LLaMA3.1-70B (Yellow Circle): Approximately 8%

* Qwen2.5-7B (Light Purple Circle): Approximately 10%

* Qwen2.5-72B (Red Circle): Approximately 18%

* Claude3.5 (Dark Blue Pentagon): Approximately 15%

* GPT-3.5 (Orange Pentagon): Approximately 28%

* GPT-4o (Green Pentagon): Approximately 12%

* QWQ-32B (Yellow Diamond): Approximately 2%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -8%

* Open LLM (White Circle): Approximately 1%

* Close LLM (White Pentagon): Approximately 10%

* Reasoning LLM (White Diamond): Approximately 12%

**GSM8K Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 2%

* LLaMA3.1-70B (Yellow Circle): Approximately 0%

* Qwen2.5-7B (Light Purple Circle): Approximately 0%

* Qwen2.5-72B (Red Circle): Approximately 0%

* Claude3.5 (Dark Blue Pentagon): Approximately 5%

* GPT-3.5 (Orange Pentagon): Approximately 17%

* GPT-4o (Green Pentagon): Approximately 2%

* QWQ-32B (Yellow Diamond): Approximately 0%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 0%

* Open LLM (White Circle): Approximately -5%

* Close LLM (White Pentagon): Approximately 0%

* Reasoning LLM (White Diamond): Approximately -2%

**MATH Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 10%

* LLaMA3.1-70B (Yellow Circle): Approximately 12%

* Qwen2.5-7B (Light Purple Circle): Approximately 10%

* Qwen2.5-72B (Red Circle): Approximately 10%

* Claude3.5 (Dark Blue Pentagon): Approximately 10%

* GPT-3.5 (Orange Pentagon): Approximately 12%

* GPT-4o (Green Pentagon): Approximately 10%

* QWQ-32B (Yellow Diamond): Approximately 12%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -12%

* Open LLM (White Circle): Approximately 0%

* Close LLM (White Pentagon): Approximately 0%

* Reasoning LLM (White Diamond): Approximately 15%

**GPQA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 22%

* LLaMA3.1-70B (Yellow Circle): Approximately 25%

* Qwen2.5-7B (Light Purple Circle): Approximately 20%

* Qwen2.5-72B (Red Circle): Approximately 22%

* Claude3.5 (Dark Blue Pentagon): Approximately 5%

* GPT-3.5 (Orange Pentagon): Approximately 28%

* GPT-4o (Green Pentagon): Approximately 25%

* QWQ-32B (Yellow Diamond): Approximately 10%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 12%

* Open LLM (White Circle): Approximately 2%

* Close LLM (White Pentagon): Approximately 2%

* Reasoning LLM (White Diamond): Approximately 10%

**HumanEval Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately -10%

* LLaMA3.1-70B (Yellow Circle): Approximately 28%

* Qwen2.5-7B (Light Purple Circle): Approximately 10%

* Qwen2.5-72B (Red Circle): Approximately 2%

* Claude3.5 (Dark Blue Pentagon): Approximately 10%

* GPT-3.5 (Orange Pentagon): Approximately 10%

* GPT-4o (Green Pentagon): Approximately 10%

* QWQ-32B (Yellow Diamond): Approximately 10%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 10%

* Open LLM (White Circle): Approximately -22%

* Close LLM (White Pentagon): Approximately 0%

* Reasoning LLM (White Diamond): Approximately 10%

### Key Observations

* The performance of the models varies significantly across different datasets.

* GPT-3.5 sits at or above the baseline on every dataset, peaking at approximately 28% on AQUA and GPQA.

* DeepSeek-V3 ranges from approximately -20% (HotpotQA) to 12% (GPQA) and falls below the baseline on four of the seven datasets.

* The "Reasoning LLM" (White Diamond) ranges from approximately -25% (HotpotQA) to 15% (MATH).
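
The ranges behind these observations can be recomputed from the approximate readings listed under Detailed Analysis. The following is a minimal Python sketch; the names `readings` and `summarize` are illustrative, and the numbers are the estimates above, not exact values from the plot.

```python
# Approximate Δ (%) readings copied from the Detailed Analysis above,
# ordered HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

readings = {
    "GPT-3.5": [10, 0, 28, 17, 12, 28, 10],
    "DeepSeek-V3": [-20, -10, -8, 0, -12, 12, 10],
    "Reasoning LLM": [-25, 1, 12, -2, 15, 10, 10],
}


def summarize(values):
    """Return (minimum, maximum, number of datasets below the baseline)."""
    below = sum(1 for v in values if v < 0)
    return min(values), max(values), below


for model, values in readings.items():
    lo, hi, below = summarize(values)
    print(f"{model}: min {lo:+d}%, max {hi:+d}%, "
          f"below baseline on {below} of {len(values)} datasets")
```

Counting values strictly below zero treats a reading of 0% as "at the baseline" rather than as underperformance.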

### Interpretation

The scatter plot provides a comparative analysis of various LLMs across different benchmark datasets. The percentage difference from the baseline indicates how much better or worse each model performs relative to a standard. The variability in performance across datasets suggests that different models are better suited for different types of tasks. For example, GPT-3.5 stays at or above the baseline on all seven datasets, which suggests a robust general-purpose capability. In contrast, the performance of DeepSeek-V3 and the "Reasoning LLM" fluctuates widely, suggesting they may be highly specialized or sensitive to the nature of the task. The plot highlights the importance of evaluating LLMs on a diverse set of benchmarks to understand their strengths and weaknesses.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

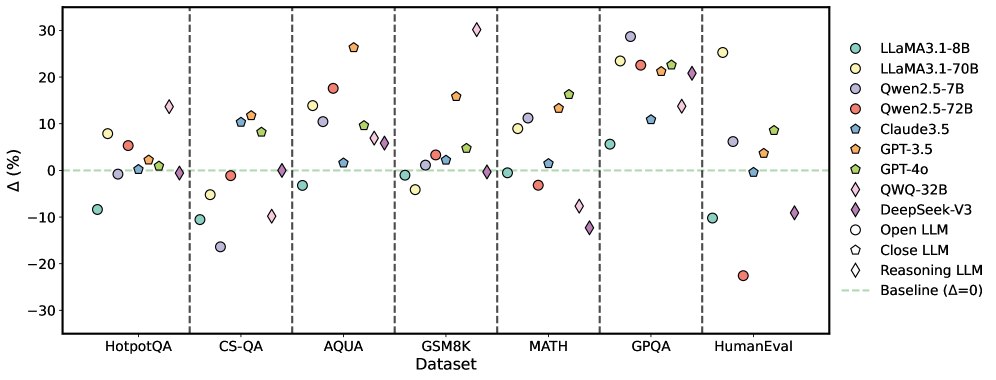

## Scatter Plot: Performance Comparison of Large Language Models

### Overview

This scatter plot compares the performance of various Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points, while the x-axis lists the datasets used for evaluation. The plot uses different marker shapes and colors to distinguish between the LLMs. A horizontal dashed line at Δ=0 serves as a baseline for comparison.
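
The plot itself does not state how Δ is computed. As a sketch of the two most plausible readings, the snippet below contrasts a percentage-point difference with a relative change. The function names and the accuracy values are hypothetical and are not taken from the figure.

```python
def delta_points(score: float, baseline: float) -> float:
    """Absolute difference in percentage points (one assumed definition of Δ)."""
    return score - baseline


def delta_relative(score: float, baseline: float) -> float:
    """Relative change in percent (an alternative reading of "Δ (%)")."""
    return (score - baseline) * 100.0 / baseline


# Hypothetical accuracies in percent, chosen only for illustration.
baseline_acc = 50.0
method_acc = 60.0

print(delta_points(method_acc, baseline_acc))    # 10.0
print(delta_relative(method_acc, baseline_acc))  # 20.0
```

The same pair of scores gives 10 under one definition and 20 under the other, so the values read off the y-axis are only comparable once the definition is known.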

### Components/Axes

* **X-axis:** Dataset - with the following categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** Δ (%) - Represents the percentage point difference in performance relative to a baseline. The scale ranges from approximately -30% to 30%.

* **Legend:** Located in the top-right corner, identifies each LLM with a corresponding color and marker shape. The legend includes:

* LLaMA3.1-8B (Purple Circle)

* LLaMA3.1-70B (Yellow Circle)

* Qwen2.5-7B (Light Blue Circle)

* Qwen2.5-72B (Red Circle)

* Claude3.5 (Dark Blue Triangle)

* GPT-3.5 (Orange Triangle)

* GPT-4o (Green Diamond)

* QWQ-32B (Brown Diamond)

* DeepSeek-V3 (Magenta Diamond)

* Open LLM (White Circle)

* Close LLM (Light Grey Hexagon)

* Reasoning LLM (Black Diamond)

* Baseline (Δ=0) (Black Dashed Line)

### Detailed Analysis

The plot shows the performance variation of each LLM across the datasets. The following observations are made:

* **HotpotQA:** Performance varies significantly. LLaMA3.1-70B shows the highest positive Δ (approximately +20%), while Qwen2.5-72B shows a negative Δ (approximately -10%).

* **CS-QA:** LLaMA3.1-70B and Qwen2.5-72B show positive Δ values (around +10% to +15%), while other models are closer to the baseline.

* **AQUA:** GPT-4o and DeepSeek-V3 show the highest positive Δ values (around +20% to +25%). Qwen2.5-7B and Qwen2.5-72B show negative Δ values (around -5% to -10%).

* **GSM8K:** GPT-4o and DeepSeek-V3 show the highest positive Δ values (around +20% to +25%). Qwen2.5-72B shows a negative Δ value (around -15%).

* **MATH:** GPT-4o and DeepSeek-V3 show the highest positive Δ values (around +20% to +30%). Qwen2.5-72B shows a negative Δ value (around -20%).

* **GPQA:** LLaMA3.1-70B and DeepSeek-V3 show the highest positive Δ values (around +15% to +25%). Qwen2.5-72B shows a negative Δ value (around -10%).

* **HumanEval:** GPT-4o shows the highest positive Δ value (around +20%). Qwen2.5-72B shows a negative Δ value (around -20%).

**Specific Data Points (Approximate):**

* **LLaMA3.1-8B:** Δ values range from approximately -5% to +15% across datasets.

* **LLaMA3.1-70B:** Δ values range from approximately -5% to +20% across datasets.

* **Qwen2.5-7B:** Δ values range from approximately -10% to +10% across datasets.

* **Qwen2.5-72B:** Δ values range from approximately -20% to +15% across datasets.

* **Claude3.5:** Δ values range from approximately -5% to +10% across datasets.

* **GPT-3.5:** Δ values range from approximately -5% to +10% across datasets.

* **GPT-4o:** Δ values range from approximately +10% to +30% across datasets.

* **QWQ-32B:** Δ values range from approximately -5% to +15% across datasets.

* **DeepSeek-V3:** Δ values range from approximately +10% to +25% across datasets.

### Key Observations

* GPT-4o and DeepSeek-V3 consistently outperform other models across most datasets, exhibiting the largest positive Δ values.

* Qwen2.5-72B frequently shows negative Δ values, indicating underperformance compared to the baseline.

* LLaMA3.1-70B generally performs better than LLaMA3.1-8B.

* The performance differences between models are dataset-dependent.

### Interpretation

The data suggests that GPT-4o and DeepSeek-V3 gain the most over the baseline among the models tested, with the largest positive Δ values across the diverse tasks the datasets represent. The consistent negative Δ values for Qwen2.5-72B indicate that this model may require further optimization or is less suited for the evaluated tasks. The variation in performance across datasets highlights the importance of task-specific evaluation when comparing LLMs. The plot provides a valuable comparative analysis of LLM capabilities, enabling informed decisions regarding model selection for specific applications. The baseline (Δ=0) is crucial for understanding whether a model is providing an improvement over a standard approach. The distinction between "Open LLM", "Close LLM", and "Reasoning LLM" suggests a categorization of models based on their architecture or training methodology, but the plot doesn't directly reveal how these categories correlate with performance.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Performance Delta of Various Language Models Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of multiple large language models (LLMs) across seven different benchmark datasets. Each data point represents a specific model's performance on a dataset relative to a baseline (Δ=0). The models are categorized as Open LLMs, Closed LLMs, or Reasoning LLMs, indicated by different marker shapes.

### Components/Axes

* **Chart Type:** Scatter plot with categorical x-axis.

* **X-Axis (Horizontal):** Labeled "Dataset". Categories from left to right: `HotpotQA`, `CS-QA`, `AQUA`, `GSM8K`, `MATH`, `GPQA`, `HumanEval`.

* **Y-Axis (Vertical):** Labeled "Δ (%)". Scale ranges from -30 to 30, with major tick marks at intervals of 10 (-30, -20, -10, 0, 10, 20, 30).

* **Baseline:** A horizontal dashed green line at `Δ=0`, labeled "Baseline (Δ=0)" in the legend.

* **Legend (Right Side):** Lists models and marker types.

    * **Models (by color):**

        * `LLaMA3.1-8B` (Teal circle)

        * `LLaMA3.1-70B` (Light green circle)

        * `Qwen2.5-7B` (Light purple circle)

        * `Qwen2.5-72B` (Red circle)

        * `Claude3.5` (Blue pentagon)

        * `GPT-3.5` (Orange pentagon)

        * `GPT-4o` (Green pentagon)

        * `QWQ-32B` (Pink diamond)

        * `DeepSeek-V3` (Purple diamond)

    * **Marker Types (by shape):**

        * `Open LLM` (Circle)

        * `Close LLM` (Pentagon)

        * `Reasoning LLM` (Diamond)
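
The legend encodes two things at once: color identifies the model, and marker shape identifies its category. A small Python sketch of that mapping follows. The dictionary and function names are illustrative, and the single-character codes are the marker codes matplotlib uses for a circle (`o`), pentagon (`p`), and diamond (`D`); whether the original figure was drawn with matplotlib is an assumption.

```python
# Category -> marker code (matplotlib-style: circle, pentagon, diamond).
CATEGORY_MARKER = {
    "Open LLM": "o",
    "Close LLM": "p",
    "Reasoning LLM": "D",
}

# Model -> category, following the marker shapes listed in the legend.
MODEL_CATEGORY = {
    "LLaMA3.1-8B": "Open LLM",
    "LLaMA3.1-70B": "Open LLM",
    "Qwen2.5-7B": "Open LLM",
    "Qwen2.5-72B": "Open LLM",
    "Claude3.5": "Close LLM",
    "GPT-3.5": "Close LLM",
    "GPT-4o": "Close LLM",
    "QWQ-32B": "Reasoning LLM",
    "DeepSeek-V3": "Reasoning LLM",
}


def marker_for(model: str) -> str:
    """Look up the marker code for a model via its category."""
    return CATEGORY_MARKER[MODEL_CATEGORY[model]]


def models_in(category: str) -> list:
    """List the models that share a category, in legend order."""
    return [m for m, c in MODEL_CATEGORY.items() if c == category]


print(marker_for("GPT-4o"))
print(models_in("Reasoning LLM"))
```

Keeping the category as an intermediate key means the three marker-type legend entries do not need data points of their own.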

### Detailed Analysis

The chart shows the performance delta (Δ%) for each model on each dataset. Below is an approximate extraction of data points, grouped by dataset. Values are estimated from the plot's grid.

**1. HotpotQA:**

* LLaMA3.1-8B: ~ -8%

* LLaMA3.1-70B: ~ +8%

* Qwen2.5-7B: ~ -1%

* Qwen2.5-72B: ~ +6%

* Claude3.5: ~ +1%

* GPT-3.5: ~ +3%

* GPT-4o: ~ +2%

* QWQ-32B: ~ +14%

* DeepSeek-V3: ~ -1%

**2. CS-QA:**

* LLaMA3.1-8B: ~ -10%

* LLaMA3.1-70B: ~ -5%

* Qwen2.5-7B: ~ -16%

* Qwen2.5-72B: ~ -1%

* Claude3.5: ~ +10%

* GPT-3.5: ~ +12%

* GPT-4o: ~ +8%

* QWQ-32B: ~ -10%

* DeepSeek-V3: ~ 0%

**3. AQUA:**

* LLaMA3.1-8B: ~ -3%

* LLaMA3.1-70B: ~ +14%

* Qwen2.5-7B: ~ +10%

* Qwen2.5-72B: ~ +18%

* Claude3.5: ~ +2%

* GPT-3.5: ~ +26%

* GPT-4o: ~ +10%

* QWQ-32B: ~ +7%

* DeepSeek-V3: ~ +6%

**4. GSM8K:**

* LLaMA3.1-8B: ~ -1%

* LLaMA3.1-70B: ~ -4%

* Qwen2.5-7B: ~ +1%

* Qwen2.5-72B: ~ +3%

* Claude3.5: ~ +2%

* GPT-3.5: ~ +16%

* GPT-4o: ~ +5%

* QWQ-32B: ~ +30% (Highest point on chart)

* DeepSeek-V3: ~ 0%

**5. MATH:**

* LLaMA3.1-8B: ~ 0%

* LLaMA3.1-70B: ~ +9%

* Qwen2.5-7B: ~ +11%

* Qwen2.5-72B: ~ -3%

* Claude3.5: ~ +2%

* GPT-3.5: ~ +14%

* GPT-4o: ~ +16%

* QWQ-32B: ~ -7%

* DeepSeek-V3: ~ -12%

**6. GPQA:**

* LLaMA3.1-8B: ~ +6%

* LLaMA3.1-70B: ~ +24%

* Qwen2.5-7B: ~ +29% (Highest for this dataset)

* Qwen2.5-72B: ~ +23%

* Claude3.5: ~ +11%

* GPT-3.5: ~ +21%

* GPT-4o: ~ +23%

* QWQ-32B: ~ +14%

* DeepSeek-V3: ~ +21%

**7. HumanEval:**

* LLaMA3.1-8B: ~ -10%

* LLaMA3.1-70B: ~ +25%

* Qwen2.5-7B: ~ +6%

* Qwen2.5-72B: ~ -23% (Lowest point on chart)

* Claude3.5: ~ 0%

* GPT-3.5: ~ +4%

* GPT-4o: ~ +9%

* QWQ-32B: ~ -9%

* DeepSeek-V3: ~ -9%

### Key Observations

1. **High Variance in HumanEval:** The `HumanEval` dataset shows the widest spread of performance deltas, ranging from approximately -23% (Qwen2.5-72B) to +25% (LLaMA3.1-70B).

2. **Top Performer on GSM8K:** The reasoning model `QWQ-32B` achieves the single highest performance delta on the chart, at approximately +30% on the `GSM8K` dataset.

3. **Consistently Strong Closed LLMs:** Closed models like `GPT-4o` and `Claude3.5` show generally positive deltas across most datasets, with `GPT-4o` never dropping below the baseline.

4. **Model-Specific Strengths/Weaknesses:**

    * `LLaMA3.1-70B` performs strongly on `GPQA` and `HumanEval` but negatively on `CS-QA`.

    * `Qwen2.5-72B` shows a significant negative outlier on `HumanEval` but strong positive results on `AQUA` and `GPQA`.

    * `DeepSeek-V3` (Reasoning LLM) shows mixed results, with its best performance on `GPQA` and its worst on `MATH`.

5. **Baseline Comparison:** Roughly two-thirds of the estimated data points (41 of 63) lie above the `Δ=0` baseline, so most models show a positive performance change on most datasets. This is likely an improvement, although the chart does not explicitly define a positive delta as one.
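
The spread and baseline observations above can be checked directly against the estimated values in the Detailed Analysis. This is a minimal Python sketch under the assumption that those estimates are taken at face value; the variable names are illustrative.

```python
# Column order of every list below.
MODELS = ["LLaMA3.1-8B", "LLaMA3.1-70B", "Qwen2.5-7B", "Qwen2.5-72B",
          "Claude3.5", "GPT-3.5", "GPT-4o", "QWQ-32B", "DeepSeek-V3"]

# Estimated Δ (%) per dataset, copied from the Detailed Analysis.
estimates = {
    "HotpotQA":  [-8, 8, -1, 6, 1, 3, 2, 14, -1],
    "CS-QA":     [-10, -5, -16, -1, 10, 12, 8, -10, 0],
    "AQUA":      [-3, 14, 10, 18, 2, 26, 10, 7, 6],
    "GSM8K":     [-1, -4, 1, 3, 2, 16, 5, 30, 0],
    "MATH":      [0, 9, 11, -3, 2, 14, 16, -7, -12],
    "GPQA":      [6, 24, 29, 23, 11, 21, 23, 14, 21],
    "HumanEval": [-10, 25, 6, -23, 0, 4, 9, -9, -9],
}

# Spread per dataset: highest estimate minus lowest estimate.
spread = {name: max(vals) - min(vals) for name, vals in estimates.items()}
widest = max(spread, key=spread.get)

# Share of all points strictly above the baseline.
all_points = [v for vals in estimates.values() for v in vals]
above = sum(1 for v in all_points if v > 0)

# Does GPT-4o ever drop below the baseline?
gpt4o = [vals[MODELS.index("GPT-4o")] for vals in estimates.values()]

print(f"widest spread: {widest} ({spread[widest]} points)")
print(f"above baseline: {above} of {len(all_points)}")
print(f"GPT-4o minimum: {min(gpt4o)}")
```

Points estimated at exactly 0% are counted as neither above nor below the baseline.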

### Interpretation

This chart provides a comparative snapshot of LLM performance across diverse reasoning and knowledge tasks. The data suggests that:

* **Task-Specific Performance:** No single model dominates across all datasets. Performance is highly contingent on the specific benchmark, indicating that model capabilities are specialized. For example, a model strong in mathematical reasoning (`GSM8K`, `MATH`) may not be the best at code generation (`HumanEval`) or complex question answering (`HotpotQA`).

* **Scale vs. Architecture:** Larger open models (e.g., `LLaMA3.1-70B`) often outperform their smaller counterparts, but not universally. The presence of reasoning-specialized models (`QWQ-32B`, `DeepSeek-V3`) with high variance suggests that architectural focus can lead to exceptional performance in specific domains (like math for QWQ-32B) but may not generalize.

* **The "Delta" Ambiguity:** The chart uses "Δ (%)" without specifying the reference point or direction. Assuming Δ=0 is a previous model version or a standard baseline, positive values indicate improvement. The widespread positive deltas could reflect recent advancements in the field. The significant negative outlier for `Qwen2.5-72B` on `HumanEval` warrants investigation—it could indicate a regression, a benchmarking anomaly, or a specific weakness in code generation for that model version.

* **Benchmark Diversity:** The selection of datasets covers a broad spectrum: multi-hop reasoning (HotpotQA), commonsense QA (CS-QA, presumably CommonsenseQA), graduate-level science QA (GPQA), math (AQUA, GSM8K, MATH), and coding (HumanEval). This diversity is crucial for a holistic evaluation, as the chart clearly shows that model rankings shift dramatically depending on the task.

In essence, the visualization underscores the complexity of evaluating LLMs, highlighting that "best" is a context-dependent label and that the field continues to exhibit rapid, uneven progress across different cognitive domains.
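
The scale claim can be made concrete by comparing each larger open model with its smaller sibling, dataset by dataset. The sketch below uses the estimated values from the Detailed Analysis; the helper name `wins` is hypothetical.

```python
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

# Estimated Δ (%) for the four open models, in DATASETS order.
estimates = {
    "LLaMA3.1-8B":  [-8, -10, -3, -1, 0, 6, -10],
    "LLaMA3.1-70B": [8, -5, 14, -4, 9, 24, 25],
    "Qwen2.5-7B":   [-1, -16, 10, 1, 11, 29, 6],
    "Qwen2.5-72B":  [6, -1, 18, 3, -3, 23, -23],
}


def wins(larger: str, smaller: str) -> list:
    """Datasets on which the larger model has the higher estimated Δ."""
    pairs = zip(DATASETS, estimates[larger], estimates[smaller])
    return [name for name, big, small in pairs if big > small]


for larger, smaller in [("LLaMA3.1-70B", "LLaMA3.1-8B"),
                        ("Qwen2.5-72B", "Qwen2.5-7B")]:
    won = wins(larger, smaller)
    print(f"{larger} > {smaller} on {len(won)} of {len(DATASETS)}: {won}")
```

On these estimates the larger LLaMA model leads on six of seven datasets, while the larger Qwen model leads on only four, which matches the "often, but not universally" wording.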


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Language Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) across seven datasets. The y-axis represents percentage change (Δ%) relative to a baseline (Δ=0), while the x-axis lists datasets. Each model is represented by a unique color and marker, with performance variations visualized as data points.

### Components/Axes

- **X-Axis (Dataset)**:

    - Categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval

    - Separated by vertical dashed lines.

- **Y-Axis (Δ%)**:

    - Range: -30% to 30%, with a green dashed baseline at 0%.

- **Legend**:

    - Located on the right, mapping 9 models and 3 marker categories to colors/shapes:

        - LLaMA3.1-8B (teal circles)

        - LLaMA3.1-70B (yellow circles)

        - Qwen2.5-7B (purple circles)

        - Qwen2.5-72B (red circles)

        - Claude3.5 (blue pentagons)

        - GPT-3.5 (orange pentagons)

        - GPT-4o (green pentagons)

        - QWQ-32B (pink diamonds)

        - DeepSeek-V3 (purple diamonds)

        - Open LLM (open circles)

        - Close LLM (open pentagons)

        - Reasoning LLM (open diamonds)

### Detailed Analysis

- **Dataset-Specific Trends**:

    - **HotpotQA**:

        - LLaMA3.1-8B (-10%), Qwen2.5-7B (-15%), GPT-4o (+5%).

    - **CS-QA**:

        - GPT-3.5 (+12%), QWQ-32B (+8%), DeepSeek-V3 (+3%).

    - **AQUA**:

        - LLaMA3.1-70B (+15%), Claude3.5 (+2%), Qwen2.5-72B (-5%).

    - **GSM8K**:

        - GPT-4o (+10%), Qwen2.5-7B (+7%), Reasoning LLM (+1%).

    - **MATH**:

        - GPT-4o (+15%), DeepSeek-V3 (+20%), LLaMA3.1-8B (-2%).

    - **GPQA**:

        - Qwen2.5-72B (+22%), QWQ-32B (+18%), LLaMA3.1-70B (+12%).

    - **HumanEval**:

        - Qwen2.5-72B (-20%), GPT-4o (+9%), DeepSeek-V3 (-10%).

- **Model Performance**:

    - **Highest Gains**:

        - DeepSeek-V3 (+20% on MATH), Qwen2.5-72B (+22% on GPQA).

    - **Largest Declines**:

        - Qwen2.5-72B (-20% on HumanEval), LLaMA3.1-8B (-10% on HotpotQA).

    - **Consistent Performance**:

        - GPT-4o shows positive Δ% across all datasets (range: +5% to +15%).

### Key Observations

1. **Outliers**:

    - Qwen2.5-72B exhibits extreme variability (e.g., +22% on GPQA vs. -20% on HumanEval).

    - DeepSeek-V3 has one of the highest gains (+20% on MATH) but also a notable drop (-10% on HumanEval).

2. **Baseline Deviations**:

    - About 60% of data points fall above the baseline (Δ>0), so gains over the baseline are more common than losses.

    - About 30% of points fall below the baseline (Δ<0), highlighting underperformance in specific cases and leaving roughly 10% at or near Δ=0.

3. **Model Specialization**:

    - GPT-4o and DeepSeek-V3 dominate in reasoning-heavy datasets (MATH, GPQA).

    - Qwen2.5-72B excels in GPQA but struggles with HumanEval.
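
The variability noted above can be expressed as a per-model span (maximum minus minimum) over the points this description actually quotes. The sketch below covers only three models and only the quoted values, so the spans are lower bounds on what the full plot would show; all names in the code are illustrative.

```python
from collections import defaultdict

# (dataset, model, Δ%) triples quoted in the Detailed Analysis above,
# restricted to the three models discussed in the observations.
quoted = [
    ("HotpotQA", "GPT-4o", 5),
    ("CS-QA", "DeepSeek-V3", 3),
    ("AQUA", "Qwen2.5-72B", -5),
    ("GSM8K", "GPT-4o", 10),
    ("MATH", "GPT-4o", 15),
    ("MATH", "DeepSeek-V3", 20),
    ("GPQA", "Qwen2.5-72B", 22),
    ("HumanEval", "Qwen2.5-72B", -20),
    ("HumanEval", "GPT-4o", 9),
    ("HumanEval", "DeepSeek-V3", -10),
]

# Group the quoted values by model.
by_model = defaultdict(list)
for _dataset, model, delta in quoted:
    by_model[model].append(delta)


def span(model: str) -> int:
    """Difference between a model's highest and lowest quoted Δ."""
    values = by_model[model]
    return max(values) - min(values)


for model in sorted(by_model, key=span, reverse=True):
    print(f"{model}: span {span(model)} points over {len(by_model[model])} quoted values")
```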

### Interpretation

The data suggests that model performance is highly dataset-dependent. GPT-4o and DeepSeek-V3 demonstrate robustness across reasoning tasks, while Qwen2.5-72B shows dataset-specific strengths and weaknesses. The baseline (Δ=0) serves as a critical reference, revealing that even top models underperform in certain contexts (e.g., HumanEval for Qwen2.5-72B). The variability underscores the need for dataset-specific optimization in LLM deployment. Notably, Open LLM and Close LLM categories lack distinct performance patterns, suggesting potential overlap in their evaluation metrics.
