TECHNICAL ASSET FINGERPRINT

70ead07365e6fe6c8cc1cde6

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

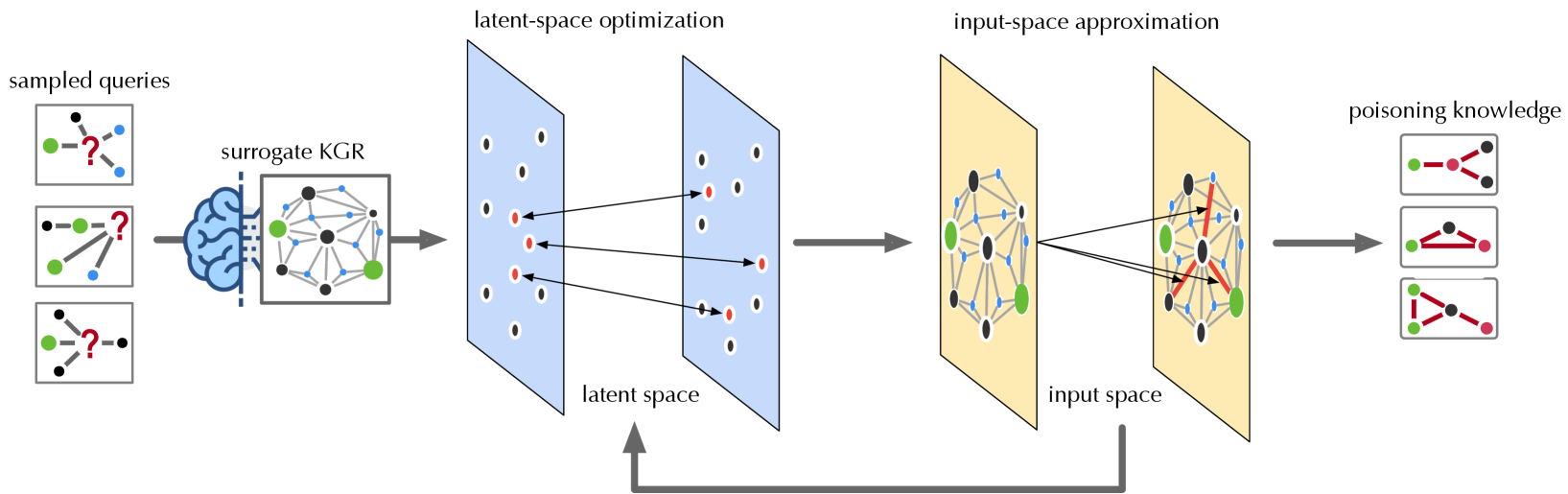

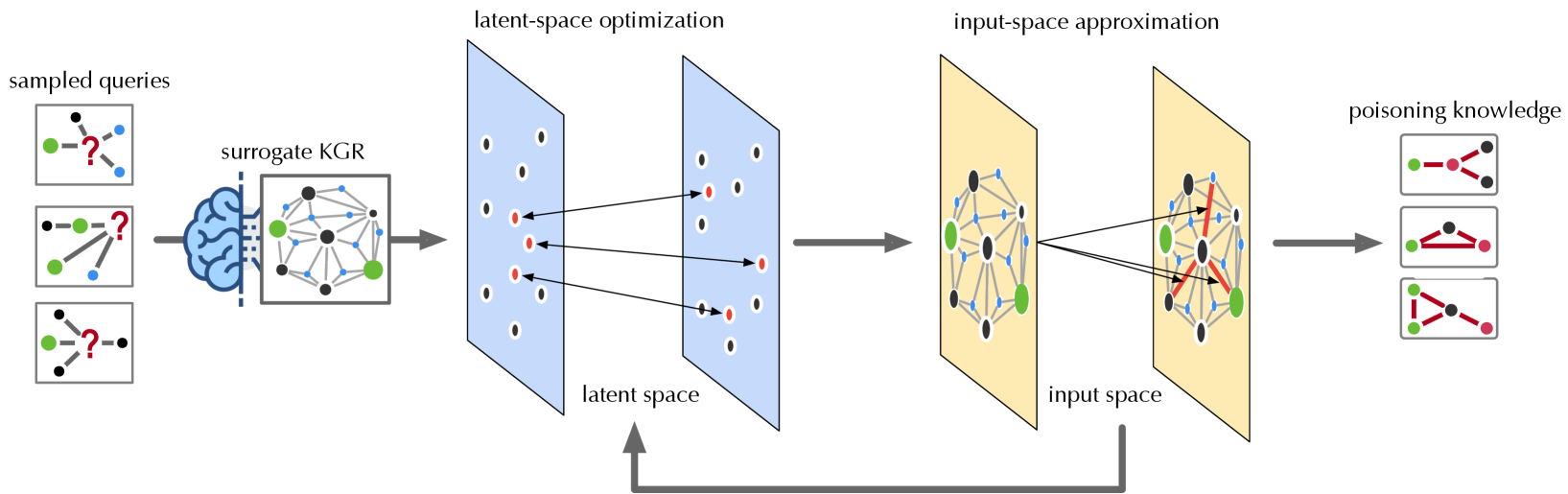

## Diagram: Knowledge Graph Poisoning Attack

### Overview

The image illustrates a knowledge graph poisoning attack. The process starts with sampled queries, proceeds through a surrogate knowledge graph reasoning (KGR) model, latent-space optimization, and input-space approximation, and culminates in poisoning knowledge. The diagram shows how the attack manipulates the knowledge graph to introduce false or misleading information.

### Components/Axes

* **sampled queries:** Three example queries are shown, each consisting of nodes (black, green, blue) and edges. Each query has a red question mark indicating an unknown relationship.

* **surrogate KGR:** A brain icon is connected to a knowledge graph representation. The graph consists of nodes (black, green, blue) and edges.

* **latent-space optimization:** Two blue planes represent the latent space. Both planes contain black and red nodes, and arrows connect some nodes between the two planes. The text "latent space" appears below the planes.

* **input-space approximation:** Two yellow planes represent the input space. Both planes contain black, green, and blue nodes connected by gray edges; the right plane also has some red edges. Arrows connect some nodes between the two planes. The text "input space" appears below the planes.

* **poisoning knowledge:** Three example poisoned knowledge graphs are shown, each consisting of nodes (black, green, red) and edges.

* A gray arrow loops from the right yellow plane (input space) back to the bottom of the left blue plane (latent space).

### Detailed Analysis

* **sampled queries:**

    * Query 1: Contains one black node, one green node, and two blue nodes.

    * Query 2: Contains one black node, one green node, and two blue nodes.

    * Query 3: Contains two black nodes and one green node.

* **surrogate KGR:** The knowledge graph contains approximately 10 black nodes, 3 green nodes, and 10 blue nodes.

* **latent-space optimization:** Each of the two latent-space planes contains approximately 8 black nodes and 4 red nodes.

* **input-space approximation:** Each of the two input-space planes contains approximately 8 black nodes, 3 green nodes, and 10 blue nodes. The right plane also contains red edges.

* **poisoning knowledge:**

    * Graph 1: Contains one green node, one red node, and one black node.

    * Graph 2: Contains one green node, one red node, and one black node.

    * Graph 3: Contains one green node, two red nodes, and one black node.

### Key Observations

* The process starts with incomplete queries and uses a surrogate KGR to generate a latent space representation.

* The latent space is optimized and approximated back to the input space, where poisoning is introduced by adding red edges.

* The final output is a set of poisoned knowledge graphs with altered relationships (red edges).

* The gray arrow indicates a feedback loop from the input space to the latent space.

### Interpretation

The diagram illustrates a method for injecting false information into a knowledge graph. The process involves transforming the initial queries into a latent space, optimizing this space, and then approximating it back to the input space. The key step is the introduction of "poisoning knowledge" in the input space, represented by the red edges. This suggests that the attack focuses on manipulating the relationships between entities in the knowledge graph. The feedback loop from the input space to the latent space implies an iterative refinement of the poisoning strategy. The goal is to create poisoned knowledge graphs that can mislead users or downstream applications that rely on the knowledge graph.
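To make the effect of a single injected edge concrete, here is a minimal sketch using a toy graph stored as triples. The entity and relation names and the `answer` helper are invented for illustration, and the lookup is a plain symbolic traversal rather than the embedding-based reasoning the diagram implies.

```python
# Toy knowledge graph as a set of (head, relation, tail) triples.
# All entity and relation names are hypothetical.
kg = {
    ("drug_x", "treats", "disease_y"),
    ("drug_z", "treats", "disease_w"),
    ("disease_y", "has_symptom", "fever"),
}

def answer(graph, symptom):
    """Return drugs that treat some disease showing the given symptom."""
    diseases = {h for (h, r, t) in graph if r == "has_symptom" and t == symptom}
    return {h for (h, r, t) in graph if r == "treats" and t in diseases}

clean_answers = answer(kg, "fever")

# One injected edge (a red edge in the diagram) changes the query result.
poison = {("disease_w", "has_symptom", "fever")}
poisoned_answers = answer(kg | poison, "fever")

print(clean_answers, poisoned_answers)
```

In this toy case the clean graph returns only `drug_x`, while the poisoned graph also returns `drug_z`, which is the kind of misleading answer the interpretation describes.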

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Knowledge Graph Poisoning Process

### Overview

This diagram illustrates a process for poisoning knowledge graphs, starting with sampled queries and culminating in the injection of "poisoning knowledge." The process involves a surrogate knowledge graph reasoning (KGR) model, latent-space optimization, and input-space approximation. The diagram depicts a flow of information and transformations between these stages.

### Components/Axes

The diagram is segmented into five main sections, arranged horizontally from left to right:

1. **Sampled Queries:** Represents initial queries, depicted as brain-like structures with question marks and connected nodes.

2. **Surrogate KGR:** A complex network of nodes and edges, representing a surrogate knowledge graph.

3. **Latent-Space Optimization:** A blue-tinted rectangular area containing nodes and edges, representing the latent space.

4. **Input-Space Approximation:** A yellow-tinted rectangular area containing nodes and edges, representing the input space.

5. **Poisoning Knowledge:** Represents the final output, depicted as connected nodes with arrows.

There are also labels indicating the process stages: "sampled queries", "surrogate KGR", "latent-space optimization", "input-space approximation", and "poisoning knowledge". An upward-pointing double arrow connects the "input-space approximation" stage to the "latent-space optimization" stage, indicating a feedback loop.

### Detailed Analysis

**1. Sampled Queries (Leftmost Section):**

* There are three query examples. Each consists of a brain-like shape with a question mark at the center, connected to several smaller nodes (approximately 5-7 per query).

* The connections between the brain and nodes are represented by lines.

* The nodes are colored green and black.

**2. Surrogate KGR (Center-Left Section):**

* This is a complex network of approximately 15-20 nodes connected by numerous edges.

* The nodes are colored green, black, and white.

* Edges are represented by lines, some dashed.

* The network appears to be a graph structure.

**3. Latent-Space Optimization (Center Section):**

* This section contains approximately 15-20 nodes arranged in a grid-like pattern within a blue rectangle.

* Nodes are colored green, black, and red.

* Edges connect the nodes, represented by lines.

* The connections appear to be sparse.

**4. Input-Space Approximation (Center-Right Section):**

* This section contains approximately 15-20 nodes arranged in a network within a yellow rectangle.

* Nodes are colored green, black, and red.

* Edges connect the nodes, represented by lines.

* The connections appear to be more dense than in the latent space.

**5. Poisoning Knowledge (Rightmost Section):**

* There are three examples of "poisoning knowledge". Each consists of a set of connected nodes (approximately 3-5 per example).

* Nodes are colored green and red.

* Edges are represented by arrows.

* The arrows indicate the direction of influence or flow.

**Flow of Information:**

* The "sampled queries" feed into the "surrogate KGR".

* The "surrogate KGR" transforms the queries and passes them to the "latent-space optimization".

* The "latent-space optimization" then passes the information to the "input-space approximation".

* The "input-space approximation" generates the "poisoning knowledge".

* There is a feedback loop from the "input-space approximation" back to the "latent-space optimization".

### Key Observations

* The diagram illustrates a multi-stage process for manipulating knowledge graphs.

* The color red consistently appears in the "latent-space optimization", "input-space approximation", and "poisoning knowledge" sections, potentially indicating the injected "poison".

* The density of connections increases from the latent space to the input space.

* The feedback loop suggests an iterative refinement process.

### Interpretation

The diagram depicts a method for injecting malicious information ("poisoning knowledge") into a knowledge graph. The process begins with sampling queries, which are then processed through a surrogate knowledge graph to create a latent representation. This latent representation is optimized and then approximated in the input space, ultimately resulting in the generation of poisoned knowledge. The feedback loop suggests that the process is iterative, allowing for refinement of the poisoned knowledge to maximize its impact. The use of a surrogate KGR and latent space optimization likely aims to obfuscate the poisoning attack, making it more difficult to detect. The red color coding suggests that the injected "poison" is represented by these nodes and edges. This diagram is a conceptual illustration of a potential attack vector, rather than a presentation of specific data. It demonstrates a process, not a quantifiable result.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Knowledge Graph Poisoning Attack via Latent-Space Optimization

### Overview

The image is a technical flowchart illustrating a multi-stage process for generating a "poisoning attack" on a Knowledge Graph Reasoning (KGR) system. The process transforms sampled queries into poisoned knowledge by performing optimization in a latent space and then approximating the result in the original input space. The flow moves from left to right, with a feedback loop connecting the later stages back to the optimization phase.

### Components/Axes

The diagram is segmented into five primary sequential components, connected by directional arrows:

1. **Sampled Queries (Far Left):**

    * **Label:** "sampled queries"

    * **Content:** Three rectangular boxes, each containing a small graph diagram. Each diagram consists of nodes (colored green, blue, and black) connected by lines, with a prominent red question mark (`?`) indicating a missing or target relationship to be inferred or attacked.

2. **Surrogate KGR (Left-Center):**

    * **Label:** "surrogate KGR"

    * **Content:** A blue brain icon (representing a model or reasoning engine) points to a square box containing a more complex knowledge graph. This graph has multiple nodes (green, black, blue) interconnected by lines, representing the surrogate model being targeted or used for the attack.

3. **Latent-Space Optimization (Center):**

    * **Labels:** "latent-space optimization" (top), "latent space" (bottom).

    * **Content:** Two parallel, light-blue rectangular planes are depicted in a 3D perspective, representing the latent feature space. Each plane contains an array of dots (mostly black, some red). Arrows show the movement of specific red dots from the left plane to the right plane, indicating an optimization process that adjusts representations within this abstract space.

4. **Input-Space Approximation (Right-Center):**

    * **Labels:** "input-space approximation" (top), "input space" (bottom).

    * **Content:** Two parallel, light-yellow rectangular planes represent the original input space (the knowledge graph structure). Inside these planes are graph structures similar to the "surrogate KGR." Arrows show the mapping of optimized points from the latent space into this input space, resulting in graphs where certain connections (edges) are highlighted in red.

5. **Poisoning Knowledge (Far Right):**

    * **Label:** "poisoning knowledge"

    * **Content:** Three rectangular boxes, each containing a final knowledge graph. These graphs feature nodes (green, black, red) with specific relationships highlighted by thick red lines, representing the malicious or "poisoned" knowledge injected into the system.

**Flow and Connections:**

* A thick grey arrow points from "sampled queries" to "surrogate KGR."

* A thick grey arrow points from "surrogate KGR" to the first plane of "latent-space optimization."

* A thick grey arrow points from the second plane of "latent-space optimization" to the first plane of "input-space approximation."

* A thick grey arrow points from the second plane of "input-space approximation" to "poisoning knowledge."

* **Feedback Loop:** A grey arrow originates from the bottom of the "input space" section and points back to the bottom of the "latent space" section, indicating an iterative or closed-loop optimization process.

### Detailed Analysis

The process describes a method for crafting adversarial attacks on knowledge graph models:

1. **Input:** The attack begins with "sampled queries," which are incomplete knowledge graph fragments where a specific relationship (the red `?`) is the target.

2. **Model Targeting:** These queries are processed by or against a "surrogate KGR," which is a model that mimics the behavior of the actual target system.

3. **Core Optimization:** The key attack generation happens in the "latent space." Instead of directly modifying the graph (which is discrete), the method optimizes continuous vector representations (the red dots) of the graph elements. The arrows between the blue planes show these vectors being adjusted to achieve a malicious objective.

4. **Mapping to Attack:** The optimized latent vectors are then projected back into the interpretable "input space" (the yellow planes). This "approximation" step translates the abstract optimized vectors into concrete modifications of the knowledge graph structure, shown as new or altered edges (red lines).

5. **Output:** The final result is "poisoning knowledge"—a set of crafted knowledge graph fragments designed to mislead the KGR system when ingested as training data or during inference.
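Steps 3 and 4 above can be sketched as a continuous optimization followed by a discrete projection. This is a minimal illustration under stated assumptions, not the method from the source figure: the 2-D embeddings in `ENTITY_EMB`, the squared-distance loss, the relation name `related_to`, and the nearest-neighbor projection are all hypothetical stand-ins.

```python
# Hypothetical 2-D entity embeddings from a surrogate model (made-up values).
ENTITY_EMB = {
    "a": (0.0, 0.0),
    "b": (1.0, 0.0),
    "c": (0.0, 1.0),
    "d": (1.0, 1.0),
}

def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(u, v))

def optimize_latent(start, target, lr=0.1, steps=100):
    """Gradient descent on ||z - target||^2, a stand-in for the attack loss."""
    z = list(start)
    for _ in range(steps):
        grad = [2.0 * (zi - ti) for zi, ti in zip(z, target)]
        z = [zi - lr * gi for zi, gi in zip(z, grad)]
    return tuple(z)

def approximate_in_input_space(z, anchor, relation, candidates):
    """Map the continuous vector to its nearest entity and emit a discrete fact."""
    nearest = min(candidates, key=lambda e: sq_dist(ENTITY_EMB[e], z))
    return (anchor, relation, nearest)

# Latent-space optimization: move the anchor's vector toward an assumed target.
z_opt = optimize_latent(start=ENTITY_EMB["a"], target=(0.9, 0.8))

# Input-space approximation: turn the optimized vector into one candidate edge.
poison_fact = approximate_in_input_space(z_opt, "a", "related_to", ["b", "c", "d"])
print(poison_fact)
```

The optimized vector rarely coincides with a real entity, which is why the second step is an approximation: the sketch picks the closest existing entity and accepts the residual error.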

### Key Observations

* **Color Coding:** The diagram uses color consistently: **Green** and **black** nodes represent standard entities. **Blue** nodes appear in the initial queries and surrogate model. **Red** is used exclusively for the attack elements: the target question mark (`?`), optimized points in latent space, highlighted malicious edges in the input space, and the final poisoned relationships.

* **Spatial Separation:** The "latent space" (blue) and "input space" (yellow) are visually distinct, emphasizing the transformation between abstract feature space and concrete data structure.

* **Iterative Process:** The feedback loop from "input space" back to "latent space" suggests the optimization is not a single pass but may involve refining the attack based on how it manifests in the input space.

### Interpretation

This diagram outlines a sophisticated, gradient-based method for performing data poisoning attacks against Knowledge Graph Reasoning systems. The core innovation it depicts is moving the attack optimization from the discrete, combinatorial space of graph structures (which is hard to optimize directly) into a continuous latent space (which is amenable to gradient descent).

**What it suggests:** The attack is "black-box" or "transfer-based," as it uses a *surrogate* model to generate attacks intended for another system. The process is automated and systematic, not manual.

**How elements relate:** The flow shows a clear cause-and-effect chain: malicious intent (question mark) -> model analysis -> latent optimization -> structural approximation -> poisoned output. The feedback loop is critical, implying the attacker iteratively checks if the optimized latent vector produces the desired poisoning effect in the actual graph structure before finalizing it.
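One way to read the feedback loop is as an alternation between a continuous update and a discrete projection, repeated until a budget is exhausted or nothing improves. The sketch below is a toy under assumed names: the 1-D `CANDIDATE_SHIFT` values, the additive `encode` function, and the greedy `project` step are hypothetical and are not taken from the source figure.

```python
# Hypothetical 1-D effect of each candidate fact on the latent value.
CANDIDATE_SHIFT = {"fact_1": 0.5, "fact_2": 0.25, "fact_3": -0.25}

def encode(facts):
    """Stand-in encoder: the latent value is the sum of the injected shifts."""
    return sum(CANDIDATE_SHIFT[f] for f in facts)

def latent_step(z, target, lr=0.25):
    """One gradient step on (z - target)^2."""
    return z - lr * 2.0 * (z - target)

def project(z, current, budget):
    """Greedy input-space approximation: add the single fact that brings
    encode() closest to z, if the budget allows and it is an improvement."""
    if len(current) >= budget:
        return current
    best = min(
        (f for f in CANDIDATE_SHIFT if f not in current),
        key=lambda f: abs(encode(current | {f}) - z),
        default=None,
    )
    if best is None:
        return current
    if abs(encode(current | {best}) - z) >= abs(encode(current) - z):
        return current
    return current | {best}

def attack(target, budget=2, rounds=10):
    facts = frozenset()
    for _ in range(rounds):
        z = latent_step(encode(facts), target)  # latent-space optimization
        updated = project(z, facts, budget)     # input-space approximation
        if updated == facts:                    # no change: stop iterating
            break
        facts = updated                         # feedback: re-encode next round
    return facts

print(sorted(attack(1.0)))
```

Each round re-encodes the facts chosen so far before taking the next latent step, so the continuous optimization always starts from what is actually realizable in the graph.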

**Notable implications:** This represents a significant security threat to AI systems relying on knowledge graphs (e.g., search engines, recommendation systems, question-answering bots). The poisoning knowledge could cause the system to learn false facts or make incorrect inferences. The diagram's technical nature suggests it is likely from a research paper demonstrating such an attack vector to raise awareness and prompt the development of defenses.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Knowledge Graph Reasoning and Poisoning Process

### Overview

The image depicts a multi-stage technical process involving knowledge graph reasoning (KGR), latent-space optimization, input-space approximation, and poisoning knowledge. The workflow begins with sampled queries, progresses through surrogate KGR and latent-space optimization, transitions to input-space approximation, and concludes with poisoning knowledge. Arrows indicate directional flow, and color-coded nodes/edges represent different components or statuses.

---

### Components/Axes

1. **Sampled Queries**

    - Three diagrams with nodes (green, black) and edges (blue).

    - One node in each diagram is marked with a red question mark (indicating uncertainty or target for poisoning).

2. **Surrogate KGR**

    - A graph with interconnected nodes (green, black) and edges (blue).

    - Positioned between "sampled queries" and "latent-space optimization."

3. **Latent-Space Optimization**

    - Two panels:

        - **Left Panel**: Nodes (black) with red points (targets) connected by arrows.

        - **Right Panel**: Nodes (black) with red points (targets) and additional red edges.

    - Arrows connect the surrogate KGR to both panels, indicating mapping to latent space.

4. **Input-Space Approximation**

    - A graph with nodes (green, black) and edges (blue, red).

    - Arrows connect the latent-space optimization panels to this stage, showing reconstruction in input space.

5. **Poisoning Knowledge**

    - Three diagrams with nodes (green, black) and edges (red).

    - Red edges dominate, suggesting adversarial modifications.

---

### Detailed Analysis

- **Color Coding**:

    - **Green**: Likely represents correct/valid nodes.

    - **Black**: Neutral or unspecified nodes.

    - **Red**: Targets for poisoning or adversarial modifications.

- **Flow Direction**:

    - Queries → Surrogate KGR → Latent-space optimization → Input-space approximation → Poisoning knowledge.

- **Key Relationships**:

    - The surrogate KGR acts as a bridge between raw queries and latent-space optimization.

    - Latent-space optimization refines targets (red points) before input-space approximation.

    - Poisoning knowledge introduces adversarial edges (red) into the final graph.

---

### Key Observations

1. **Adversarial Focus**: The red question marks and edges highlight the process's focus on manipulating specific nodes/edges.

2. **Bidirectional Mapping**: The latent-space optimization includes feedback loops (arrows pointing back to input-space approximation), suggesting iterative refinement.

3. **Poisoning Mechanism**: The final stage replaces blue edges with red ones, indicating a shift from valid to adversarial relationships.

---

### Interpretation

This flowchart illustrates a machine learning pipeline for knowledge graph reasoning with adversarial attacks. The process begins by sampling queries, then uses a surrogate KGR to map them into a latent space for optimization. The optimized targets are reconstructed in the input space, and finally, adversarial modifications (poisoning) are introduced to corrupt the knowledge graph. The use of red nodes/edges in the poisoning stage suggests a deliberate attempt to degrade model performance by altering critical relationships. The bidirectional arrows between latent and input spaces imply a feedback mechanism to improve approximation accuracy.
