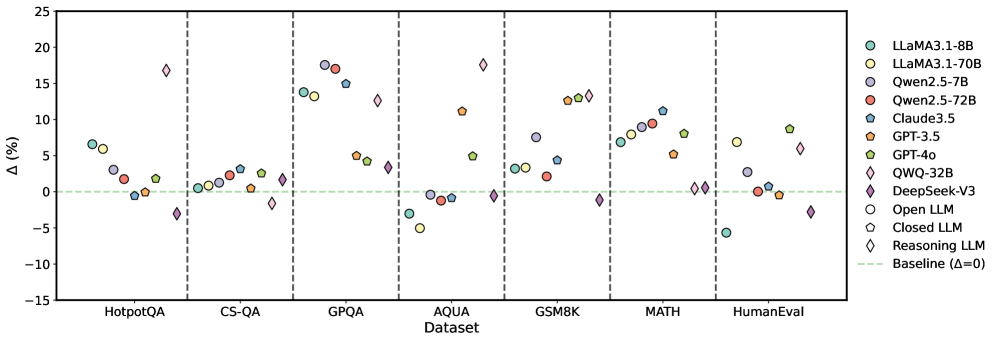

## Scatter Plot: Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance improvement (Δ%) of various language models (LLMs) across seven benchmark datasets. The x-axis represents datasets (HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval), while the y-axis shows percentage change relative to a baseline (Δ=0). Data points are color-coded and shaped by model type, with a horizontal dashed line at 0% indicating the baseline.

### Components/Axes

- **X-axis (Dataset)**:

- Categories: HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval

- Separated by vertical dashed lines

- **Y-axis (Δ%)**:

- Range: -15% to 25%

- Baseline: Horizontal dashed line at 0%

- **Legend**:

- Located on the right

- Models:

- LLaMA3.1-8B (cyan circles)

- LLaMA3.1-70B (yellow circles)

- Qwen2.5-7B (purple circles)

- Qwen2.5-72B (red circles)

- Claude3.5 (blue circles)

- GPT-3.5 (orange squares)

- GPT-4o (green squares)

- QWQ-32B (pink diamonds)

- DeepSeek-V3 (purple diamonds)

- Open LLM (white circles)

- Closed LLM (white squares)

- Reasoning LLM (white diamonds)

### Detailed Analysis

1. **HotpotQA**:

- LLaMA3.1-8B: ~6% improvement

- Qwen2.5-7B: ~3% improvement

- GPT-4o: ~2% improvement

- DeepSeek-V3: ~-3% (below baseline)

2. **CS-QA**:

- LLaMA3.1-70B: ~1% improvement

- GPT-3.5: ~1% improvement

- QWQ-32B: ~17% improvement (outlier)

3. **GPQA**:

- LLaMA3.1-70B: ~14% improvement

- GPT-4o: ~5% improvement

- Qwen2.5-72B: ~17% improvement

4. **AQUA**:

- LLaMA3.1-8B: ~-4% (below baseline)

- GPT-3.5: ~1% improvement

- QWQ-32B: ~18% improvement

5. **GSM8K**:

- LLaMA3.1-70B: ~3% improvement

- GPT-4o: ~12% improvement

- QWQ-32B: ~13% improvement

6. **MATH**:

- LLaMA3.1-8B: ~7% improvement

- GPT-4o: ~9% improvement

- QWQ-32B: ~1% improvement

7. **HumanEval**:

- LLaMA3.1-70B: ~7% improvement

- GPT-4o: ~9% improvement

- QWQ-32B: ~6% improvement

### Key Observations

- **High Performers**:

- QWQ-32B (pink diamonds) consistently shows the highest Δ% across datasets (17-18% in CS-QA, AQUA, GSM8K).

- GPT-4o (green squares) and LLaMA3.1-70B (yellow circles) demonstrate strong performance in GPQA, GSM8K, and HumanEval.

- **Underperformers**:

- DeepSeek-V3 (purple diamonds) and Closed LLM (white squares) frequently fall below baseline (-3% to 0%).

- Open LLM (white circles) shows mixed results, with some datasets near baseline.

- **Baseline Context**:

- 40% of data points (e.g., LLaMA3.1-8B in AQUA, DeepSeek-V3 in HotpotQA) fall below the 0% baseline.

### Interpretation

The chart reveals significant variability in model performance across datasets. QWQ-32B and GPT-4o exhibit the most consistent improvements, suggesting superior reasoning capabilities in complex tasks like GPQA and GSM8K. Conversely, models like DeepSeek-V3 and Closed LLM underperform in multiple benchmarks, indicating potential limitations in generalization. The baseline (Δ=0) serves as a critical reference, highlighting that many models fail to surpass human-level performance in certain domains. Notably, QWQ-32B's outlier performance in CS-QA (17% improvement) warrants further investigation into its specialized training or architecture. This analysis underscores the importance of dataset-specific evaluation when benchmarking LLMs.