## Screenshot: Crowdsourcing Task Instructions

### Overview

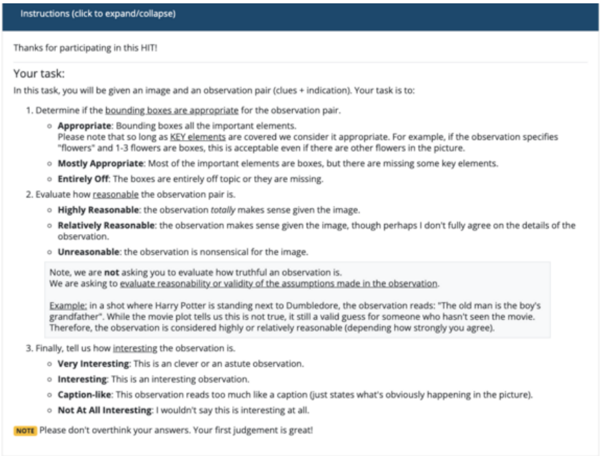

This image is a screenshot of a web-based instruction page for a crowdsourcing task. The page calls the task a "HIT" (Human Intelligence Task), the term used on Amazon Mechanical Turk, so it likely comes from that platform. It gives a human annotator ("worker") detailed guidelines for evaluating an image paired with an observation. The content is entirely textual, structured as a numbered list with sub-categories and definitions. The language is English.

### Components/Axes

The page is structured into four main sections:

1. **Header:** A dark blue bar at the top with the text "Instructions (click to expand/collapse)".

2. **Greeting & Task Introduction:** A thank you message and a brief overview of the task.

3. **Core Task Instructions:** A numbered list (1, 2, 3) detailing the three evaluation criteria.

4. **Footer Note:** A highlighted yellow box with a final instruction.

### Detailed Analysis

The text content is transcribed and structured below:

**Header:**

`Instructions (click to expand/collapse)`

**Main Body:**

`Thanks for participating in this HIT!`

`Your task:`

`In this task, you will be given an image and an observation pair (clues + indication). Your task is to:`

**1. Determine if the bounding boxes are appropriate for the observation pair.**

* **Appropriate:** Bounding boxes cover all the important elements. Please note that so long as KEY elements are covered we consider it appropriate. For example, if the observation specifies "flowers" and 1-3 flowers are boxed, this is acceptable even if there are other flowers in the picture.

* **Mostly Appropriate:** Most of the important elements are boxed, but some key elements are missing.

* **Entirely Off:** The observation is entirely off topic or the boxes are missing.

**2. Evaluate how reasonable the observation is.**

* **Highly Reasonable:** The observation totally makes sense given the image.

* **Relatively Reasonable:** The observation makes sense given the image, though perhaps I don't fully agree on the details of the observation.

* **Unreasonable:** The observation is nonsensical for the image.

`Note, we are not asking you to evaluate how truthful an observation is. We are asking to evaluate reasonability or validity of the assumptions made in the observation.`

`Example: In a shot where Harry Potter is standing next to Dumbledore, the observation reads: "The old man is the boy's grandfather". While the movie plot tells us this is not true, it is still a valid guess for someone who hasn't seen the movie. Therefore, the observation is considered highly or relatively reasonable (depending on how strongly you agree).`

**3. Finally, tell us how interesting the observation is.**

* **Very Interesting:** This is a clever or an astute observation.

* **Interesting:** This is an interesting observation.

* **Caption-like:** This observation reads too much like a caption (just states what's obviously happening in the picture).

* **Not At All Interesting:** I wouldn't say this is interesting at all.

**Footer Note (in a yellow box):**

`NOTE Please don't overthink your answers. Your first judgement is great!`
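The three questions and their answer options transcribed above can be sketched as a small data structure. This is only an illustration of the label space: the option strings are taken from the page, but the class names, field names, and the `parse_judgment` helper are hypothetical and do not come from the screenshot.

```python
from dataclasses import dataclass
from enum import Enum


# Option strings are copied from the instruction page; all identifiers
# below are hypothetical names chosen for this sketch.
class BoxAppropriateness(Enum):
    APPROPRIATE = "Appropriate"
    MOSTLY_APPROPRIATE = "Mostly Appropriate"
    ENTIRELY_OFF = "Entirely Off"


class Reasonableness(Enum):
    HIGHLY_REASONABLE = "Highly Reasonable"
    RELATIVELY_REASONABLE = "Relatively Reasonable"
    UNREASONABLE = "Unreasonable"


class Interest(Enum):
    VERY_INTERESTING = "Very Interesting"
    INTERESTING = "Interesting"
    CAPTION_LIKE = "Caption-like"
    NOT_AT_ALL_INTERESTING = "Not At All Interesting"


@dataclass(frozen=True)
class Judgment:
    """One worker's answers for one image and observation pair."""
    boxes: BoxAppropriateness
    reasonableness: Reasonableness
    interest: Interest


def parse_judgment(boxes: str, reasonableness: str, interest: str) -> Judgment:
    # Enum lookup by value raises ValueError for any label not listed on the page.
    return Judgment(
        BoxAppropriateness(boxes),
        Reasonableness(reasonableness),
        Interest(interest),
    )
```

For example, `parse_judgment("Appropriate", "Relatively Reasonable", "Caption-like")` yields a `Judgment` whose three fields are independent of one another, which mirrors how the page asks three separate questions.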

### Key Observations

* **Hierarchical Structure:** The instructions use a numbered list for the three questions and bullet points for the answer options under each, so each question reads as its own rating scale.

* **Clarification via Example:** A specific example (Harry Potter) is provided to disambiguate the crucial difference between "truthfulness" and "reasonableness," which is central to the task's design.

* **Guidance on Judgment:** The final note explicitly encourages quick, intuitive responses rather than prolonged deliberation, presumably to keep the task fast and reduce worker fatigue.

* **Visual Emphasis:** The final note is highlighted in a yellow box, drawing the worker's attention to this meta-instruction about their workflow.

### Interpretation

This document is a protocol for collecting human judgment data on the alignment between images and textual observations. The collected judgments are most likely intended to train or evaluate AI models, plausibly in visual question answering, image captioning, or visual reasoning.

The three evaluation axes (**bounding box appropriateness**, **observation reasonableness**, and **observation interest**) reveal what the data collectors value:

1. **Spatial Grounding:** They care if the text refers to elements that are actually present and locatable in the image.

2. **Plausible Inference:** They prioritize logical, commonsense reasoning over factual correctness, as shown by the Harry Potter example. This suggests the goal is to assess a model's ability to make sensible inferences from visual data, not to test its knowledge of external facts.

3. **Informational Value:** They distinguish between trivial, caption-like descriptions and insightful observations, indicating a desire for data that captures deeper understanding or non-obvious relationships.

The instructions standardize subjective human judgments into categorical labels. The intermediate options "Mostly Appropriate" and "Relatively Reasonable" acknowledge the gray areas in visual-textual alignment, while the note against overthinking aims to capture natural, immediate human perception. Data structured this way could be used to build datasets that benchmark how well an AI can "see" and "reason" about the world in a human-like way.
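Categorical labels like these are often combined across several workers. The screenshot does not say how many workers rate each pair or how their answers are merged, so the following is only a minimal sketch under the assumption of a simple plurality vote; the function name `plurality_label` is hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def plurality_label(labels: Sequence[str]) -> Optional[str]:
    """Return the most frequent label, or None for empty input or a tie.

    Assumption for illustration only: the page does not describe any
    aggregation rule.
    """
    top_two = Counter(labels).most_common(2)
    if not top_two:
        return None
    if len(top_two) == 2 and top_two[0][1] == top_two[1][1]:
        return None  # the two most frequent labels are tied
    return top_two[0][0]
```

Returning `None` on a tie leaves the decision to the caller, for instance by collecting another judgment, instead of silently picking one label.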