## Screenshot: HIT Instructions

### Overview

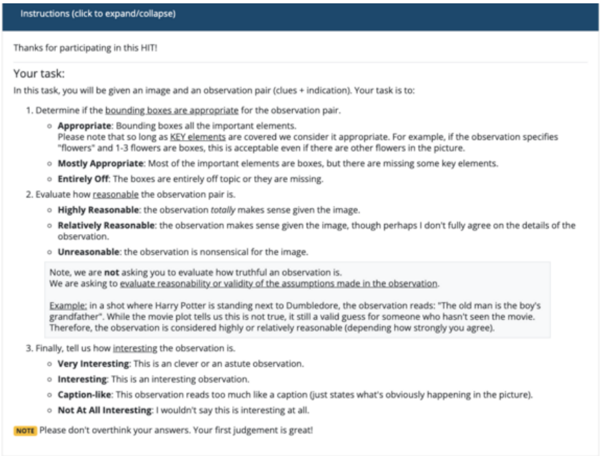

This is a screenshot of instructions for a Human Intelligence Task (HIT) on a platform like Amazon Mechanical Turk. The instructions describe a three-part task: rating the appropriateness of the bounding boxes drawn around elements in an image, then rating how reasonable and how interesting an observation about that image is. The text is in English.

### Components

The screenshot is structured as a set of instructions with bullet points and nested sub-points. Key components include:

* **Title:** "Instructions (click to expand/collapse)"

* **Introductory Text:** "Thanks for participating in this HIT!"

* **Task Description:** "In this task, you will be given an image and an observation pair (clues + indication). Your task is to:"

* **Evaluation Criterion 1:** Appropriateness of bounding boxes (Appropriate, Mostly Appropriate, Entirely Off)

* **Evaluation Criterion 2:** Reasonableness of the observation (Highly Reasonable, Relatively Reasonable, Unreasonable)

* **Evaluation Criterion 3:** Interestingness of the observation (Very Interesting, Interesting, Caption-like, Not At All Interesting)

* **Note:** "Please don't overthink your answers. Your first judgement is great!"
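
The three rating scales listed above can be written down as a small data structure. The following is a minimal sketch only: the screenshot does not show how responses are stored, so the names `BoxRating`, `Reasonableness`, `Interestingness`, `Judgment`, and `parse_judgment` are hypothetical, and only the label strings come from the instructions.

```python
from dataclasses import dataclass
from enum import Enum


class BoxRating(Enum):
    """Criterion 1: appropriateness of the bounding boxes."""
    APPROPRIATE = "Appropriate"
    MOSTLY_APPROPRIATE = "Mostly Appropriate"
    ENTIRELY_OFF = "Entirely Off"


class Reasonableness(Enum):
    """Criterion 2: reasonableness of the observation pair."""
    HIGHLY_REASONABLE = "Highly Reasonable"
    RELATIVELY_REASONABLE = "Relatively Reasonable"
    UNREASONABLE = "Unreasonable"


class Interestingness(Enum):
    """Criterion 3: interestingness of the observation."""
    VERY_INTERESTING = "Very Interesting"
    INTERESTING = "Interesting"
    CAPTION_LIKE = "Caption-like"
    NOT_AT_ALL_INTERESTING = "Not At All Interesting"


@dataclass(frozen=True)
class Judgment:
    """One worker's answers for a single image and observation pair (hypothetical record)."""
    boxes: BoxRating
    reasonableness: Reasonableness
    interestingness: Interestingness


def parse_judgment(boxes: str, reasonableness: str, interestingness: str) -> Judgment:
    """Convert the label strings into a Judgment.

    Looking up an Enum by value raises ValueError for any label
    that is not one of the options listed in the instructions.
    """
    return Judgment(
        boxes=BoxRating(boxes),
        reasonableness=Reasonableness(reasonableness),
        interestingness=Interestingness(interestingness),
    )
```

For example, `parse_judgment("Appropriate", "Relatively Reasonable", "Caption-like")` yields a `Judgment` whose fields are the matching enum members, while an unlisted label such as `"Maybe"` is rejected with a `ValueError`.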

### Content Details

The text content is transcribed below:

"Instructions (click to expand/collapse)

Thanks for participating in this HIT!

Your task:

In this task, you will be given an image and an observation pair (clues + indication). Your task is to:

1. Determine if the bounding boxes are appropriate for the observation pair.

   * Appropriate: Bounding boxes are the important elements.

     Please note that so long as KEY elements are covered we consider it appropriate. For example, if the observation specifies “flowers” and 1-3 flowers are boxes, this is acceptable even if there are other flowers in the picture.

   * Mostly Appropriate: Most of the important elements are boxes, but there are missing some key elements.

   * Entirely Off: The boxes are entirely off topic or they are missing.

2. Evaluate how reasonable the observation pair is.

   * Highly Reasonable: the observation totally makes sense given the image.

   * Relatively Reasonable: the observation makes sense given the image, though perhaps I don’t fully agree on the details of the observation.

   * Unreasonable: the observation is nonsensical for the image.

   Note, we are not asking you to evaluate how truthful an observation is.

   We are asking to evaluate reasonability or validity of the assumptions made in the observation

   Example: in a short where Harry Potter is standing next to Dumbledore, the observation reads: “The old man is the boy’s grandfather.” While the movie plot tells us this is not true, it still a valid guess for someone who hasn’t seen the movie.

   Therefore, the observation is considered highly or relatively reasonable (depending how strongly you agree).

3. Finally, tell us how interesting the observation is.

   * Very Interesting: This is an clever or an astute observation.

   * Interesting: This is an interesting observation.

   * Caption-like: This observation reads too much like a caption (just states what’s obviously happening in the picture).

   * Not At All Interesting: I wouldn’t say this is interesting at all.

NOTE Please don’t overthink your answers. Your first judgement is great!"

### Key Observations

The document is a procedural guide. It emphasizes that the task is not about determining the *truth* of an observation, but rather its *reasonableness* given the image. The instructions also caution against overthinking and encourage relying on initial judgment. The use of examples, like the Harry Potter scenario, clarifies the evaluation criteria.

### Interpretation

This document sets out the guidelines for a quality-control task within a larger data annotation or image understanding project. Workers assess three things: the quality of the bounding box annotations (whether they cover the important elements in an image), the logical connection between the image and a textual description (the observation), and how interesting that observation is. The emphasis on "reasonableness" over "truth" suggests the project may be exploring subjective interpretations or dealing with ambiguous images where a single "correct" answer doesn't exist. The instructions appear designed to standardize the evaluation process and to keep judgments quick and intuitive. The HIT is likely part of a larger effort to train or evaluate computer vision or natural language processing models.