## Line Charts: Training Metrics Across Optimizers and Precision Settings

### Overview

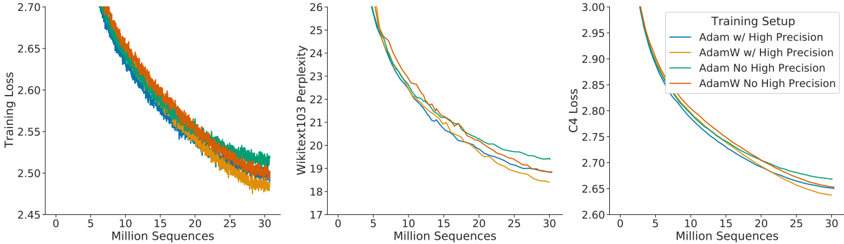

The image contains three line charts comparing training metrics (Training Loss, Wikitext103 Perplexity, and C4 Loss) across four training configurations that cross two optimizers with two precision settings: Adam with High Precision, AdamW with High Precision, Adam without High Precision, and AdamW without High Precision. All charts share an x-axis of "Million Sequences" running up to 30 million, and every metric decreases as training progresses.

---

### Components/Axes

1. **Chart 1: Training Loss**
   - **Y-axis**: Training Loss (2.45–2.70)
   - **X-axis**: Million Sequences (0–30)
   - **Legend**:
     - Blue: Adam w/ High Precision
     - Orange: AdamW w/ High Precision
     - Green: Adam No High Precision
     - Red: AdamW No High Precision
2. **Chart 2: Wikitext103 Perplexity**
   - **Y-axis**: Perplexity (17–26)
   - **X-axis**: Million Sequences (0–30)
   - **Legend**: Same as Chart 1.
3. **Chart 3: C4 Loss**
   - **Y-axis**: C4 Loss (2.60–3.00)
   - **X-axis**: Million Sequences (0–30)
   - **Legend**: Same as Chart 1.

---

### Detailed Analysis

#### Chart 1: Training Loss

- **Trend**: All lines decline steeply from ~2.70 at 0M sequences to ~2.50–2.53 at 30M sequences.
- **Key Data Points**:
  - **Adam w/ High Precision (Blue)**: Starts at ~2.70, ends at ~2.50. Intermediate values: ~2.65 at 10M, ~2.55 at 20M.
  - **AdamW w/ High Precision (Orange)**: Follows a trajectory similar to Blue, diverging slightly at 15M (~2.58 vs. ~2.57 for Blue).
  - **Adam No High Precision (Green)**: Slightly higher loss than Blue/Orange, ending at ~2.52.
  - **AdamW No High Precision (Red)**: Closest to Green, ending at ~2.53.

#### Chart 2: Wikitext103 Perplexity

- **Trend**: All lines decrease from ~25.5 at 0M to ~19.3–19.7 at 30M.
- **Key Data Points**:
  - **Adam w/ High Precision (Blue)**: Starts at ~25.5, ends at ~19.5. Intermediate: ~22.5 at 10M.
  - **AdamW w/ High Precision (Orange)**: Slightly lower perplexity than Blue, ending at ~19.3.
  - **Adam No High Precision (Green)**: Ends at ~19.7.
  - **AdamW No High Precision (Red)**: Ends at ~19.6.
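Perplexity and loss sit on different scales. Assuming the reported perplexity is the exponential of the mean per-token cross-entropy in nats (the charts do not say how it is normalized), each Wikitext103 endpoint can be converted into a loss value. This minimal sketch does that for the approximate endpoints listed above and measures their spread.

```python
import math

# Approximate Wikitext103 perplexity endpoints at 30M sequences, as read off Chart 2.
final_ppl = {
    "Adam w/ High Precision": 19.5,
    "AdamW w/ High Precision": 19.3,
    "Adam No High Precision": 19.7,
    "AdamW No High Precision": 19.6,
}


def ppl_to_loss(ppl: float) -> float:
    """Mean cross-entropy in nats implied by a perplexity, assuming ppl = exp(loss)."""
    return math.log(ppl)


# Convert every endpoint to the loss scale used by the other two charts.
final_loss = {name: ppl_to_loss(p) for name, p in final_ppl.items()}

# Spread between the best and worst configuration on each scale.
spread_ppl = max(final_ppl.values()) - min(final_ppl.values())
spread_loss = max(final_loss.values()) - min(final_loss.values())

for name, loss in final_loss.items():
    print(f"{name:<26} ppl={final_ppl[name]:.1f} -> loss~{loss:.3f}")
print(f"spread: {spread_ppl:.1f} ppl ~ {spread_loss:.3f} nats")
```

Under that assumption, the 0.4-point perplexity spread between ~19.3 and ~19.7 corresponds to about 0.02 nats, roughly half the ~0.04 spread between the C4 Loss endpoints reported for Chart 3 (~2.63 to ~2.67). The two datasets may be normalized differently, so treat this as a rough comparison only.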

#### Chart 3: C4 Loss

- **Trend**: All lines decline from ~3.00 at 0M to ~2.63–2.67 at 30M.
- **Key Data Points**:
  - **Adam w/ High Precision (Blue)**: Starts at ~3.00, ends at ~2.65. Intermediate: ~2.85 at 10M.
  - **AdamW w/ High Precision (Orange)**: Ends at ~2.63.
  - **Adam No High Precision (Green)**: Ends at ~2.67.
  - **AdamW No High Precision (Red)**: Ends at ~2.66.

---

### Key Observations

1. **Consistent Decline**: All configurations improve (lower loss and perplexity) throughout training, indicating effective learning.
2. **High Precision Impact**:
   - High-precision configurations (Blue/Orange) generally end lower than their no-high-precision counterparts (Green/Red) in Training Loss and C4 Loss.
   - Wikitext103 Perplexity shows smaller differences between the high- and low-precision settings.
3. **Adam vs. AdamW**:
   - In C4 Loss, each AdamW configuration ends slightly below its Adam counterpart (~2.63 vs. ~2.65 with high precision, ~2.66 vs. ~2.67 without). In Training Loss the pattern does not hold without high precision (Red ~2.53 vs. Green ~2.52). All of these differences are small (<0.05).
   - In Wikitext103 Perplexity, AdamW w/ High Precision is the best performer (~19.3).
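The observations above can be checked directly against the approximate endpoints reported in the Detailed Analysis. The sketch below tabulates those readings (they are eyeballed chart values, not logged results) and computes two paired gaps per metric: the effect of high precision with the optimizer held fixed, and the effect of AdamW with the precision setting held fixed. Orange's final Training Loss is not stated in the text, so any pair that needs it is skipped rather than guessed.

```python
from typing import Dict, Optional, Tuple

# Approximate 30M-sequence endpoints as read off the charts in the Detailed Analysis.
# Keys are (optimizer, high_precision). None marks a value the text does not report.
ENDPOINTS: Dict[str, Dict[Tuple[str, bool], Optional[float]]] = {
    "Training Loss": {
        ("Adam", True): 2.50, ("AdamW", True): None,  # Orange's endpoint is not stated
        ("Adam", False): 2.52, ("AdamW", False): 2.53,
    },
    "C4 Loss": {
        ("Adam", True): 2.65, ("AdamW", True): 2.63,
        ("Adam", False): 2.67, ("AdamW", False): 2.66,
    },
}


def precision_gaps(metric: str) -> Dict[str, float]:
    """No-high-precision loss minus high-precision loss, per optimizer.

    Positive values mean the high-precision run ended lower.
    Pairs with a missing endpoint are skipped.
    """
    vals = ENDPOINTS[metric]
    gaps = {}
    for opt in ("Adam", "AdamW"):
        hp, no_hp = vals[(opt, True)], vals[(opt, False)]
        if hp is not None and no_hp is not None:
            gaps[opt] = round(no_hp - hp, 3)
    return gaps


def optimizer_gaps(metric: str) -> Dict[str, float]:
    """Adam loss minus AdamW loss, per precision setting (positive = AdamW lower)."""
    vals = ENDPOINTS[metric]
    gaps = {}
    for hp, label in ((True, "w/ High Precision"), (False, "No High Precision")):
        adam, adamw = vals[("Adam", hp)], vals[("AdamW", hp)]
        if adam is not None and adamw is not None:
            gaps[label] = round(adam - adamw, 3)
    return gaps


for metric in ENDPOINTS:
    print(metric, "precision:", precision_gaps(metric), "optimizer:", optimizer_gaps(metric))
```

With these readings every available precision gap is positive (0.02 to 0.03), while the optimizer gaps are mixed: AdamW ends lower in both C4 Loss pairs, but in Training Loss without high precision Adam (~2.52) ends slightly below AdamW (~2.53).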

---

### Interpretation

The data suggests that:

- **High Precision** appears to improve final performance (and possibly training stability) across all metrics, though the effect is more pronounced in Training Loss and C4 Loss.

- **AdamW** marginally outperforms Adam on C4 Loss and Wikitext103 Perplexity, both with and without high precision, plausibly because of its decoupled weight decay. In Training Loss the advantage is not consistent.

- Convergence patterns are similar across all configurations, suggesting the training process is robust to these choices. The optimizer and precision settings also matter less for Wikitext103 Perplexity than for the other two metrics.
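The charts do not define "High Precision". One common meaning, assumed here and not confirmed by the source, is keeping weights or optimizer updates in float32 rather than a 16-bit format. The sketch below illustrates the failure mode such a setting guards against: when a weight is stored in a low-precision format, updates smaller than half the spacing between representable values near the weight are rounded away entirely. It uses float16 only because Python's `struct` module can round to it; bfloat16, widely used in training, has fewer mantissa bits (7 vs. 10), so the effect would be stronger there.

```python
import struct
from typing import Callable


def round_f16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision (float16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def round_f32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision (float32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def accumulate(weight: float, update: float, steps: int,
               rnd: Callable[[float], float]) -> float:
    """Add the same small update `steps` times, storing the weight in the format `rnd` emulates."""
    w = rnd(weight)
    u = rnd(update)
    for _ in range(steps):
        # Each sum is rounded back to the storage format, as a low-precision weight would be.
        w = rnd(w + u)
    return w


w16 = accumulate(1.0, 1e-4, 100, round_f16)
w32 = accumulate(1.0, 1e-4, 100, round_f32)
print(f"float16 storage: {w16}")
print(f"float32 storage: {w32:.6f}")
```

Near 1.0, float16 values are spaced 2^-10 (about 0.00098) apart, so each 1e-4 update rounds back to 1.0 and the float16 weight never moves, while the float32 weight reaches about 1.01 after 100 steps. Whether this mechanism explains the gaps in these charts is not established by the source.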

Notably, the lines for AdamW w/ High Precision (Orange) and Adam w/ High Precision (Blue) nearly overlap in all charts, suggesting that the optimizer choice has a smaller effect than the precision setting in this context.
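AdamW differs from Adam in how weight decay enters the update. Adam with L2 regularization adds the decay term to the gradient, so it is rescaled by the adaptive denominator. AdamW instead shrinks the parameter directly, outside that rescaling ("decoupled" weight decay). The scalar sketch below contrasts one step of each. It assumes the Adam runs in these charts used L2-style weight decay, which the source does not state, and the hyperparameter values are illustrative only.

```python
import math
from dataclasses import dataclass


@dataclass
class AdamState:
    m: float = 0.0  # first-moment (mean) estimate of the gradient
    v: float = 0.0  # second-moment (uncentered variance) estimate
    t: int = 0      # step counter used for bias correction


def adam_direction(state: AdamState, grad: float,
                   beta1: float = 0.9, beta2: float = 0.999, eps: float = 1e-8) -> float:
    """Update the moment estimates with `grad` and return the bias-corrected Adam direction."""
    state.t += 1
    state.m = beta1 * state.m + (1 - beta1) * grad
    state.v = beta2 * state.v + (1 - beta2) * grad * grad
    m_hat = state.m / (1 - beta1 ** state.t)
    v_hat = state.v / (1 - beta2 ** state.t)
    return m_hat / (math.sqrt(v_hat) + eps)


def adam_l2_step(theta: float, grad: float, state: AdamState, lr: float, wd: float) -> float:
    """Adam + L2: the decay term wd * theta is folded into the gradient, so it is adaptively rescaled."""
    return theta - lr * adam_direction(state, grad + wd * theta)


def adamw_step(theta: float, grad: float, state: AdamState, lr: float, wd: float) -> float:
    """AdamW: theta is shrunk by lr * wd directly; the moments only ever see the raw gradient."""
    return theta - lr * wd * theta - lr * adam_direction(state, grad)


# A parameter with zero loss gradient isolates the weight-decay behaviour.
theta_l2 = adam_l2_step(1.0, 0.0, AdamState(), lr=1e-3, wd=0.01)
theta_w = adamw_step(1.0, 0.0, AdamState(), lr=1e-3, wd=0.01)
print(f"Adam + L2: {theta_l2:.6f}   AdamW: {theta_w:.6f}")
```

With a zero gradient, the L2 variant moves the parameter by about `lr` (to ~0.999) because the decay term is normalized by its own second-moment estimate, whereas AdamW shrinks it by exactly `lr * wd` (to 0.99999). Decoupling makes the decay strength independent of gradient scale, which is one proposed reason AdamW can generalize better; these charts alone do not isolate that effect.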